Prerequisites

Before we begin, make sure the following prerequisites are met:

- SQL Server Data Tools (SSDT) designer installed for Visual Studio.

- SQL Server Integration Services Projects 2022+ Visual Studio extension installed.

- SSIS PowerPack is installed.

Get table partition key ranges in SSIS

-

Open Visual Studio and click Create a new project.

-

Select Integration Services Project. Enter a name and location for your project, then click OK.

-

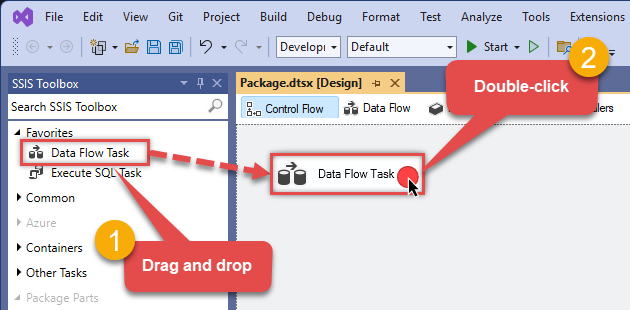

From the SSIS Toolbox, drag and drop a Data Flow Task onto the Control Flow surface, and double-click it:

-

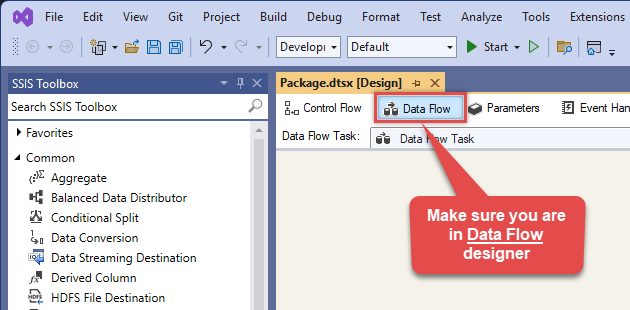

Make sure you are in the Data Flow Task designer:

-

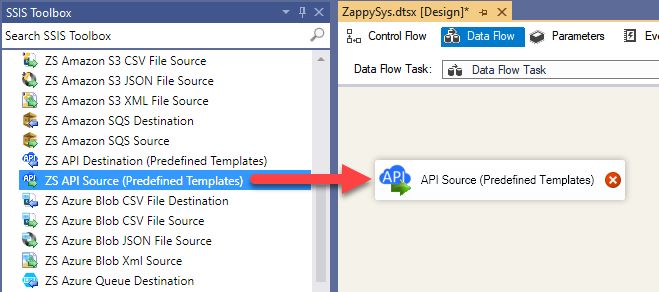

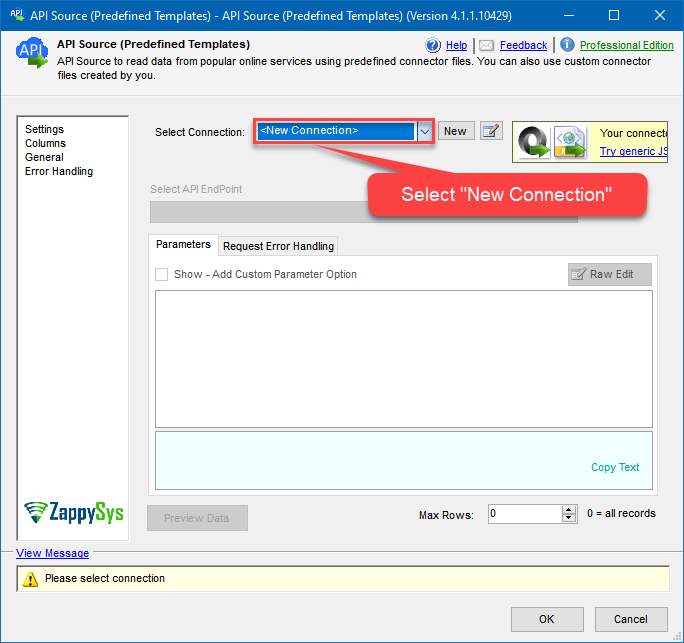

From the SSIS toolbox drag and API Source (Predefined Templates) on the data flow designer surface, and double click on it to edit it:

-

Select New Connection to create a new connection:

-

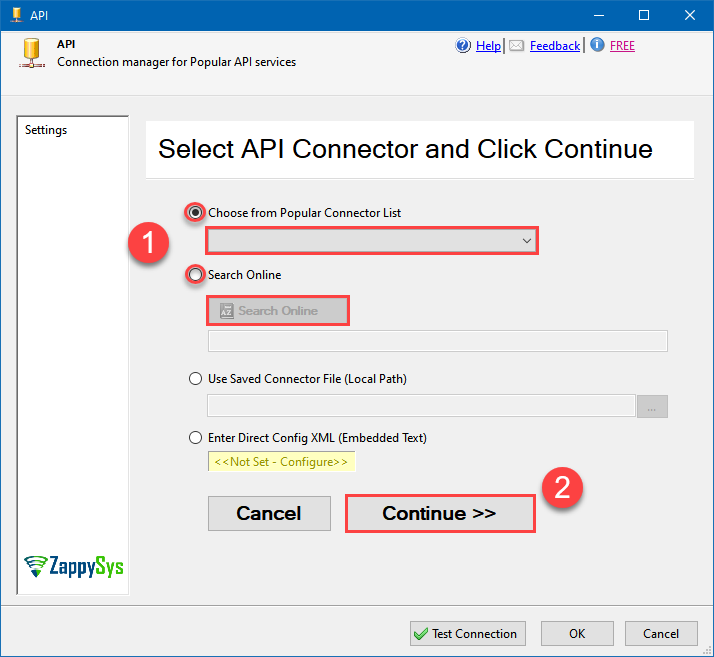

Use a preinstalled Cosmos DB Connector from Popular Connector List or press Search Online radio button to download Cosmos DB Connector. Once downloaded simply use it in the configuration:

Cosmos DB

- Select your authentication scenario below to expand the connection configuration steps, which show how to:

  - Configure the authentication in Cosmos DB.

  - Enter those details into the API Connection Manager configuration.

API Key

Cosmos DB authentication

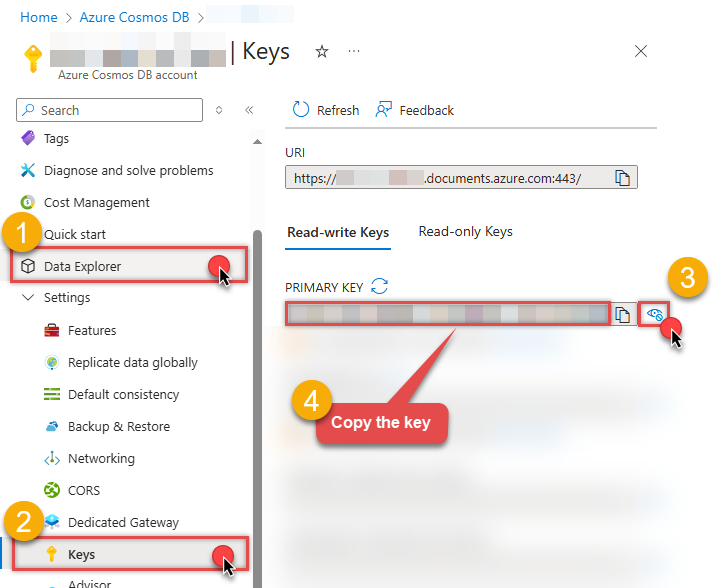

Connecting to your Azure Cosmos DB data requires you to authenticate your REST API access. Follow the instructions below:

1. Go to your Azure portal homepage: https://portal.azure.com/.

2. In the search bar at the top of the homepage, enter Azure Cosmos DB. In the dropdown that appears, select Azure Cosmos DB.

3. Click the name of the database account you want to connect to, and copy the account name for later use.

4. On the next page, which shows all of the database account information, select Keys in the left-hand menu.

5. The Keys page has two tabs: Read-write Keys and Read-only Keys. If you are going to write data to your database, stay on the Read-write Keys tab. If you are only going to read data from your database, select the Read-only Keys tab.

6. On the Keys page, copy the PRIMARY KEY value and save it for later use (the SECONDARY KEY value may also be copied and used).

7. Go to your SSIS package or ODBC data source and use this key in the API Key authentication configuration.

8. Enter the primary or secondary key you recorded in step 6 into the Primary or Secondary Key field.

9. Enter the database account name you recorded in step 3 into the Database Account field.

10. Enter or select the default database you want to connect to in the Default Database field.

11. Enter or select the default table (i.e. container/collection) you want to connect to in the Default Table (Container/Collection) field.

12. Select the Test Connection button at the bottom of the window to verify connectivity with your Azure Cosmos DB account.

13. If the connection test succeeds, select OK.

14. Done! Now you are ready to use the Cosmos DB Connector!

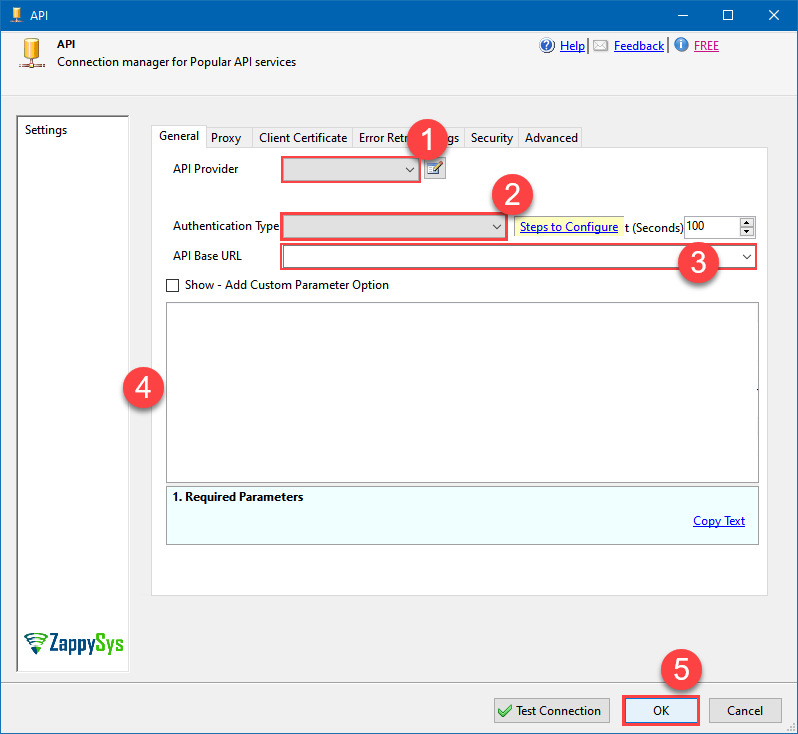
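For readers curious about what the key copied from the Keys page is used for, the sketch below shows how a master-key authorization token for the Cosmos DB REST API is commonly derived: an HMAC-SHA256 signature over the verb, resource type, resource link, and request date. This is an illustration of the REST-level scheme, not the connector's own code; the function name `build_master_key_auth`, the database and table names, and the key are all made up for the example.

```python
import base64
import hashlib
import hmac
from urllib.parse import quote


def build_master_key_auth(verb, resource_type, resource_link, date_rfc1123, master_key_b64):
    """Return a URL-encoded Authorization header value for a Cosmos DB REST call.

    Hypothetical helper for illustration; the connector performs this step for you.
    """
    # The key shown in the Azure portal is base64 text; sign with its raw bytes.
    key = base64.b64decode(master_key_b64)
    # String to sign: verb, resource type, resource link, date, then an empty line.
    # Verb, resource type and date are lowercased; the resource link keeps its case.
    payload = (
        f"{verb.lower()}\n{resource_type.lower()}\n{resource_link}\n"
        f"{date_rfc1123.lower()}\n\n"
    )
    digest = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("ascii")
    return quote(f"type=master&ver=1.0&sig={signature}", safe="")


# Made-up key (base64 of b"not-a-real-key"), used for illustration only.
fake_key = base64.b64encode(b"not-a-real-key").decode("ascii")
token = build_master_key_auth(
    "GET", "pkranges", "dbs/mydb/colls/mytable",
    "Tue, 01 Nov 1994 08:12:31 GMT", fake_key,
)
print(token[:34])
```

The same date string must also be sent in the request's `x-ms-date` header, which is why a read-only key is sufficient for read-only endpoints such as this one: the key only signs the request, it is never transmitted.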

API Connection Manager configuration

Just perform these simple steps to finish authentication configuration:

- Set Authentication Type to API Key [Http].

- Optional step. Modify API Base URL if needed (in most cases the default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, hit the OK button:

Cosmos DBAPI Key [Http]https://[$Account$].documents.azure.comRequired Parameters Primary or Secondary Key Fill-in the parameter... Account Name (Case-Sensitive) Fill-in the parameter... Database Name (keep blank to use default) Case-Sensitive Fill-in the parameter... API Version Fill-in the parameter... Optional Parameters Default Table (needed to invoke #DirectSQL)  Find full details in the Cosmos DB Connector authentication reference.

Find full details in the Cosmos DB Connector authentication reference. -

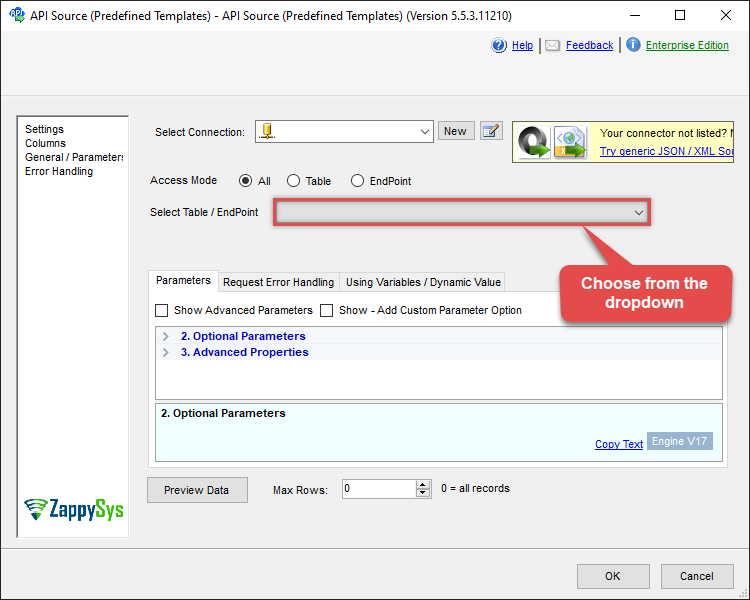

Select Get table partition key ranges endpoint from the dropdown and hit Preview Data:

API Source - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.Cosmos DBGet table partition key rangesRequired Parameters Table Name (Case-Sensitive) Fill-in the parameter... Optional Parameters Database Name (keep blank to use default) Case-Sensitive

-

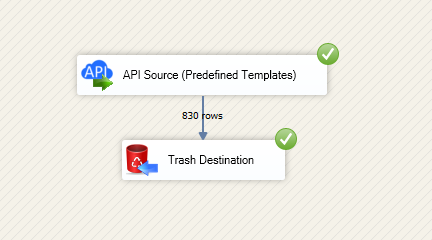

That's it! We are done! Just in a few clicks we configured the call to Cosmos DB using Cosmos DB Connector.
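To make the configured call more concrete, here is a minimal sketch of the REST request that a partition key ranges lookup presumably maps to, assuming the public Cosmos DB REST layout of `dbs/{database}/colls/{table}/pkranges`. The function name `pkranges_request`, the account, database, and table names, and the API version value are placeholders chosen for illustration, and the signed Authorization header is deliberately left out.

```python
from email.utils import formatdate


def pkranges_request(account, database, table, api_version="2018-12-31"):
    """Build the URL, resource link and basic headers for a pkranges request.

    Hypothetical helper; names are case-sensitive, as the connector UI notes.
    """
    # The resource link identifies the parent container of the pkranges feed.
    resource_link = f"dbs/{database}/colls/{table}"
    url = f"https://{account}.documents.azure.com/{resource_link}/pkranges"
    headers = {
        # RFC 1123 date in GMT, e.g. "Tue, 01 Nov 1994 08:12:31 GMT".
        "x-ms-date": formatdate(usegmt=True),
        "x-ms-version": api_version,
        # An Authorization header signed with the account key is also required;
        # it is omitted from this sketch.
    }
    return url, resource_link, headers


url, link, headers = pkranges_request("myaccount", "mydb", "mytable")
print(url)
```

The account name fills the `[$Account$]` placeholder of the API Base URL, and the Table Name parameter becomes the `colls` segment, which is why both are marked case-sensitive.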

You can load the source data into your desired destination using the Upsert Destination, which supports SQL Server, PostgreSQL, and Amazon Redshift. We also offer other destinations such as CSV, Excel, Azure Table, Salesforce, and more. You can check out our SSIS PowerPack Tasks and components for more options. (*loaded in Trash Destination)
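When planning the destination table, it can help to picture the rows this endpoint produces. The sketch below flattens a partition key ranges response into `(id, minInclusive, maxExclusive)` tuples. It assumes the response layout of the Cosmos DB REST API, where ranges arrive in a `PartitionKeyRanges` array; the sample payload is invented, and the columns the connector actually exposes may differ.

```python
import json

# Invented sample payload shaped like a pkranges response (assumption).
sample_response = """
{
  "_rid": "example",
  "PartitionKeyRanges": [
    {"id": "0", "minInclusive": "", "maxExclusive": "05C1DFFFFFFFFC"},
    {"id": "1", "minInclusive": "05C1DFFFFFFFFC", "maxExclusive": "FF"}
  ],
  "_count": 2
}
"""


def partition_key_range_rows(body):
    """Turn a pkranges JSON body into (id, min_inclusive, max_exclusive) rows."""
    doc = json.loads(body)
    return [
        (r["id"], r.get("minInclusive", ""), r.get("maxExclusive", ""))
        for r in doc.get("PartitionKeyRanges", [])
    ]


rows = partition_key_range_rows(sample_response)
for row in rows:
    print(row)
```

Each range is half-open: it includes its minimum bound and excludes its maximum, so one range's `maxExclusive` equals the next range's `minInclusive`.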

Deploy SSIS package to Azure Data Factory (ADF)

Once your SSIS package is complete, deploy it to the Azure-SSIS runtime within Azure Data Factory. The setup process requires you to upload the SSIS PowerPack installer to Azure Blob Storage and then customize the runtime configuration using the main.cmd file. For a complete walkthrough of these steps, see our detailed guide on the Azure Data Factory (SSIS) and Cosmos DB integration.

Cosmos DB Connector actions

Need another use case? Pick the next Cosmos DB action in Azure Data Factory (SSIS) below.

- Create a document in the container

- Create Permission Token for a User (One Table)

- Create User for Database

- Delete a Document by Id

- Get All Documents for a Table

- Get All Users for a Database

- Get Database Information by Id or Name

- Get Document by Id

- Get List of Databases

- Get List of Tables

- Get table information by Id or Name

- Get User by Id or Name

- Query documents using Cosmos DB SQL query language

- Update Document in the Container

- Upsert a document in the container

- Make Generic REST API Request

- Make Generic REST API Request (Bulk Write)

Conclusion

You now know how to get table partition key ranges in Azure Data Factory (SSIS) without writing complex code. The Cosmos DB SSIS Connector handles pagination and authentication automatically.

Ready to get started? Download the trial or ping us via chat if you need help.