Introduction

You can connect to your Amazon S3 JSON File data from SSAS via the high-performance Amazon S3 JSON File ODBC Driver. We'll walk you through the entire setup.

Let's not waste time and get started!

Create data source in ZappySys Data Gateway

In this section we will create a data source for Amazon S3 JSON File in the Data Gateway. Let's follow these steps to accomplish that:

-

Download and install ODBC PowerPack (if you haven't already).

-

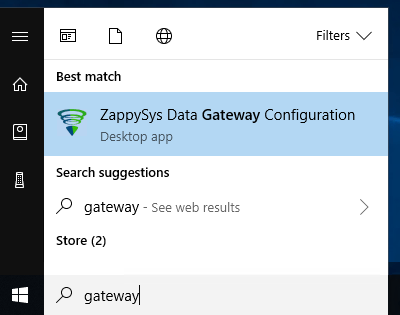

Search for

gatewayin the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

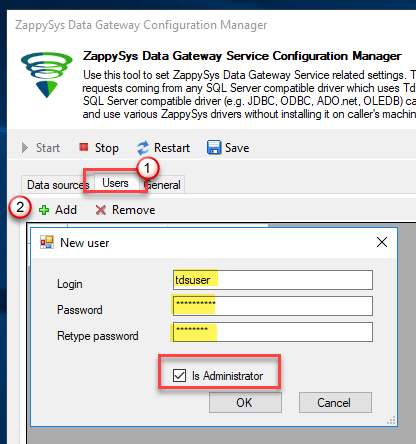

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

-

In the Login field enter a username, e.g.,

john - Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

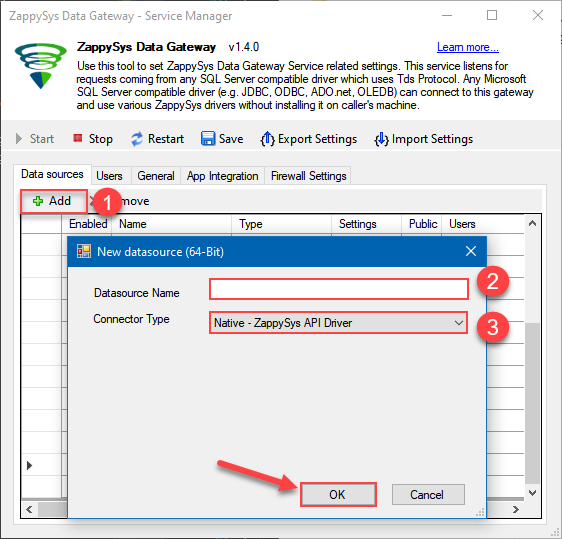

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys Amazon S3 JSON Driver

- Finally, click OK

AmazonS3JsonFileDSNZappySys Amazon S3 JSON Driver

-

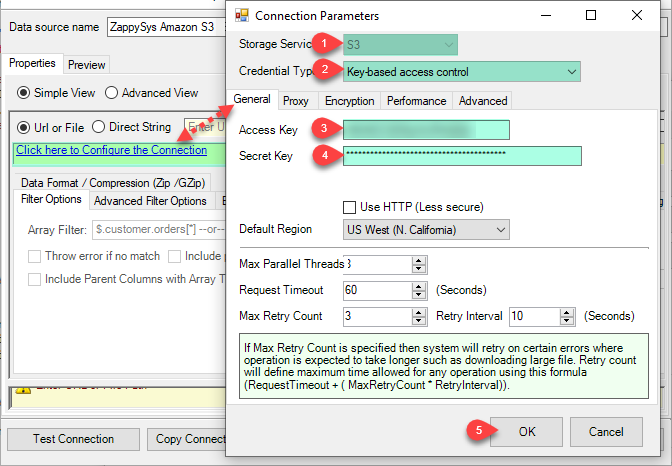

Create and configure a connection for the Amazon S3 storage account.

-

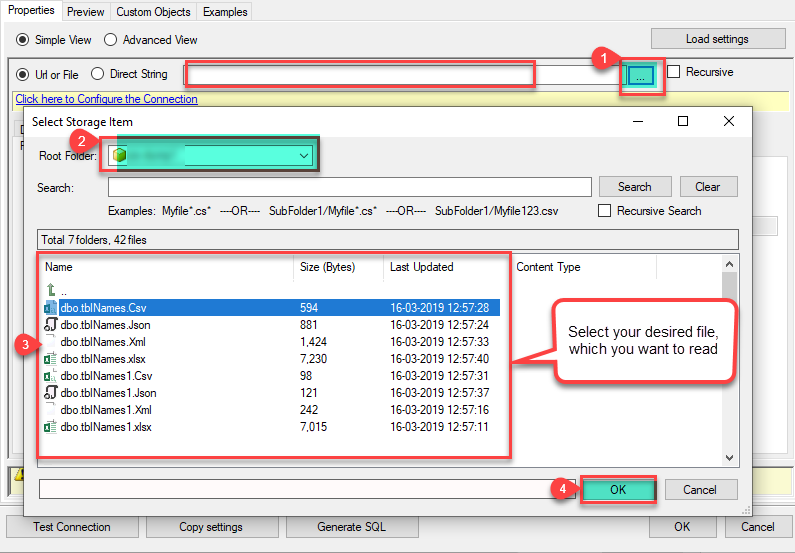

You can use select your desired single file by clicking [...] path button.

mybucket/dbo.tblNames.jsondbo.tblNames.json

----------OR----------You can also read the multiple files stored in Amazon S3 Storage using wildcard pattern supported e.g. dbo.tblNames*.json.

Note: If you want to operation with multiple files then use wild card pattern as below (when you use wild card pattern in source path then system will treat target path as folder regardless you end with slash) mybucket/dbo.tblNames.json (will read only single .JSON file) mybucket/dbo.tbl*.json (all files starting with file name) mybucket/*.json (all files with .json Extension and located under folder subfolder)

mybucket/dbo.tblNames*.json

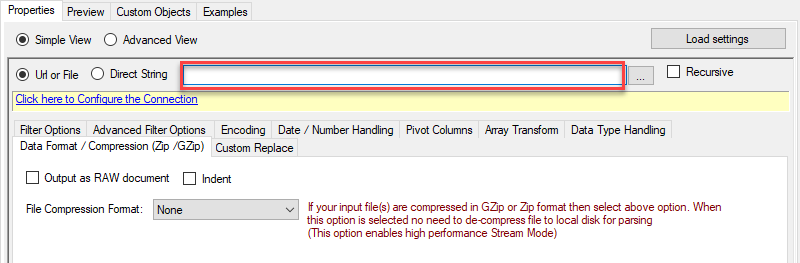

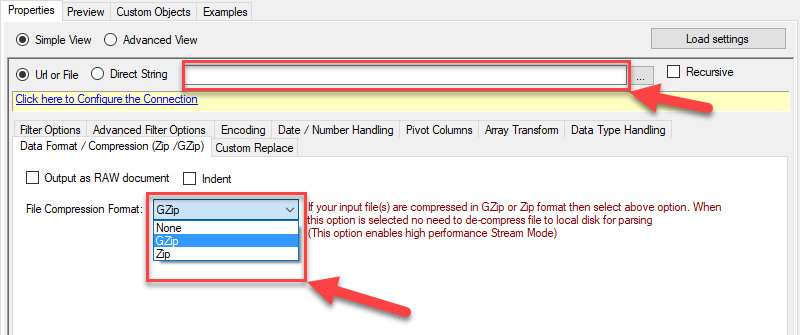

----------OR----------You can also read the zip and gzip compressed files also without extracting it in using Amazon S3 JSON Source File Task.

mybucket/dbo.tblNames*.gz

-

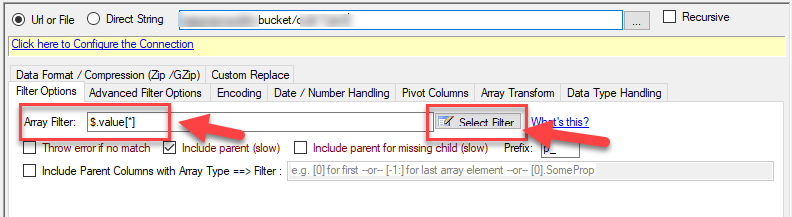

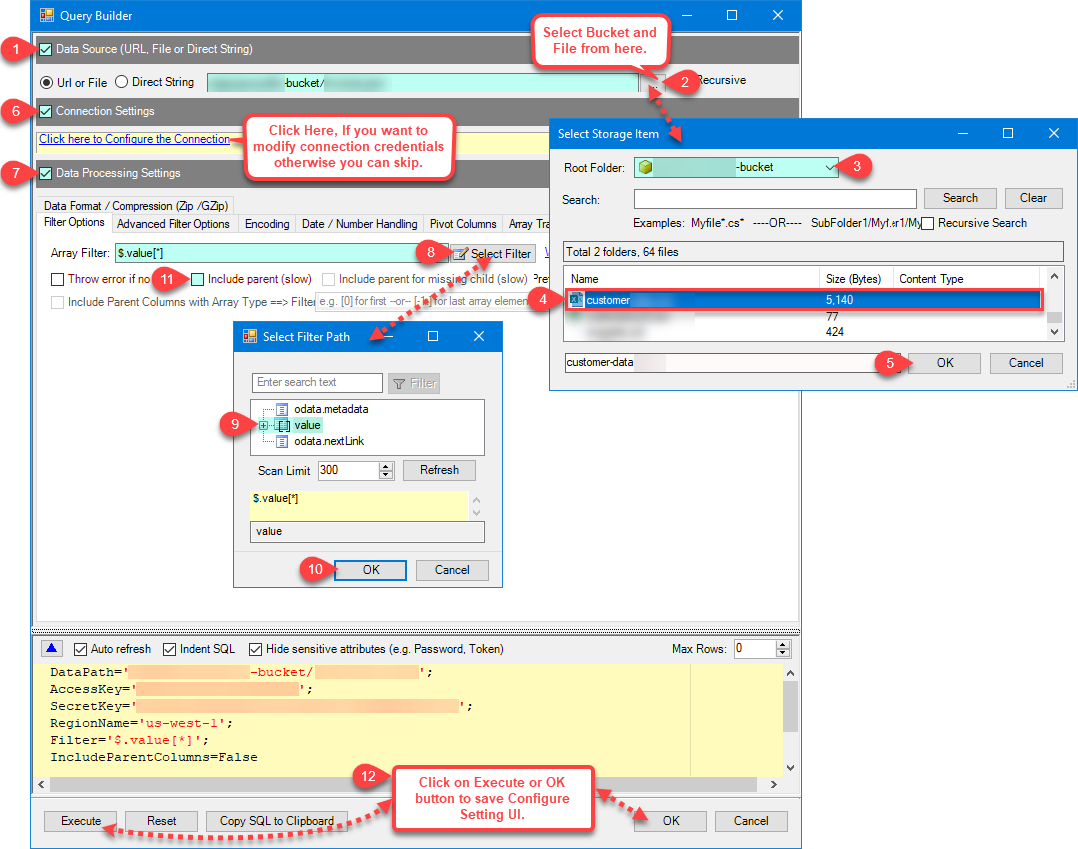

Now select/enter Path expression in Path textbox to extract only specific part of JSON string as below ($.value[*] will get content of value attribute from JSON document. Value attribute is array of JSON documents so we have to use [*] to indicate we want all records of that array)

NOTE: Here, We are using our desired filter, but you need to select your desired filter based on your requirement.

Navigate to the Preview Tab and let's explore the different modes available to access the data.

-

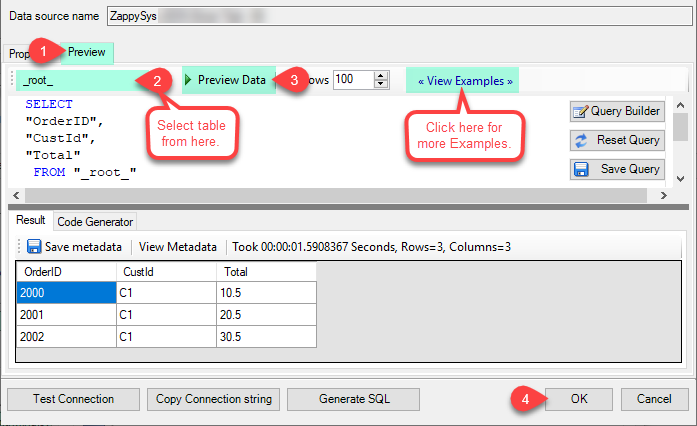

--- Using Direct Query ---

Click on Preview Tab, Select Table from Tables Dropdown and select [value] and click Preview.

-

--- Using Stored Procedure ---

Note : For this you have to Save ODBC Driver configuration and then again reopen to configure same driver. For more information click here.

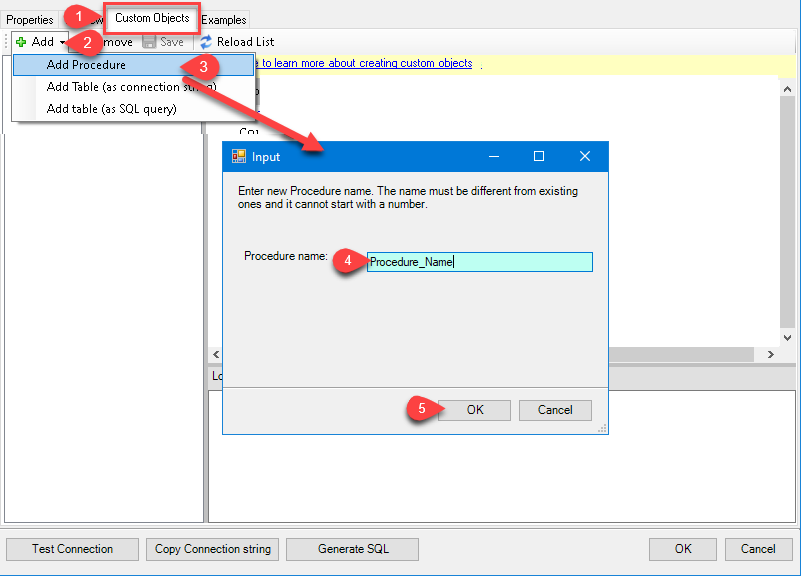

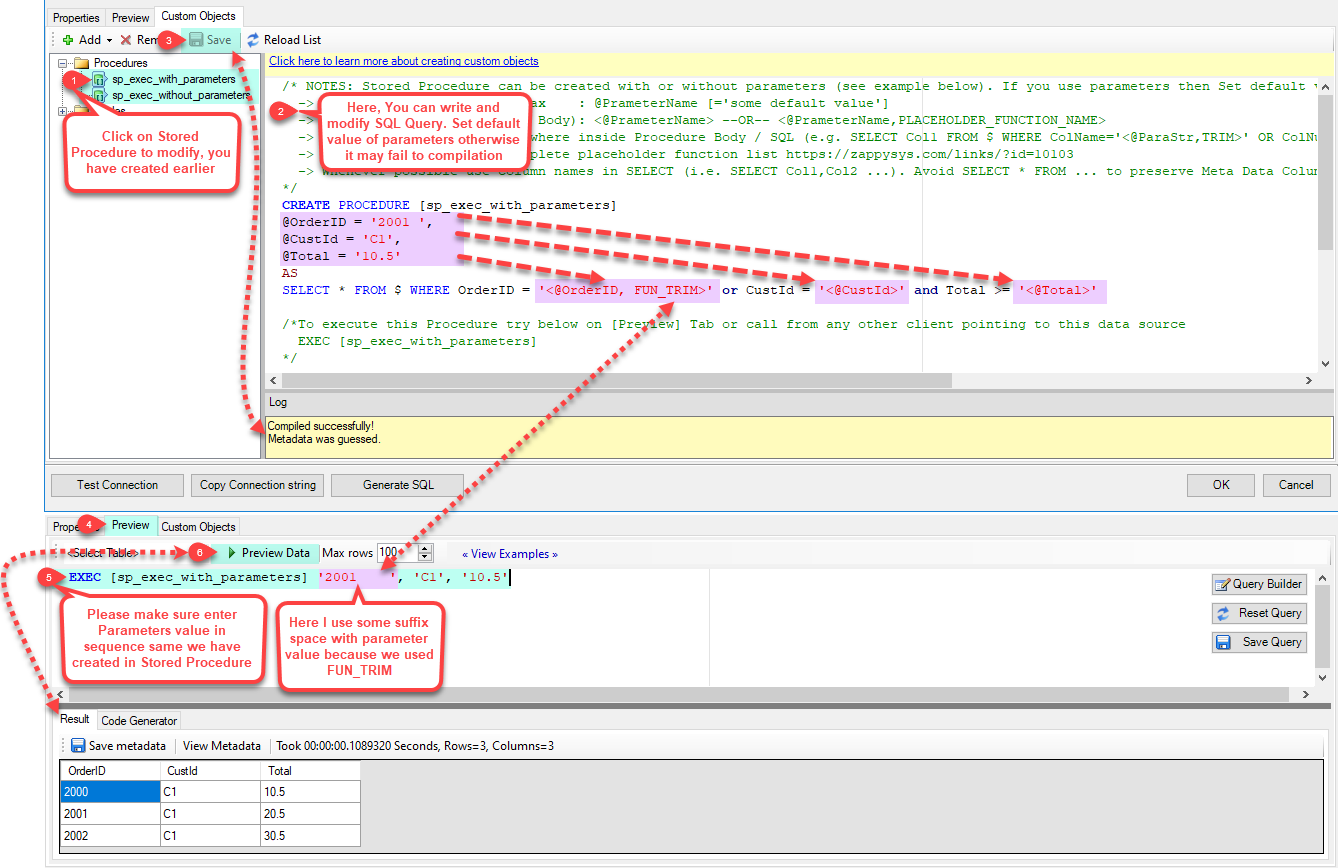

Click on the Custom Objects Tab, Click on Add button and select Add Procedure and Enter an appropriate name and Click on OK button to create.

-

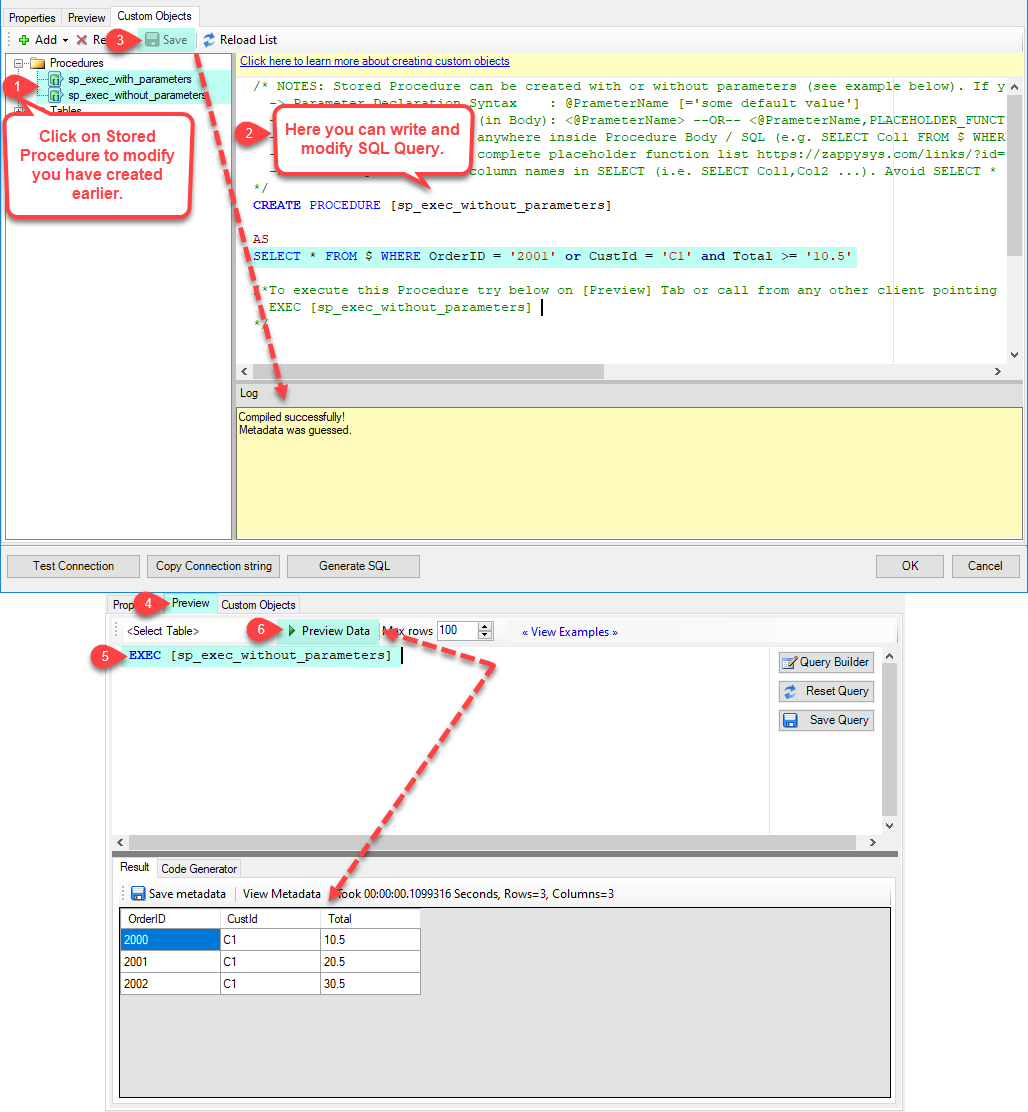

--- Without Parameters ---

Now Stored Procedure can be created with or without parameters (see example below). If you use parameters then Set default value otherwise it may fail to compilation)

-

--- With Parameters ---

Note : Here you can use Placeholder with Paramters in Stored Procedure. Example : SELECT * FROM $ WHERE OrderID = '<@OrderID, FUN_TRIM>' or CustId = '<@CustId>' and Total >= '<@Total>'

-

-

--- Using Virtual Table ---

Note : For this you have to Save ODBC Driver configuration and then again reopen to configure same driver. For more information click here.

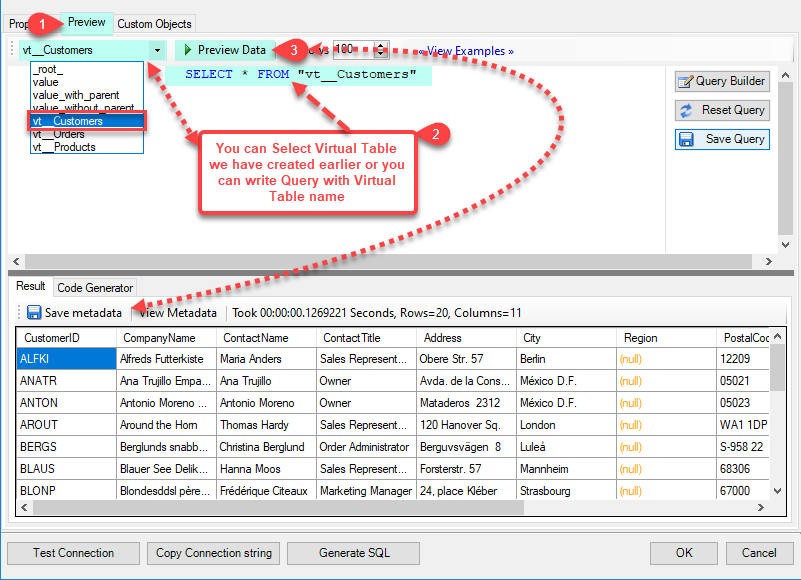

ZappySys APi Drivers support flexible Query language so you can override Default Properties you configured on Data Source such as URL, Body. This way you don't have to create multiple Data Sources if you like to read data from multiple EndPoints. However not every application support supplying custom SQL to driver so you can only select Table from list returned from driver.

Many applications like MS Access, Informatica Designer wont give you option to specify custom SQL when you import Objects. In such case Virtual Table is very useful. You can create many Virtual Tables on the same Data Source (e.g. If you have 50 Buckets with slight variations you can create virtual tables with just URL as Parameter setting).

vt__Customers DataPath=mybucket_1/customers.json vt__Orders DataPath=mybucket_2/orders.json vt__Products DataPath=mybucket_3/products.json

-

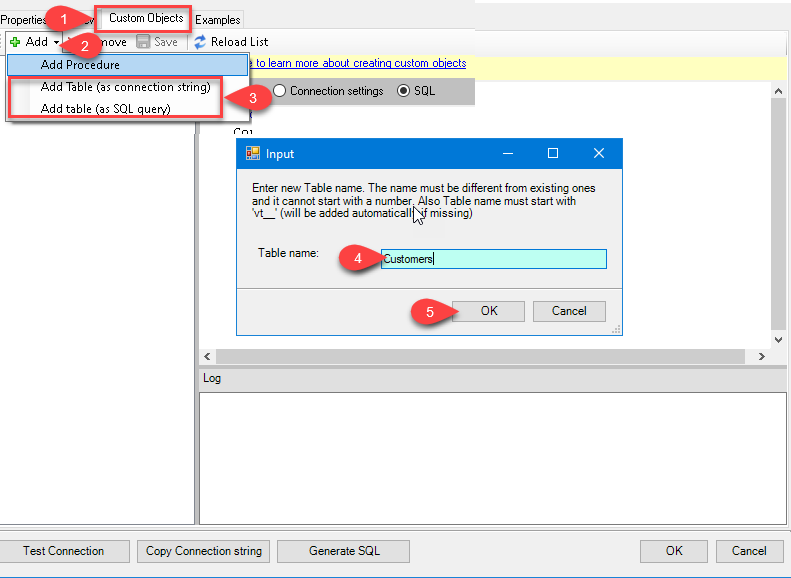

Click on the Custom Objects Tab, Click on Add button and select Add Table and Enter an appropriate name and Click on OK button to create.

-

Once you see Query Builder Window on screen Configure it.

-

Click on Preview Tab, Select Virtual Table(prefix with vt__) from Tables Dropdown or write SQL query with Virtual Table name and click Preview.

-

Click on the Custom Objects Tab, Click on Add button and select Add Table and Enter an appropriate name and Click on OK button to create.

-

-

Click OK to finish creating the data source

-

That's it; we are done. In a few clicks we configured the to Read the Amazon S3 JSON File data using ZappySys Amazon S3 JSON File Connector

-

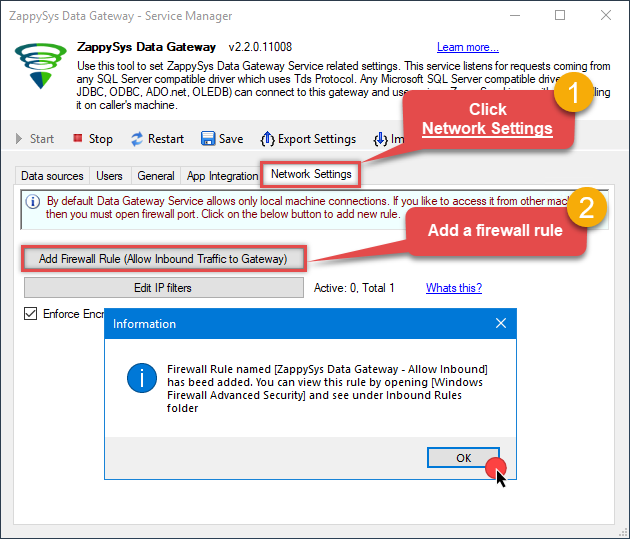

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

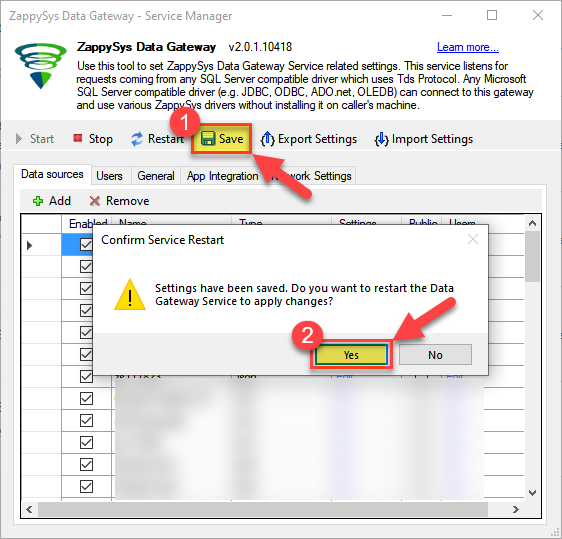

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Read Amazon S3 JSON File data in SSAS cube

With the data source created in the Data Gateway (previous step), we're now ready to read Amazon S3 JSON File data in an SSAS cube. Before we dive in, open Visual Studio and create a new Analysis Services project. Then, you're all set!

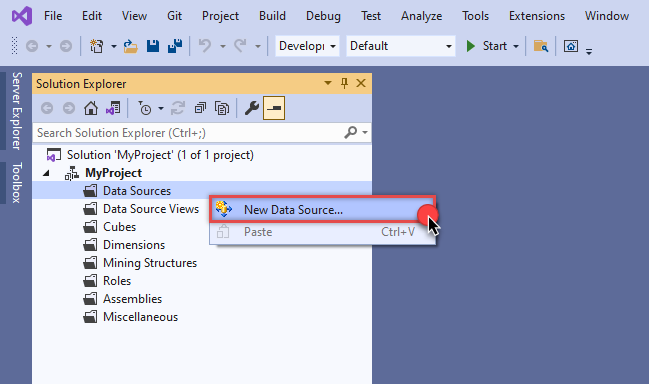

Create data source based on ZappySys Data Gateway

Let's start by creating a data source for a cube, based on the Data Gateway's data source we created earlier. So, what are we waiting for? Let's do it!

-

Create a new data source:

-

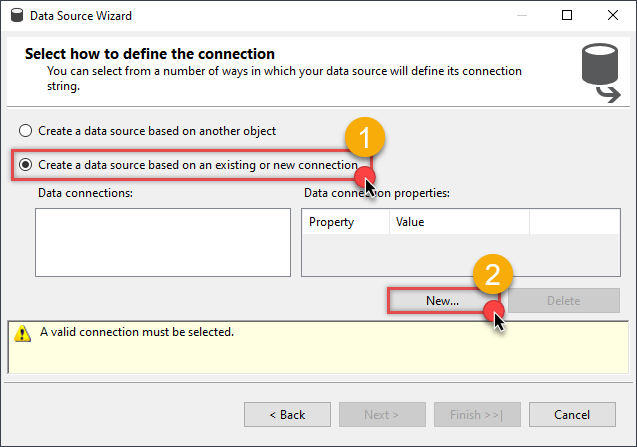

Once a window opens,

select Create a data source based on an existing or new connection option and

click New...:

-

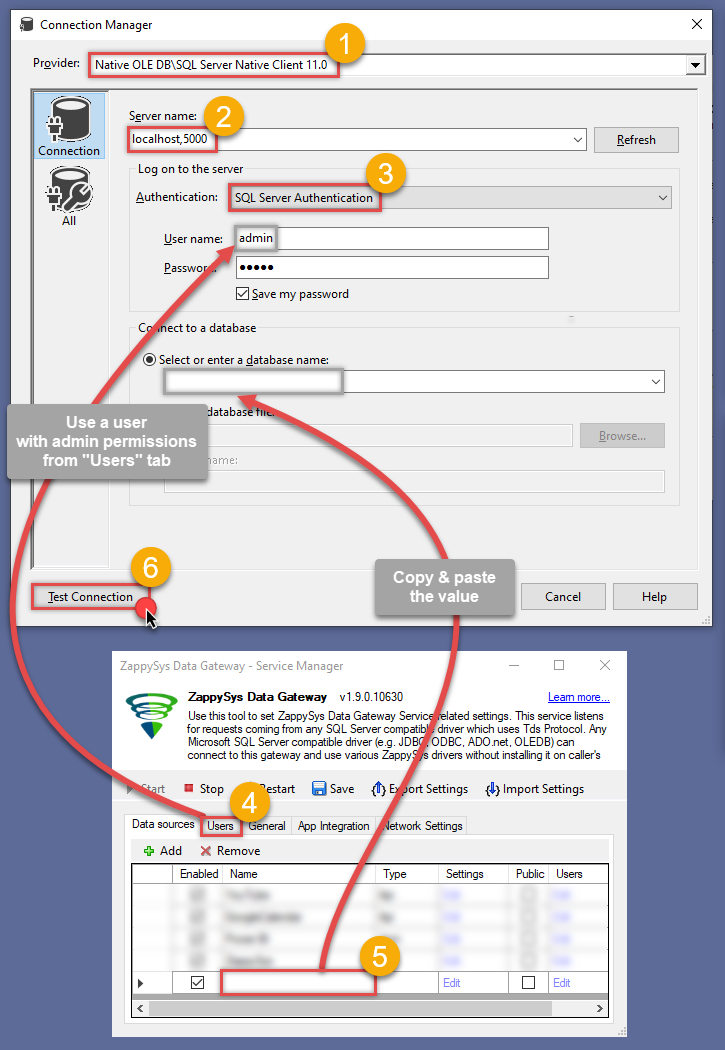

Here things become a little complicated, but do not despair, it's only for a little while.

Just perform these little steps:

- Select Native OLE DB\SQL Server Native Client 11.0 as provider.

- Enter your Server name (or IP address) and Port, separated by a comma.

- Select SQL Server Authentication option for authentication.

- Input User name which has admin permissions in the ZappySys Data Gateway.

- In Database name field enter the same data source name you use in the ZappySys Data Gateway.

- Hopefully, our hard work is done, when we Test Connection.

AmazonS3JsonFileDSNAmazonS3JsonFileDSN If SQL Server Native Client 11.0 is not listed as Native OLE DB provider, try using these:

If SQL Server Native Client 11.0 is not listed as Native OLE DB provider, try using these:- Microsoft OLE DB Driver for SQL Server

- Microsoft OLE DB Provider for SQL Server

-

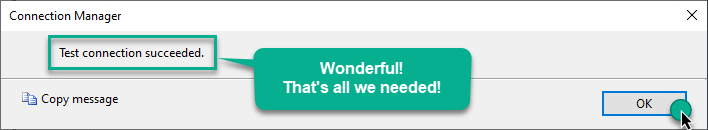

Indeed, life is easy again:

Add data source view

We have data source in place, it's now time to add a data source view. Let's not waste a single second and get on to it!

-

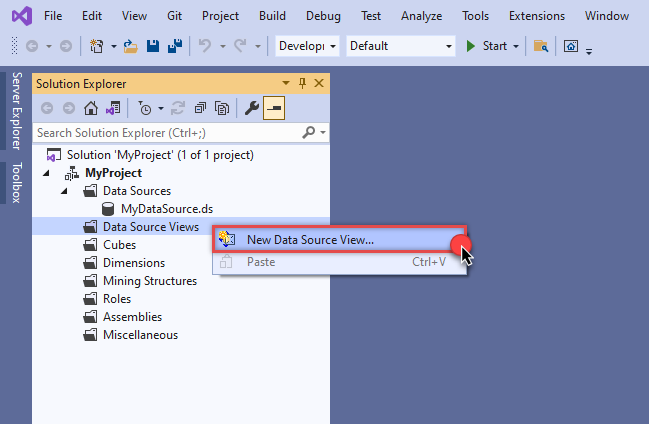

Start by right-clicking on Data Source Views and then choosing New Data Source View...:

-

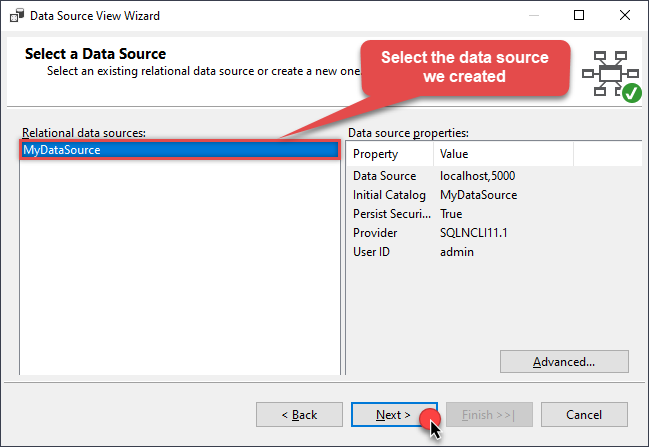

Select the previously created data source and click Next:

-

Ignore the Name Matching window and click Next.

-

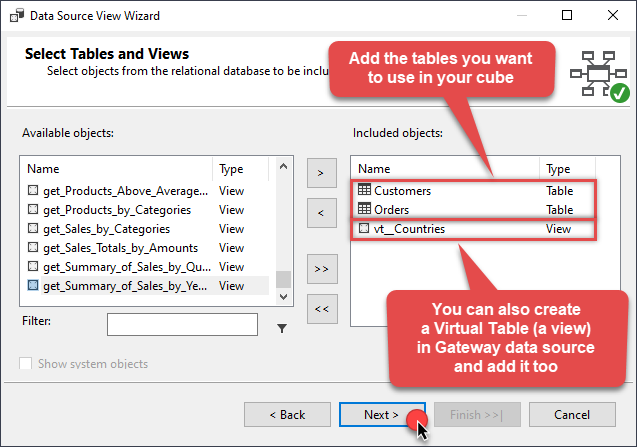

Add the tables you will use in your SSAS cube:

For cube dimensions, consider creating a Virtual Table in the Data Gateway's data source. Use the

For cube dimensions, consider creating a Virtual Table in the Data Gateway's data source. Use theDISTINCTkeyword in theSELECTstatement to get unique values from the facts table, like this:SELECT DISTINCT Country FROM CustomersFor demonstration purposes we are using sample tables which may not be available in Amazon S3 JSON File. -

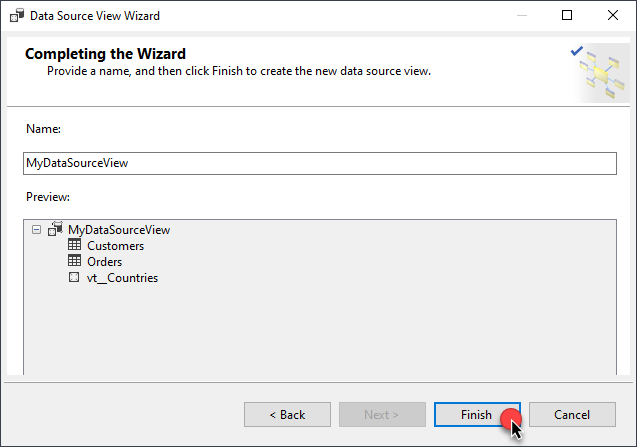

Review your data source view and click Finish:

-

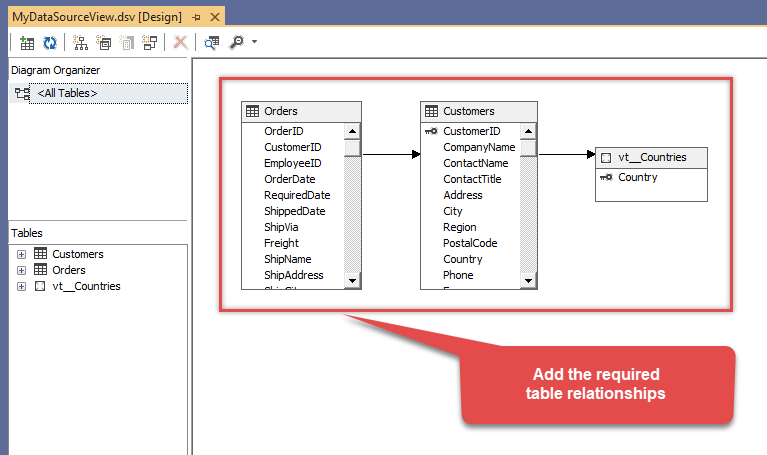

Add the missing table relationships and you're done!

Create cube

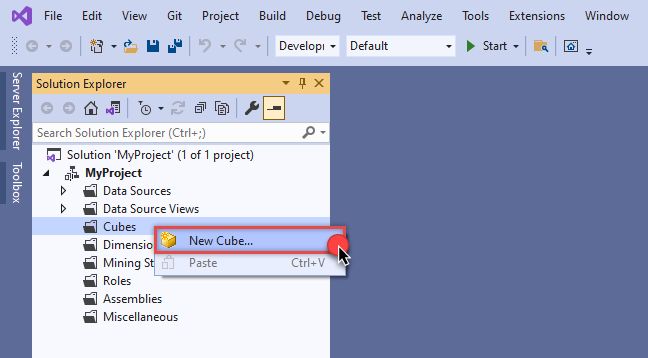

We have a data source view ready to be used by our cube. Let's create one!

-

Start by right-clicking on Cubes and selecting New Cube... menu item:

-

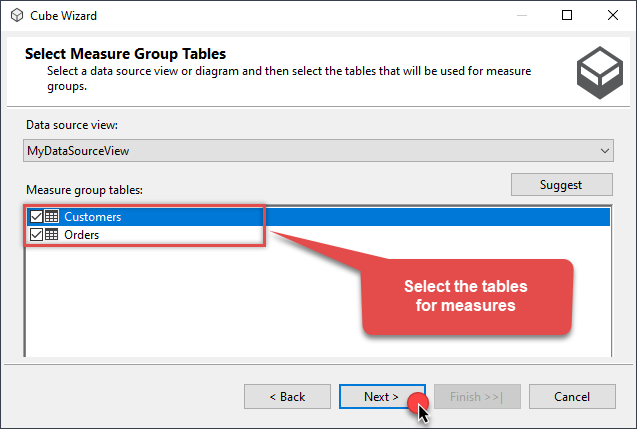

Select tables you will use for the measures:

-

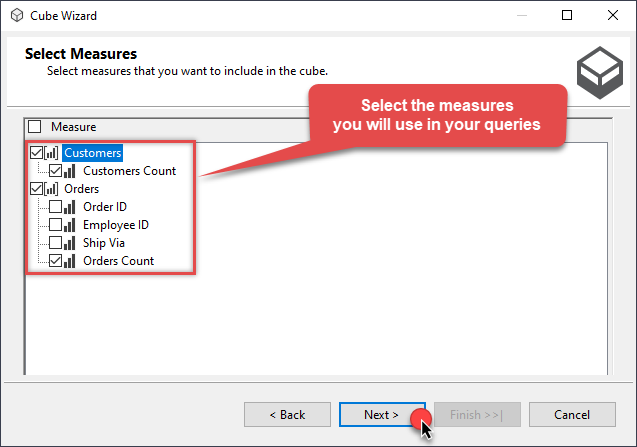

And then select the measures themselves:

-

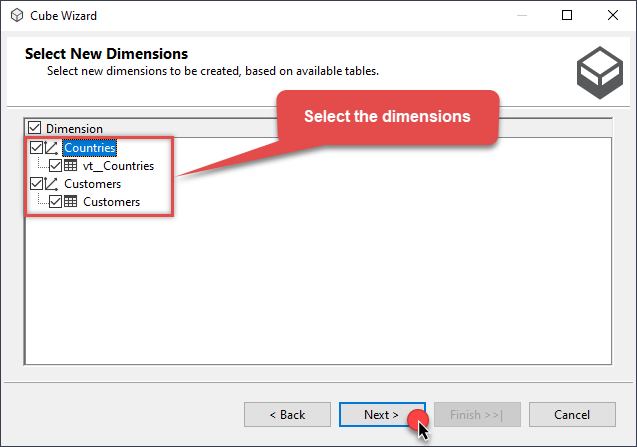

Don't stop and select the dimensions too:

-

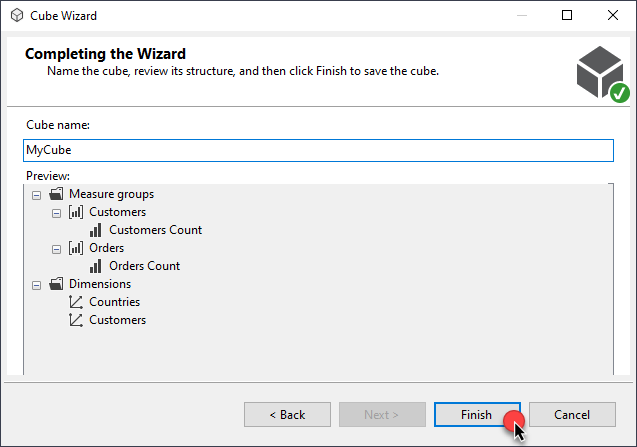

Move along and click Finish before the final steps:

-

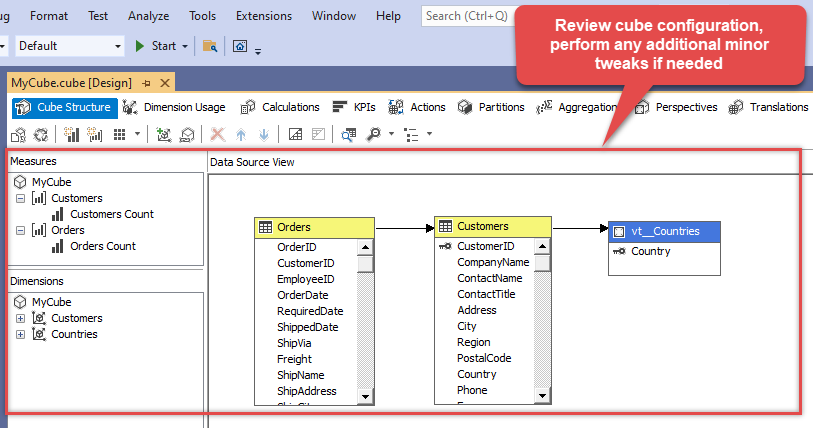

Review your cube before processing it:

-

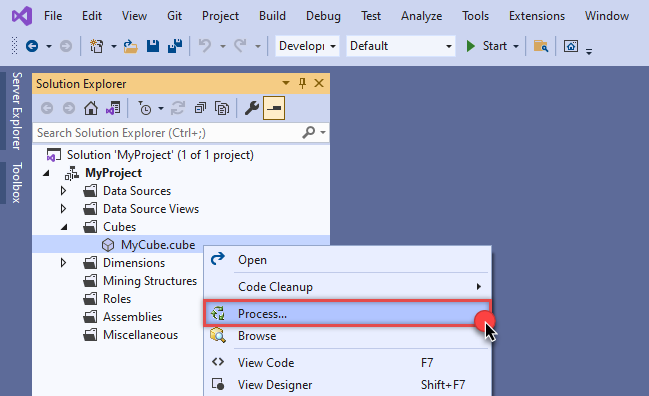

It's time for the grand finale! Hit Process... to create the cube:

-

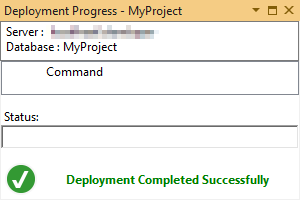

A splendid success!

Execute MDX query

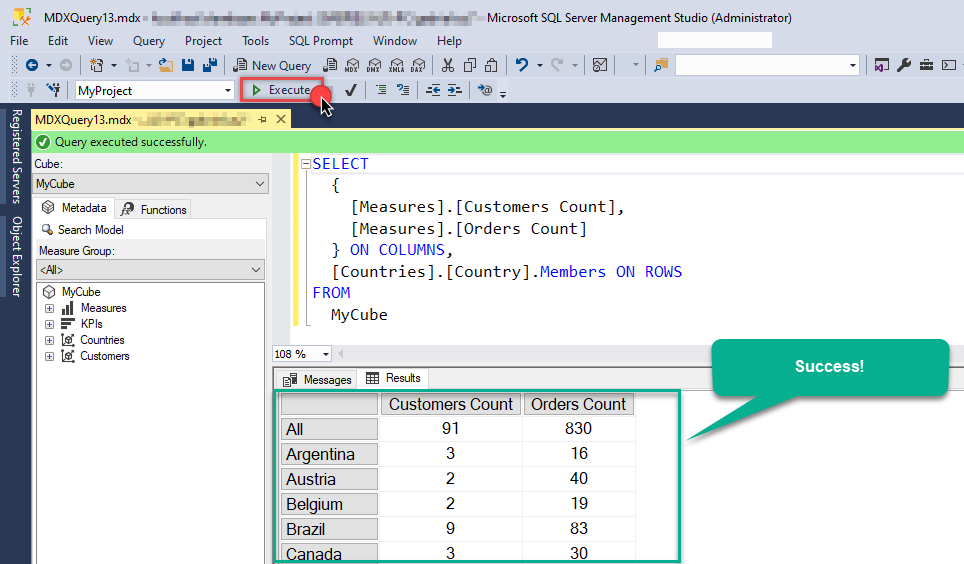

The cube is created and processed. It's time to reap what we sow! Just execute an MDX query and get Amazon S3 JSON File data in your SSAS cube:

Conclusion

In this guide, we demonstrated how to connect to Amazon S3 JSON File in SSAS and integrate your data — all without writing complex code.

Ready to get started? Download ODBC PowerPack now or ping us via chat if you still need help: