Introduction

You can connect to your Salesforce data in SSAS using the high-performance Salesforce ODBC Driver. We'll walk you through the entire setup.

Let's not waste time and get started!

Create data source in ZappySys Data Gateway

In this section we will create a data source for Salesforce in the Data Gateway. Let's follow these steps to accomplish that:

-

Download and install ODBC PowerPack (if you haven't already).

-

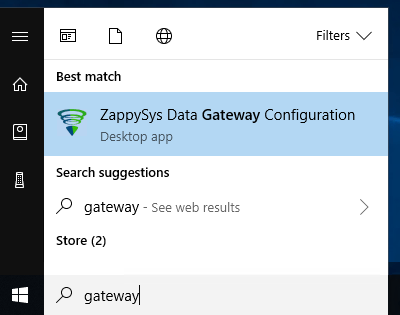

Search for gateway in the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

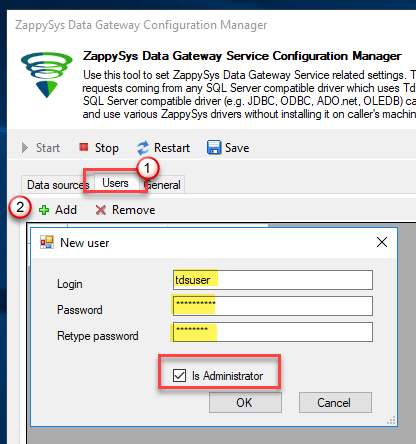

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

- In the Login field enter a username, e.g., john

- Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

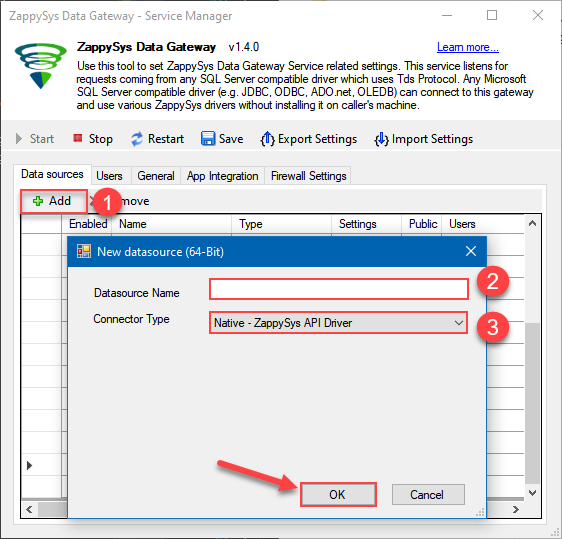

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name, e.g., SalesforceDSN (have it handy for later)

- Then select Native - ZappySys Salesforce Driver

- Finally, click OK

-

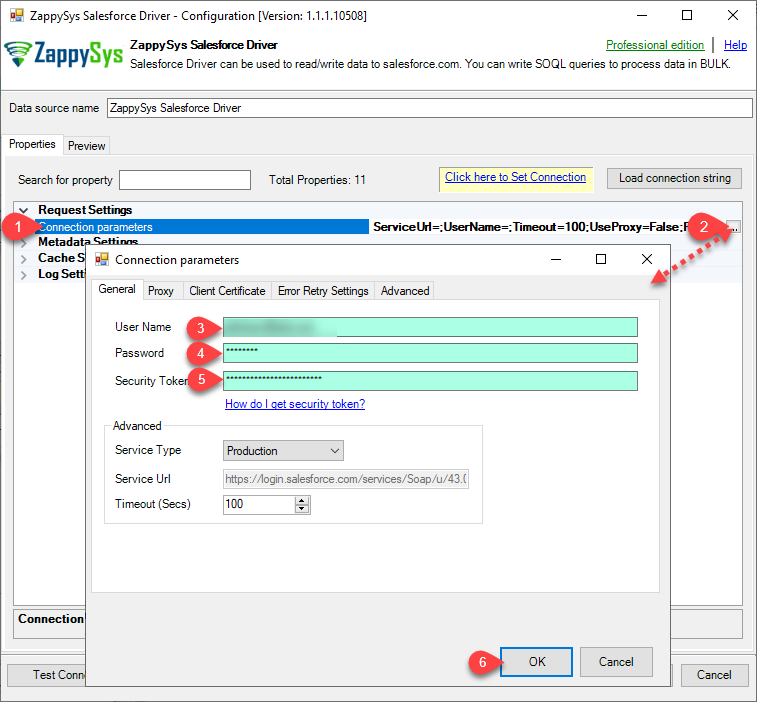

Now we need a Salesforce connection. Let's create it.

-

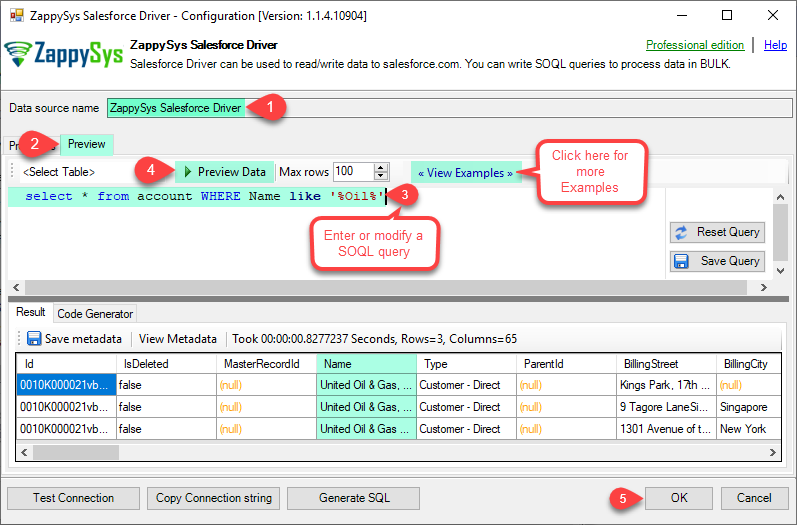

When the DSN Config Editor (with the ZappySys logo) opens, first change the default DSN Name at the top. Then click the Preview tab and either select a table from the Tables dropdown or enter a SOQL query, and click Preview Data.

This example shows how to write a simple SOQL (Salesforce Object Query Language) query that uses a WHERE clause:

SELECT * FROM Account WHERE Name like '%Oil%'

SOQL is similar to the SQL used by databases but much simpler, and many features you rely on in database queries may not be supported in SOQL (for example, the JOIN clause). You can, however, use queries for Insert, Update, Delete, and Upsert (update a record, or insert it if not found).
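If you generate such filters from user input, it helps to escape the search text before placing it in the query. The sketch below is only an illustration: the helper name `soql_like_query` is hypothetical, it lists fields explicitly instead of using `*`, and it assumes the standard SOQL backslash escapes for quotes and LIKE wildcards.

```python
def soql_like_query(sobject: str, fields: list[str], column: str, needle: str) -> str:
    """Build a SOQL SELECT whose LIKE filter matches `needle` anywhere in `column`."""
    # SOQL string literals are single-quoted; escape backslashes and quotes first.
    escaped = needle.replace("\\", "\\\\").replace("'", "\\'")
    # % and _ are LIKE wildcards, so escape them when they are meant literally.
    escaped = escaped.replace("%", "\\%").replace("_", "\\_")
    return f"SELECT {', '.join(fields)} FROM {sobject} WHERE {column} LIKE '%{escaped}%'"


query = soql_like_query("Account", ["Id", "Name"], "Name", "Oil")
print(query)
```

You can paste the printed query into the Preview tab to check it against your own Salesforce objects.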

-

Click OK to finish creating the data source

-

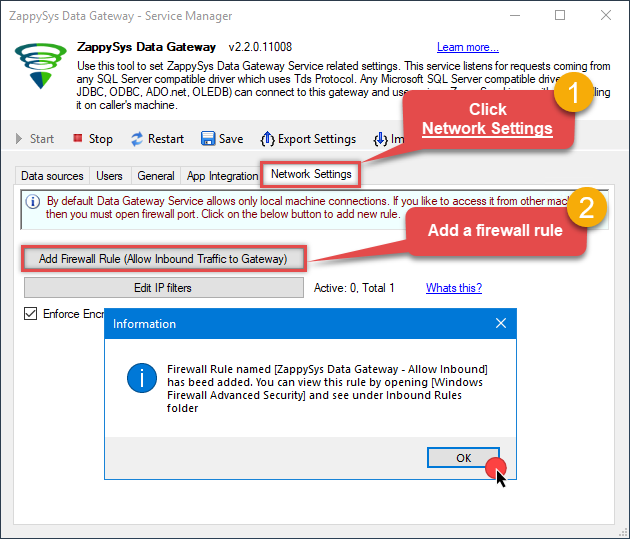

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

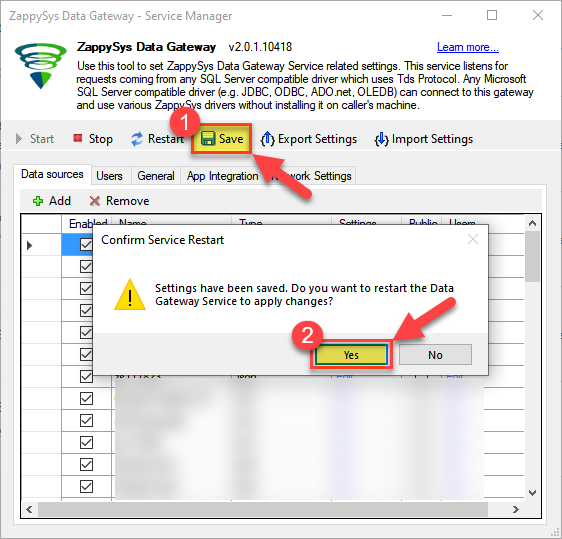

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.


Read Salesforce data in SSAS cube

With the data source created in the Data Gateway (previous step), we're now ready to read Salesforce data in an SSAS cube. Before we dive in, open Visual Studio and create a new Analysis Services project. Then, you're all set!

Create data source based on ZappySys Data Gateway

Let's start by creating a data source for a cube, based on the Data Gateway's data source we created earlier. So, what are we waiting for? Let's do it!

-

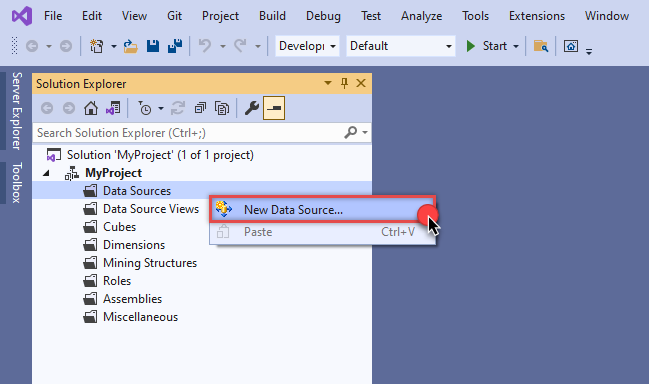

Create a new data source:

-

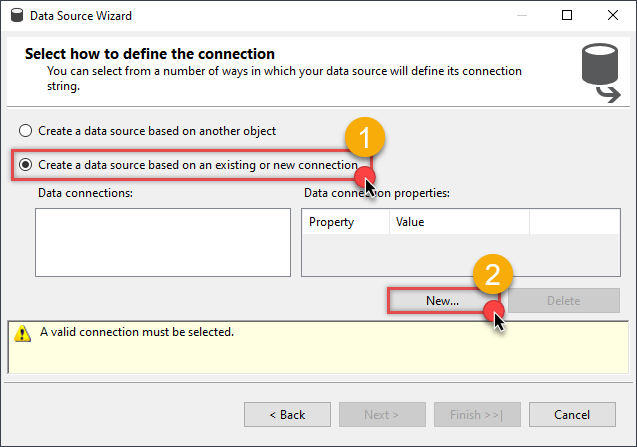

Once a window opens, select the Create a data source based on an existing or new connection option and click New...:

-

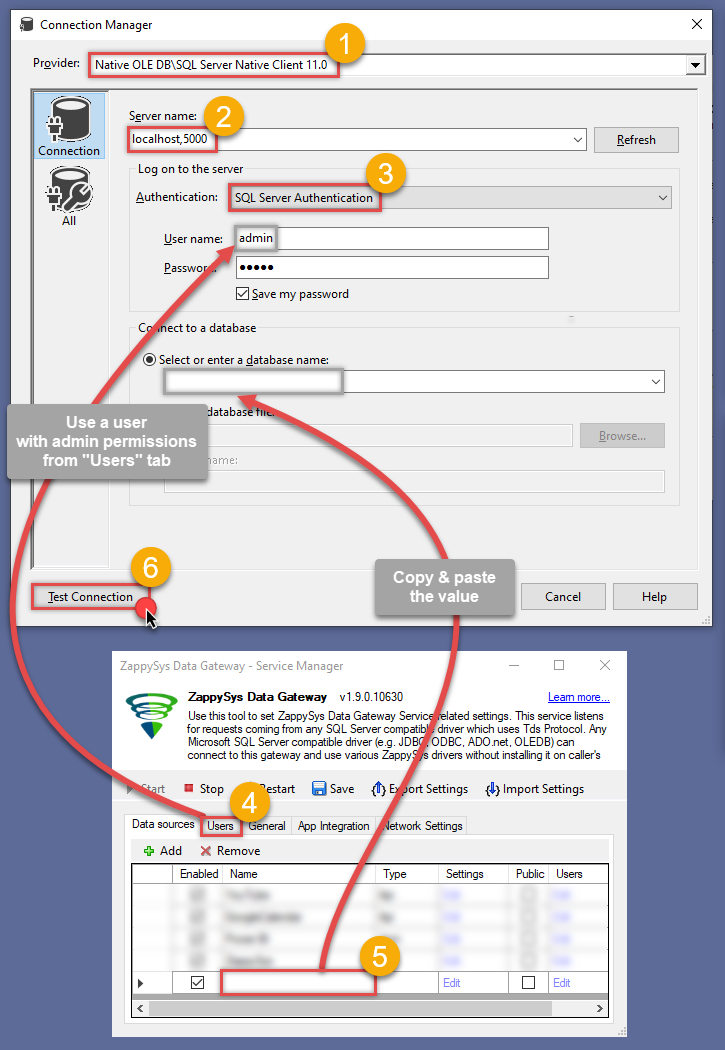

Here things become a little complicated, but do not despair, it's only for a little while.

Just perform these little steps:

- Select Native OLE DB\SQL Server Native Client 11.0 as provider.

- Enter your Server name (or IP address) and Port, separated by a comma.

- Select SQL Server Authentication option for authentication.

- Enter the User name (and Password) of a user that has admin permissions in the ZappySys Data Gateway.

- In the Database name field, enter the same data source name you used in the ZappySys Data Gateway (e.g., SalesforceDSN).

- Finally, click Test Connection to confirm that everything works.

If SQL Server Native Client 11.0 is not listed as a Native OLE DB provider, try using one of these:

- Microsoft OLE DB Driver for SQL Server

- Microsoft OLE DB Provider for SQL Server
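For reference, the dialog settings above correspond to an OLE DB connection string. The following is a minimal sketch of how those pieces fit together. The function name, the sample host `localhost`, port `5000`, and the credentials are placeholders, not values from this guide; the provider names are the standard identifiers for the three providers listed above.

```python
# Standard OLE DB provider identifiers for the three providers mentioned above.
PROVIDERS = {
    "SQL Server Native Client 11.0": "SQLNCLI11",
    "Microsoft OLE DB Driver for SQL Server": "MSOLEDBSQL",
    "Microsoft OLE DB Provider for SQL Server": "SQLOLEDB",
}


def gateway_connection_string(server, port, user, password, data_source,
                              provider="SQLNCLI11"):
    """Assemble an OLE DB connection string pointing at the Data Gateway."""
    parts = {
        "Provider": provider,
        "Data Source": f"{server},{port}",   # server and port separated by a comma
        "User ID": user,                     # Data Gateway user (SQL Server Authentication)
        "Password": password,
        "Initial Catalog": data_source,      # the Data Gateway data source name
    }
    for key, value in parts.items():
        # This sketch does not implement quoting, so reject values that would need it.
        if ";" in str(value):
            raise ValueError(f"{key} contains ';' and would need quoting")
    return ";".join(f"{key}={value}" for key, value in parts.items()) + ";"


conn_str = gateway_connection_string("localhost", 5000, "john", "secret", "SalesforceDSN")
print(conn_str)
```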

-

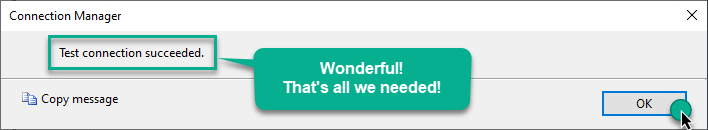

Indeed, life is easy again:

Add data source view

We have a data source in place, so now it's time to add a data source view. Let's not waste a single second and get on to it!

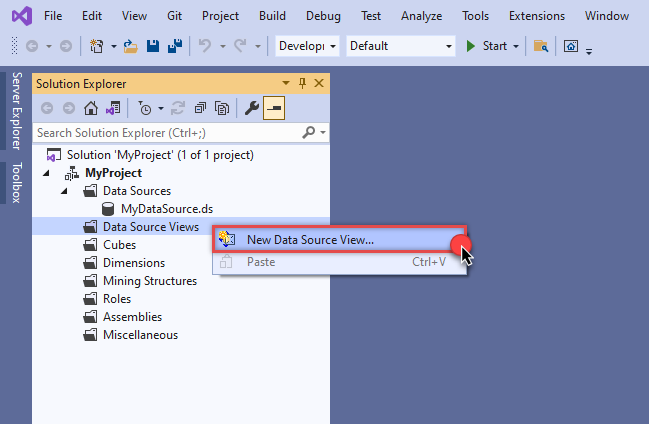

-

Start by right-clicking on Data Source Views and then choosing New Data Source View...:

-

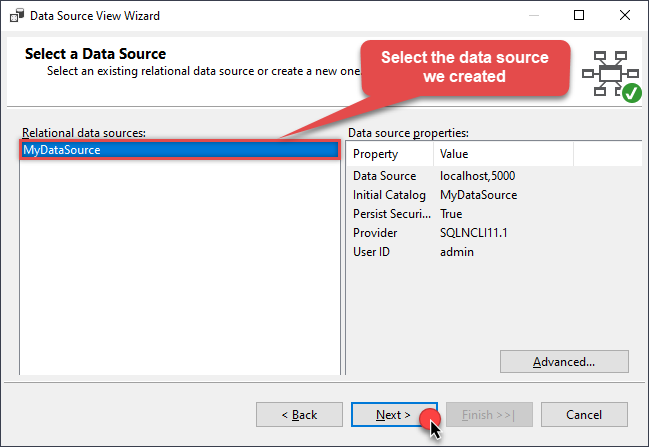

Select the previously created data source and click Next:

-

Ignore the Name Matching window and click Next.

-

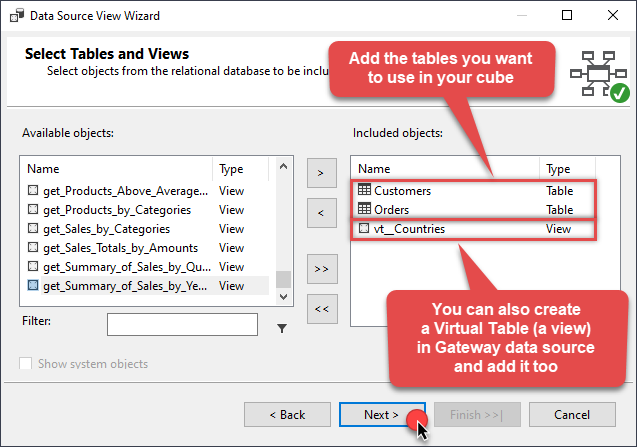

Add the tables you will use in your SSAS cube:

For cube dimensions, consider creating a Virtual Table in the Data Gateway's data source. Use the DISTINCT keyword in the SELECT statement to get unique values from the facts table, like this:

SELECT DISTINCT Country FROM Customers

For demonstration purposes we are using sample tables which may not be available in Salesforce.
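To see what DISTINCT does to a facts table, here is a small self-contained sketch that runs the same kind of query against an in-memory SQLite database. The Customers rows are made up for illustration, and SQLite only stands in for the Data Gateway here.

```python
import sqlite3

# In-memory stand-in for a facts table; the rows are sample data only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Customers (Name TEXT, Country TEXT)")
conn.executemany(
    "INSERT INTO Customers VALUES (?, ?)",
    [("Alice", "USA"), ("Bob", "Canada"), ("Carol", "USA")],
)

# DISTINCT collapses repeated countries into one row each, which is the
# shape a dimension table needs.
countries = [
    row[0]
    for row in conn.execute("SELECT DISTINCT Country FROM Customers ORDER BY Country")
]
print(countries)
conn.close()
```

Three fact rows yield two dimension members, one per unique country.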

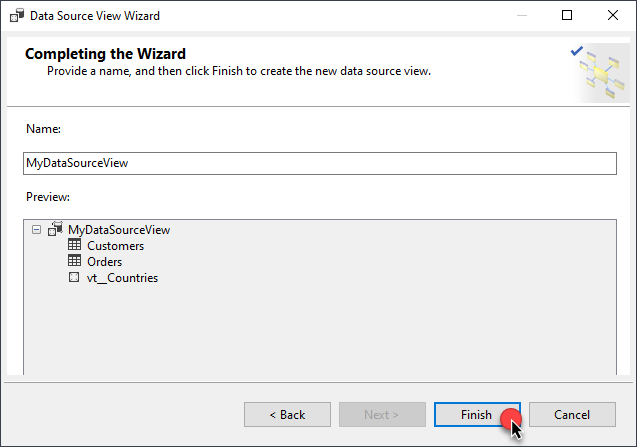

Review your data source view and click Finish:

-

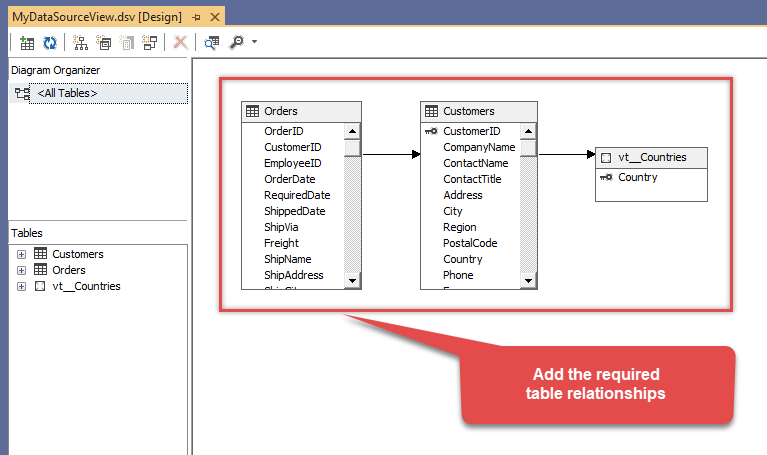

Add the missing table relationships and you're done!

Create cube

We have a data source view ready to be used by our cube. Let's create one!

-

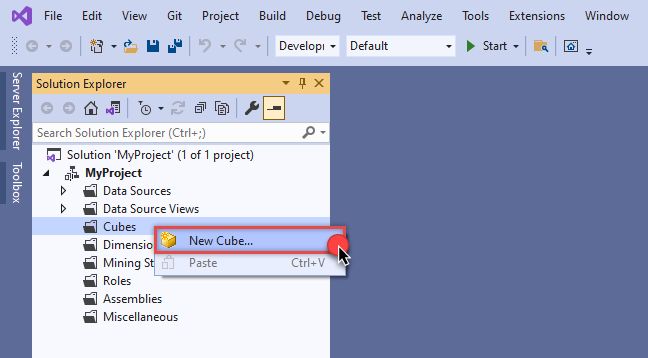

Start by right-clicking on Cubes and selecting New Cube... menu item:

-

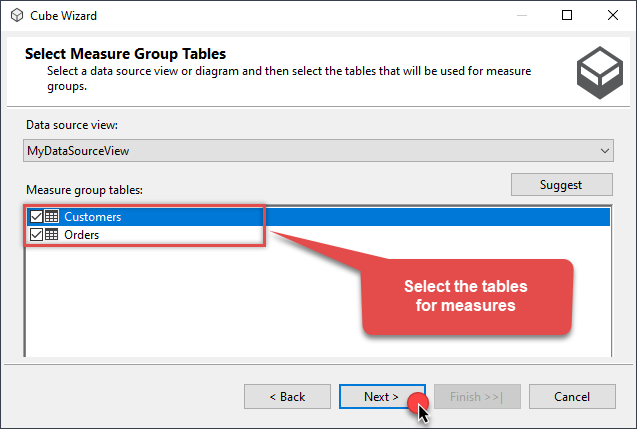

Select tables you will use for the measures:

-

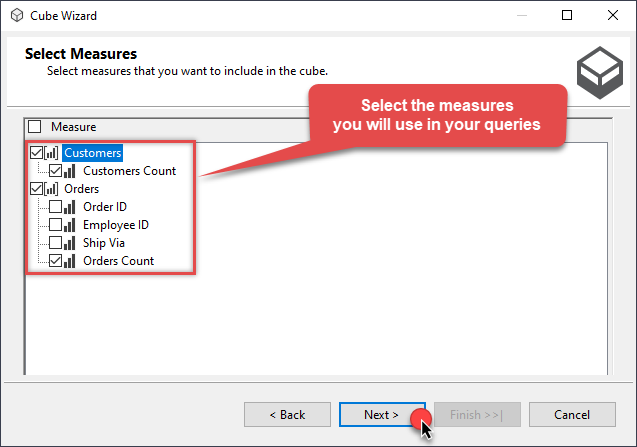

And then select the measures themselves:

-

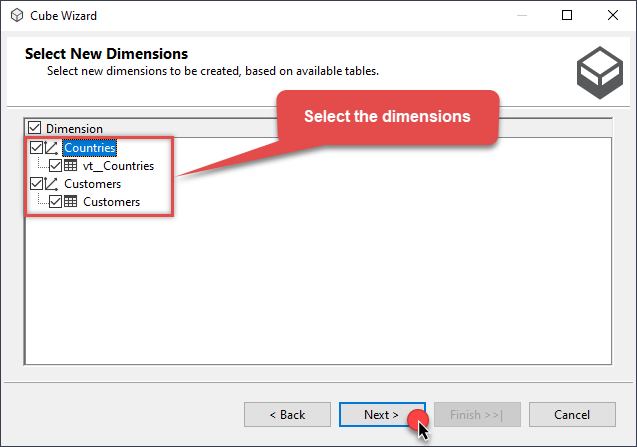

Keep going and select the dimensions too:

-

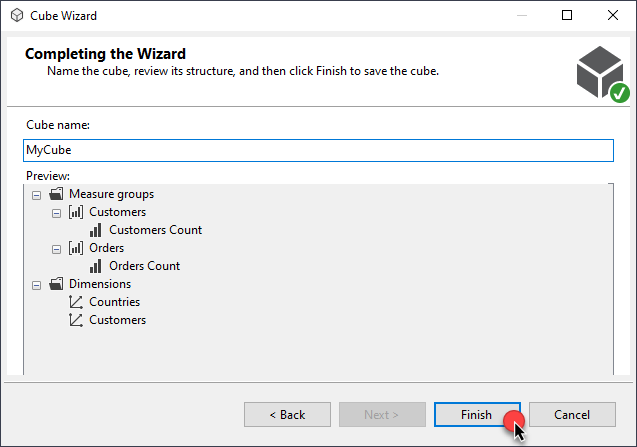

Move along and click Finish before the final steps:

-

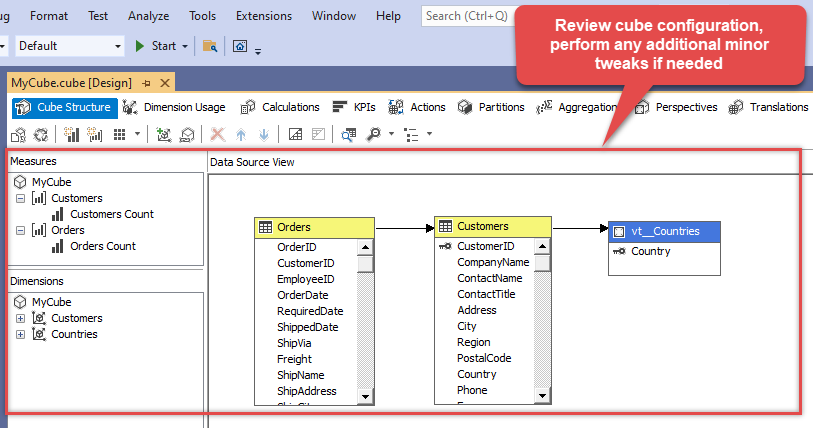

Review your cube before processing it:

-

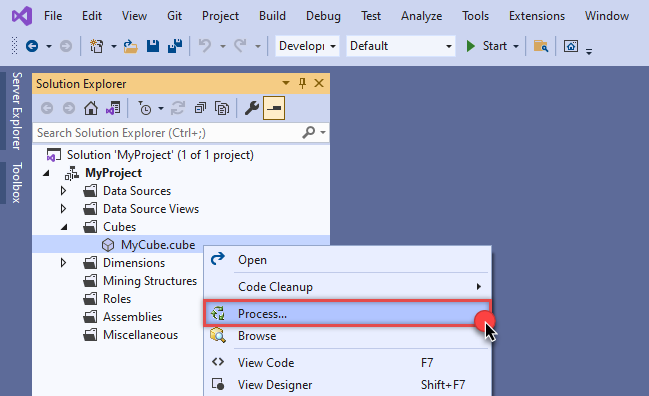

It's time for the grand finale! Hit Process... to create the cube:

-

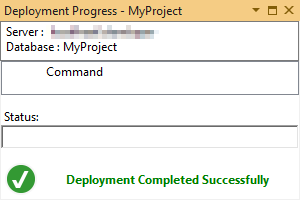

A splendid success!

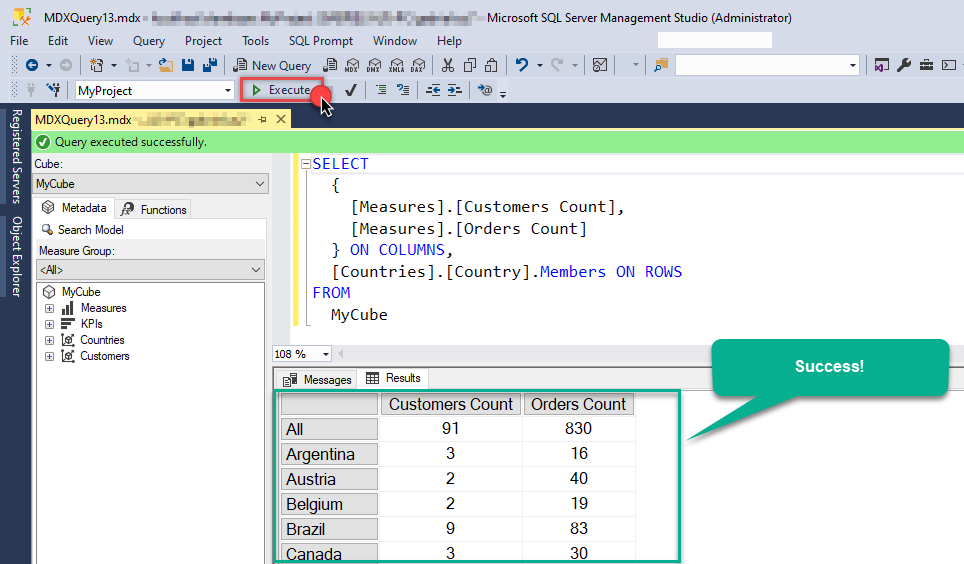

Execute MDX query

The cube is created and processed. It's time to reap what we sow! Just execute an MDX query and get Salesforce data in your SSAS cube:
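As a rough sketch of what such a query can look like, the helper below composes a basic MDX SELECT that puts one measure on columns and the members of one dimension attribute on rows. All names used here (the function, the cube, the measure, and the dimension) are hypothetical, so substitute the ones from your own cube.

```python
def mdx_identifier(name: str) -> str:
    """Wrap a name in square brackets, doubling any closing bracket inside it."""
    return "[" + name.replace("]", "]]") + "]"


def simple_mdx_query(cube: str, measure: str, dimension: str, attribute: str) -> str:
    """Compose: one measure on columns, all members of one attribute on rows."""
    return (
        f"SELECT [Measures].{mdx_identifier(measure)} ON COLUMNS, "
        f"{mdx_identifier(dimension)}.{mdx_identifier(attribute)}.MEMBERS ON ROWS "
        f"FROM {mdx_identifier(cube)}"
    )


# Hypothetical cube, measure, and dimension names.
mdx = simple_mdx_query("SalesforceCube", "Account Count", "Account", "Billing City")
print(mdx)
```

Paste the printed statement into an MDX query window in SQL Server Management Studio and adjust the names until they match your cube.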

Query Examples

This guide provides examples for using the ZappySys Salesforce ODBC Driver to perform bulk API operations and DML (Data Manipulation Language) actions on Salesforce. You’ll learn how to leverage the Bulk API to insert, update, upsert, and delete large datasets from external sources such as MSSQL, CSV, Oracle, and other ODBC-compatible systems. By using external IDs and lookup fields, you can easily map data from your source systems to Salesforce. These examples will help you execute high-performance operations efficiently using EnableBulkMode, EXTERNAL options, and more.

Bulk Mode - Insert Large Volume of Data from External Source (e.g., MSSQL) into Salesforce

This example demonstrates how to use the EnableBulkMode option to insert a large volume of records into Salesforce using the Bulk API (Job-based mode). By default, the standard mode writes data in batches of 200 rows. However, when Bulk API mode is enabled, it can send up to 10,000 rows per batch, offering better performance for large datasets. Note that using Bulk API mode may not provide performance benefits for small datasets (e.g., a few hundred rows).

In this example, the driver type is set to MSSQL. For other data sources such as CSV, REST API, or Oracle, update the driver type to ODBC and modify the connection string and query accordingly.

Ensure that your source query returns column names that match the target Salesforce object fields. The EXTERNAL option is used to map Salesforce target fields based on the output of the source query.

Important: If you’re using Windows authentication, the service account running the ZappySys Data Gateway must have the appropriate permissions on the source system.

INSERT INTO Account

SOURCE (

'MSSQL',

'Data Source=localhost;Initial Catalog=tempdb;Integrated Security=true',

'SELECT TOP 1000000

C_NAME AS Name,

C_CITY AS BillingCity,

C_LOC AS NumberofLocations__c

FROM very_large_staging_table'

)

WITH (

Output = 1,

EnableBulkMode = 1

)

-- Notes:

-- 'MSSQL': External driver type (MSSQL, ODBC, OLEDB)

-- Output: Enables capturing __RowStatus and __ErrorMessage

-- EnableBulkMode: Improves performance with bulk batches (uses 10000 rows per batch rather than 200)
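To make the batch sizes concrete, this short sketch counts how many batches each mode would need for a given row count, using the 200-row and 10,000-row figures quoted above. It is plain arithmetic and does not call the driver.

```python
STANDARD_BATCH_ROWS = 200     # default batch size mentioned above
BULK_BATCH_ROWS = 10_000      # maximum batch size in Bulk API mode


def batch_count(total_rows: int, rows_per_batch: int) -> int:
    """Number of batches needed to send total_rows (integer ceiling division)."""
    return (total_rows + rows_per_batch - 1) // rows_per_batch


for rows in (300, 1_000_000):
    standard = batch_count(rows, STANDARD_BATCH_ROWS)
    bulk = batch_count(rows, BULK_BATCH_ROWS)
    print(f"{rows} rows: {standard} standard batches vs {bulk} bulk batches")
```

For 1,000,000 rows this is 5,000 batches versus 100. For 300 rows it is 2 versus 1, which is why bulk mode brings little benefit for small datasets.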

Bulk Mode - Insert Records with Lookup Field (Read from External Source)

This example demonstrates how to use the EnableBulkMode option to insert a large number of records into Salesforce using the Bulk API (Job-based mode). Additionally, it shows how to set a lookup field—specifically the Owner field—by referencing an external ID from the User object instead of using the internal Salesforce ID.

If you are performing an Update operation, you must include the Id field in the source data. If your source field has a different name, alias it to Id in the SQL query. For Upsert operations, you can specify a custom external ID field using the Key='ExternalId_Field_Name' option. However, for standard Update operations, the Id field is mandatory.

By default, data is written in batches of 200 rows. When Bulk API mode is enabled, up to 10,000 rows can be sent per batch. This improves performance for large datasets, but offers little advantage for smaller volumes.

In this example, the driver type is set to MSSQL. For other sources such as CSV, REST API, or Oracle, change the driver type to ODBC and adjust the connection string and query accordingly.

Make sure the query outputs column names that match the target fields in the Salesforce object. The EXTERNAL option is used to map input columns to Salesforce fields dynamically.

Important: If you’re using Windows authentication, ensure that the service account running the ZappySys Data Gateway has the appropriate access permissions on the source system.

INSERT INTO Account

SOURCE (

'MSSQL',

'Data Source=localhost;Initial Catalog=tempdb;Integrated Security=true',

'SELECT TOP 1000000

Account_Name as Name,

AccountOwnerId as [Owner.ExternalId]

FROM very_large_staging_table'

)

WITH (

Output = 1,

EnableBulkMode = 1

)

-- Notes:

-- 'MSSQL': External driver type (MSSQL, ODBC, OLEDB)

-- Output: Enables capturing __RowStatus and __ErrorMessage

-- EnableBulkMode: Improves performance with bulk batches (uses 10000 rows per batch rather than 200)

Bulk Mode - Delete Large Volume of Data (Read IDs from External Source)

This example demonstrates how to use the EnableBulkMode option to delete a large number of records from Salesforce using the Bulk API (Job-based mode). To perform a delete operation, the source query must return the Id column. If your source column has a different name, make sure to alias it as Id in the SQL query.

By default, data is processed in batches of 200 rows. When Bulk API mode is enabled, batches can include up to 10,000 rows, which significantly improves performance when working with large datasets. However, for small volumes (a few hundred records), Bulk API mode may not offer a noticeable performance benefit.

In this example, the driver type is set to MSSQL. For other data sources such as CSV, REST API, or Oracle, set the driver type to ODBC and update the connection string and query as needed.

Ensure that the query output includes column names that match the target Salesforce object fields. The EXTERNAL option allows dynamic mapping of input columns to Salesforce fields based on the source query.

Important: If you’re using Windows authentication, make sure the service account running the ZappySys Data Gateway has the necessary permissions to access the data source.

DELETE FROM Account

SOURCE (

'MSSQL',

'Data Source=localhost;Initial Catalog=tempdb;Integrated Security=true',

'SELECT TOP 1000000

Account_ID as Id

FROM very_large_staging_table'

)

WITH (

Output = 1,

EnableBulkMode = 1

)

-- Notes:

-- 'MSSQL': External driver type (MSSQL, ODBC, OLEDB)

-- Output: Enables capturing __RowStatus and __ErrorMessage

-- EnableBulkMode: Improves performance with bulk batches (uses 10000 rows per batch rather than 200)

Bulk Mode - Update Large Volume of Data (Read from External Source)

This example illustrates how to use the EnableBulkMode option to update a large number of records in Salesforce via the Bulk API (Job-based mode). When performing an Update operation, the source query must include the Id column. If the source column is named differently, be sure to alias it as Id in your SQL query.

By default, records are processed in batches of 200 rows. When Bulk API mode is enabled, batches can handle up to 10,000 rows, which greatly improves performance for large datasets. However, for smaller datasets (e.g., a few hundred records), Bulk API may not offer a significant performance boost.

In this example, the driver type is set to MSSQL. For other sources such as CSV, REST API, or Oracle, change the driver type to ODBC and modify the connection string and query accordingly.

Ensure that your query returns column names matching the fields in the Salesforce target object. The EXTERNAL option is used to dynamically map input columns to Salesforce fields based on the query output.

Important: When using Windows authentication, the service account running the ZappySys Data Gateway must have the necessary permissions on the source system.

UPDATE Account

SOURCE (

'MSSQL',

'Data Source=localhost;Initial Catalog=tempdb;Integrated Security=true',

'SELECT TOP 1000000

Account_ID as Id,

Account_Name as Name,

City as BillingCity

FROM very_large_staging_table'

)

WITH (

Output = 1,

EnableBulkMode = 1

)

-- Notes:

-- 'MSSQL': External driver type (MSSQL, ODBC, OLEDB)

-- Output: Enables capturing __RowStatus and __ErrorMessage

-- EnableBulkMode: Improves performance with bulk batches (uses 10000 rows per batch rather than 200)
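If you assemble the source query in a script, you can catch a missing Id alias before the statement ever reaches Salesforce. The helper below is a hypothetical sketch, not part of the driver: it builds the SELECT used inside SOURCE(...) from a mapping of source columns to Salesforce fields and refuses to build an UPDATE or DELETE query without an Id column.

```python
def build_source_query(operation: str, table: str, column_map: dict[str, str]) -> str:
    """Build the SELECT for SOURCE(...), aliasing source columns to Salesforce fields."""
    # UPDATE and DELETE identify target records by Id, so the alias must be present.
    if operation.upper() in ("UPDATE", "DELETE") and "Id" not in column_map.values():
        raise ValueError(f"{operation} needs a source column aliased as Id")
    select_list = ", ".join(f"{source} AS {field}" for source, field in column_map.items())
    return f"SELECT {select_list} FROM {table}"


update_query = build_source_query(
    "UPDATE",
    "very_large_staging_table",
    {"Account_ID": "Id", "Account_Name": "Name", "City": "BillingCity"},
)
print(update_query)
```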

Bulk Mode - Update Lookup Field (Read from External Source)

This example shows how to use the EnableBulkMode option to update a large number of Salesforce records using the Bulk API (Job-based mode). In this scenario, we update a lookup field—specifically the Owner field—by referencing the external ID from the User object instead of using the internal Salesforce ID.

When performing an Update, the Id field must be included in the source data. If your source column has a different name, alias it as Id in the SQL query. For Upsert operations, you can specify a custom external ID using the Key='ExternalId_Field_Name' option. However, for standard Update operations, the Id field is required.

By default, the system processes 200 rows per batch. When EnableBulkMode is enabled, it can process up to 10,000 rows per batch, offering improved performance for large datasets. This mode is less effective for smaller data volumes.

In this example, the driver type is set to MSSQL. For other data sources (e.g., CSV, REST API, Oracle), change the driver type to ODBC and update the connection string and query as needed.

Ensure the query returns column names that match the fields in the target Salesforce object. The EXTERNAL option dynamically maps input columns to Salesforce fields based on the query output.

Important: If using Windows authentication, ensure the service account running the ZappySys Data Gateway has appropriate permissions on the source system.

UPDATE Account

SOURCE (

'MSSQL',

'Data Source=localhost;Initial Catalog=tempdb;Integrated Security=true',

'SELECT TOP 1000000

Account_ID as Id,

Account_Name as Name,

AccountOwnerId as [Owner.ExternalId]

FROM very_large_staging_table'

)

WITH (

Output = 1,

EnableBulkMode = 1

)

-- Notes:

-- 'MSSQL': External driver type (MSSQL, ODBC, OLEDB)

-- Output: Enables capturing __RowStatus and __ErrorMessage

-- EnableBulkMode: Improves performance with bulk batches (uses 10000 rows per batch rather than 200)

External Input from ODBC - Insert Multiple Rows from ODBC Source (e.g., CSV) into Salesforce

This example demonstrates how to perform an INSERT operation in Salesforce using multiple input rows from an external data source such as MSSQL, ODBC, or OLEDB. The operation reads records via an external query and inserts them directly into Salesforce.

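In this example, the driver type is set to ODBC and the source is CSV data read through the ZappySys CSV Driver. For other systems such as REST API or Oracle, keep the driver type as ODBC and update the connection string and query accordingly; for SQL Server, you can set the driver type to MSSQL instead.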

Ensure that the query returns column names that match the fields in the Salesforce target object. The EXTERNAL option is used to map these input columns to the corresponding Salesforce fields based on the source query output.

INSERT INTO Account

SOURCE (

'ODBC', -- External driver type: MSSQL, ODBC, or OLEDB

'Driver={ZappySys CSV Driver};DataPath=c:\somefile.csv', -- ODBC connection string

'

SELECT

Acct_Name AS Name,

Billing_City AS BillingCity,

Locations AS NumberofLocations__c

FROM $

WITH (

-- Either use SRC to point to a file or use inline DATA. Comment out one as needed.

-- Examples:

-- SRC = ''c:\file_1.csv''

-- SRC = ''c:\some*.csv''

-- SRC = ''https://abc.com/api/somedata-in-csv''

DATA = ''Acct_Name,Billing_City,Locations

Account001,City001,1

Account002,City002,2

Account003,City003,3''

)'

)

-- Notes:

-- Column aliases in SELECT must match Salesforce target fields.

-- Preview the Account object to verify available fields.

WITH (

Output = 1, -- Capture __RowStatus and __ErrorMessage for each record

-- EnableBulkMode = 1, -- Use Bulk API (recommended for 5,000+ rows)

EnableParallelThreads = 1, -- Use multiple threads for real-time inserts

MaxParallelThreads = 6 -- Set maximum number of threads

)

DML - Upsert Lookup Field Value Using External ID Instead of Salesforce ID

This example demonstrates how to set a lookup field value in Salesforce using an external ID rather than the internal Salesforce ID during DML operations such as INSERT, UPDATE, or UPSERT.

Typically, updating a lookup field requires the Salesforce ID of the related record. However, Salesforce also allows referencing a related record using an external ID field. To do this, use the following field name syntax:

[relationship_name.external_id_field_name(child_object_name)]

- relationship_name: The API name of the relationship (e.g., Owner or YourObject__r).

- external_id_field_name: A custom field on the related object, marked as External ID.

- child_object_name (optional): The API name of the related object. If omitted, Salesforce derives it from the relationship name (without the __r suffix).

Example:

To assign a record owner using a custom external ID on the User object:

Owner.SomeExternalId__c(User)

- Owner: The relationship name for the user record.

- SomeExternalId__c: A custom external ID field in the User object.

- User: The related (child) object name.

If you're using the SOURCE(...) clause to read input data and enabling EnableBulkMode=1 in the WITH(...) clause, you can omit the child object name. In that case, use the format:

relationship_name.external_id_field_name
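Both forms of the field name can be produced by one small helper. This is only a sketch of the naming syntax described above; the function name `lookup_field` is hypothetical.

```python
from typing import Optional


def lookup_field(relationship: str, external_id_field: str,
                 child_object: Optional[str] = None) -> str:
    """Format a bracketed lookup column name that references an external ID."""
    name = f"{relationship}.{external_id_field}"
    if child_object:
        # The child object goes in parentheses; leave it out for the short form.
        name += f"({child_object})"
    return f"[{name}]"


print(lookup_field("Owner", "SomeExternalId__c", "User"))
print(lookup_field("Owner", "SomeExternalId__c"))
```

The first call yields the full form with the child object, and the second yields the short form described above for bulk mode with SOURCE(...).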

Setting a Field to NULL:

To set a lookup or standard field to null, use:

FieldName = null

For example:

AccountId = null

Avoid using:

relation_name.external_id_name(target_table) = null

More Information:

For full details and examples, visit the official guide: ZappySys Docs - External ID in Lookup Fields

-- Upsert record into Salesforce Account object

UPSERT INTO Account (

Name,

BillingCity,

[Owner.SomeExternalId__c(User)] -- Use external ID field on related Owner (User) object

)

VALUES (

'mycompany name',

'New York',

'K100' -- External ID value of the User (Owner)

)

WITH (

KEY = 'SupplierId__c', -- External ID field used for UPSERT on Account object

Output = 1 -- Return __RowStatus and __ErrorMessage for result diagnostics

)
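With Output = 1, each result row carries __RowStatus and __ErrorMessage columns, so a script that runs the statement can separate failed rows afterwards. The sketch below works on rows already fetched as dictionaries. The sample rows and status values are invented for illustration, and it assumes (this guide does not confirm it) that a non-empty __ErrorMessage marks a failed row.

```python
def failed_rows(rows):
    """Return the rows whose __ErrorMessage is non-empty (assumed to mean failure)."""
    return [row for row in rows if (row.get("__ErrorMessage") or "").strip()]


# Invented sample output; real status values and messages may look different.
results = [
    {"Name": "mycompany name", "__RowStatus": "OK", "__ErrorMessage": ""},
    {"Name": "other company", "__RowStatus": "Failed", "__ErrorMessage": "Required field missing"},
    {"Name": "third company", "__RowStatus": "OK", "__ErrorMessage": None},
]

for row in failed_rows(results):
    print(row["Name"], "->", row["__ErrorMessage"])
```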

Supported WITH Properties in BULK Mode

When using the ZappySys Salesforce ODBC Driver with BULK mode, you can pass additional options using the WITH clause to customize behavior.

Here are other supported properties commonly used in BULK mode:

INSERT INTO Account/UPDATE Account/DELETE FROM Account

SOURCE(...)

WITH(

Output=1 /*Other values can be Output='*' , Output=1 , Output=0 , Output='Col1,Col2...ColN'. When Output option is supplied then error is not thrown but you can capture status and message in __RowStatus and __ErrorMessage output columns*/

,EnableBulkMode=1 --use Job Style Bulk API (uses 10000 rows per batch rather than 200)

--,MaxRowsPerJob=500000 --useful to control memory footprint in driver

--,ConcurrencyMode='Default' /* or 'Parallel' or 'Serial' - Must set BulkApiVersion=2 to use this, Bulk API V1 doesnt support this yet. If you get locking errors then change to Serial*/

--,BulkApiVersion=2 --default is V1

--,IgnoreFieldsIfInputNull=1 --Set this option to True if you wish to ignore fields if input value is NULL. By default target field is set to NULL if input value is NULL.

--,FieldsToSetNullIfInputNull='SomeColum1,SomeColumn5,SomeColumn7' --Comma separated CRM entity field names which you like to set as NULL when input value is NULL. This option is ignored if IgnoreFieldsIfInputNull is not set to True.

--,AssignmentRuleId='xxxxx' --rule id to invoke on value assignment

--,UseDefaultAssignmentRule=1 --sets whether you like to use default rule

--,AllOrNone=1 --If true, any failed records in a call cause all changes for the call to be rolled back. Record changes aren't committed unless all records are processed successfully. The default is false. Some records can be processed successfully while others are marked as failed in the call results.

--,OwnerChangeOptions='option1,option2...optionN' -- use one or more options from below. Use '-n' suffix to disable option execution e.g. TransferOpenActivities-n

-->>> Available owner change options: EnforceNewOwnerHasReadAccess,TransferOpenActivities,TransferNotesAndAttachments,TransferOthersOpenOpportunities,TransferOwnedOpenOpportunities,TransferOwnedClosedOpportunities,TransferOwnedOpenCases,TransferAllOwnedCases,TransferContracts,TransferOrders,TransferContacts,TransferArticleOwnedPublishedVersion,TransferArticleOwnedArchivedVersions,TransferArticleAllVersions,KeepAccountTeam,KeepSalesTeam,KeepSalesTeamGrantCurrentOwnerReadWriteAccess,SendEmail

-->>> For more information visit https://zappysys.com/link/?id=10141

--,AllowFieldTruncation=1 --If true, truncate field values that are too long, which is the behavior in API versions 14.0 and earlier.

--,AllowSaveOnDuplicates=1 --Set to true to save the duplicate record. Set to false to prevent the duplicate record from being saved.

--,EnableParallelThreads=1 --Enables sending Data in multiple threads to speedup. This option is ignored when bulk mode enabled (i.e. EnableBulkMode=1)

--,MaxParallelThreads=6 --Maximum threads to spin off to speedup write operation. This option is ignored when bulk mode enabled (i.e. EnableBulkMode=1)

--,TempStorageMode='Disk' --or 'Memory'. Use this option to overcome an OutOfMemory error if you are processing many rows. It controls how temp storage is used for query processing. Available options are 'Disk' or 'Memory' (default is Memory)

)
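When these options come from configuration, the WITH clause can be rendered from a dictionary. This is a minimal sketch with a hypothetical helper name. It follows the conventions visible in the examples above: numeric flags are written bare, text values are single-quoted, and embedded single quotes are doubled.

```python
def render_with_clause(options: dict) -> str:
    """Render a WITH (...) clause from option names and values."""
    parts = []
    for name, value in options.items():
        if isinstance(value, bool):
            rendered = "1" if value else "0"        # flags are written as 1 or 0
        elif isinstance(value, int):
            rendered = str(value)                   # numbers are written bare
        else:
            rendered = "'" + str(value).replace("'", "''") + "'"  # quoted text
        parts.append(f"{name} = {rendered}")
    return "WITH (\n    " + ",\n    ".join(parts) + "\n)"


clause = render_with_clause(
    {"Output": 1, "EnableBulkMode": True, "TempStorageMode": "Disk"}
)
print(clause)
```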

More Examples and Documentation

For additional examples and detailed guidance on using the ZappySys Salesforce ODBC Driver, visit the official documentation:

Conclusion

In this guide, we demonstrated how to connect to Salesforce in SSAS and integrate your data — all without writing complex code.

Ready to get started? Download ODBC PowerPack now, or ping us via chat if you still need help.