Introduction

You can connect to your Apache Derby data in Microsoft Fabric using the high-performance Apache Derby ODBC Driver (powered by ZappySys JDBC-ODBC Bridge Driver). We'll walk you through the entire setup.

Let's get started!

Prerequisites

Before we begin, make sure you meet the following prerequisite: a Java Runtime Environment (JRE) or Java Development Kit (JDK) must be installed on your system.

- Minimum required version: Java 8
- Recommended Java version: Java 21

If your JDBC driver targets a different Java version (e.g., 11 / 17 / 21), install the corresponding or newer Java version.
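If you want to verify the installed Java version from a script, the following is a minimal sketch. It assumes that `java` is on your PATH and that the version line follows the usual `version "..."` pattern printed by common JVM distributions; the function names are hypothetical helpers, not part of any ZappySys tool.

```python
import re
import subprocess

MINIMUM_JAVA_MAJOR = 8  # minimum version stated in this guide


def parse_java_major(version_output: str) -> int:
    """Extract the major Java version from `java -version` output."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        raise ValueError("could not find a version string in the output")
    major = int(match.group(1))
    # Java 8 and earlier report themselves as 1.x (for example "1.8.0_392").
    if major == 1 and match.group(2) is not None:
        major = int(match.group(2))
    return major


def installed_java_major() -> int:
    """Run `java -version` and return the major version (assumes java is on PATH)."""
    result = subprocess.run(
        ["java", "-version"], capture_output=True, text=True, check=True
    )
    # The JVM prints version information to stderr, not stdout.
    return parse_java_major(result.stderr or result.stdout)


def meets_minimum(major: int, minimum: int = MINIMUM_JAVA_MAJOR) -> bool:
    """Return True when the detected major version satisfies the minimum."""
    return major >= minimum
```

Calling `meets_minimum(installed_java_major())` on the machine that hosts the ODBC data source tells you whether the prerequisite is met before you continue.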

Download Apache Derby JDBC driver

To connect to Apache Derby, you need to download its JDBC driver, which we will use in later steps:

- Visit the official Apache Derby website.
- Download the JDBC driver files and save them locally.

Done! Let's proceed to the next step.

Create data source using Apache Derby ODBC Driver

Video instructions

Watch this quick walkthrough to see how to configure your Apache Derby ODBC data source, or scroll down for the step-by-step written guide.

Step-by-step instructions

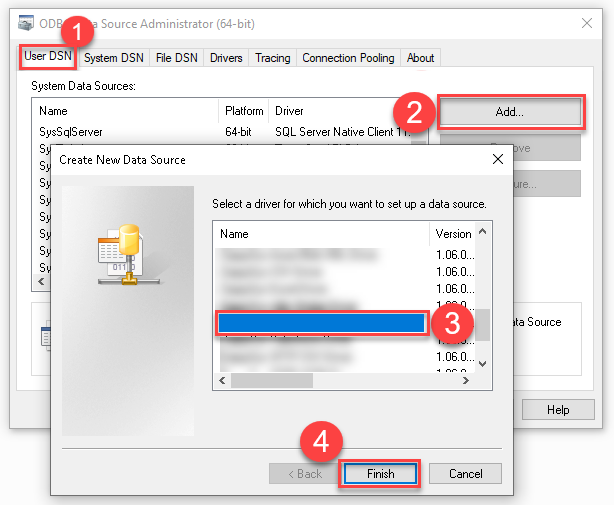

To get data from Apache Derby using Microsoft Fabric, we first need to create an ODBC data source. We will later read this data in Microsoft Fabric. Perform these steps:

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

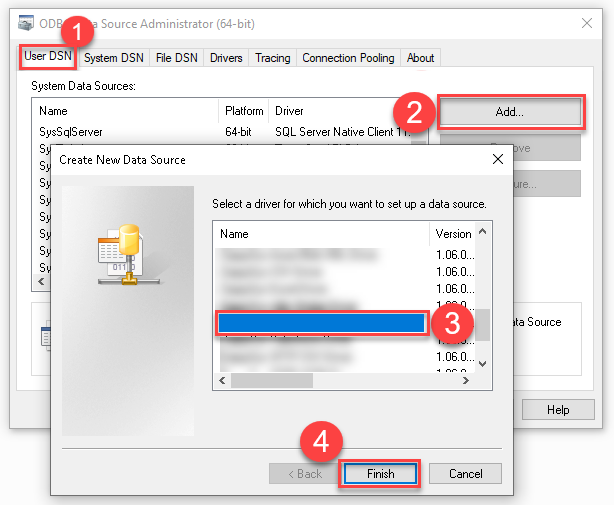

Create a User data source (User DSN) based on the ZappySys JDBC Bridge Driver driver:

ZappySys JDBC Bridge Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this

-

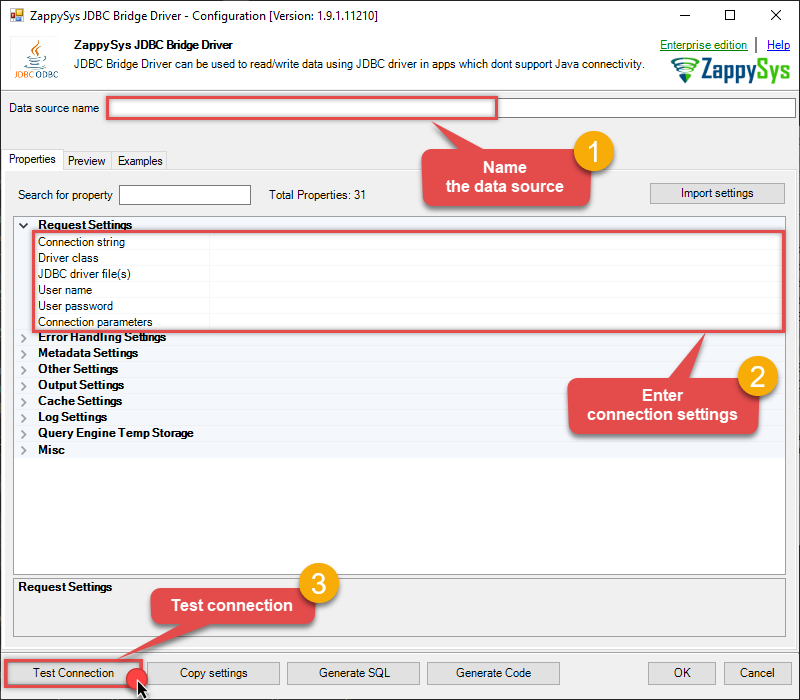

Now, we need to configure the JDBC connection in the new ODBC data source. Simply enter the Connection string, credentials, configure other settings, and then click Test Connection button to test the connection:

ApacheDerbyDSNjdbc:derby://hostname:1527/mydatabaseorg.apache.derby.jdbc.ClientDriverD:\Apache\derby\lib\derbyclient.jaradmin**************[]

For Client/server Environment, use these values when setting parameters:

-

Connection string :jdbc:derby://hostname:1527/mydatabase -

Driver class :org.apache.derby.jdbc.ClientDriver -

JDBC driver file(s) :D:\Apache\derby\lib\derbyclient.jar -

User name :admin -

User password :************** -

Connection parameters :[]

For Embedded Environment, use these values when setting parameters:

-

Connection string :jdbc:derby:c:\apache\derby\databases\mydatabase -

Driver class :org.apache.derby.jdbc.EmbeddedDriver -

JDBC driver file(s) :D:\Apache\derby\lib\derby.jar

-

-

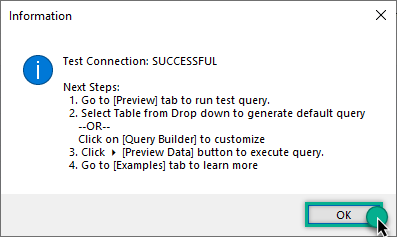

You should see a message saying that connection test is successful:

-

Otherwise, if you are getting an error, start by troubleshooting the JDBC connection with DBeaver in the section below:

-

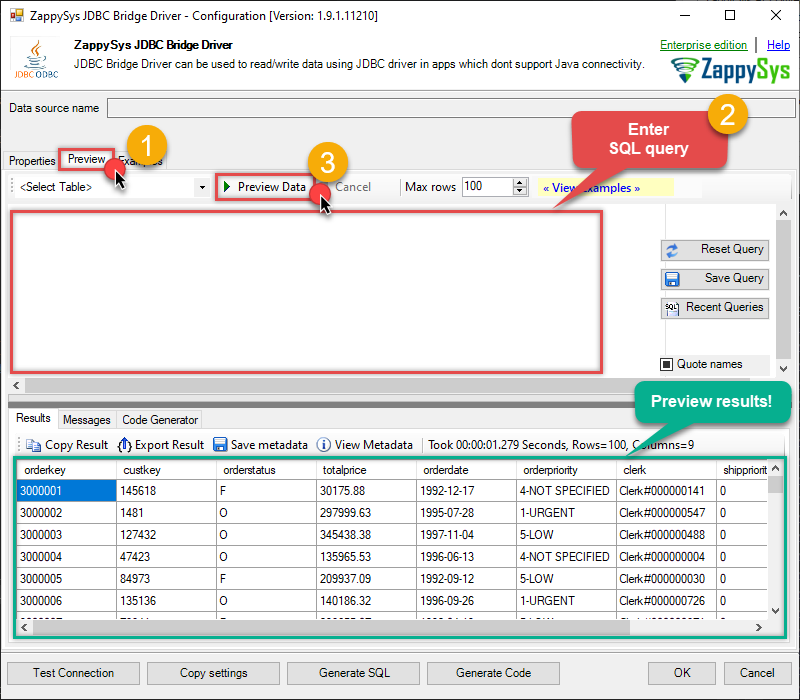

We are at the point where we can preview a SQL query. For more SQL query examples visit JDBC Bridge documentation:

ApacheDerbyDSNSELECT * FROM "APP"."ORDERS"

SELECT * FROM "APP"."ORDERS"You can also click on the <Select Table> dropdown and select a table from the list.The ZappySys JDBC Bridge Driver acts as a transparent intermediary, passing SQL queries directly to the JDBC driver, which then handles the query execution. This means the JDBC-ODBC Bridge Driver simply relays the SQL query without altering it.

Some JDBC drivers don't support

INSERT/UPDATE/DELETEstatements, so you may get an error saying "action is not supported" or a similar one. Please, be aware, this is not the limitation of ZappySys JDBC Bridge Driver, but is a limitation of the specific JDBC driver you are using. -

Click OK to finish creating the data source.
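Once the data source is saved, any ODBC client on the same machine should be able to use it. The sketch below shows one way to run the preview query from Python. It assumes the third-party pyodbc package is installed and that the DSN is named ApacheDerbyDSN as in the examples above; `quote_identifier`, `build_select`, and `preview_rows` are hypothetical helper names introduced only for illustration.

```python
def quote_identifier(name: str) -> str:
    """Quote a SQL identifier with double quotes, doubling any embedded quote."""
    return '"' + name.replace('"', '""') + '"'


def build_select(schema: str, table: str) -> str:
    """Build the preview query used in this guide for a given schema and table."""
    return f"SELECT * FROM {quote_identifier(schema)}.{quote_identifier(table)}"


def preview_rows(dsn: str, sql: str, limit: int = 5):
    """Fetch the first few rows through the ODBC DSN.

    Assumes the third-party pyodbc package is installed (pip install pyodbc).
    """
    import pyodbc  # imported lazily so the helpers above work without it

    connection = pyodbc.connect(f"DSN={dsn}")
    try:
        cursor = connection.cursor()
        cursor.execute(sql)
        return cursor.fetchmany(limit)
    finally:
        connection.close()
```

For example, `preview_rows("ApacheDerbyDSN", build_select("APP", "ORDERS"))` sends the same statement that the preview step uses. Because the bridge relays SQL unaltered, the statement has to be valid Derby SQL.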

Install Microsoft On-premises data gateway (Standard mode)

To access and read Apache Derby data in Microsoft Fabric, you must download and install the Microsoft On-premises data gateway (Standard mode). It acts as a secure bridge between Microsoft Fabric cloud services and your local Apache Derby ODBC data source.

There are two types of On-premises data gateways:

Standard mode:

- Supports Power BI and other Microsoft Cloud services
- Installs as a Windows service
- Starts automatically
- Supports centralized user access control
- Supports the DirectQuery feature
- Ideal for enterprise solutions

Personal mode:

- Supports Power BI services only
- Cannot run as a Windows service
- Stops when you sign out of Windows
- Does not support access control
- Does not support the DirectQuery feature
- Best for individual use and POC solutions

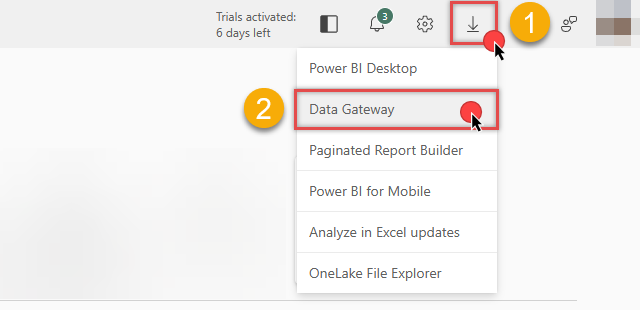

You can download the On-premises data gateway directly from the Microsoft Fabric or Power BI portals:

Link ODBC data source via the gateway

Follow these steps to download, install, and configure the gateway in Standard mode:

-

Download On-premises data gateway (standard mode) and run the installer.

-

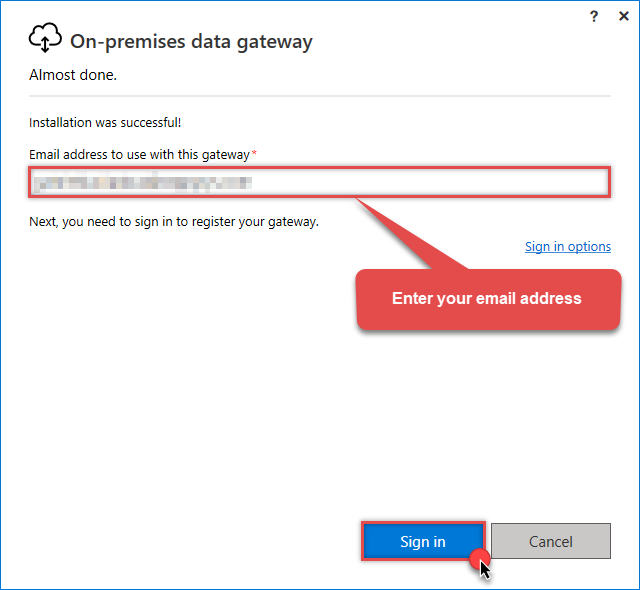

Once the configuration window opens, sign in:

Sign in with the same email address you use for Microsoft Fabric.

Sign in with the same email address you use for Microsoft Fabric. -

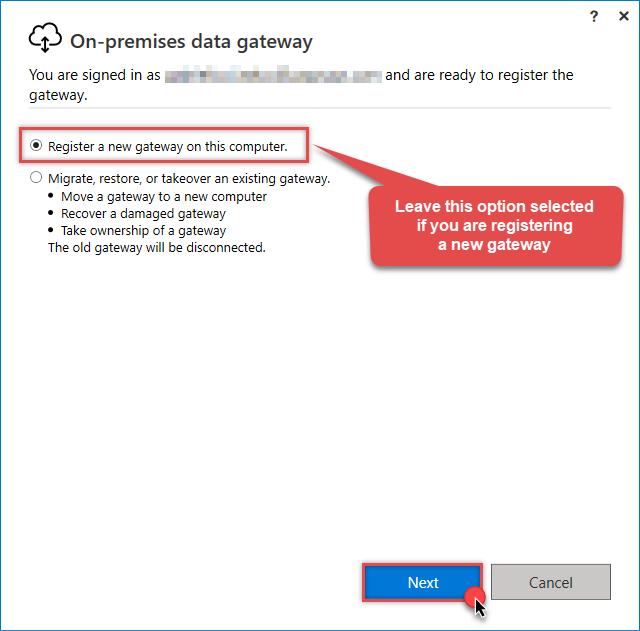

Select Register a new gateway on this computer (or migrate an existing one):

-

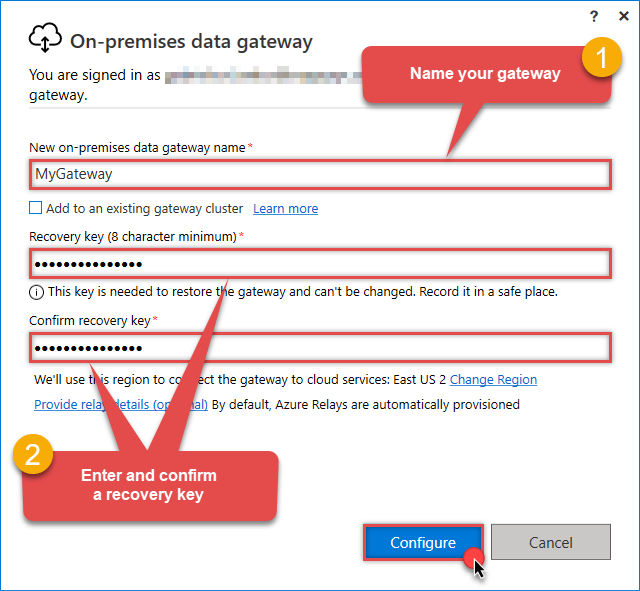

Name your gateway, enter a Recovery key, and click the Configure button:

Save your Recovery Key in a safe place (like a password manager). If you lose it, you cannot restore or migrate this gateway later.

Save your Recovery Key in a safe place (like a password manager). If you lose it, you cannot restore or migrate this gateway later. -

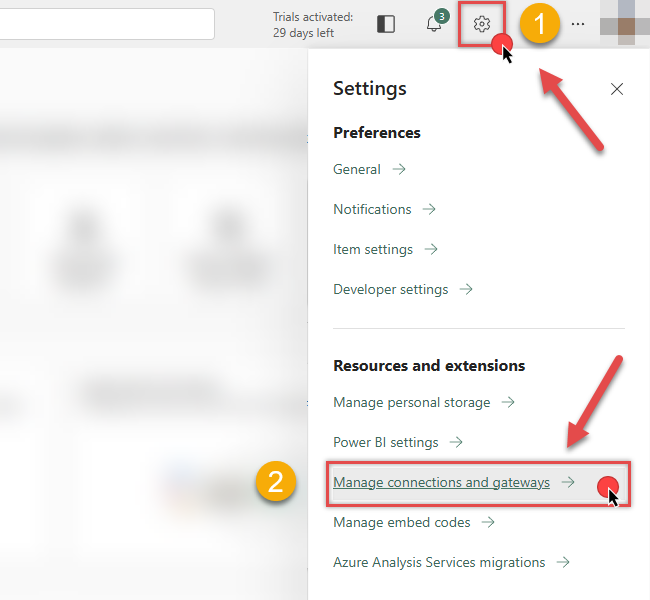

Once Microsoft gateway is installed, check if it registered correctly:

-

Go back to Microsoft Fabric portal

-

Click Gear icon on top-right

-

And then hit Manage connections and gateways menu item

-

-

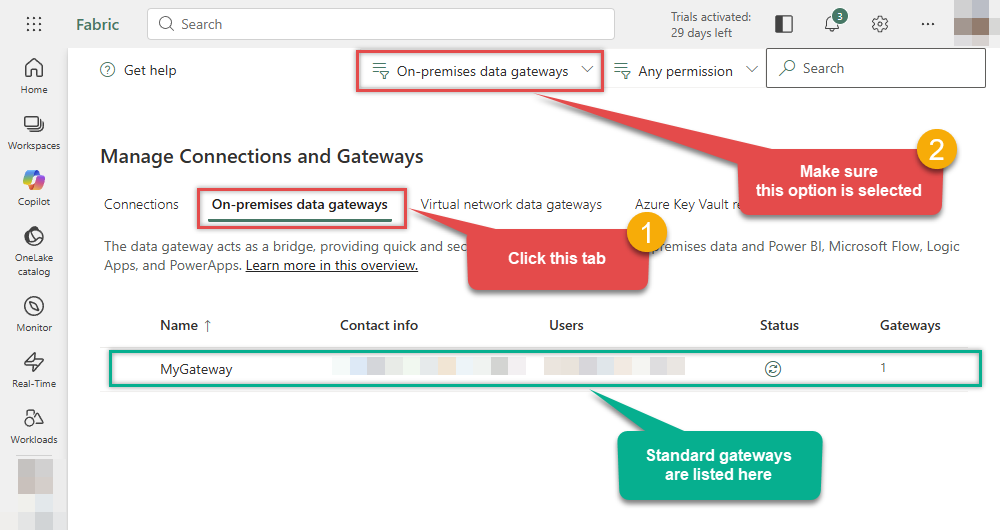

Continue by clicking the On-premises data gateway tab and selecting Standard mode gateways from the dropdown menu:

If your gateway is not listed, the registration may have failed. To resolve this:

- Wait a couple of minutes and refresh Microsoft Fabric portal page

- Restart the machine where On-premises data gateway is installed

- Check firewall settings

-

Success! The gateway is now Online and ready to handle requests.

- Done!

You are now ready to load data into Microsoft Fabric.

Load Apache Derby data into Microsoft Fabric

Now that we have configured the ODBC data source and installed the On-premises data gateway, we can proceed with loading data. You can accomplish this in two ways:

- Copy job: best for simple, high-speed data copying without modification.
- Dataflow Gen2: best if you need to transform, clean, or reshape data before loading.

Let's dive into the steps for both methods.

Use Copy job for high-speed loading

-

Go to the Microsoft Fabric Portal.

-

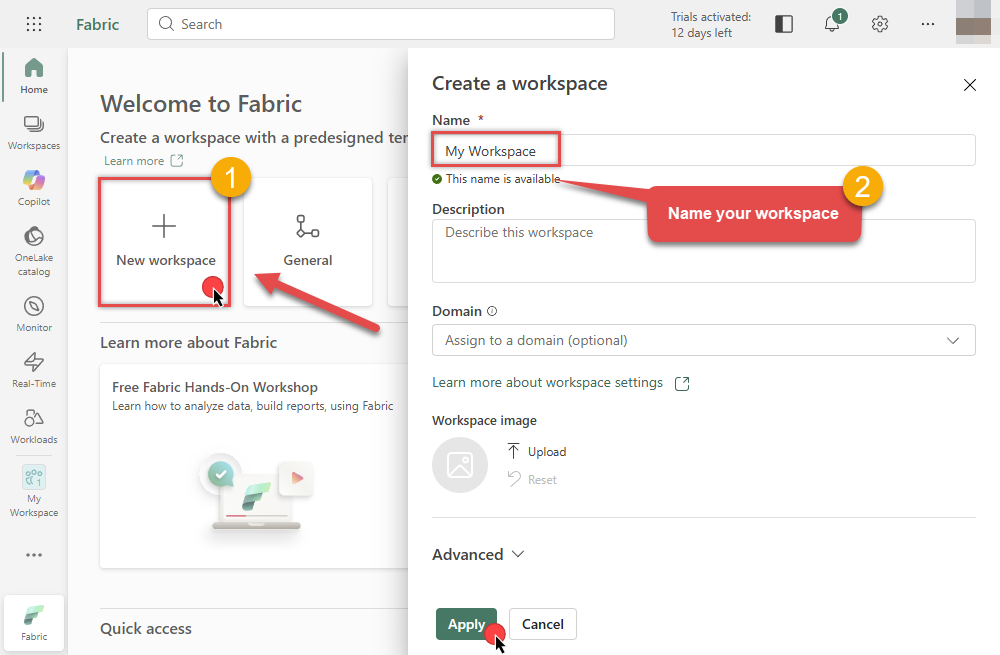

Select an existing Workspace or create a new one by clicking New workspace (ensure you are in the Home section):

-

Inside your workspace, click the New item button in the toolbar to start creating your data pipeline:

-

In the item selection window, choose Copy job to open the data ingestion wizard:

-

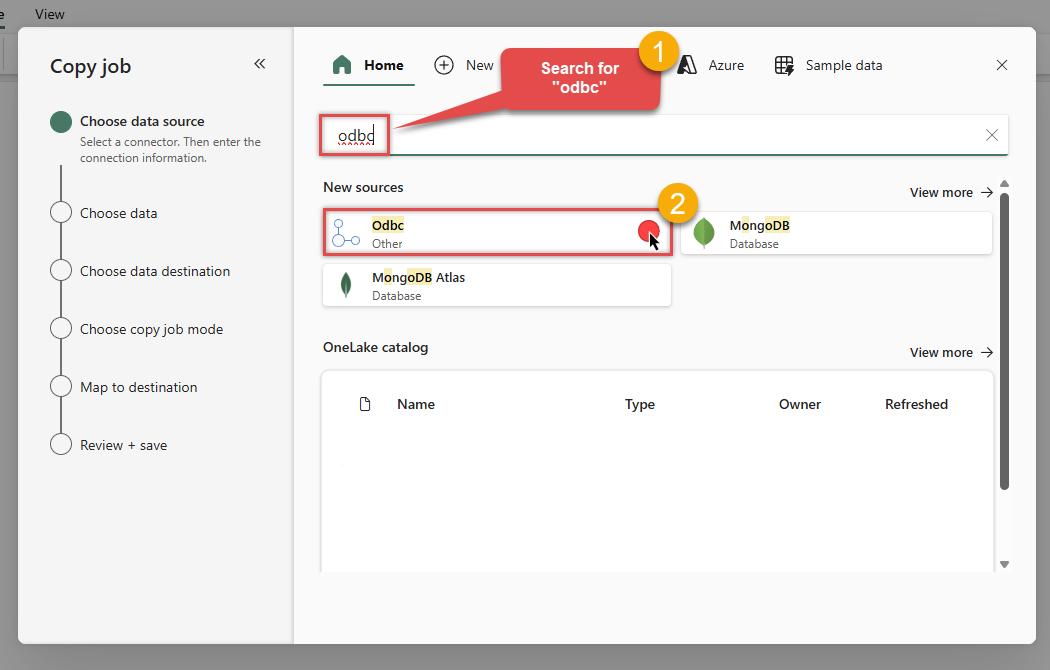

In the Choose data source screen, search for

odbcand select the Odbc source:

-

Then enter your ODBC connection string (

DSN=ApacheDerbyDSN) and selectMyGatewayfrom the Data gateway dropdown we configured in the previous step:DSN=ApacheDerbyDSNDSN=ApacheDerbyDSN

-

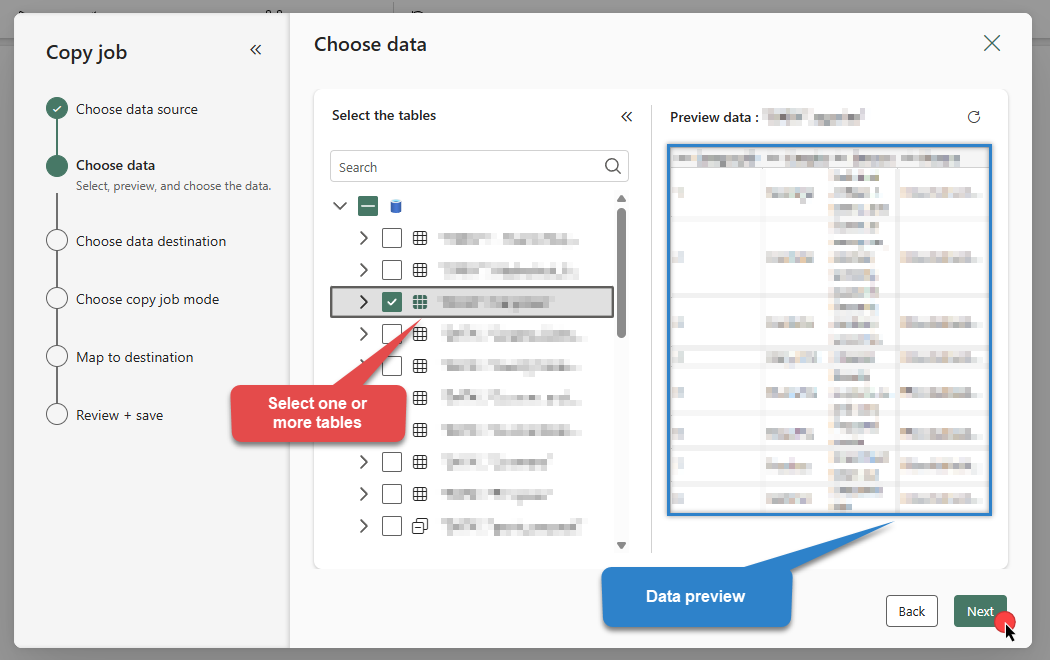

Select the table(s) and preview the data you wish to copy from Apache Derby. Once done, click Next:

DSN=ApacheDerbyDSN

-

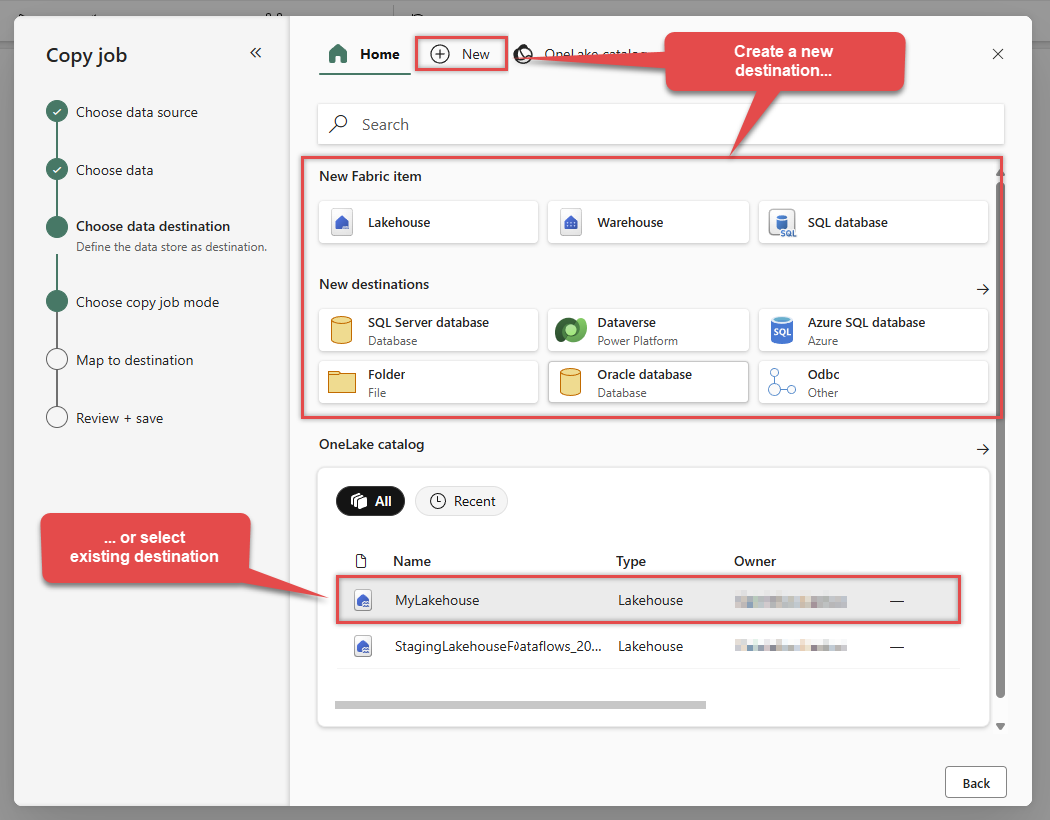

Choose your Data Destination. You can create a New Fabric item (like a Lakehouse or Warehouse) or select an existing one:

In this example, we will use a Lakehouse as the destination.

In this example, we will use a Lakehouse as the destination. -

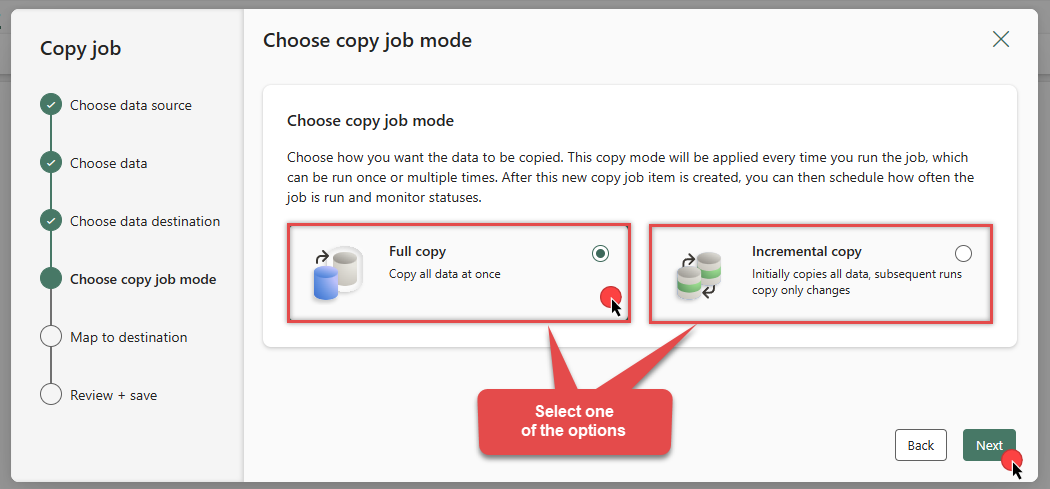

Choose Full copy to load all data, or Incremental copy to load only changed data in subsequent runs:

-

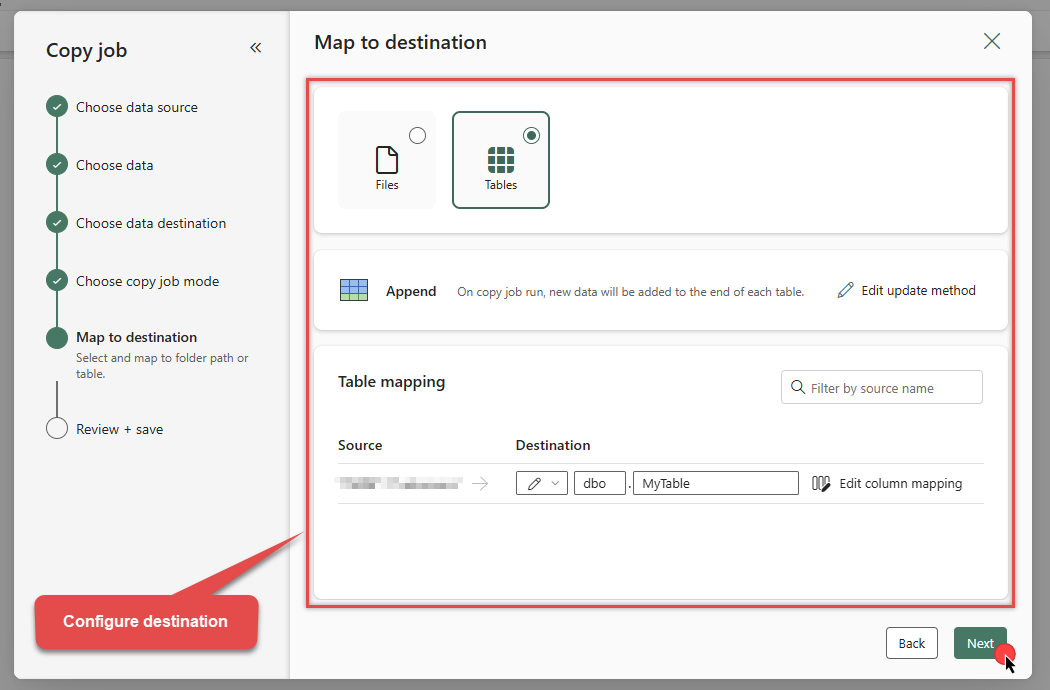

Review the Column and Table mappings section:

-

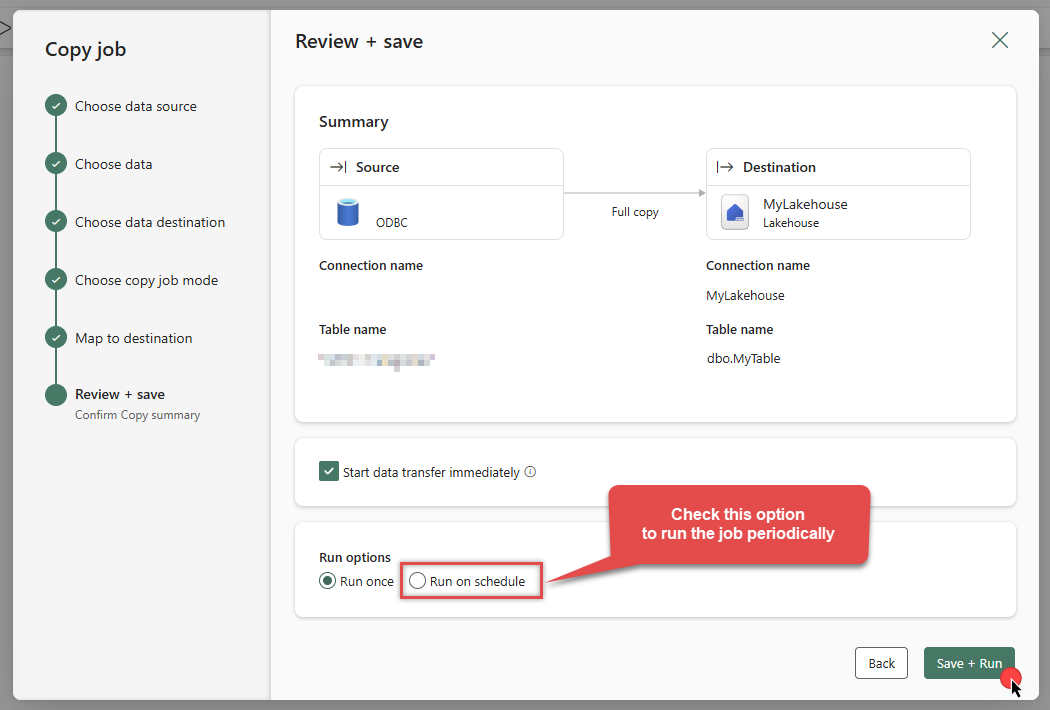

On the summary screen, review your settings. You can optionally enable Run on schedule. Click Save + Run to execute the job:

DSN=ApacheDerbyDSNDSN=ApacheDerbyDSN

-

The job will enter the queue. Monitor the Status column to see the progress:

DSN=ApacheDerbyDSN

-

Wait for the status to change to Succeeded. Your Apache Derby data is now successfully integrated into Microsoft Fabric!

-

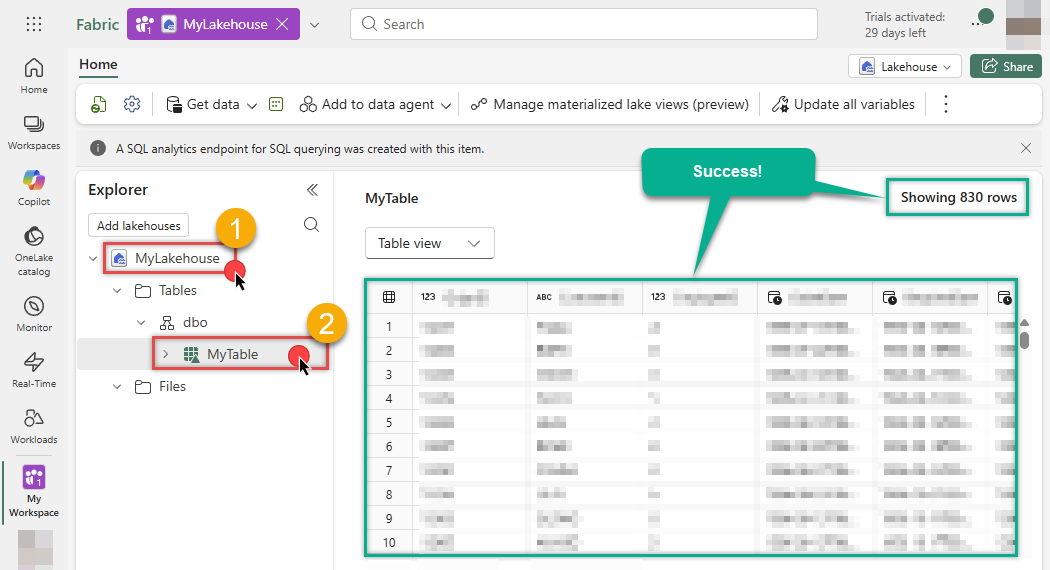

Let's go to our Lakehouse (

MyLakehouse) and verify the data:

-

Success! The data has been loaded.

Use Dataflow for advanced transformation

Another way to load data is by creating a Dataflow Gen2. This approach allows you to perform complex data transformations (ETL) before loading the data into its destination.

Configure Dataflow activity

-

Go to the Microsoft Fabric Portal.

-

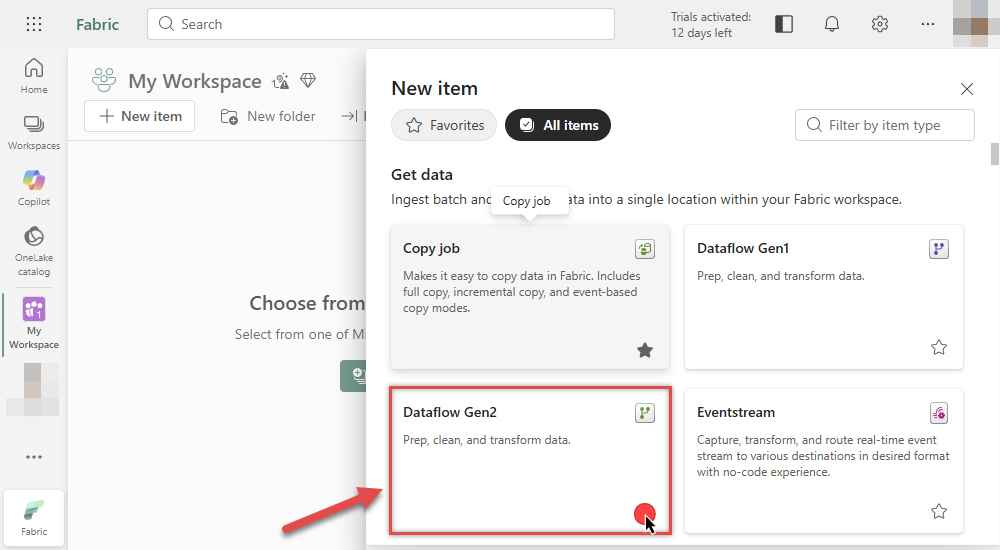

Inside your workspace, click New item and select Dataflow Gen2:

-

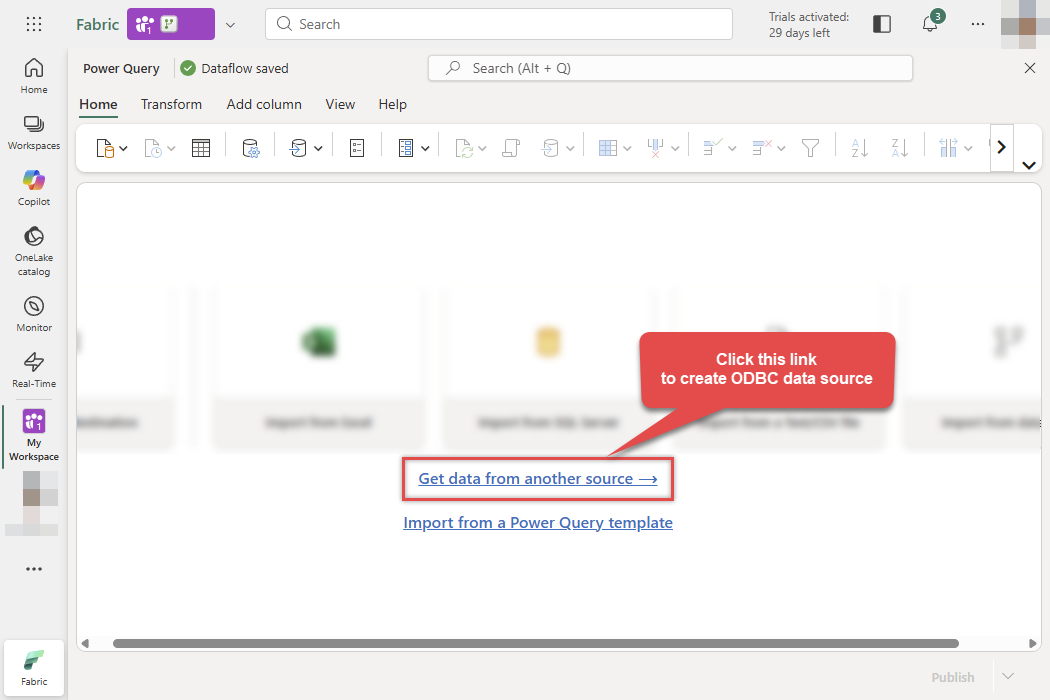

In the Power Query editor, click Get data from another source:

-

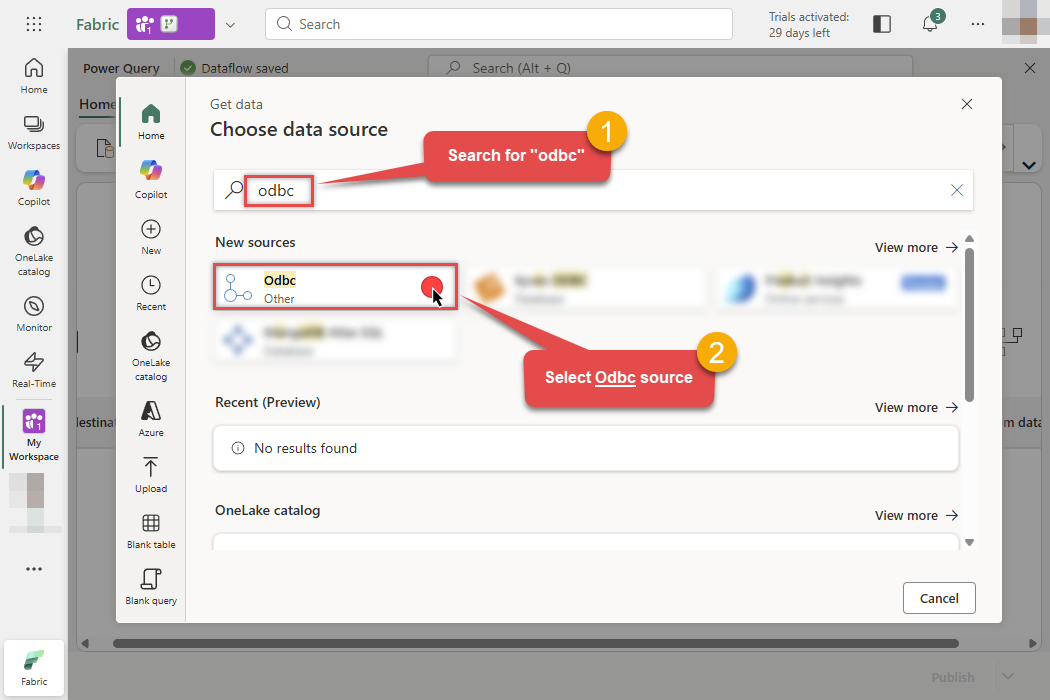

Search for ODBC in the search bar and select the ODBC connector:

-

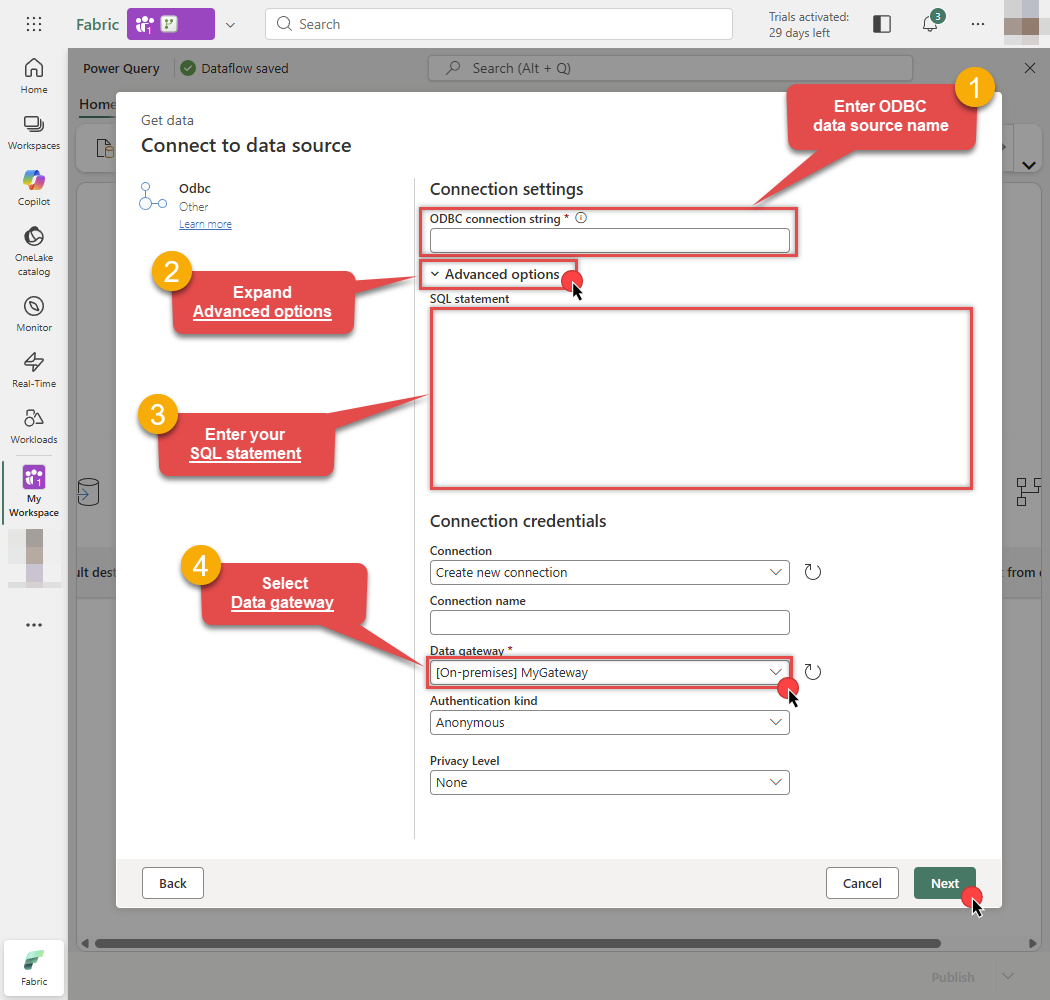

Then in the next step follow these instructions:

-

Enter your ODBC connection string (e.g.,

DSN=ApacheDerbyDSN) - Expand Advanced options

- Enter your SQL statement

- Select your On-premises data gateway

- Finally, click Next:

DSN=ApacheDerbyDSNDSN=ApacheDerbyDSNSELECT * FROM "APP"."ORDERS"

-

Enter your ODBC connection string (e.g.,

-

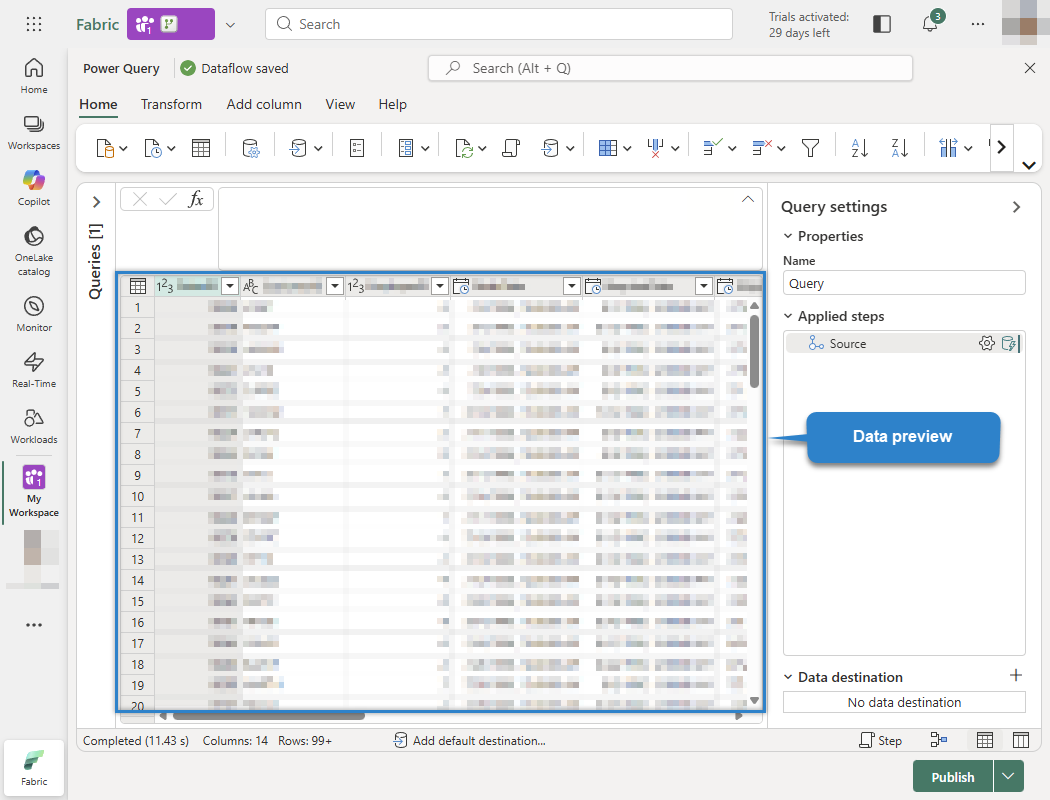

You will see a preview of your Apache Derby data. You can now transform the data if needed (filter rows, rename columns, change types, etc.):

Odbc.Query("DSN=ApacheDerbyDSN", "SELECT * FROM ""APP"".""ORDERS""")

-

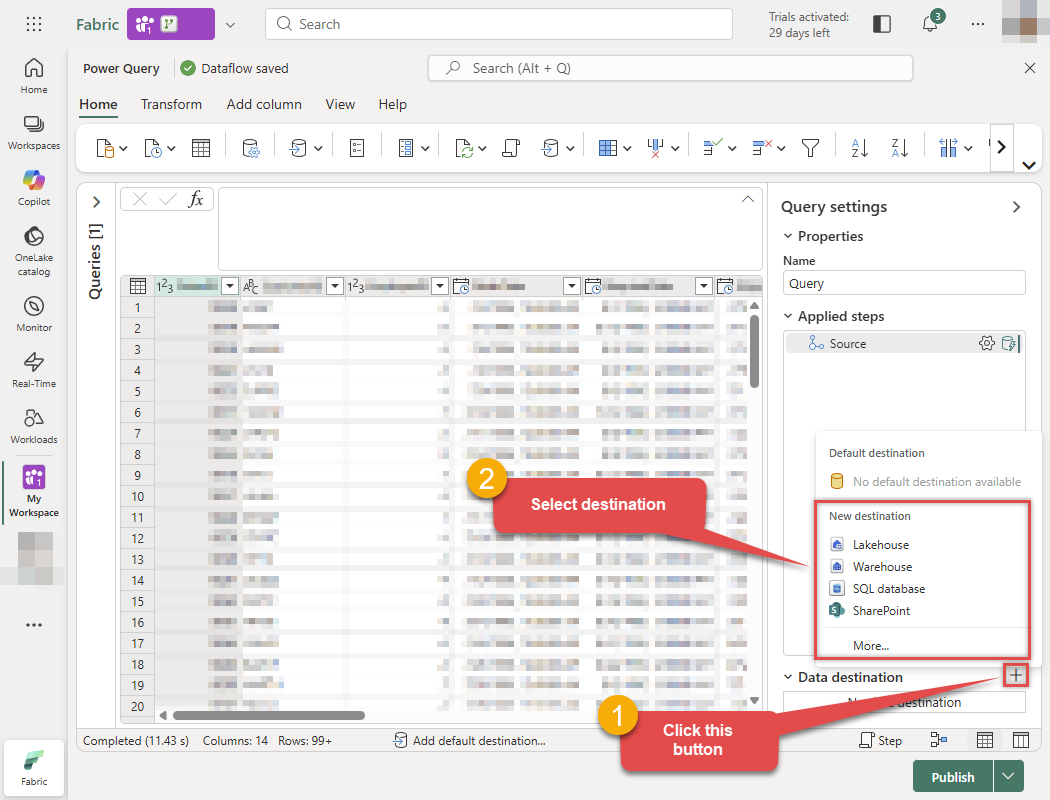

Now, let's send this data to the Lakehouse. Click the + button (Add data destination) at the bottom right and select Lakehouse:

Odbc.Query("DSN=ApacheDerbyDSN", "SELECT * FROM ""APP"".""ORDERS""")

-

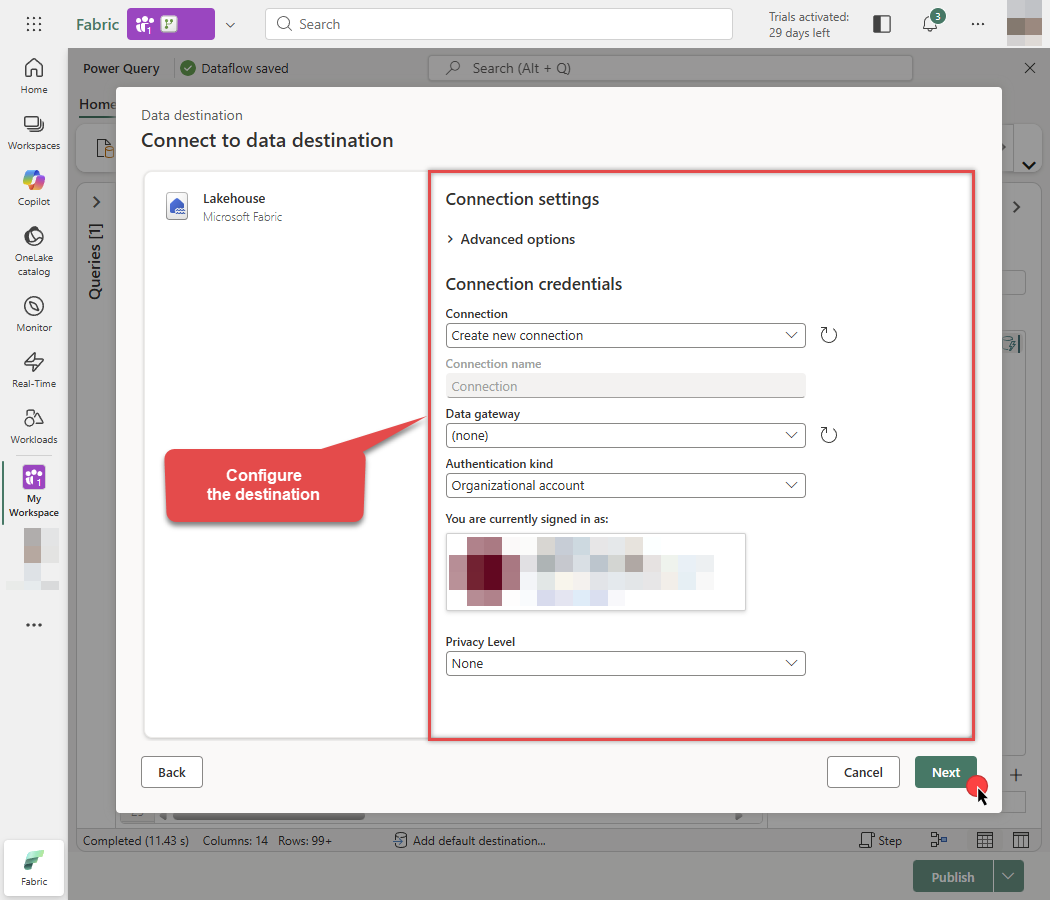

Configure the destination connection settings and click Next:

-

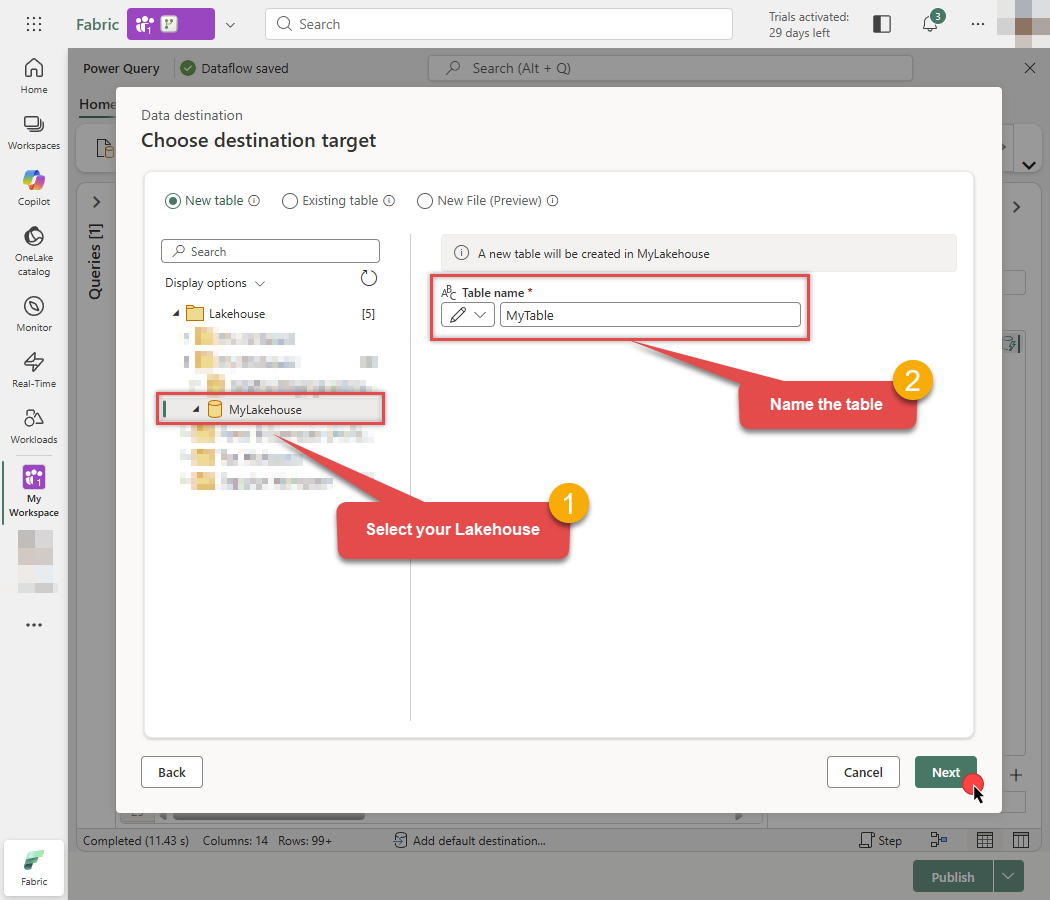

Select your specific Lakehouse, enter the Table name you want to create, and click Next:

-

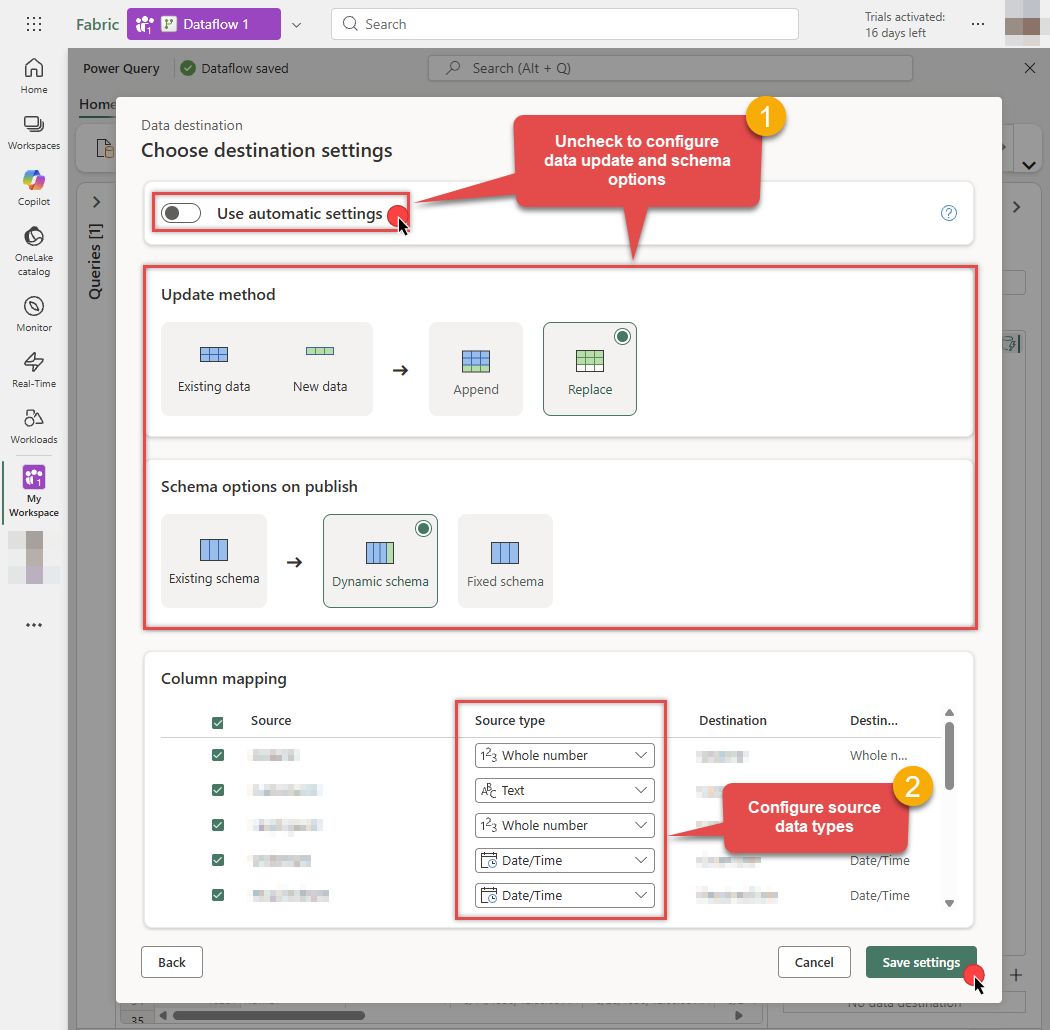

Uncheck Use automatic settings to set data update or schema options manually. Map the columns with proper data types and click Save settings when done:

-

The destination is now set. Click the Publish button to save the Dataflow:

Odbc.Query("DSN=ApacheDerbyDSN", "SELECT * FROM ""APP"".""ORDERS""")

-

Done! You can now start building reports using your new semantic model.
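The Odbc.Query expression shown in the Power Query editor doubles every double quote inside the SQL text, which is how Power Query M escapes quotes in text literals. If you generate such expressions for several tables, a small helper can apply that escaping for you. This is a sketch under stated assumptions: the function names are hypothetical, and it handles only double quotes, not other M escape sequences such as `#(...)`.

```python
def m_text_literal(value: str) -> str:
    """Render a Python string as a Power Query M text literal.

    M escapes a double quote inside a text literal by doubling it.
    """
    return '"' + value.replace('"', '""') + '"'


def odbc_query_expression(connection_string: str, sql: str) -> str:
    """Build an Odbc.Query(...) expression for the given DSN string and SQL."""
    return (
        f"Odbc.Query({m_text_literal(connection_string)}, "
        f"{m_text_literal(sql)})"
    )


# Reproduces the expression used in this guide.
expression = odbc_query_expression(
    "DSN=ApacheDerbyDSN", 'SELECT * FROM "APP"."ORDERS"'
)
print(expression)
```

You can paste the generated expression into the Power Query Advanced editor; the Dataflow itself does not need Python.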

Configure and run Pipeline

Once you have created and published your Dataflow, you can use a Pipeline to orchestrate and run it.

-

Go to the Microsoft Fabric Portal.

-

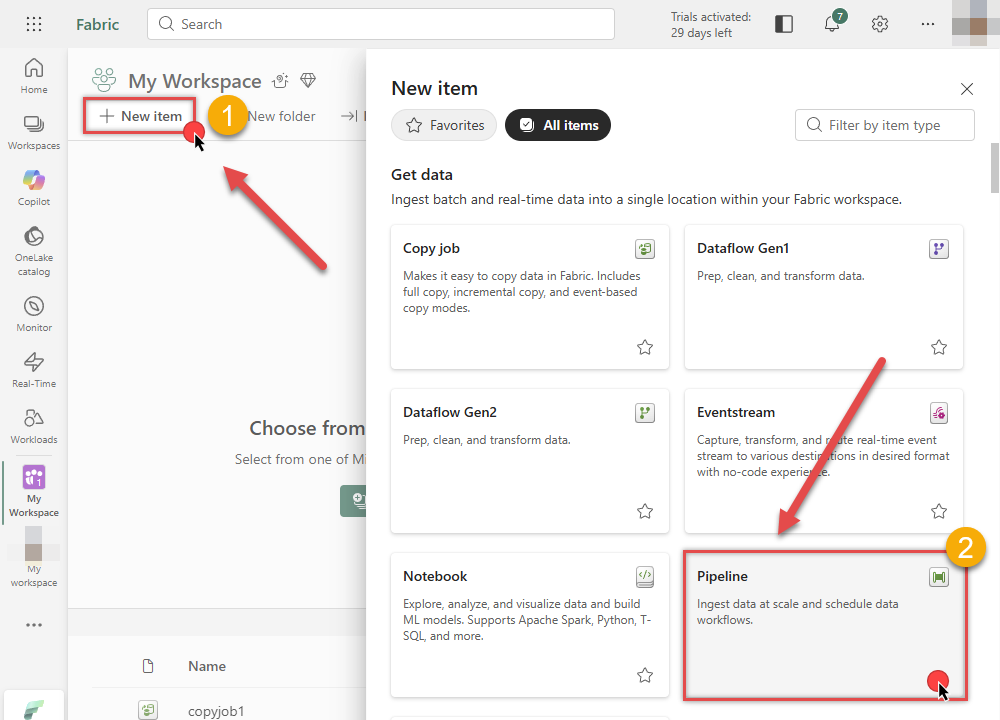

Inside your workspace, click New item and select Data Pipeline to create a new pipeline.

-

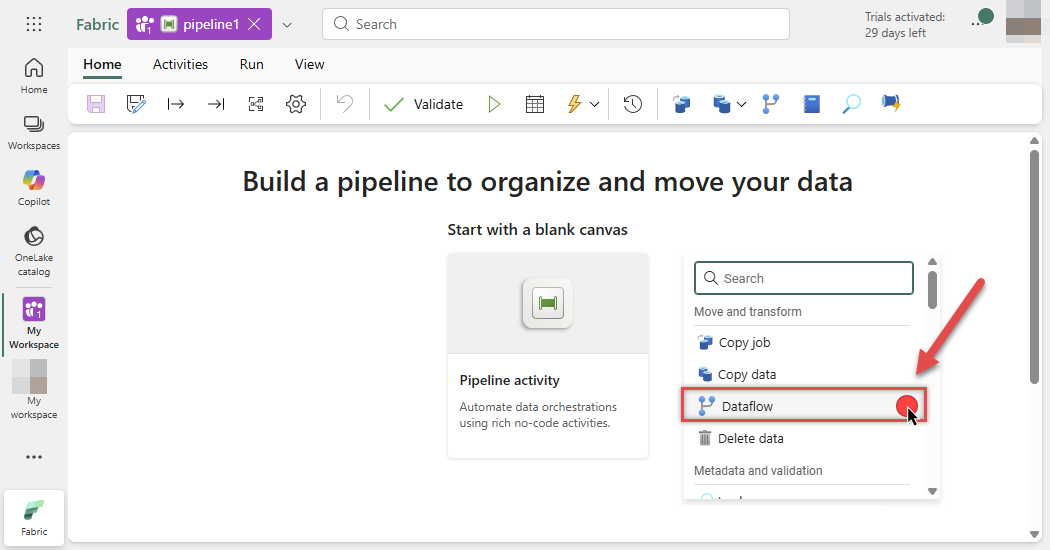

In the pipeline editor, select the Dataflow activity from the toolbar to add it to your canvas:

-

Select the Dataflow activity on the canvas and click the Settings tab. Choose your Workspace and the Dataflow you created in the previous steps:

-

You are now ready to link the Dataflow with other Pipeline activities.

-

Once the Pipeline flow is configured, click the Run button at the top, then click Save and run to execute the pipeline:

-

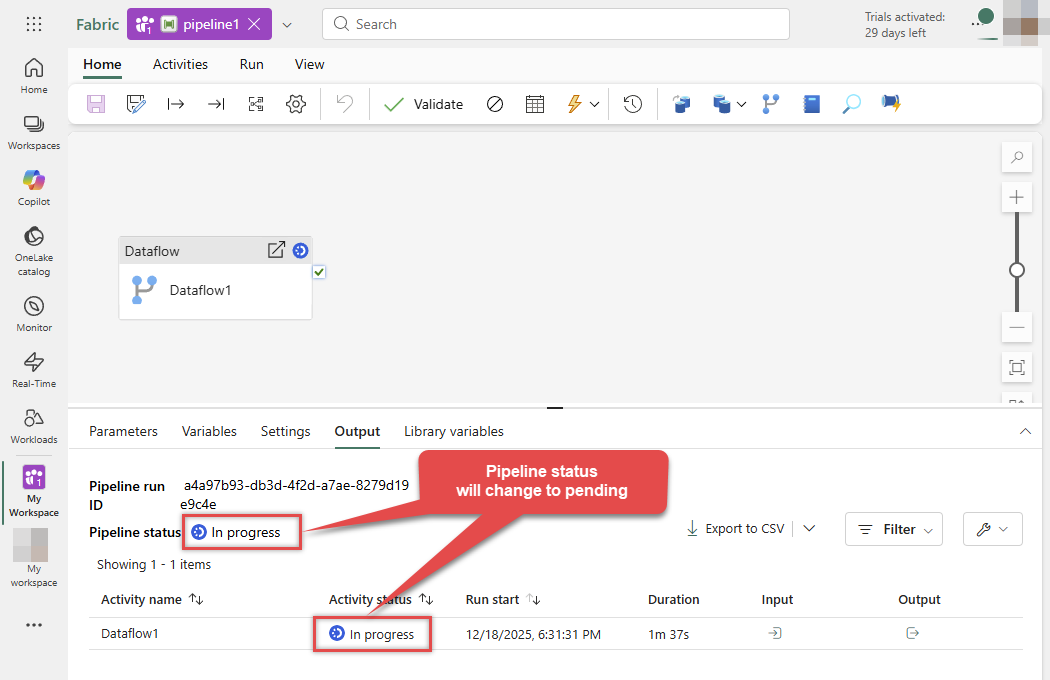

Monitor the Output tab below. The Pipeline status will initially show as In progress:

-

Wait for the process to complete. The status will update to Succeeded, indicating your data has been successfully loaded via the Dataflow:

-

Done! You can now start building reports on your new semantic model.

Troubleshooting

Validating JDBC connection in DBeaver

If you are experiencing JDBC connection issues, start by testing your JDBC driver in a JDBC client tool like DBeaver. If the JDBC connection fails in DBeaver, it naturally will not work in your ODBC data source either.

Create generic JDBC driver

-

Download and install DBeaver Community Edition.

-

Open DBeaver.

-

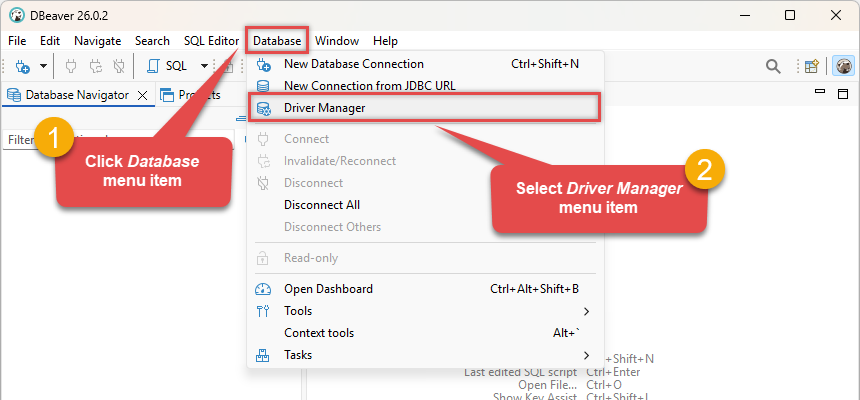

Click Database in the top menu and select Driver Manager:

-

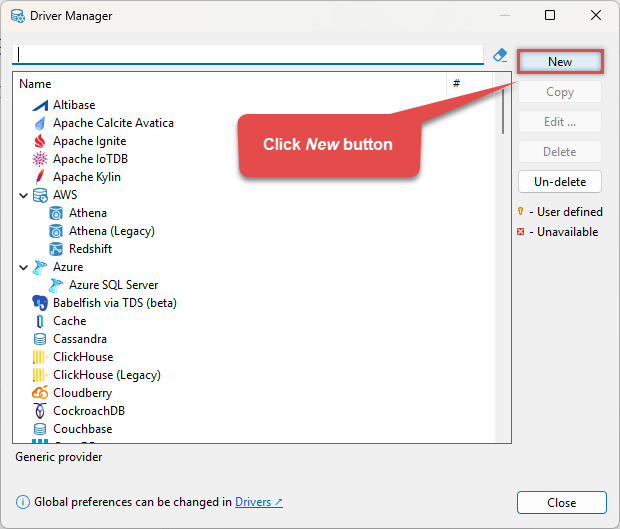

Click the New button to start adding a custom JDBC driver:

-

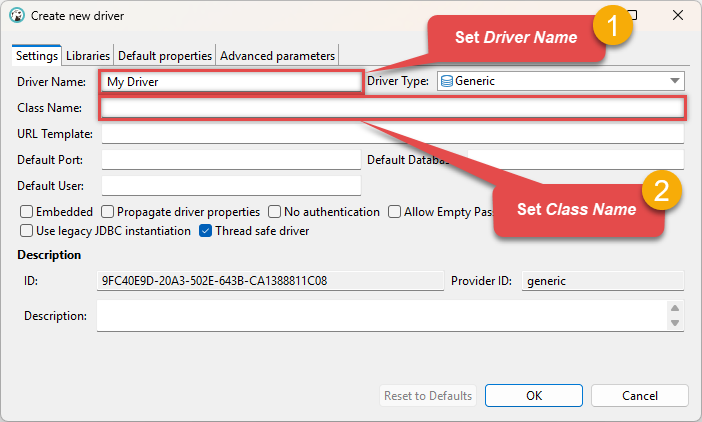

Configure the connection settings by entering the Driver Name and Class Name (

org.apache.derby.jdbc.ClientDriver): org.apache.derby.jdbc.ClientDriver

org.apache.derby.jdbc.ClientDriver -

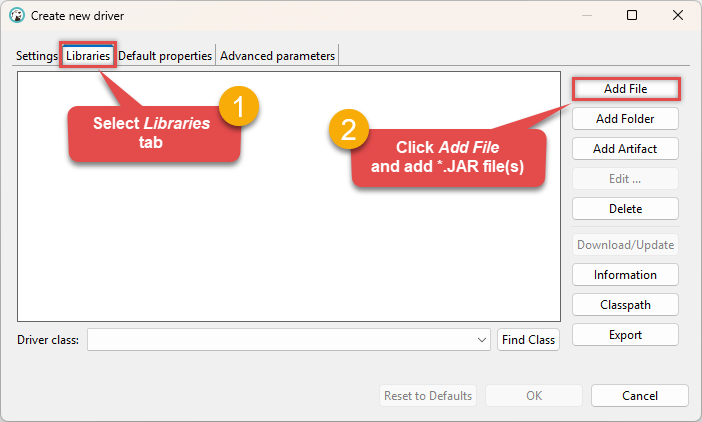

Go to the Libraries tab, click Add File, and select your Apache Derby driver library file(s), e.g.

D:\Apache\derby\lib\derbyclient.jar: Make sure to add all the required library dependencies.

Make sure to add all the required library dependencies. -

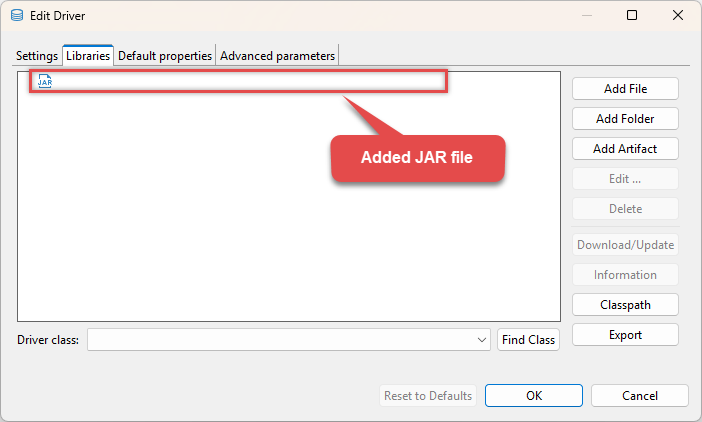

Your JDBC driver

jarlibrary is now added: D:\Apache\derby\lib\derbyclient.jar

D:\Apache\derby\lib\derbyclient.jar -

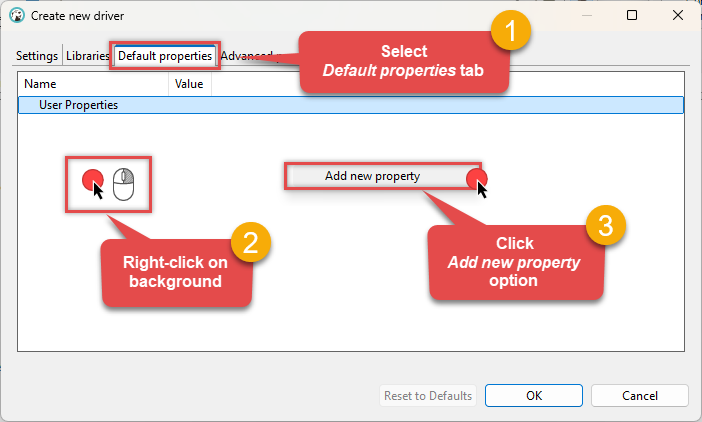

(Optional). If required by your JDBC driver, add additional properties by going to the Default properties tab, Right-clicking on the background, and selecting Add new property:

-

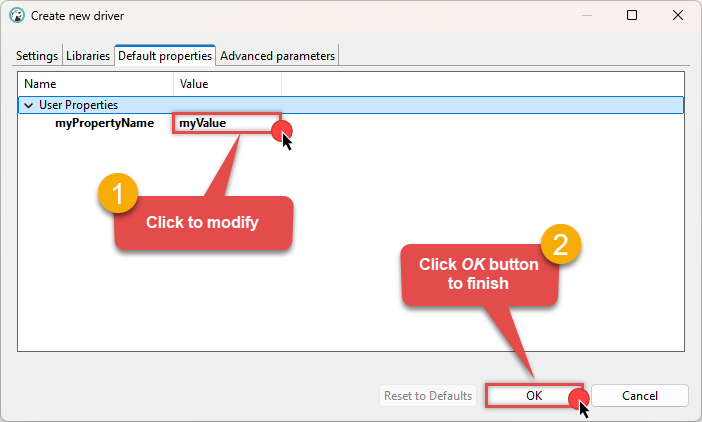

Click on a value to modify it, then click OK to finish:

We are now ready to test the connection. Let's proceed!

Test connection

-

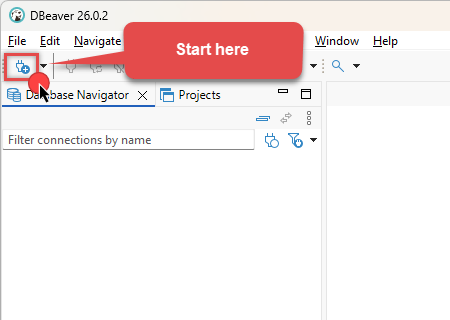

Click the New Database Connection icon in the toolbar:

-

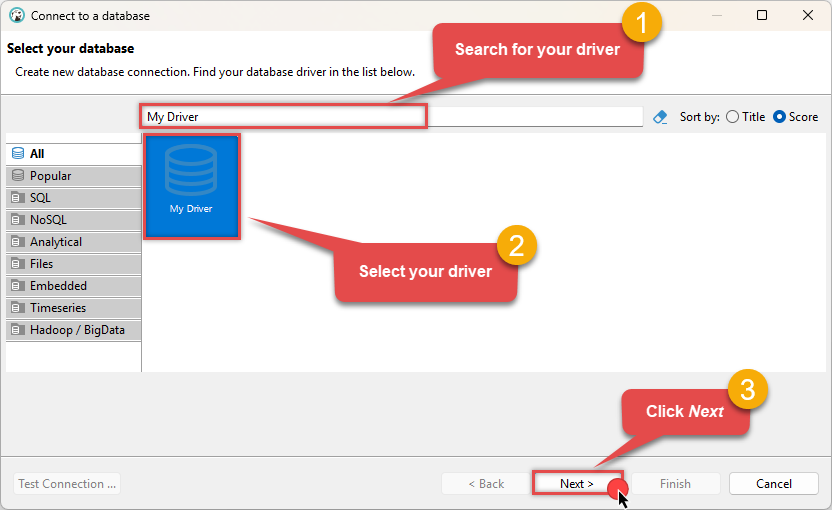

Select your newly created JDBC driver from the list and click Next:

-

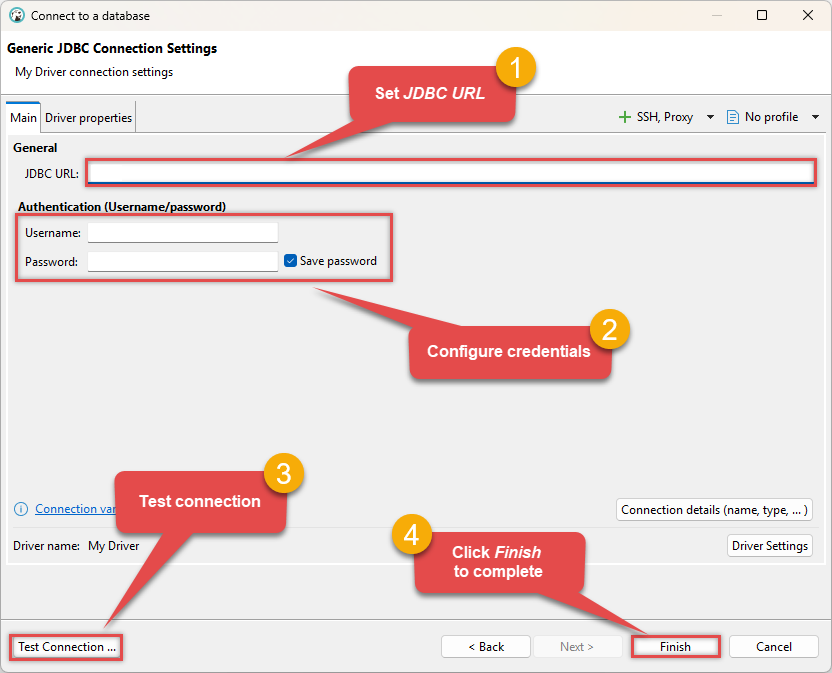

Enter your JDBC URL (e.g.

jdbc:derby://hostname:1527/mydatabase), click Test Connection to verify it works, and then click Finish: jdbc:derby://hostname:1527/mydatabase

jdbc:derby://hostname:1527/mydatabase -

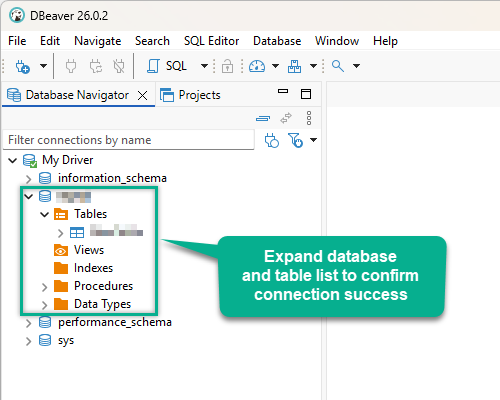

Finally, expand the database and table list to check the connection status:

There are two possible outcomes from the connection test:

- Reason:The JDBC driver works perfectly, meaning the issue lies within your ODBC data source configuration.

- Action:Double-check your ODBC data source configuration and ensure it matches the settings that successfully connected in DBeaver.

-

Reason:There is likely an issue with the JDBC driver configuration or the connection details provided.

-

Action:Contact the ZappySys Support Team for assistance.
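For the client/server URL form (hostname and port 1527 in the examples above), it can also help to rule out basic network problems before looking at driver settings. The following is a minimal sketch of a TCP reachability check; `can_reach` is a hypothetical helper, and a successful result only shows that something is listening on that port, not that it is a Derby Network Server. It does not apply to the embedded URL form, which opens database files directly.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

For example, `can_reach("hostname", 1527)` returning False points to a firewall, a wrong host name, or a server that is not running, none of which the JDBC or ODBC configuration can fix.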

If you are still experiencing issues or need further help, please contact us via the Chat with an Expert option.

Frequent issues and solutions

Below are some useful community articles to help you troubleshoot and configure the ZappySys JDBC Bridge Driver:

- How to combine multiple JAR files
  Learn how to merge multiple .jar dependencies when your JDBC driver requires more than one file.

- How to fix JBR error: “Data lake is not available / Unable to verify trust for server certificate chain”
  Resolve SSL or certificate validation issues encountered during JDBC connections.

- System Exception: “Java is not installed or not accessible”
  Fix Java path or environment issues that prevent the JDBC Bridge from launching Java.

- JDBC Bridge Driver disconnect from Java host error
  Troubleshoot unexpected disconnection problems between your client application and the Java process.

- Error: Could not open jvm.cfg while using JDBC Bridge Driver
  Resolve JVM configuration path errors during driver initialization.

- How to enable JDBC Bridge Driver logging
  Enable detailed driver logging for better visibility during troubleshooting.

- How to pass JDBC connection parameters (not by URL)
  Learn how to specify connection properties programmatically instead of embedding them in the JDBC URL.

- How to fix JDBC Bridge error: “No connection could be made because the target machine actively refused it”
  Troubleshoot firewall or local port binding issues preventing communication with the Java host.

- How to use JDBC Bridge options (System Property for Java command line, e.g., classpath, proxy)
  Configure custom Java options like classpath and proxy using JDBC Bridge system properties.

Optional: Centralized data access via ZappySys Data Gateway

In some situations, you may need to provide Apache Derby data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

- Centralized data access: the data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies management.

- Centralized access control: since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | | |
| Central access control | | |
| More flexible cost | | |

To achieve this, you must first create a data source in the Data Gateway (server-side) and then create an ODBC data source in Microsoft Fabric (client-side) to connect to it.

Let's get going!

Create Apache Derby data source in the gateway

In this section we will create a data source for Apache Derby in the Data Gateway. Let's follow these steps to accomplish that:

-

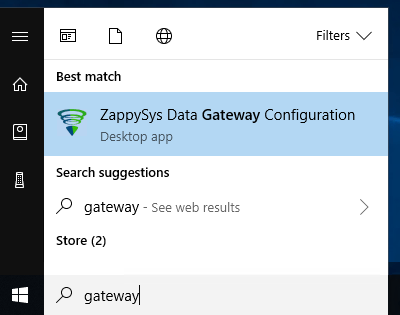

Search for

gatewayin the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

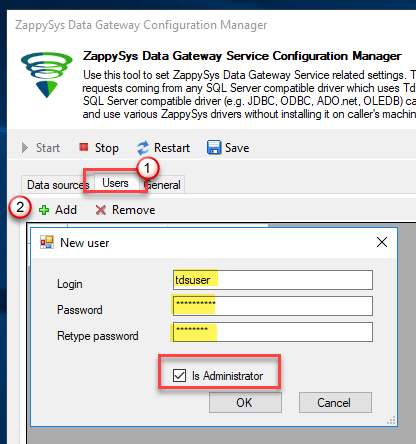

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

-

In the Login field enter a username, e.g.,

john - Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

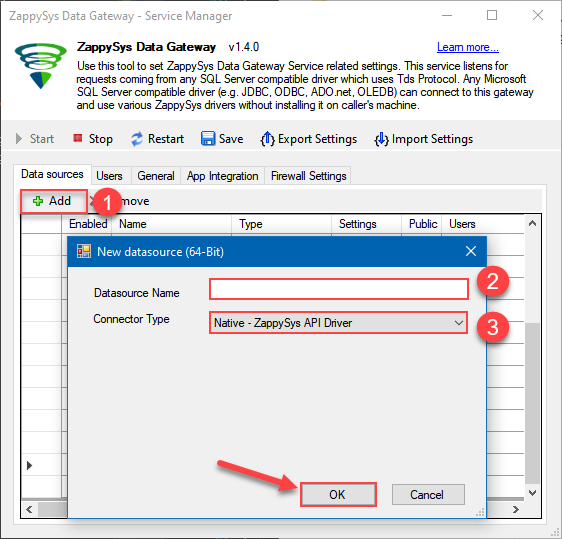

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys JDBC Bridge Driver

- Finally, click OK

ApacheDerbyDSNZappySys JDBC Bridge Driver

-

When the ZappySys JDBC Bridge Driver configuration window opens, go back to ODBC Data Source Administrator where you already have the Apache Derby ODBC data source created and configured, and follow these steps on how to Import data source configuration into the Gateway:

-

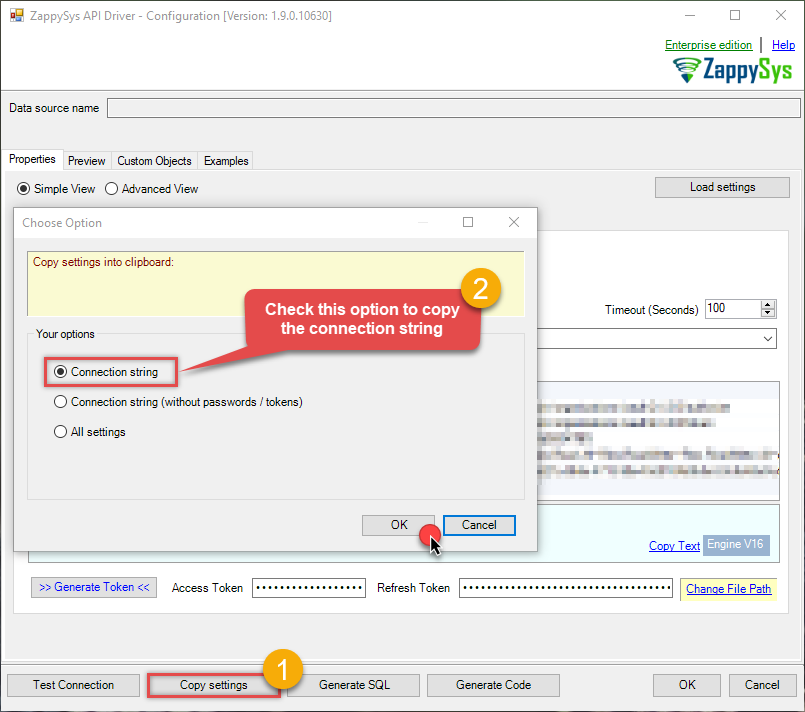

Open ODBC data source configuration and click Copy settings:

ZappySys JDBC Bridge Driver - Apache DerbyRead and write Apache Derby data effortlessly. Query, sync, and manage databases and tables — almost no coding required.ApacheDerbyDSN

ZappySys JDBC Bridge Driver - Apache DerbyRead and write Apache Derby data effortlessly. Query, sync, and manage databases and tables — almost no coding required.ApacheDerbyDSN

-

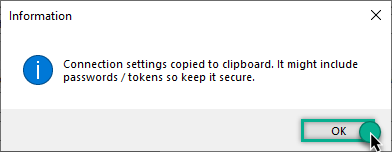

The window opens, telling us the connection string was successfully copied to the clipboard:

-

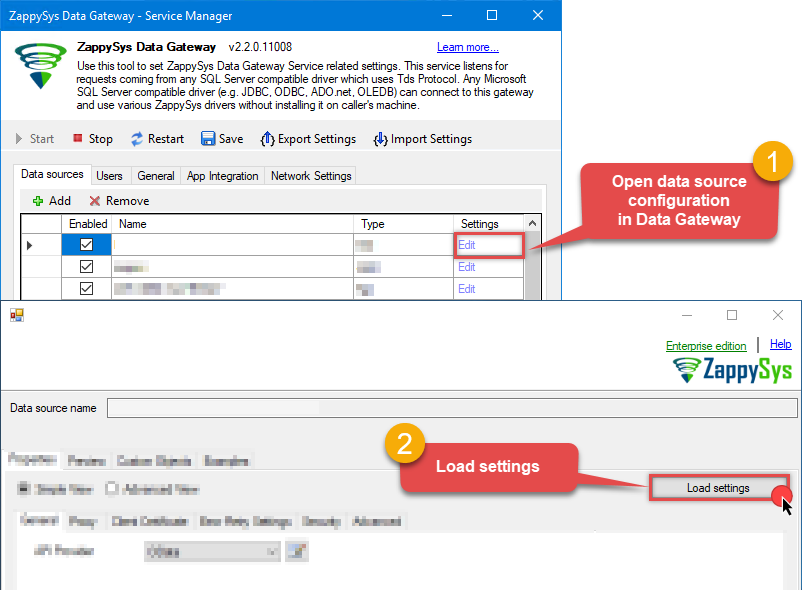

Then go to Data Gateway configuration and in data source configuration window click Load settings:

ApacheDerbyDSN ZappySys JDBC Bridge Driver - Configuration [Version: 2.0.1.10418]ZappySys JDBC Bridge Driver - Apache DerbyRead and write Apache Derby data effortlessly. Query, sync, and manage databases and tables — almost no coding required.ApacheDerbyDSN

ZappySys JDBC Bridge Driver - Configuration [Version: 2.0.1.10418]ZappySys JDBC Bridge Driver - Apache DerbyRead and write Apache Derby data effortlessly. Query, sync, and manage databases and tables — almost no coding required.ApacheDerbyDSN

-

Once a window opens, just paste the settings by pressing

CTRL+Vor by clicking right mouse button and then Paste option.

-

Open ODBC data source configuration and click Copy settings:

-

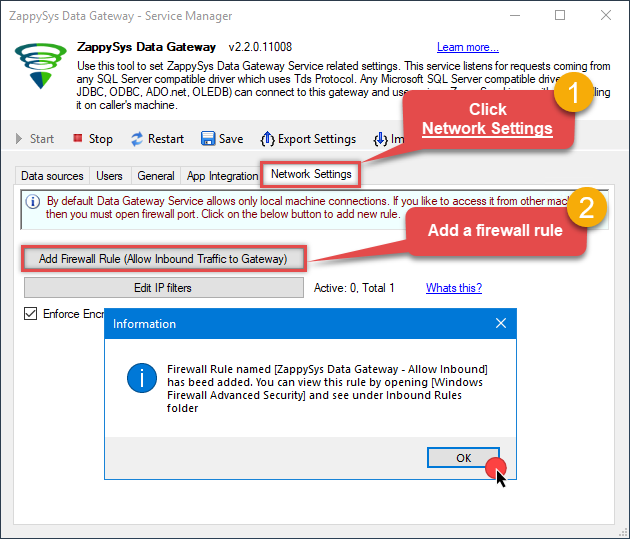

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

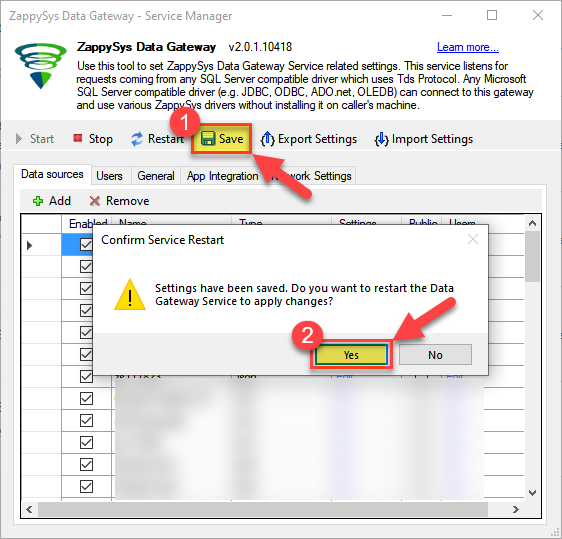

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Create ODBC data source to connect to the gateway

In this part we will create an ODBC data source to connect to the ZappySys Data Gateway from Microsoft Fabric. To achieve that, let's perform these steps:

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

Create a User data source (User DSN) based on the ODBC Driver 17 for SQL Server driver:

ODBC Driver 17 for SQL Server If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version.

If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version. -

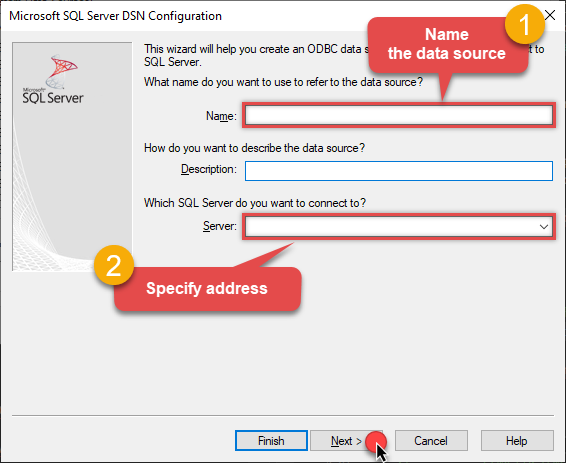

Then set a Name for the data source (e.g.

Gateway) and the address of the Data Gateway:ZappySysGatewayDSNlocalhost,5000 Make sure you separate the hostname and port with a comma, e.g.

Make sure you separate the hostname and port with a comma, e.g.localhost,5000. -

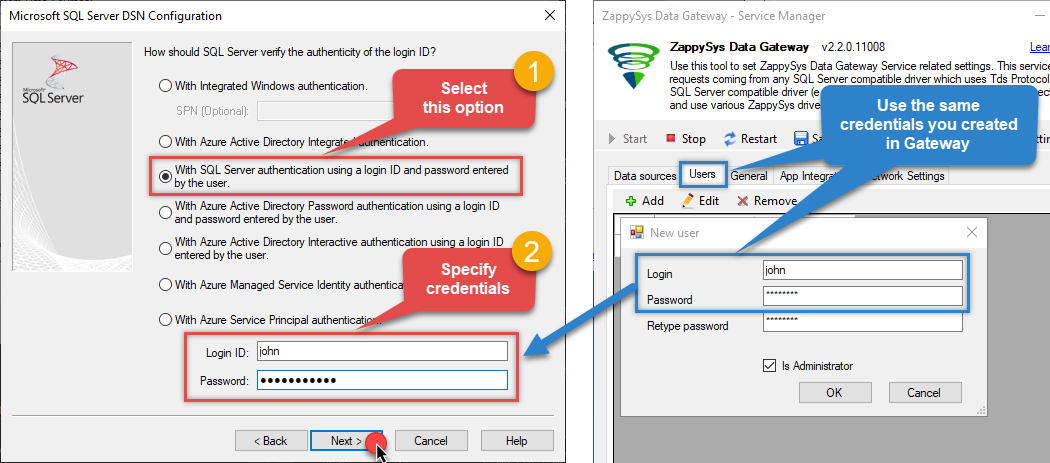

Proceed with the authentication part:

- Select SQL Server authentication

-

In the Login ID field enter the user name you created in the Data Gateway, e.g.,

john - Set Password to the one you configured in the Data Gateway

-

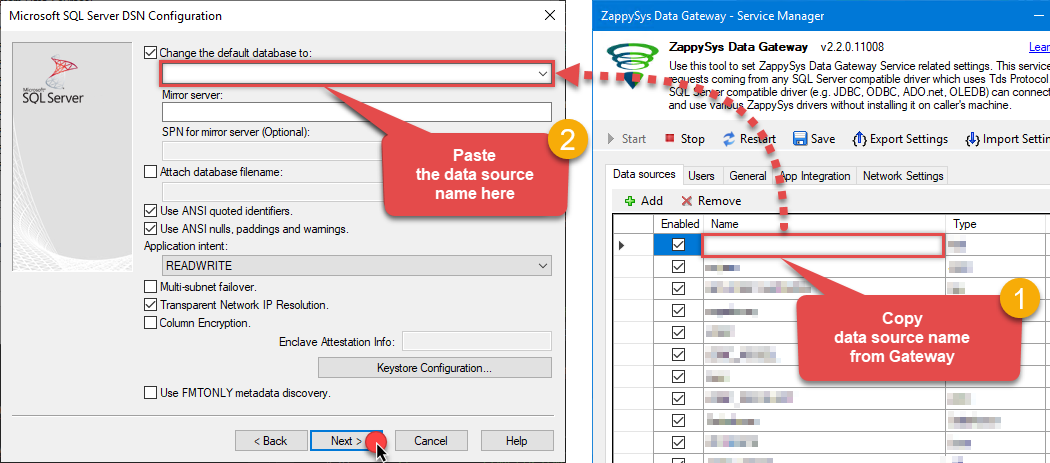

Then set the default database property to

ApacheDerbyDSN(the one we used in the Data Gateway):ApacheDerbyDSNApacheDerbyDSN Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step).

Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step). -

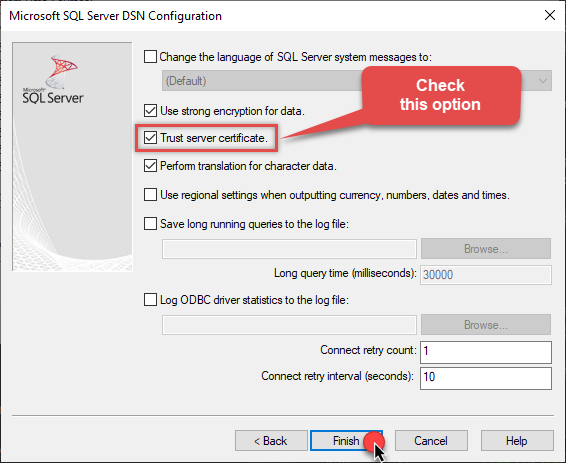

Continue by checking the Trust server certificate option:

-

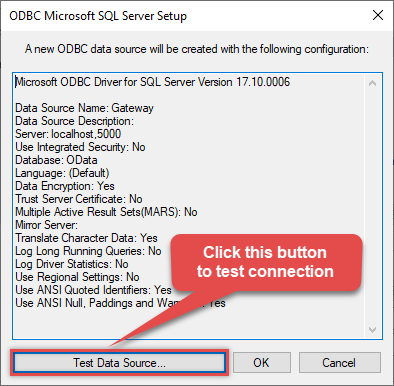

Once you do that, test the connection:

-

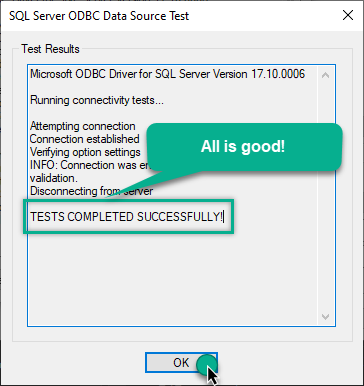

If the connection is successful, everything is good:

-

Done!

We are ready to move to the final step. Let's do it!
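The DSN settings above can also be written as a DSN-less ODBC connection string, which is handy when you test the gateway from a script instead of the ODBC administrator. The sketch below assembles one from the same values. It is an illustration under stated assumptions: the function names are hypothetical, the keywords are the standard ones accepted by ODBC Driver 17 for SQL Server, and this guide itself uses the DSN form in Microsoft Fabric.

```python
def odbc_value(value: str) -> str:
    """Brace-quote a value containing characters special to ODBC connection strings."""
    if any(ch in value for ch in ";{}"):
        return "{" + value.replace("}", "}}") + "}"
    return value


def gateway_connection_string(
    host: str,
    port: int,
    database: str,
    user: str,
    password: str,
    driver: str = "ODBC Driver 17 for SQL Server",
) -> str:
    """Assemble a DSN-less connection string for the ZappySys Data Gateway."""
    parts = {
        "DRIVER": "{" + driver + "}",
        # Host and port are separated by a comma, not a colon.
        "SERVER": f"{host},{port}",
        # The gateway data source name goes in the default database.
        "DATABASE": odbc_value(database),
        "UID": odbc_value(user),
        "PWD": odbc_value(password),
        "TrustServerCertificate": "yes",
    }
    return ";".join(f"{key}={value}" for key, value in parts.items())
```

For example, `gateway_connection_string("localhost", 5000, "ApacheDerbyDSN", "john", "secret")` mirrors the values entered in the steps above, with a placeholder password.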

Access data in Microsoft Fabric via the gateway

Finally, we are ready to read data from Apache Derby in Microsoft Fabric via the Data Gateway. Follow these final steps:

- Go back to Microsoft Fabric.

- Then, go to your Copy job or Dataflow and start configuring your ODBC data source (as you did earlier in this article).

- In the ODBC configuration window, configure these fields:

  - Enter your ODBC connection string (DSN format), for example: DSN=ZappySysGatewayDSN
  - Expand Advanced options and set the SQL statement, for example: SELECT * FROM "APP"."ORDERS"
  - Select MyGateway, the gateway you configured earlier, from the Data gateway dropdown
  - Select Basic from the Authentication kind dropdown
  - Enter the Username (e.g., john) and Password that you configured in ZappySys Data Gateway

- Read the data the same way we discussed at the beginning of this article.

That's it! Now you can connect to Apache Derby data in Microsoft Fabric via the ZappySys Data Gateway.

Conclusion

In this guide, we demonstrated how to connect to Apache Derby in Microsoft Fabric and integrate your data, all without writing complex code. It's worth noting that the ZappySys JDBC Bridge Driver allows you to connect not only to Apache Derby, but to any data source that provides a JDBC driver (just use a different JDBC driver and configure it appropriately).

Ready to get started? Download ODBC PowerPack now or ping us via chat if you still need help.