FTP/SFTP JSON File Connector for PowerShell

FTP/SFTP JSON File Connector can be used to read JSON files stored on FTP sites (classic FTP, SFTP, or FTPS). With it you can easily read FTP/SFTP JSON File data. It supports the latest security standards and is optimized for large data files. It also supports reading compressed files (e.g. GZip/Zip).

In this article you will learn how to quickly and efficiently integrate FTP/SFTP JSON File data in PowerShell without coding. We will use the high-performance FTP/SFTP JSON File Connector to connect to FTP/SFTP JSON File and then access the data inside PowerShell.

Let's follow the steps below to see how we can accomplish that!

FTP/SFTP JSON File Connector for PowerShell is based on ZappySys SFTP JSON Driver which is part of ODBC PowerPack. It is a collection of high-performance ODBC drivers that enable you to integrate data in SQL Server, SSIS, a programming language, or any other ODBC-compatible application. ODBC PowerPack supports various file formats, sources and destinations, including REST/SOAP API, SFTP/FTP, storage services, and plain files, to mention a few.

Create ODBC Data Source (DSN) based on ZappySys SFTP JSON Driver

Step-by-step instructions

To get data from FTP/SFTP JSON File using PowerShell we first need to create a DSN (Data Source) which will access data from FTP/SFTP JSON File. We will later be able to read data using PowerShell. Perform these steps:

Download and install ODBC PowerPack.

Open ODBC Data Sources (x64):

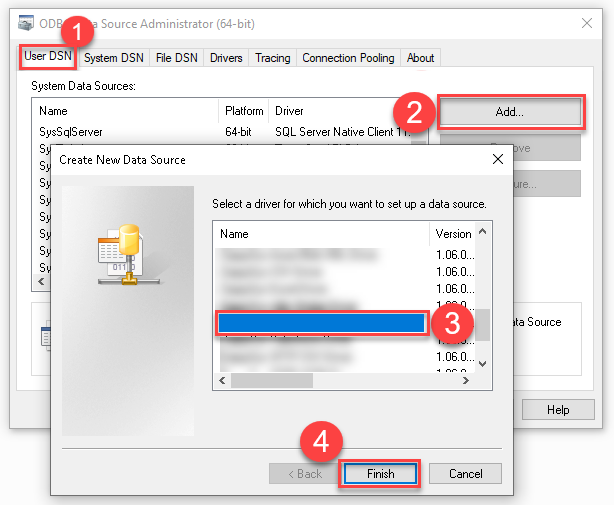

Create a User data source (User DSN) based on ZappySys SFTP JSON Driver:

- Create and use a User DSN if the client application runs under a user account. This is an ideal option at design time, when developing a solution, e.g. in Visual Studio 2019. Use it for both types of applications, 64-bit and 32-bit.
- Create and use a System DSN if the client application is launched under a system account, e.g. as a Windows Service. Usually, this is an ideal option in a production environment. Use ODBC Data Source Administrator (32-bit) instead of the 64-bit version if the Windows Service is a 32-bit application.

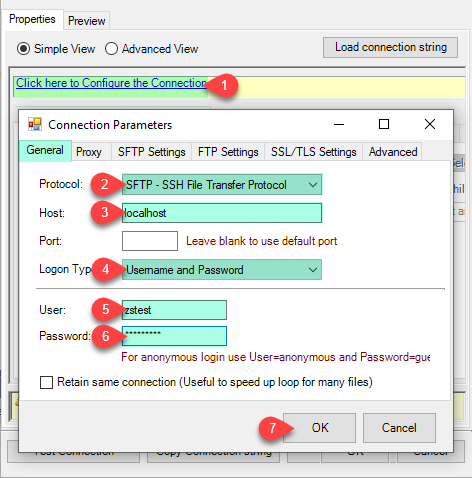

Create and configure a connection for the FTP/SFTP storage account.

-

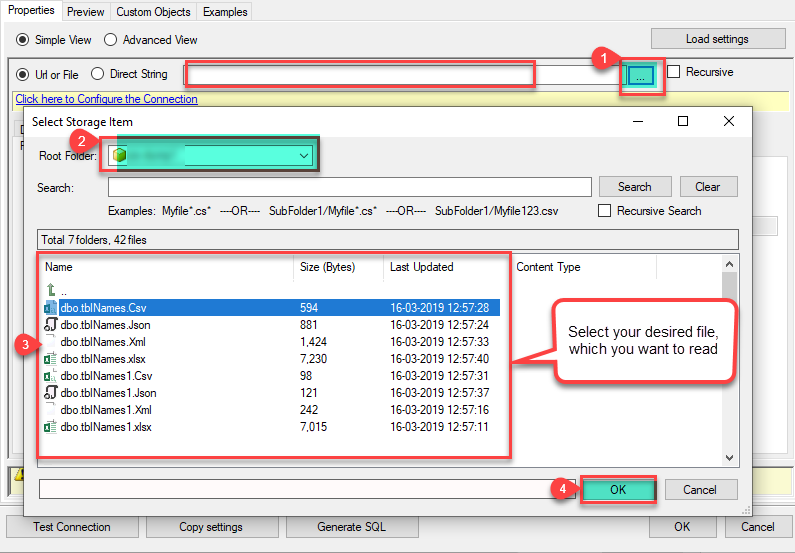

You can use select your desired single file by clicking [...] path button.

mybucket/dbo.tblNames.jsondbo.tblNames.json

----------OR----------You can also read the multiple files stored in FTP/SFTP Storage using wildcard pattern supported e.g. dbo.tblNames*.json.

Note: If you want to operation with multiple files then use wild card pattern as below (when you use wild card pattern in source path then system will treat target path as folder regardless you end with slash) mybucket/dbo.tblNames.json (will read only single .JSON file) mybucket/dbo.tbl*.json (all files starting with file name) mybucket/*.json (all files with .json Extension and located under folder subfolder)

mybucket/dbo.tblNames*.json

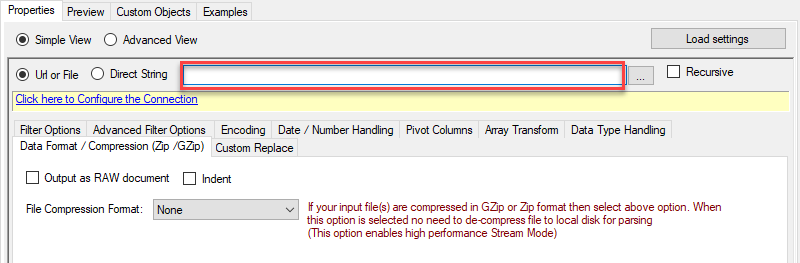

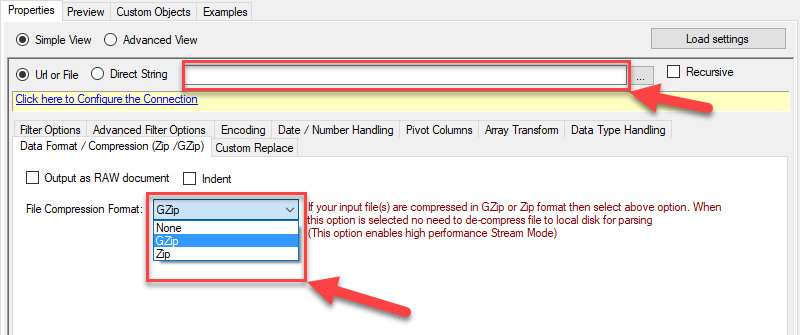

----------OR----------

You can also read zip and gzip compressed files without extracting them first.

mybucket/dbo.tblNames*.gz
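To get a feel for which files each pattern would pick up, the following sketch applies PowerShell's own -like operator to a made-up list of file names. It is only an approximation: the driver's wildcard matching is assumed to behave like simple * matching, and both the file names and the Get-MatchingFiles helper are hypothetical.

```powershell
# Hypothetical file names as they might appear in an FTP/SFTP folder.
$files = @(
    'dbo.tblNames.json',
    'dbo.tblNames_2.json',
    'dbo.tblOrders.json',
    'dbo.tblNames.json.gz',
    'readme.txt'
)

# Hypothetical helper: returns the names that match a wildcard pattern.
# It relies on PowerShell's -like operator, which may differ from the driver's matching.
function Get-MatchingFiles {
    param([string[]]$Names, [string]$Pattern)
    @($Names | Where-Object { $_ -like $Pattern })
}

# Print the matches for each pattern discussed above.
foreach ($pattern in 'dbo.tblNames.json', 'dbo.tbl*.json', '*.json', 'dbo.tblNames*.gz') {
    $found = Get-MatchingFiles -Names $files -Pattern $pattern
    Write-Host ("{0,-20} -> {1}" -f $pattern, ($found -join ', '))
}
```

Running it against your own list of file names is a quick way to check a pattern before entering it in the data source.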

-

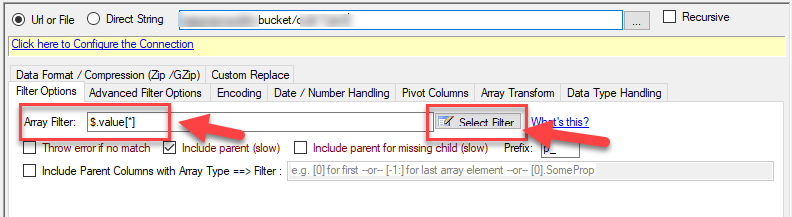

Now select/enter Path expression in Path textbox to extract only specific part of JSON string as below ($.value[*] will get content of value attribute from JSON document. Value attribute is array of JSON documents so we have to use [*] to indicate we want all records of that array)

NOTE: Here, We are using our desired filter, but you need to select your desired filter based on your requirement.Go to Preview Tab.
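As a rough illustration of what $.value[*] selects, the sketch below parses a small, made-up JSON document in PowerShell and reads its value array. The sample document and its property names are assumptions for illustration only; the driver evaluates the Path expression itself, so no such code is needed when using the connector.

```powershell
# A made-up JSON document with a top-level "value" array (hypothetical sample data).
$json = @'
{
  "value": [
    { "CustomerId": 1, "CompanyName": "Acme" },
    { "CustomerId": 2, "CompanyName": "Globex" }
  ]
}
'@

# Parse the document and take every element of the "value" array,
# which is roughly what the path expression $.value[*] asks for.
$document = $json | ConvertFrom-Json
$rows = @($document.value)

# Each array element becomes one row with CustomerId and CompanyName columns.
$rows | Format-Table
```

Each element of the array corresponds to one row in the resulting table, and each property to one column.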

Navigate to the Preview Tab and let's explore the different modes available to access the data.

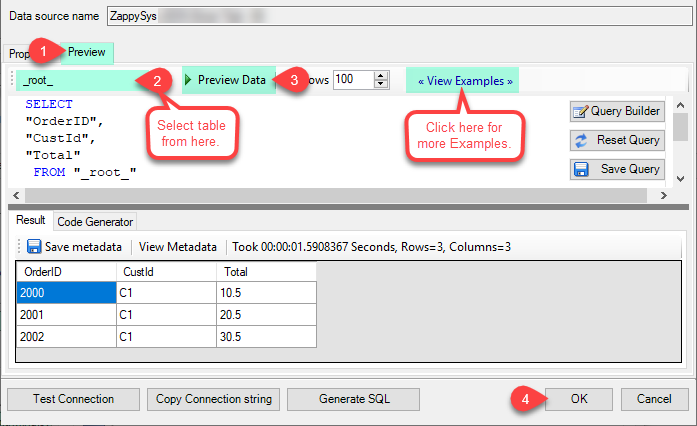

--- Using Direct Query ---

Click the Preview tab, select [value] from the Tables dropdown, and click Preview.

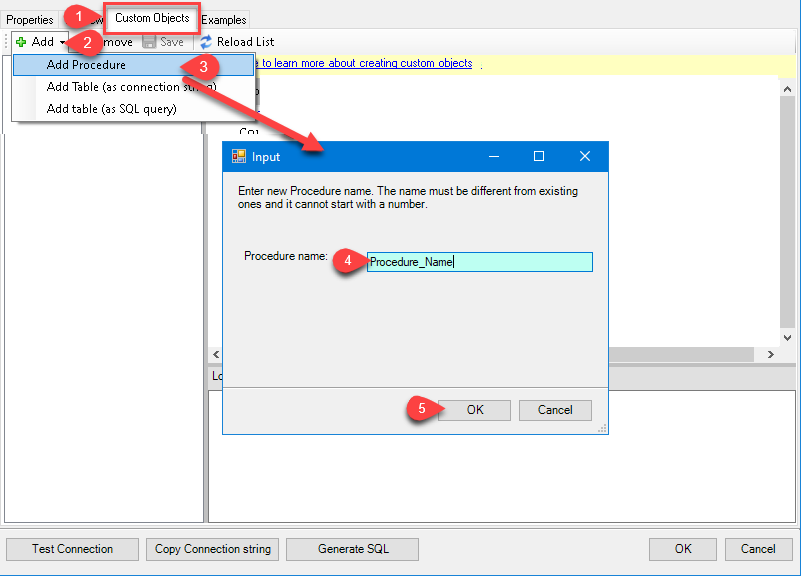

--- Using Stored Procedure ---

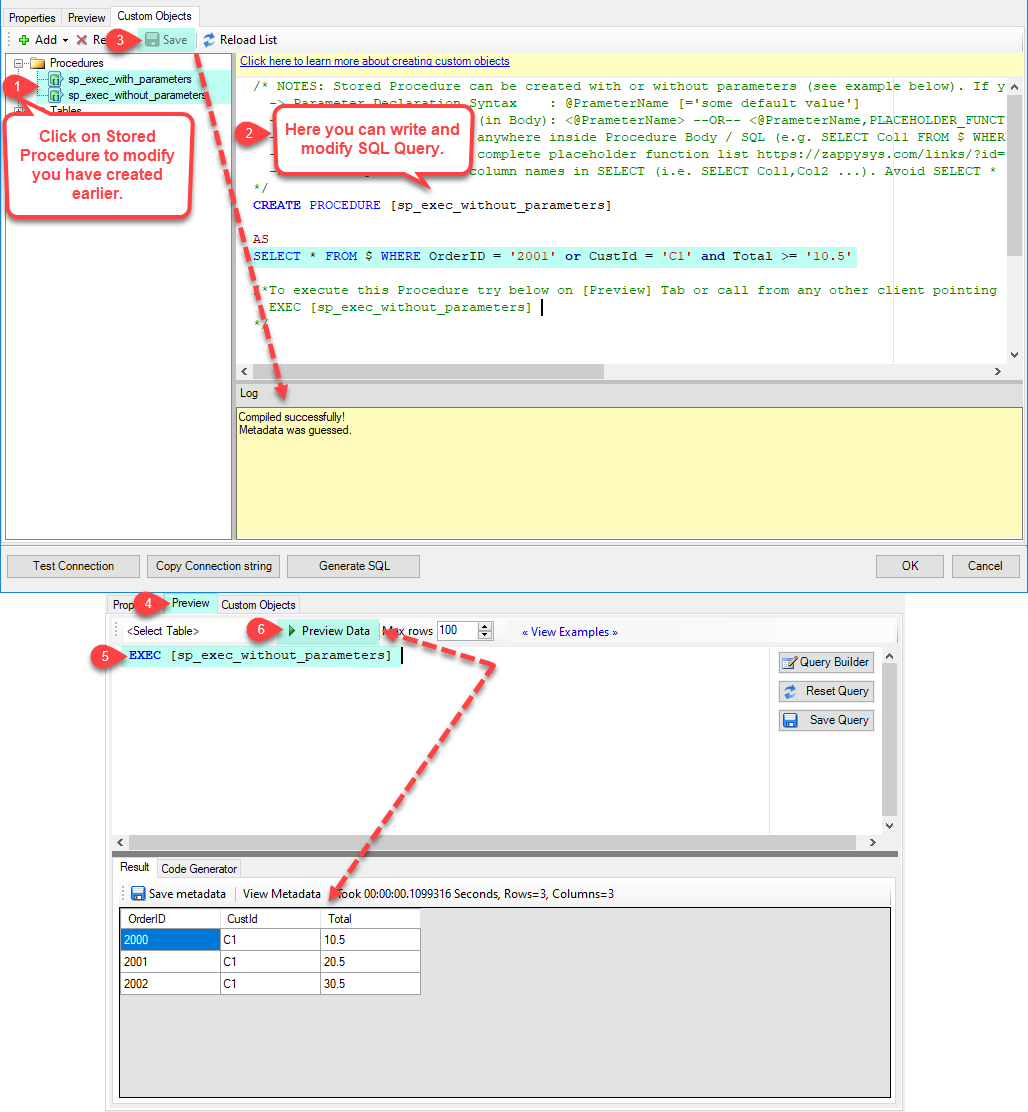

Note: For this you have to save the ODBC driver configuration and then reopen it to configure the same driver.

Click the Custom Objects tab, click the Add button, select Add Procedure, enter an appropriate name, and click OK to create it.

--- Without Parameters ---

A stored procedure can be created with or without parameters (see the examples below). If you use parameters, set a default value for each one; otherwise the procedure may fail to compile.

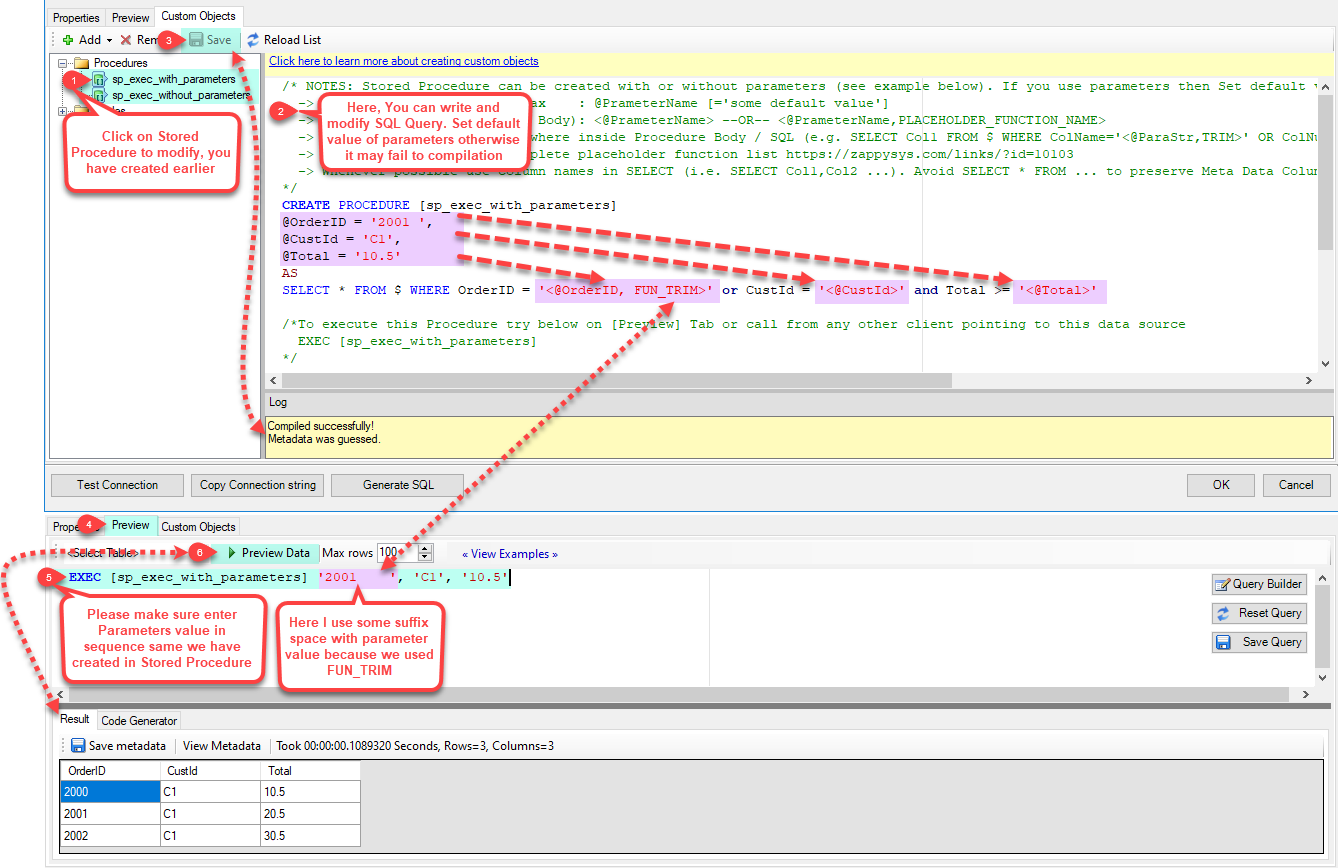

--- With Parameters ---

Note: Here you can use placeholders with parameters in a stored procedure. Example:

    SELECT * FROM $ WHERE OrderID = '<@OrderID, FUN_TRIM>' or CustId = '<@CustId>' and Total >= '<@Total>'

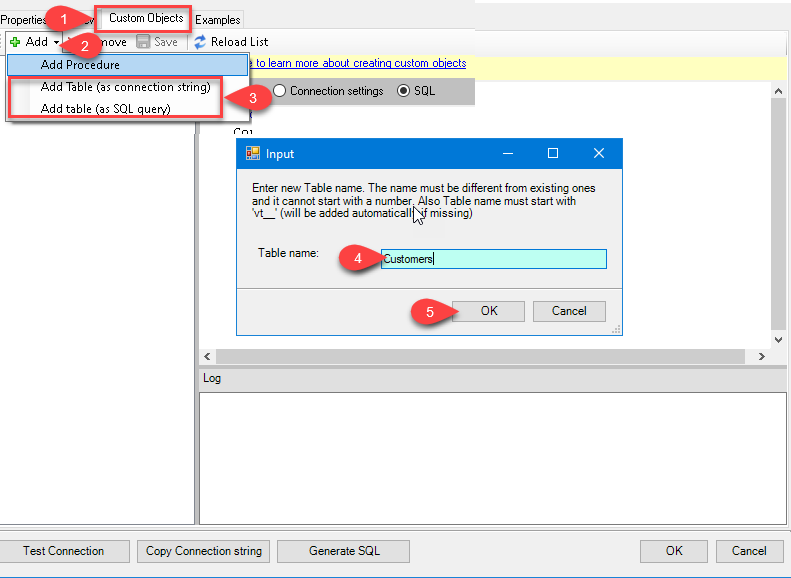

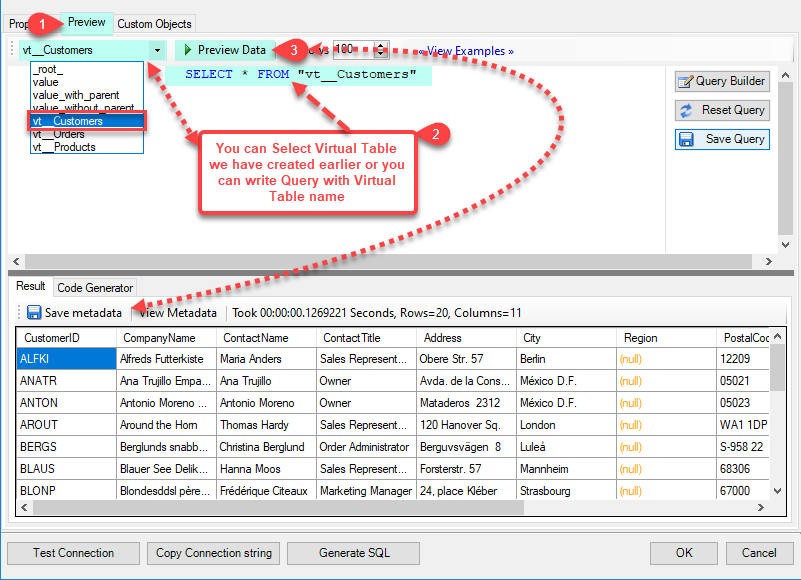

--- Using Virtual Table ---

Note: For this you have to save the ODBC driver configuration and then reopen it to configure the same driver.

ZappySys API drivers support a flexible query language, so you can override default properties configured on the data source, such as URL or Body. This way you don't have to create multiple data sources to read data from multiple endpoints. However, not every application supports supplying custom SQL to the driver; some only let you select a table from the list returned by the driver.

Many applications, like MS Access or Informatica Designer, won't give you the option to specify custom SQL when you import objects. In such cases a virtual table is very useful. You can create many virtual tables on the same data source (e.g. if you have 50 buckets with slight variations, you can create virtual tables with just the URL as a parameter setting).

- vt__Customers: DataPath=mybucket_1/customers.json
- vt__Orders: DataPath=mybucket_2/orders.json
- vt__Products: DataPath=mybucket_3/products.json

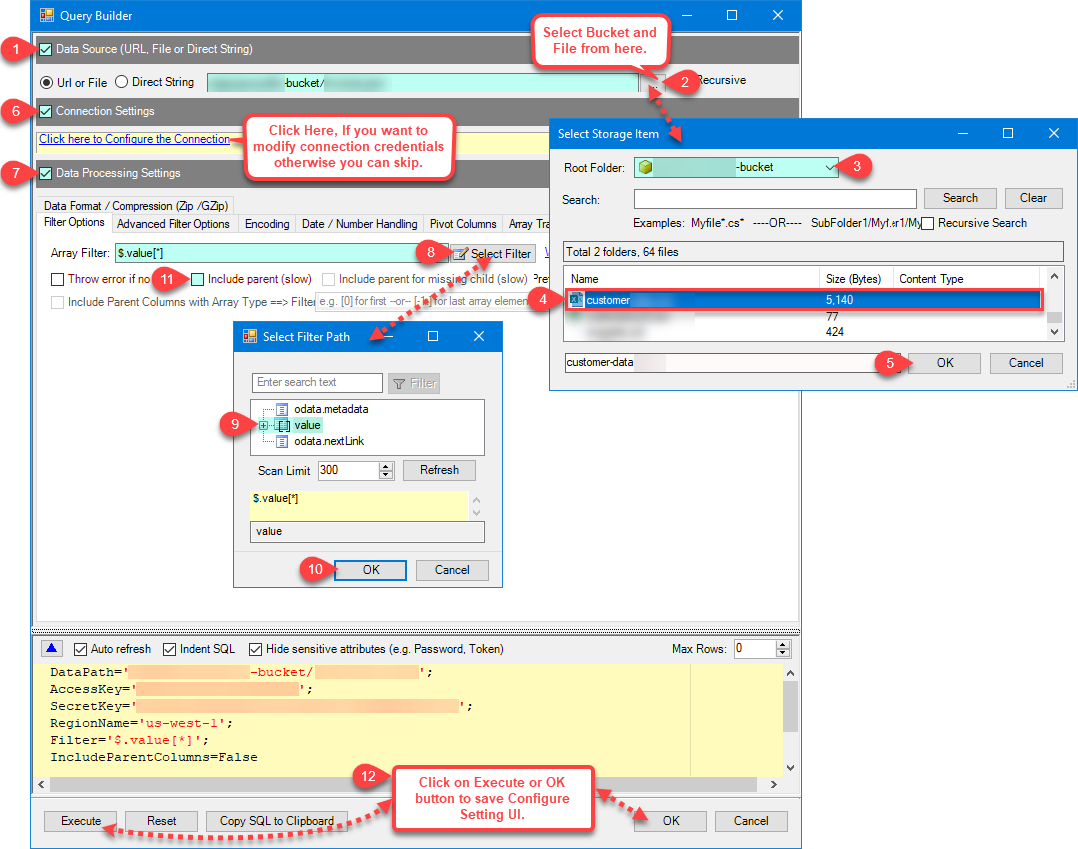

Click the Custom Objects tab, click the Add button, select Add Table, enter an appropriate name, and click OK to create it.

Once the Query Builder window appears, configure it.

Click the Preview tab, select the virtual table (prefixed with vt__) from the Tables dropdown or write a SQL query that uses the virtual table name, and click Preview.

Click OK to finish creating the data source.

That's it; we are done. In a few clicks we configured the data source to read FTP/SFTP JSON File data using the ZappySys FTP/SFTP JSON File Connector.

Read FTP/SFTP JSON File data in PowerShell

Sometimes, you need to quickly access and work with your FTP/SFTP JSON File data in PowerShell. Whether you need a quick data overview or the complete dataset, this article will guide you through the process. Here are some common scenarios:

- View data in a terminal: quickly peek at FTP/SFTP JSON File data
- Monitor data constantly in your console
- Export data to a CSV file so that it can be sliced and diced in Excel
- Export data to a JSON file so that it can be ingested by other processes
- Export data to an HTML file for a user-friendly view and easy sharing
- Create a schedule to make it an automatic process
- Store data internally for analysis or for further ETL processes
- Integrate data with other systems via external APIs

In this article, we will delve deeper into how to quickly view the data in PowerShell terminal and how to save it to a file. But let's stop talking and get started!

Reading individual fields

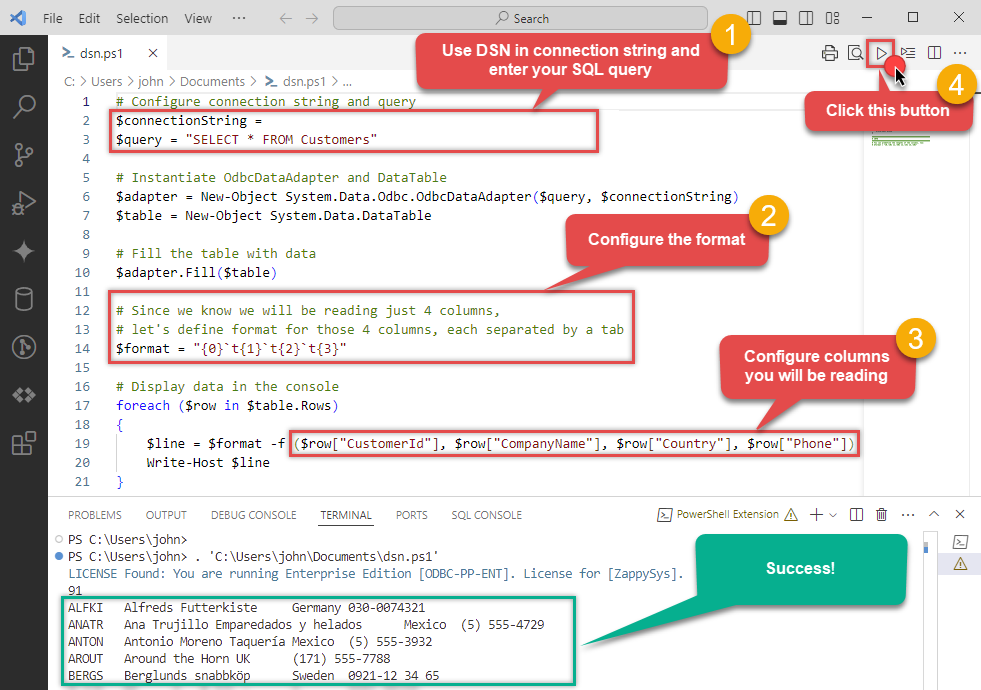

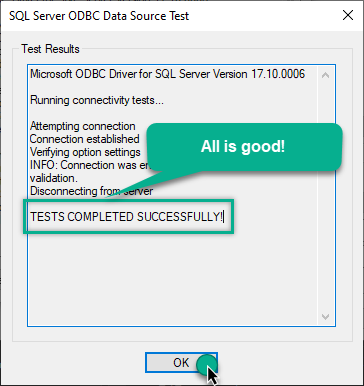

Open your favorite PowerShell IDE (we are using Visual Studio Code).

Use this code snippet to read the data using the FtpSftpJsonFileDSN data source:

    "DSN=FtpSftpJsonFileDSN"

For your convenience, here is the whole PowerShell script:

    # Configure connection string and query
    $connectionString = "DSN=FtpSftpJsonFileDSN"
    $query = "SELECT * FROM Customers"

    # Instantiate OdbcDataAdapter and DataTable
    $adapter = New-Object System.Data.Odbc.OdbcDataAdapter($query, $connectionString)
    $table = New-Object System.Data.DataTable

    # Fill the table with data
    $adapter.Fill($table)

    # Since we know we will be reading just 4 columns, let's define format for those 4 columns, each separated by a tab
    $format = "{0}`t{1}`t{2}`t{3}"

    # Display data in the console
    foreach ($row in $table.Rows) {
        # Construct line based on the format and individual FTP/SFTP JSON File fields
        $line = $format -f ($row["CustomerId"], $row["CompanyName"], $row["Country"], $row["Phone"])
        Write-Host $line
    }

Access a specific FTP/SFTP JSON File table field using this code snippet:

    $field = $row["ColumnName"]

You will find more info on how to manipulate the DataTable.Rows property in the Microsoft .NET reference.

For demonstration purposes we are using sample tables which may not be available in FTP/SFTP JSON File.

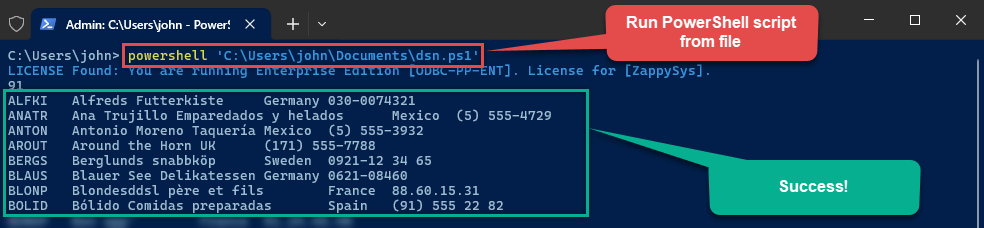

To read values in a console, save the script to a file and then execute it inside the PowerShell terminal. You can use a simple command, e.g.:

    . 'C:\Users\john\Documents\dsn.ps1'

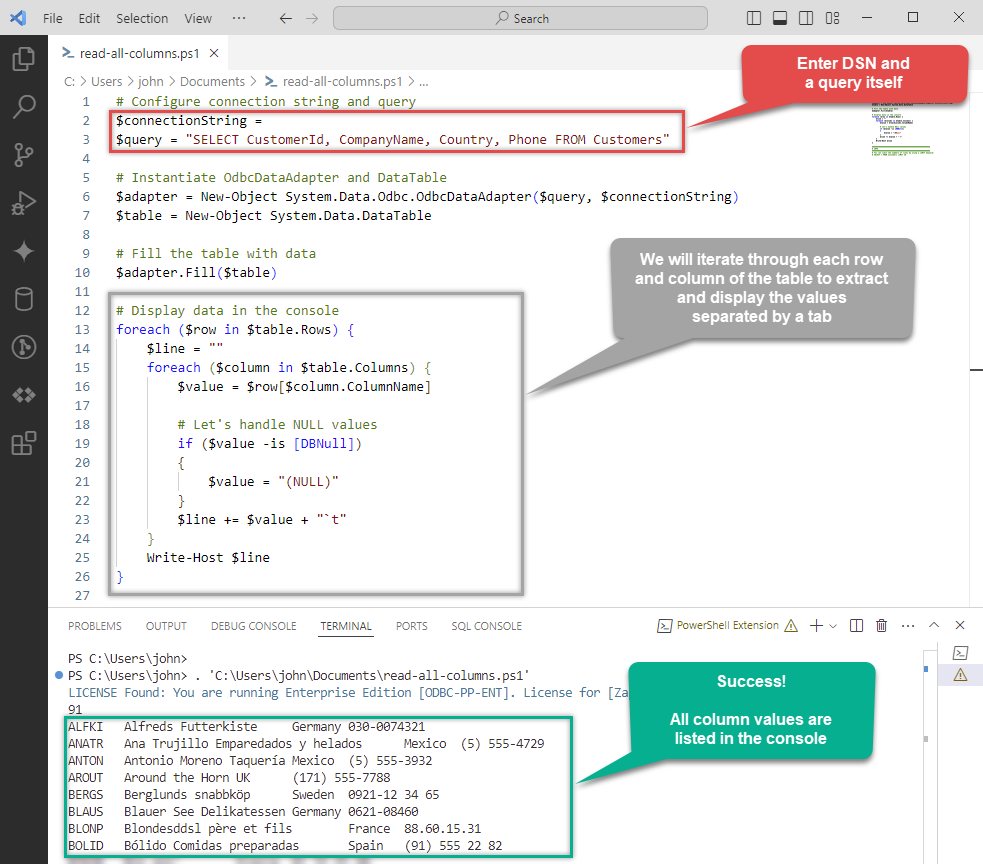

Retrieving all fields

However, there might be a case when you want to retrieve all columns of a query. Here is how you do it:

Again, for your convenience, here is the whole PowerShell script:

    # Configure connection string and query
    $connectionString = "DSN=FtpSftpJsonFileDSN"
    $query = "SELECT CustomerId, CompanyName, Country, Phone FROM Customers"

    # Instantiate OdbcDataAdapter and DataTable
    $adapter = New-Object System.Data.Odbc.OdbcDataAdapter($query, $connectionString)
    $table = New-Object System.Data.DataTable

    # Fill the table with data
    $adapter.Fill($table)

    # Display data in the console
    foreach ($row in $table.Rows) {
        $line = ""
        foreach ($column in $table.Columns) {
            $value = $row[$column.ColumnName]

            # Let's handle NULL values
            if ($value -is [DBNull]) {
                $value = "(NULL)"
            }

            # Use string interpolation so that numeric values are appended as text
            $line += "$value`t"
        }
        Write-Host $line
    }
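If you want to try the row-formatting logic without a live data source, the following sketch applies the same loop to a small in-memory DataTable. The table contents and the Format-TableRows helper are hypothetical; they only stand in for the result of $adapter.Fill($table).

```powershell
# Build a small in-memory table that stands in for the query result (made-up data).
$table = New-Object System.Data.DataTable
[void]$table.Columns.Add("CustomerId", [int])
[void]$table.Columns.Add("CompanyName", [string])
[void]$table.Rows.Add(1, "Acme")
[void]$table.Rows.Add(2, [DBNull]::Value)

# Hypothetical helper: returns one tab-separated line per row,
# replacing database NULLs with the text "(NULL)".
function Format-TableRows {
    param([System.Data.DataTable]$Table)
    foreach ($row in $Table.Rows) {
        $values = foreach ($column in $Table.Columns) {
            $value = $row[$column.ColumnName]
            if ($value -is [DBNull]) { "(NULL)" } else { "$value" }
        }
        $values -join "`t"
    }
}

$lines = @(Format-TableRows -Table $table)
$lines | ForEach-Object { Write-Host $_ }
```

Joining the values with -join avoids a trailing tab at the end of each line, which is a small design difference from the loop above.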

To limit the number of rows returned, use the LIMIT keyword in the query, e.g.:

    SELECT * FROM Customers LIMIT 10

Using a full ODBC connection string

In the previous steps we used a very short form of ODBC connection string, a DSN. Yet sometimes you don't want a dependency on an ODBC data source (and an extra step). In those cases, you can define a full connection string and skip creating an ODBC data source entirely. Let's see how to accomplish that in the steps below:

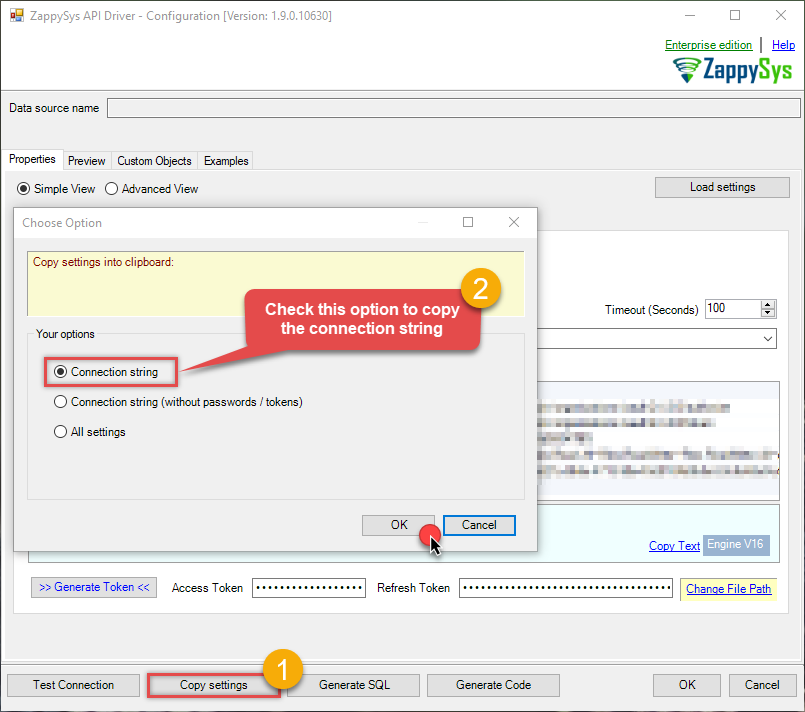

Open ODBC data source configuration and click Copy settings:

A window opens, telling us the connection string was successfully copied to the clipboard:

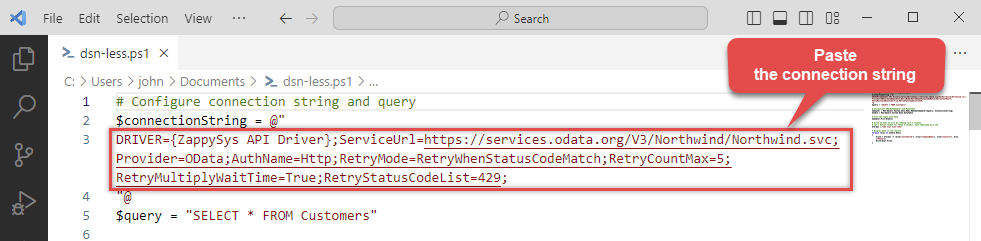

Then just paste the connection string into your script:

You are good to go! The script will execute the same way as with a DSN.

Write FTP/SFTP JSON File data to a file in PowerShell

Save data to a CSV file

Export data to a CSV file so that it can be sliced and diced in Excel:

    # Configure connection string and query
    $connectionString = "DSN=FtpSftpJsonFileDSN"
    $query = "SELECT * FROM Customers"

    # Instantiate OdbcDataAdapter and DataTable
    $adapter = New-Object System.Data.Odbc.OdbcDataAdapter($query, $connectionString)
    $table = New-Object System.Data.DataTable

    # Fill the table with data
    $adapter.Fill($table)

    # Export table data to a file
    $table | ConvertTo-Csv -NoTypeInformation -Delimiter "`t" | Out-File "C:\Users\john\saved-data.csv" -Force

Save data to a JSON file

Export data to a JSON file so that it can be ingested by other processes (use the above script, but change this part):

    # Export table data to a file
    $table | ConvertTo-Json | Out-File "C:\Users\john\saved-data.json" -Force

Save data to an HTML file

Export data to an HTML file for user-friendly view and easy sharing (use the above script, but change this part):

    # Export table data to a file
    $table | ConvertTo-Html | Out-File "C:\Users\john\saved-data.html" -Force

Explore PowerShell cmdlets other than ConvertTo-Csv, ConvertTo-Json, and ConvertTo-Html for other data manipulation scenarios.
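When a DataTable is piped to the ConvertTo-* cmdlets, each row is a DataRow object, so the output may also contain bookkeeping properties such as RowError, RowState, Table, ItemArray, and HasErrors. One possible way to keep only the data columns is to select them by name first. The sketch below uses a made-up in-memory table in place of the query result; the column names and values are assumptions.

```powershell
# Made-up in-memory table standing in for the data returned by the query.
$table = New-Object System.Data.DataTable
[void]$table.Columns.Add("CustomerId", [int])
[void]$table.Columns.Add("CompanyName", [string])
[void]$table.Rows.Add(1, "Acme")
[void]$table.Rows.Add(2, "Globex")

# Collect the names of the data columns defined on the table.
$columnNames = @($table.Columns | ForEach-Object { $_.ColumnName })

# Keep only those columns on each row, then convert the result to CSV text.
$csv = $table | Select-Object -Property $columnNames | ConvertTo-Csv -NoTypeInformation

# Show the CSV lines; pipe $csv to Out-File instead to save them.
$csv | ForEach-Object { Write-Host $_ }
```

The same Select-Object step can be placed in front of ConvertTo-Json or ConvertTo-Html.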

Centralized data access via Data Gateway

In some situations, you may need to provide FTP/SFTP JSON File data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

Centralized data access

The data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies the management process.

Centralized access control

Since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | Yes | No |
| Central access control | Yes | No |
| More flexible cost | | |

If you need any of these capabilities, create a data source in Data Gateway to connect to FTP/SFTP JSON File, and then create an ODBC data source to connect to Data Gateway from PowerShell.

Let's not wait and get going!

Creating FTP/SFTP JSON File data source in Gateway

In this section we will create a data source for FTP/SFTP JSON File in Data Gateway. Let's follow these steps to accomplish that:

-

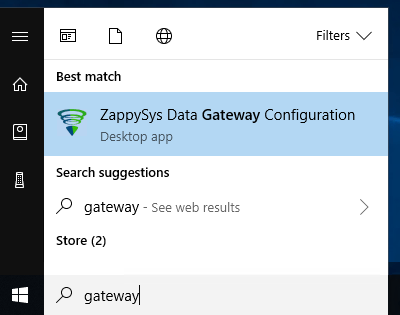

Search for

gatewayin Windows Start Menu and open ZappySys Data Gateway Configuration:

-

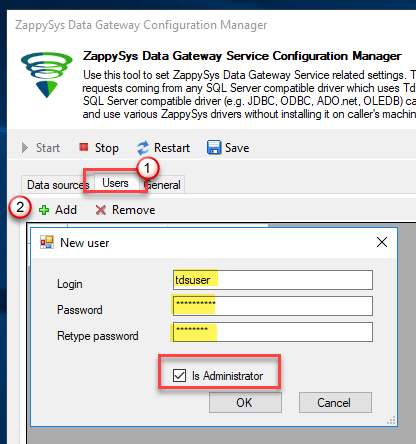

Go to Users tab and follow these steps to add a Data Gateway user:

- Click Add button
- In Login field enter username, e.g., john
- Then enter a Password
- Check Is Administrator checkbox
- Click OK to save

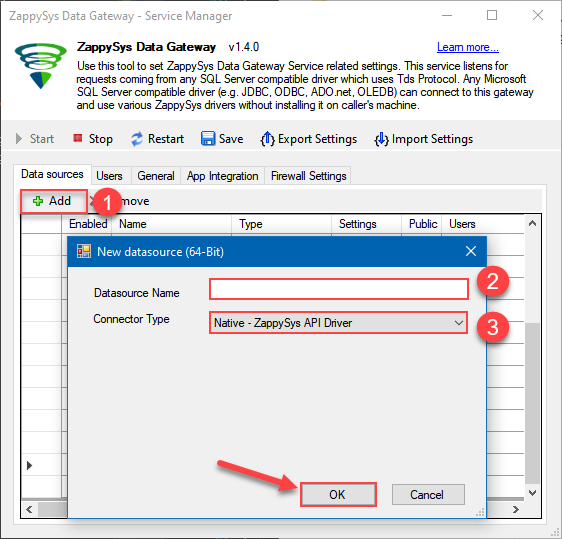

Now we are ready to add a data source:

- Click Add button
- Give Datasource a name, e.g. FtpSftpJsonFileDSN (have it handy for later)
- Then select Native - ZappySys SFTP JSON Driver
- Finally, click OK

When the ZappySys SFTP JSON Driver configuration window opens, configure the Data Source the same way you configured it in ODBC Data Sources (64-bit) at the beginning of this article.

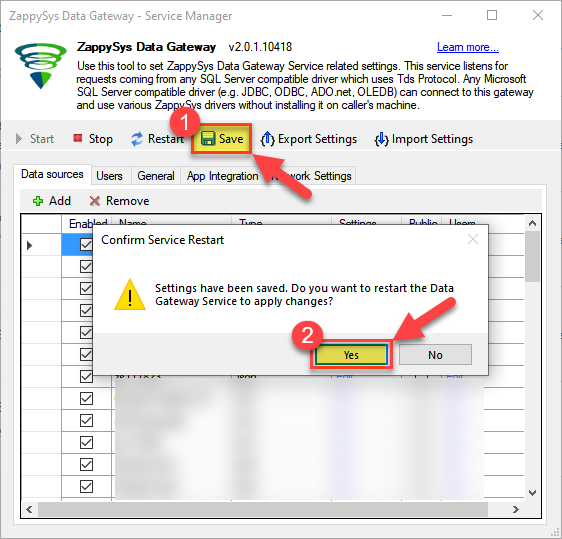

Very important step. After creating or modifying the data source, make sure you:

- Click the Save button to persist your changes.
- Click Yes when asked whether you want to restart the Data Gateway service.

This will ensure all changes are properly applied:

Skipping this step may result in the new settings not taking effect, and you will therefore not be able to connect to the data source.

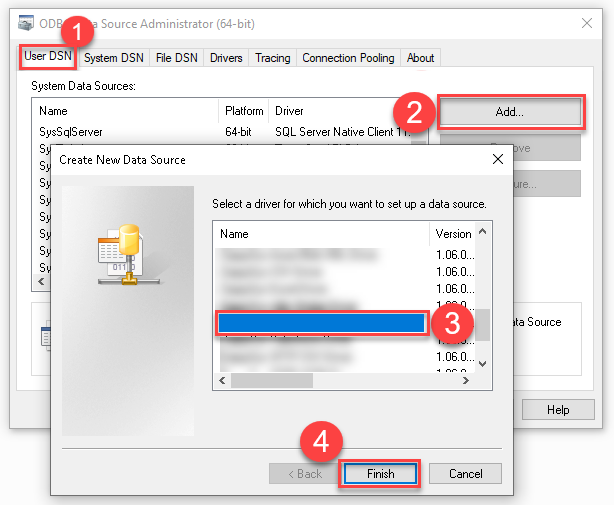

Creating ODBC data source for Data Gateway

In this part we will create an ODBC data source to connect to Data Gateway from PowerShell. To achieve that, let's perform these steps:

Open ODBC Data Sources (x64):

Create a User data source (User DSN) based on ODBC Driver 17 for SQL Server:

If you don't see ODBC Driver 17 for SQL Server in the list, choose a similar version of the driver.

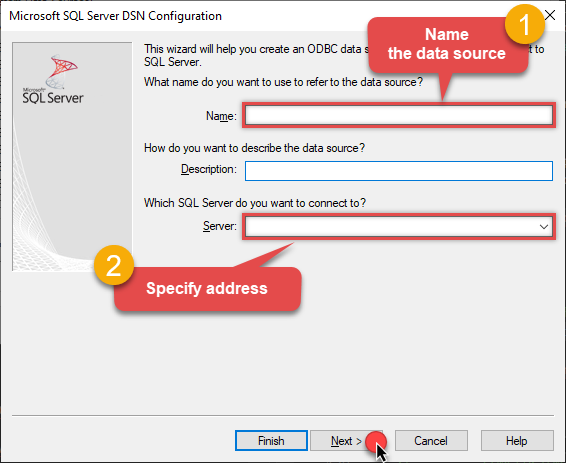

Then set the Name of the data source (e.g. GatewayDSN) and the address of the Data Gateway (e.g. localhost,5000):

Make sure you separate the hostname and port with a comma, e.g. localhost,5000.

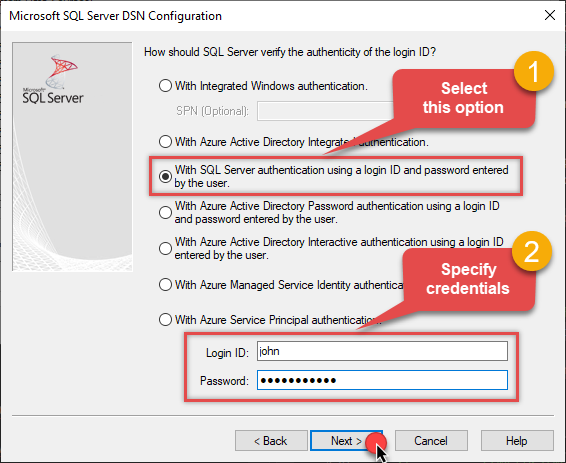

Proceed with authentication part:

- Select SQL Server authentication
- In Login ID field enter the user name you used in Data Gateway, e.g., john
- Set Password to the one you configured in Data Gateway

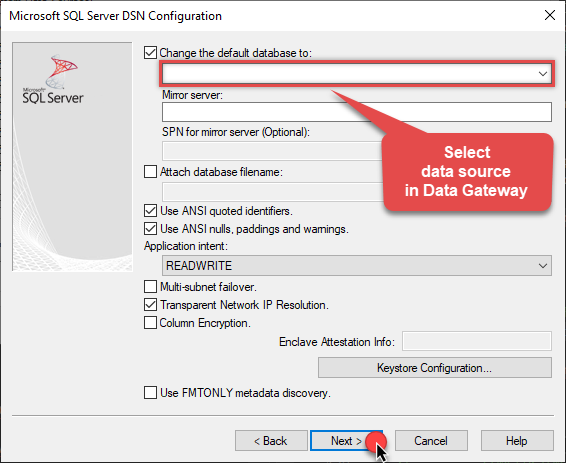

Then set the default database property to FtpSftpJsonFileDSN (the one we used in Data Gateway):

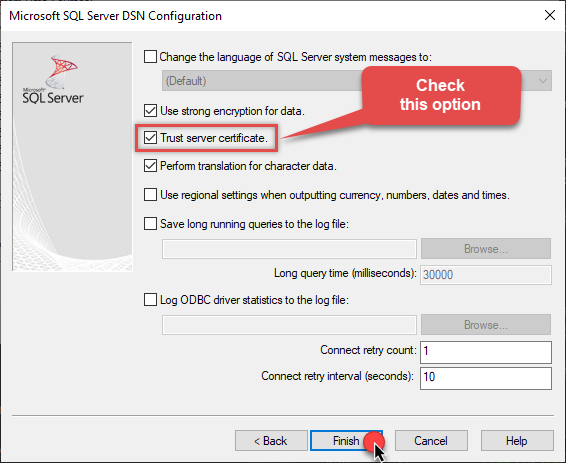

Continue by checking the Trust server certificate option:

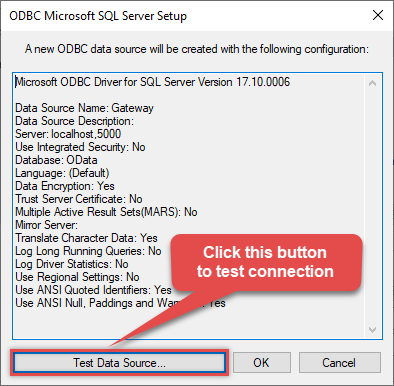

Once you do that, test the connection:

If the connection is successful, everything is good:

Done!

We are ready to move to the final step. Let's do it!

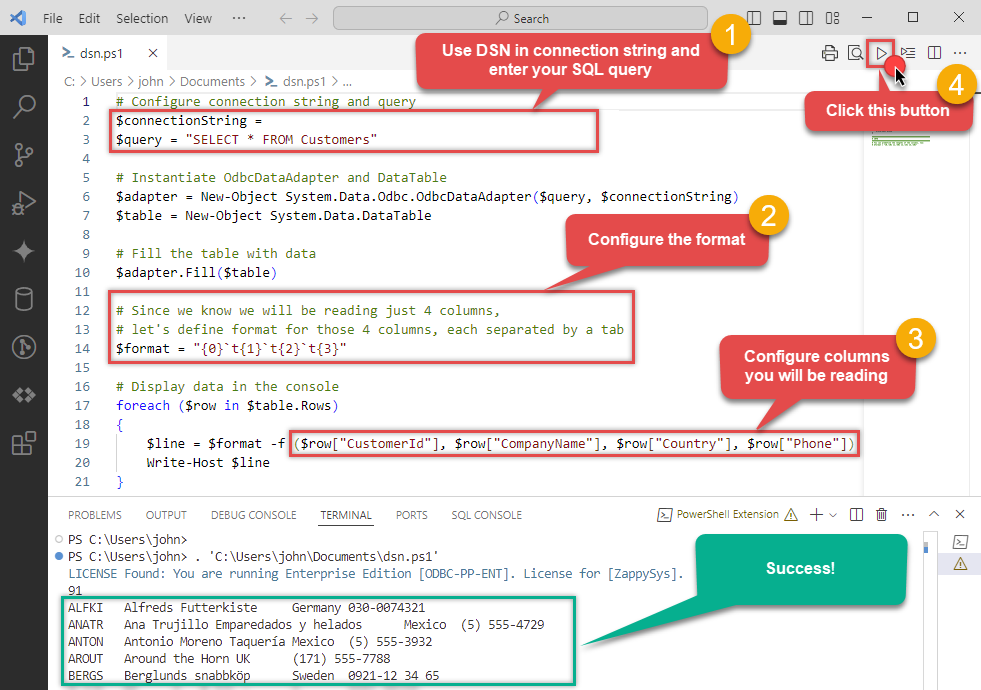

Accessing data in PowerShell via Data Gateway

Finally, we are ready to read data from FTP/SFTP JSON File in PowerShell via Data Gateway. Follow these final steps:

Go back to PowerShell.

Use this code snippet to read the data using the GatewayDSN data source:

    "DSN=GatewayDSN"

For your convenience, here is the whole PowerShell script:

    # Configure connection string and query
    $connectionString = "DSN=GatewayDSN"
    $query = "SELECT * FROM Customers"

    # Instantiate OdbcDataAdapter and DataTable
    $adapter = New-Object System.Data.Odbc.OdbcDataAdapter($query, $connectionString)
    $table = New-Object System.Data.DataTable

    # Fill the table with data
    $adapter.Fill($table)

    # Since we know we will be reading just 4 columns, let's define format for those 4 columns, each separated by a tab
    $format = "{0}`t{1}`t{2}`t{3}"

    # Display data in the console
    foreach ($row in $table.Rows) {
        # Construct line based on the format and individual FTP/SFTP JSON File fields
        $line = $format -f ($row["CustomerId"], $row["CompanyName"], $row["Country"], $row["Phone"])
        Write-Host $line
    }

Access a specific FTP/SFTP JSON File table field using this code snippet:

    $field = $row["ColumnName"]

You will find more info on how to manipulate the DataTable.Rows property in the Microsoft .NET reference.

For demonstration purposes we are using sample tables which may not be available in FTP/SFTP JSON File.

Read the data the same way we discussed at the beginning of this article.

That's it!

Now you can connect to FTP/SFTP JSON File data in PowerShell via the Data Gateway.

If you are asked for credentials, use the ones you configured in Data Gateway, e.g. john and your password.

Conclusion

In this article we showed you how to connect to FTP/SFTP JSON File in PowerShell and integrate data without any coding, saving you time and effort.

We encourage you to download FTP/SFTP JSON File Connector for PowerShell and see how easy it is to use it for yourself or your team.

If you have any questions, feel free to contact the ZappySys support team.
