How to integrate REST API using Azure Data Factory (Pipeline)

Learn how to quickly and efficiently connect REST API with Azure Data Factory (Pipeline) for smooth data access.

Read and write REST API data effortlessly. Streamline, manage, and automate JSON, XML, and CSV from web URLs for analytics, reporting, and data pipelines — almost no coding required. You can do it all using the high-performance REST API ODBC Driver for Azure Data Factory (Pipeline) (often referred to as the REST API Connector). We'll walk you through the entire setup.

Ready to dive in? Download the product to jump right in, or follow the step-by-step guide below to see how it works.

Create ODBC data source

ZappySys provides specialized ODBC drivers to connect to various API formats. Depending on your API's response type, follow the relevant configuration section below:

- JSON Driver: Best for REST APIs responding with JSON data.

- XML Driver: Designed for SOAP services or APIs returning XML strings.

- CSV Driver: Use this for APIs returning CSV data or for parsing flat files.

Using JSON Driver

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

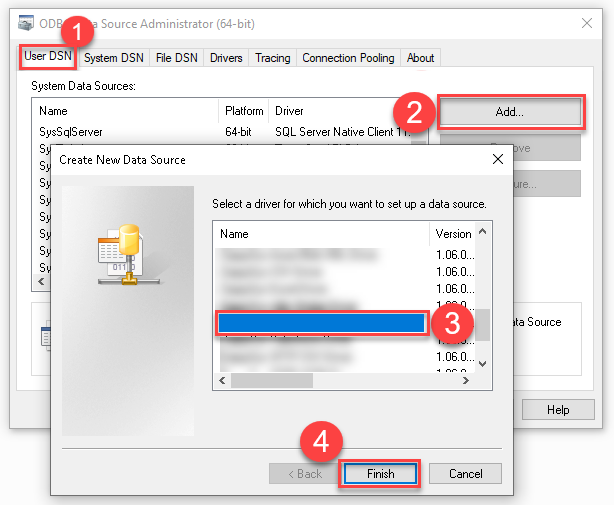

Create a User data source (User DSN) based on the ZappySys JSON Driver driver:

ZappySys JSON Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this DSN.

When deployed to production, Azure Data Factory (Pipeline) runs under a Service Account. Therefore, for the production environment, you must create and use a System DSN.

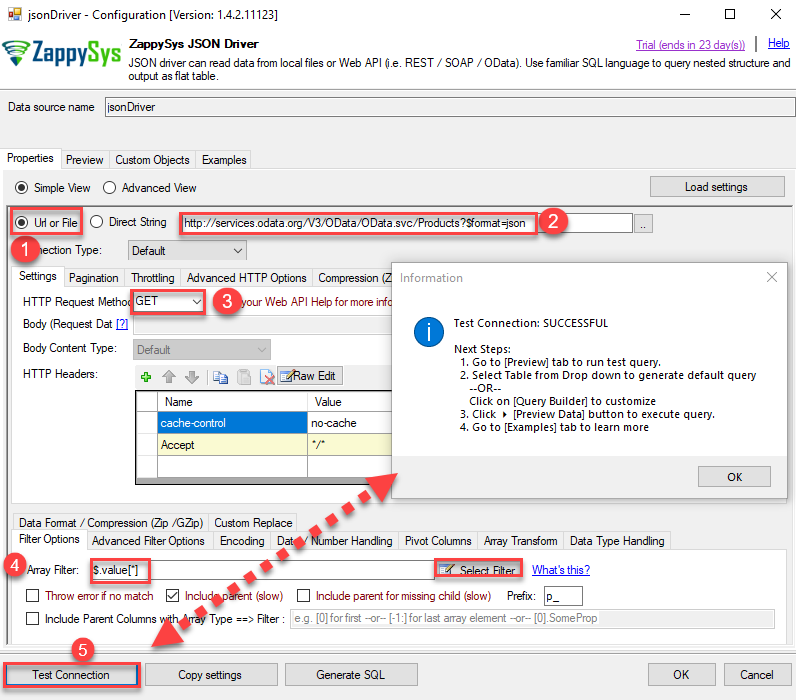

- Select Url or File and paste the URL of the API you want to read, or load an existing connection string as described in this article.

NOTE: This demo uses an OData API. Refer to your own API documentation for the URL to use, and configure the connection according to the authentication type your API requires.

- Enter a JSONPath expression in the Array Filter textbox to extract only a specific part of the JSON document. This example uses $.value[*]: it selects the content of the value attribute, and because value is an array of JSON objects, [*] indicates that we want every record in that array.

NOTE: This example uses a filter that suits the demo API. Choose the filter that matches your own requirements.

Click the Test Connection button to check whether the connection succeeds.

-

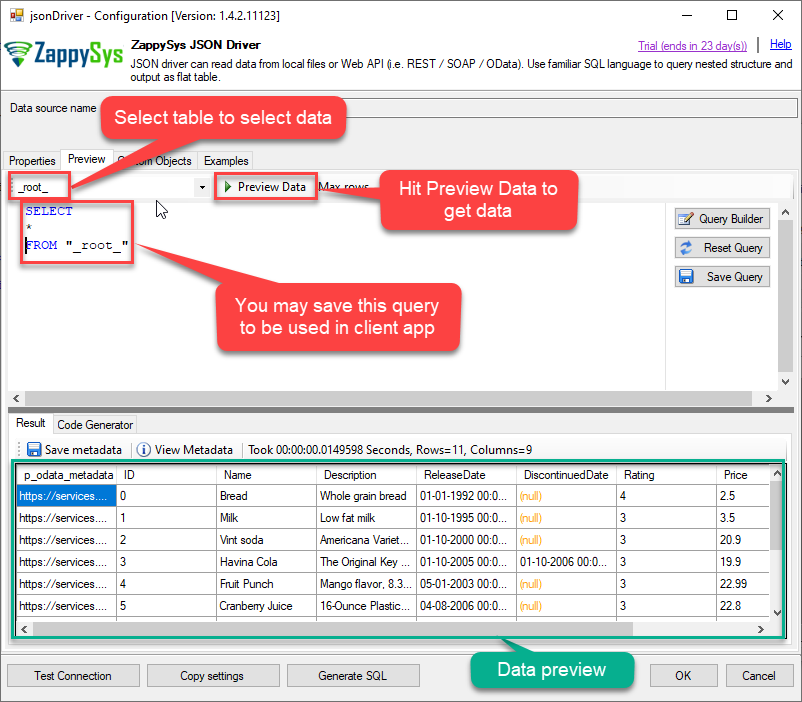

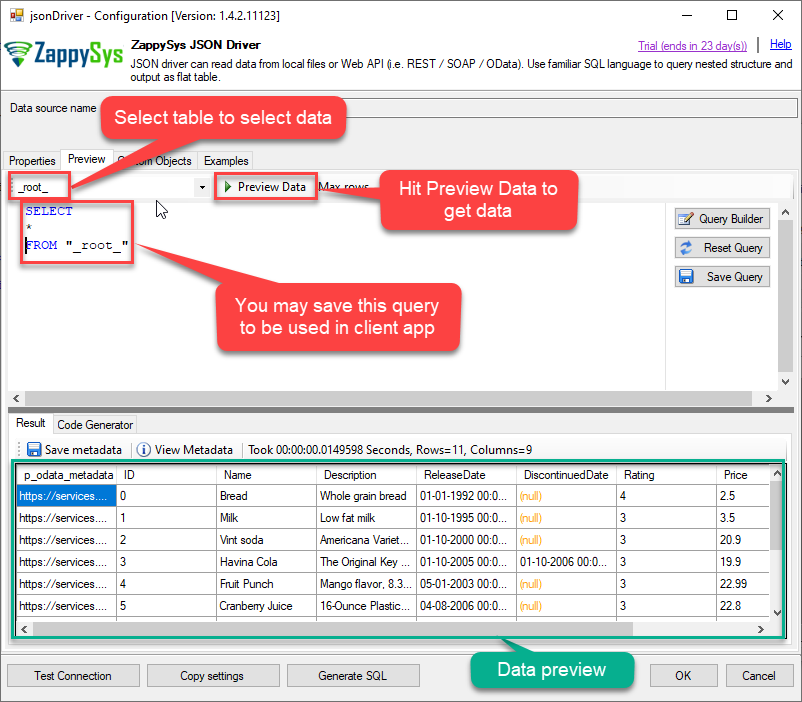

- Once you have configured the data source, you can preview the data. Open the Preview tab and use similar settings to preview data:

- Click OK to finish creating the data source.

-

That's it; we are done. In a few clicks we configured the call to JSON API using ZappySys JSON Connector.
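To make the Array Filter from the steps above more concrete, here is a minimal Python sketch of what a filter such as $.value[*] selects. It does not use the ZappySys driver; the sample document, the field names, and the helper apply_value_filter are all hypothetical and only illustrate the idea that each element of the value array becomes one row.

```python
import json

# Hypothetical OData-style response; a real API will return different fields.
SAMPLE_RESPONSE = """
{
  "@odata.context": "https://example.com/odata/$metadata#Customers",
  "value": [
    {"CustomerID": "ALFKI", "Country": "Germany"},
    {"CustomerID": "ANATR", "Country": "Mexico"}
  ]
}
"""


def apply_value_filter(document_text):
    """Mimic the effect of $.value[*]: return every element of the
    top-level "value" array as a separate row."""
    document = json.loads(document_text)
    rows = document.get("value", [])
    if not isinstance(rows, list):
        raise ValueError('"value" is not an array')
    return rows


rows = apply_value_filter(SAMPLE_RESPONSE)
for row in rows:
    print(row["CustomerID"], row["Country"])
```

Everything outside the value array (here, the @odata.context attribute) is ignored, which is why the filter is useful when the records are nested inside a wrapper object.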

Using XML Driver

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

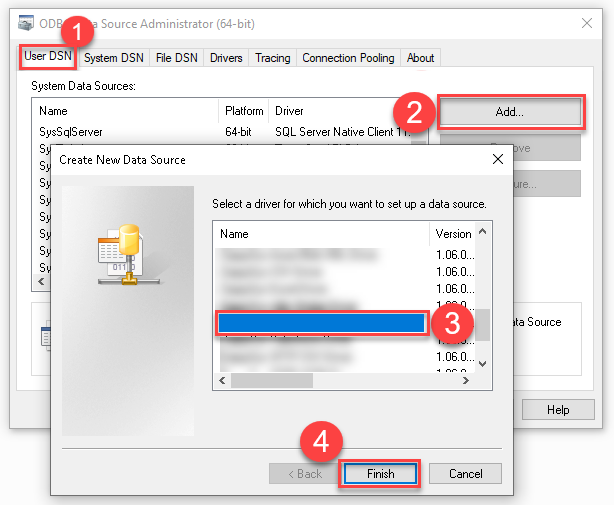

Create a User data source (User DSN) based on the ZappySys XML Driver driver:

ZappySys XML Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this DSN.

When deployed to production, Azure Data Factory (Pipeline) runs under a Service Account. Therefore, for the production environment, you must create and use a System DSN.

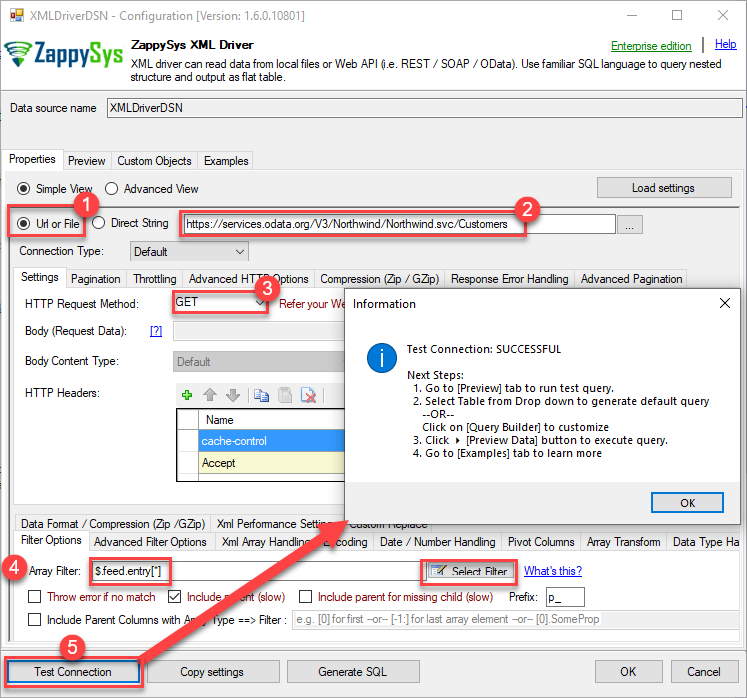

- Select Url or File and paste the URL of the API you want to read, or load an existing connection string as described in this article.

NOTE: This demo uses an OData API. Refer to your own API documentation for the URL to use, and configure the connection according to the authentication type your API requires.

- Enter a path expression in the Array Filter textbox to extract only a specific part of the XML document. This example uses $.feed.entry[*]: it selects the entry elements under feed, and because entry repeats and is treated as an array of XML nodes, [*] indicates that we want every record in that array.

NOTE: This example uses a filter that suits the demo API. Choose the filter that matches your own requirements.

Click the Test Connection button to check whether the connection succeeds.

-

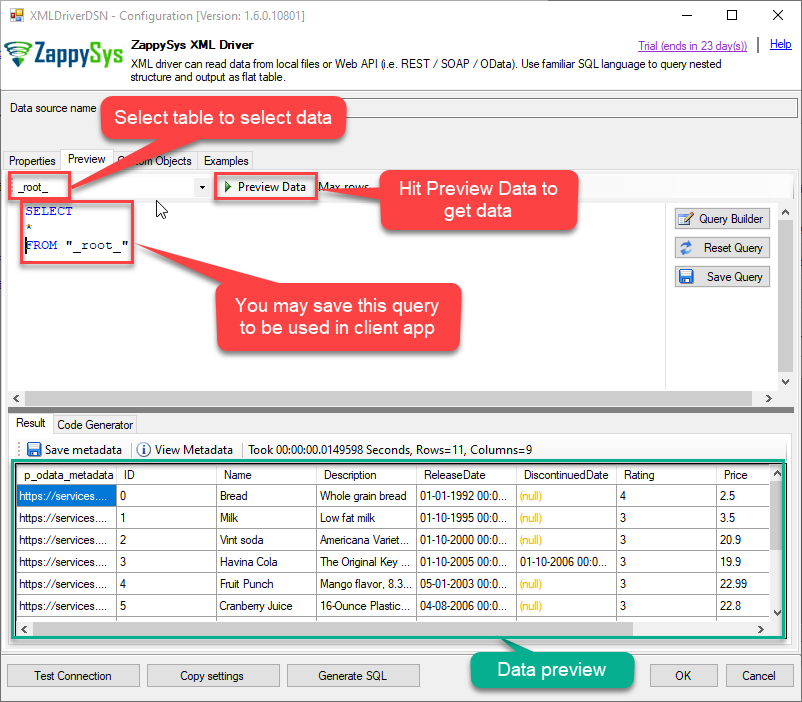

Once you have configured the data source, you can preview the data. Open the Preview tab and use similar settings to preview data:

-

Click OK to finish creating the data source.

-

That's it; we are done. In a few clicks we configured the call to XML API using ZappySys XML Connector.
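The effect of a filter such as $.feed.entry[*] can be sketched in Python with the standard library. This is only an illustration, not how the ZappySys driver is implemented; the sample Atom feed, its field values, and the helper select_entries are hypothetical.

```python
import xml.etree.ElementTree as ET

# Atom namespace used by OData XML feeds.
ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Hypothetical feed; a real API will return more elements per entry.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Customers</title>
  <entry><id>1</id><title>ALFKI</title></entry>
  <entry><id>2</id><title>ANATR</title></entry>
</feed>"""


def select_entries(xml_text):
    """Mimic $.feed.entry[*]: return one row per <entry> under <feed>."""
    root = ET.fromstring(xml_text)  # root is the <feed> element
    rows = []
    for entry in root.findall("atom:entry", ATOM_NS):
        rows.append({
            "id": entry.findtext("atom:id", default="", namespaces=ATOM_NS),
            "title": entry.findtext("atom:title", default="", namespaces=ATOM_NS),
        })
    return rows


entries = select_entries(SAMPLE_FEED)
for item in entries:
    print(item["id"], item["title"])
```

The feed-level title element is skipped because only the repeating entry elements are selected.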

Using CSV Driver

- Download and install ODBC PowerPack (if you haven't already).

- Search for odbc and open the ODBC Data Sources (64-bit):

- Create a User data source (User DSN) based on the ZappySys CSV Driver:

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this DSN.

When deployed to production, Azure Data Factory (Pipeline) runs under a Service Account. Therefore, for the production environment, you must create and use a System DSN.

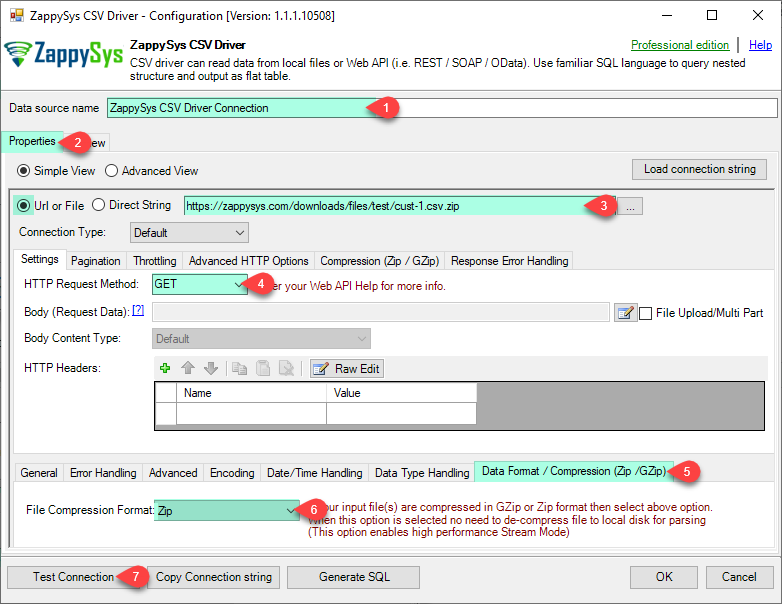

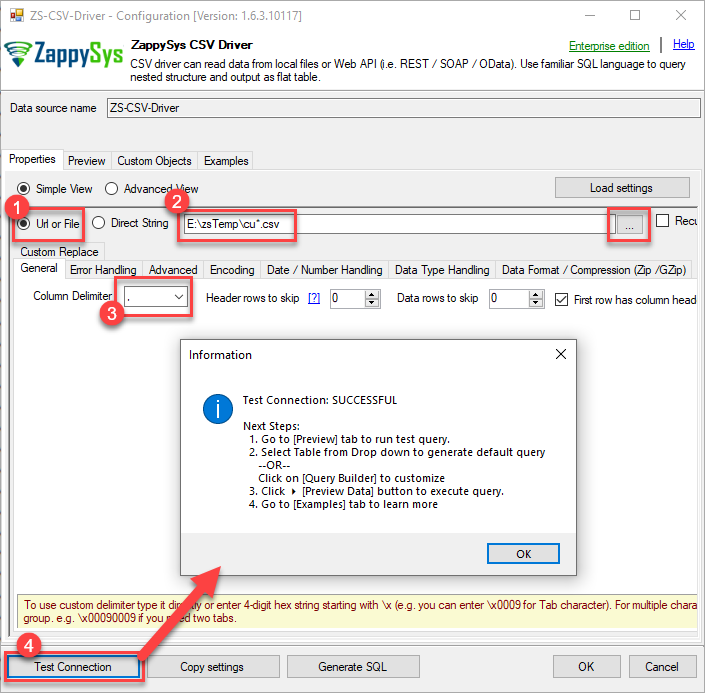

Select Url or File.

Read CSV API in Azure Data Factory (Pipeline)

-

Paste the following URL. This example uses the URL of a zipped CSV file; substitute the URL of your own CSV file.

https://zappysys.com/downloads/files/test/cust-1.csv.zip

Click the Test Connection button to check whether the connection succeeds.

Read CSV File in Azure Data Factory (Pipeline)

-

You can pass a single file path, or multiple files by using a wildcard pattern in the path. To select a single file, click the [...] path button; to select multiple files, type a wildcard pattern in the path.

Note: To work with multiple files, use a wildcard pattern as shown below. When the source path contains a wildcard pattern, the system treats the target path as a folder, regardless of whether it ends with a slash.

- C:\SSIS\Test\reponse.csv (reads only the single file reponse.csv)
- C:\SSIS\Test\j*.csv (all files whose names start with j)
- C:\SSIS\Test\*.csv (all files with the .csv extension located under the folder)

Click the Test Connection button to check whether the connection succeeds.

-

-

Once you have configured the data source, you can preview the data. Open the Preview tab and use similar settings to preview data:

-

Click OK to finish creating the data source.

-

That's it; we are done. In a few clicks we configured reading CSV data using ZappySys CSV Connector.
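The wildcard patterns mentioned in the file-path note can be tried out with a small Python sketch. It uses the standard fnmatch module on a hypothetical list of file names; it is an illustration of wildcard matching in general, not of the driver's own matching code. Note that fnmatchcase is case-sensitive, whereas file matching on Windows is usually case-insensitive.

```python
from fnmatch import fnmatchcase

# Hypothetical contents of C:\SSIS\Test (names only).
FILES = ["reponse.csv", "jan.csv", "jobs.csv", "notes.txt"]


def match_files(pattern, names):
    """Return the names that match a wildcard pattern such as j*.csv."""
    return [name for name in names if fnmatchcase(name, pattern)]


single = match_files("reponse.csv", FILES)  # exact name, one file
j_files = match_files("j*.csv", FILES)      # names starting with j
all_csv = match_files("*.csv", FILES)       # every .csv file

print(single)
print(j_files)
print(all_csv)
```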

Read data in Azure Data Factory (ADF) from ODBC datasource (REST API)

-

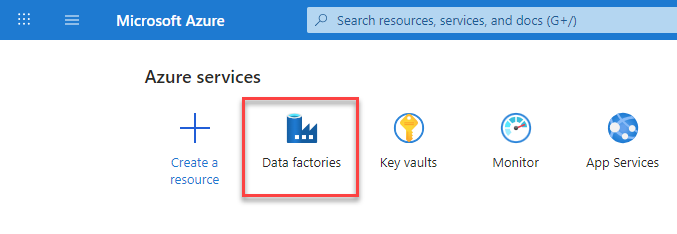

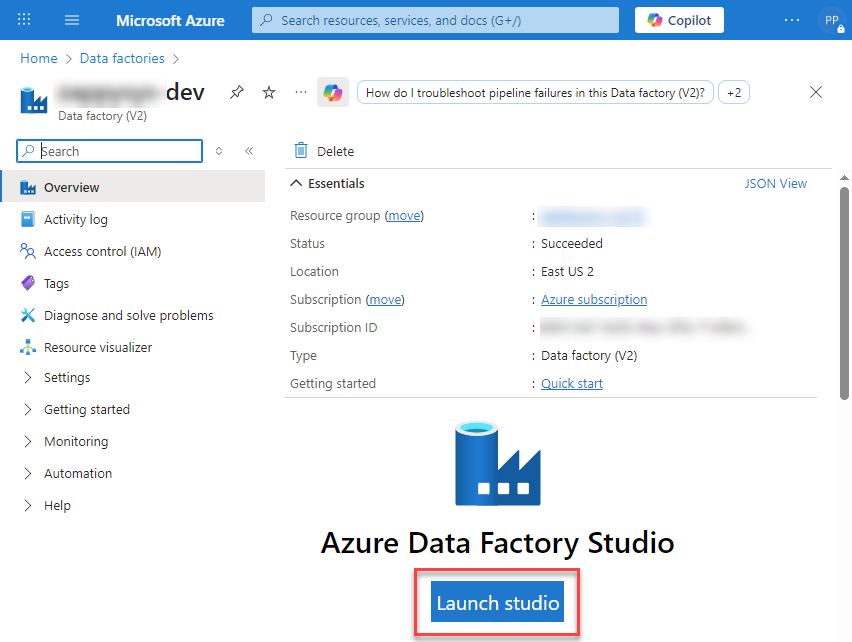

Sign in to Azure Portal

-

Open your browser and go to: https://portal.azure.com

-

Enter your Azure credentials and complete MFA if required.

-

After login, go to Data factories.

-

-

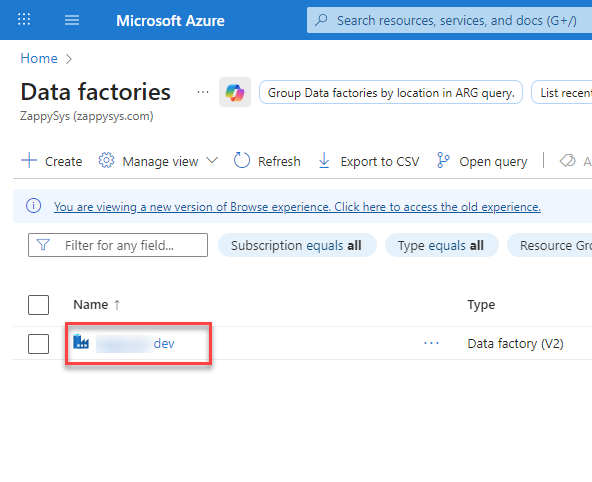

Under Azure Data Factory Resource - Create or select the Data Factory you want to work with.

-

Inside the Data Factory resource page, click Launch studio.

-

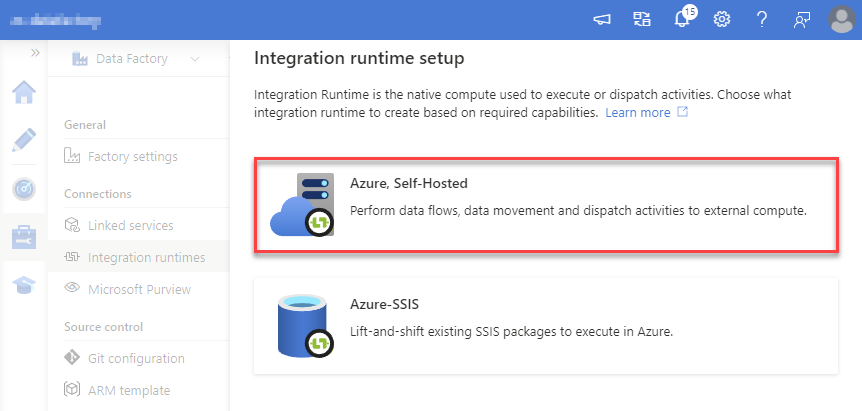

Create a New Integration Runtime (Self-Hosted):

In Azure Data Factory Studio, go to the Manage section (left menu).

Under Connections, select Integration runtimes.

Click + New to create a new integration runtime.

-

Select Azure, Self-Hosted option:

-

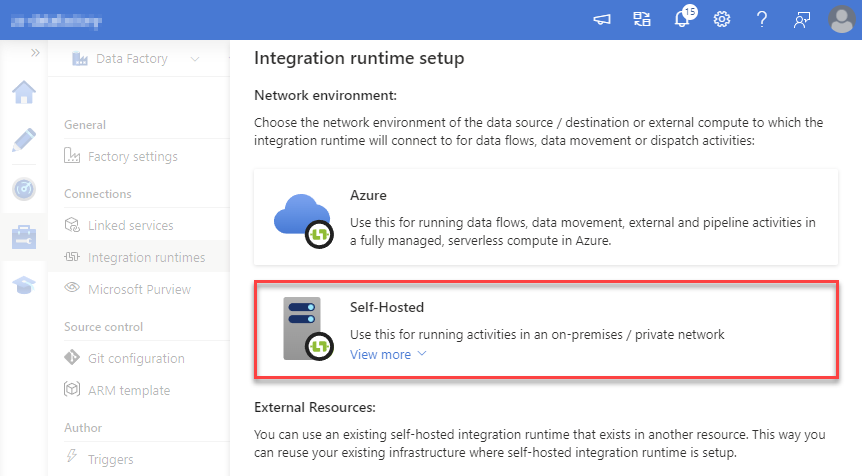

Select Self-Hosted option:

-

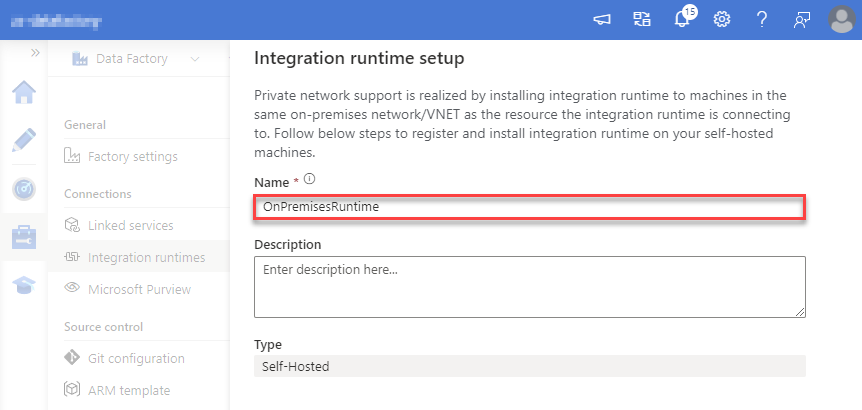

Set a name; we will use OnPremisesRuntime:

-

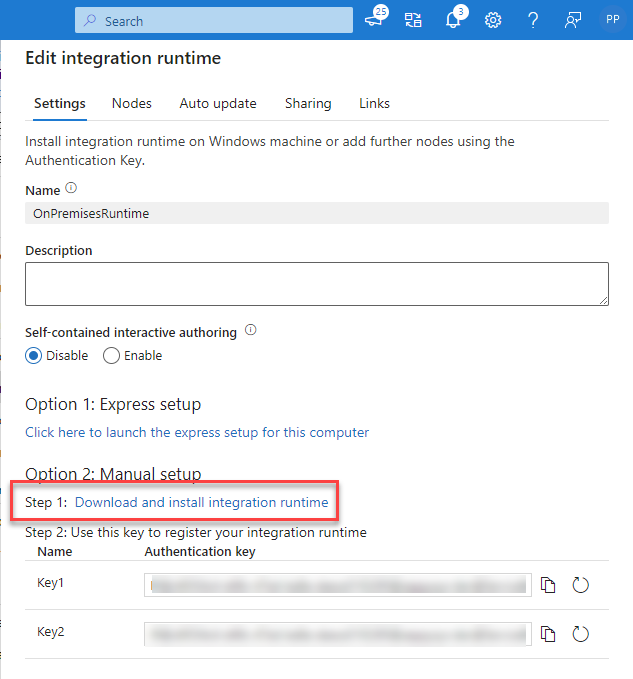

Download and install Microsoft Integration Runtime.

-

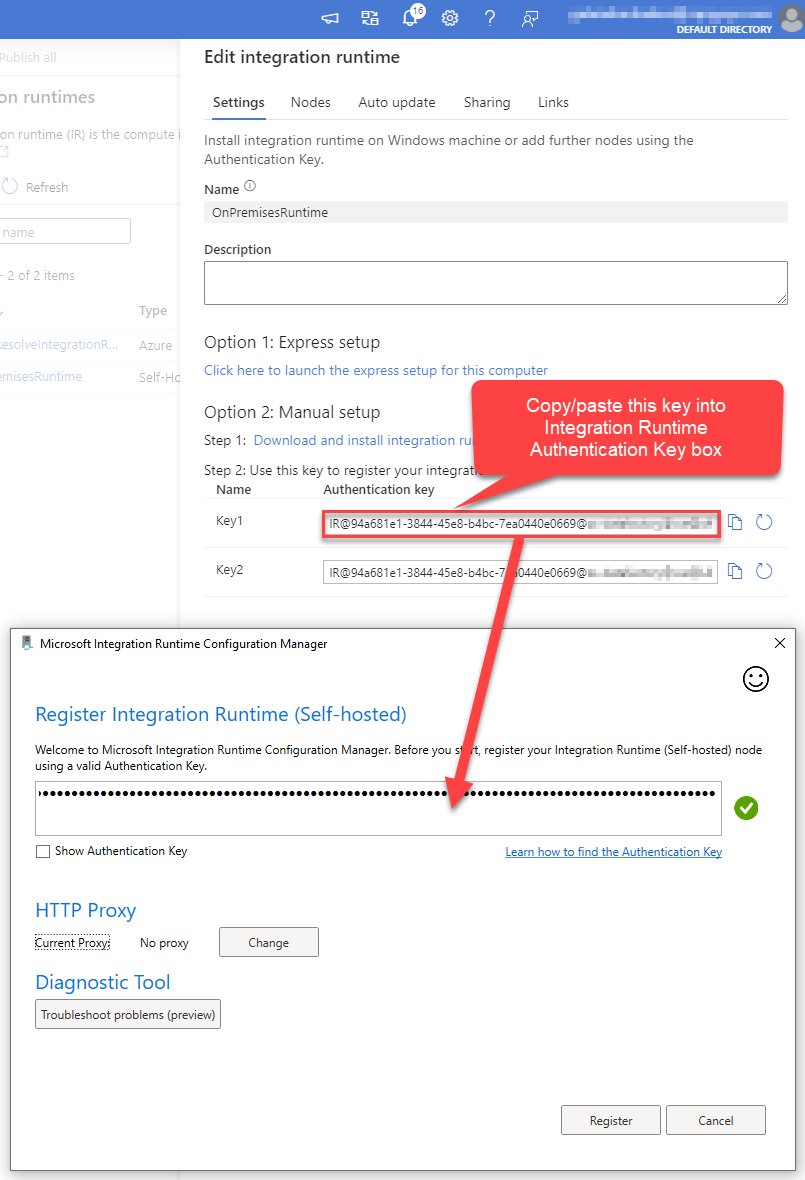

Launch the Integration Runtime and paste the Authentication Key copied from the Integration Runtime configuration in Azure Portal:

-

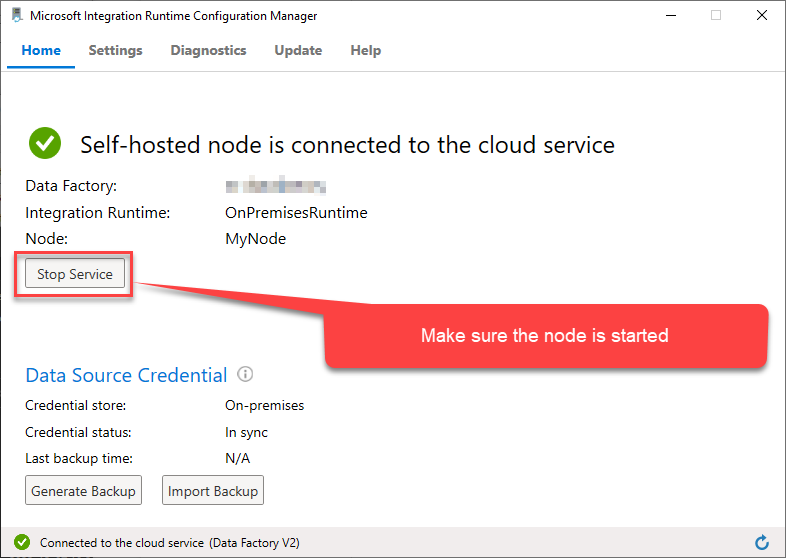

After finishing registering the Integration Runtime node, you should see a similar view:

-

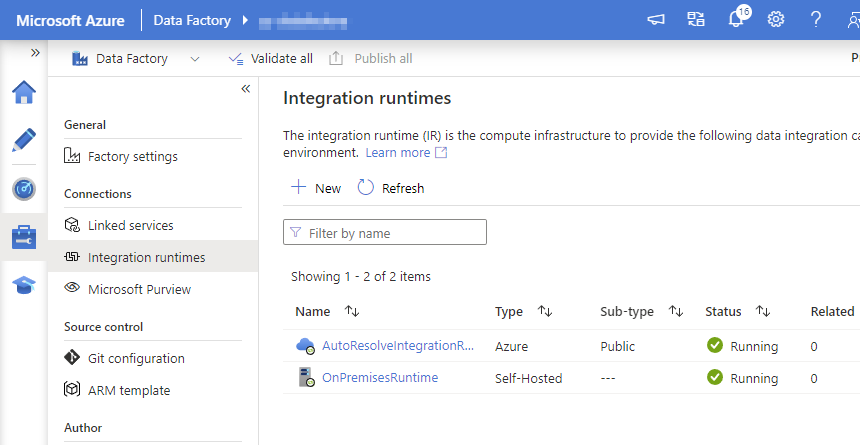

Go back to Azure Portal and finish adding new Integration Runtime. You should see it was successfully added:

-

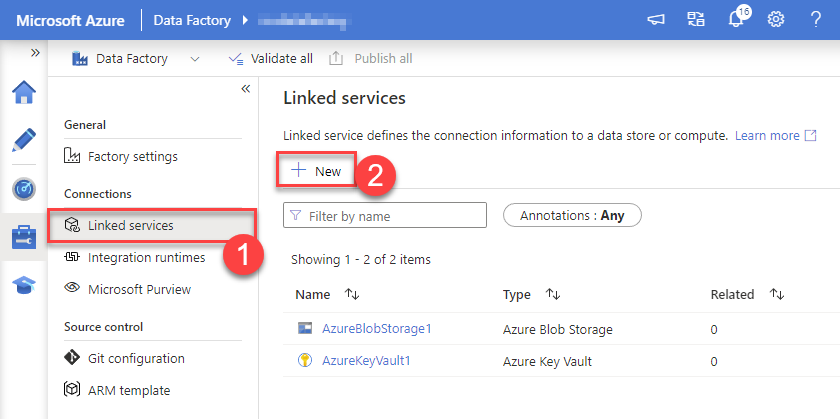

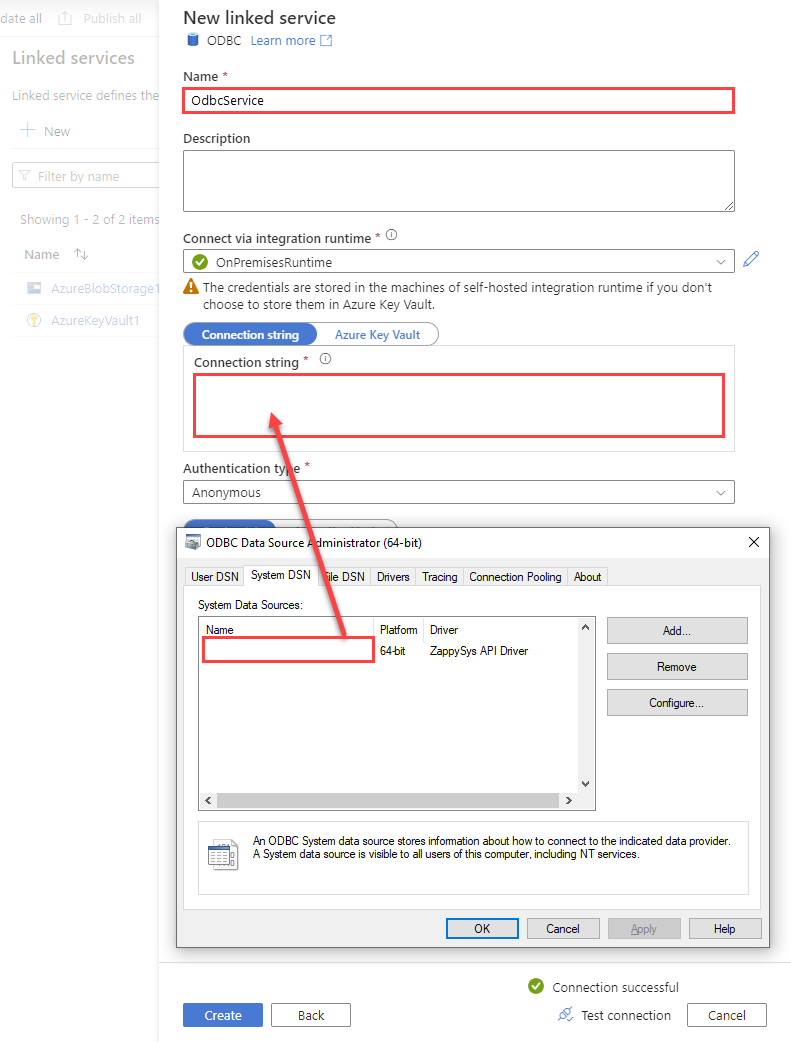

Create a New Linked service:

In the Manage section (left menu), under Connections, select Linked services.

Click + New to create a new Linked service based on ODBC.

-

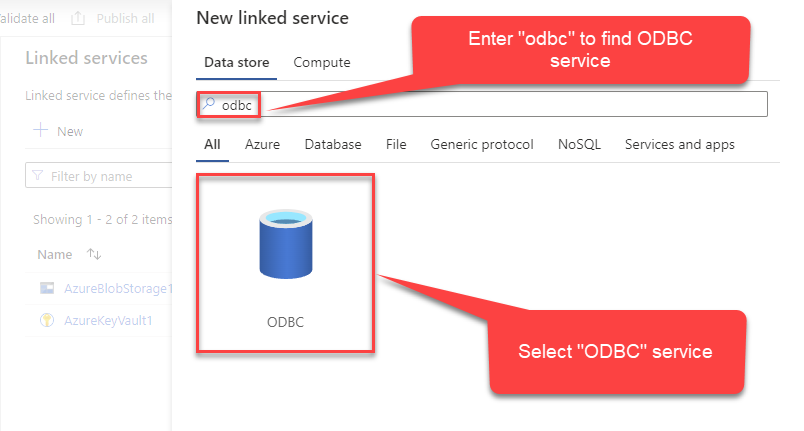

Select ODBC service:

-

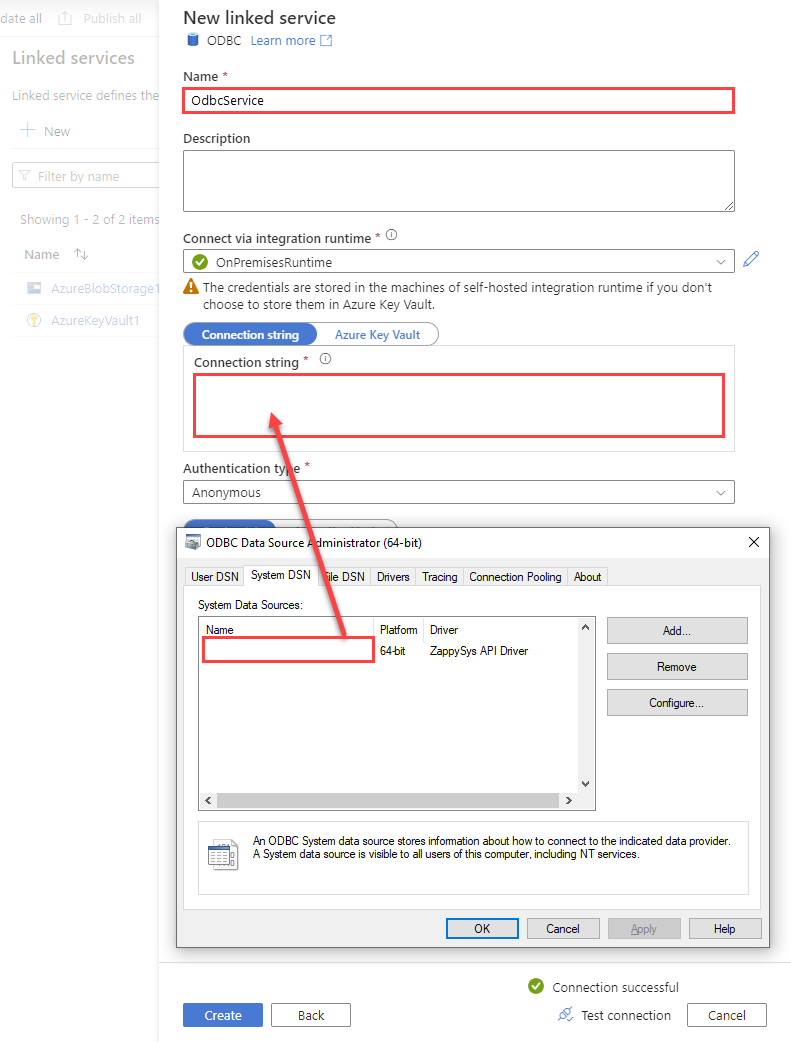

Configure the new ODBC service. Use the same DSN name we used in the previous step and enter it in the Connection string box:

DSN=RestApiDSN

-

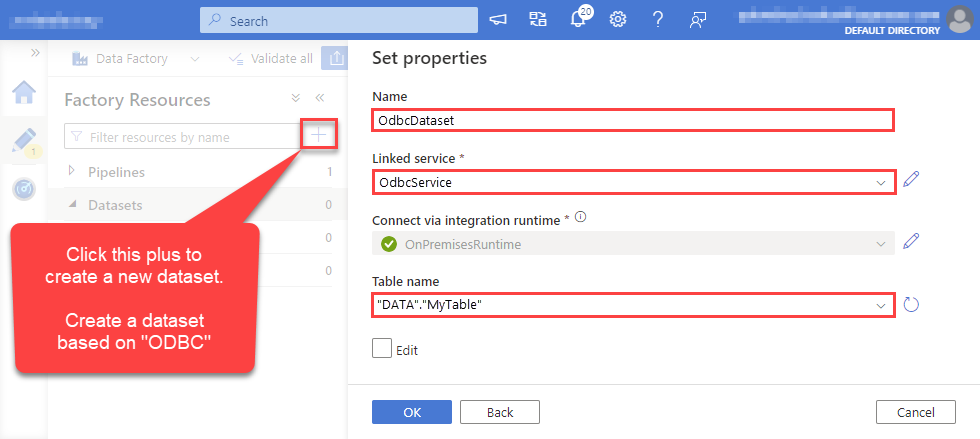

For the ODBC service you created, create an ODBC-based dataset:

-

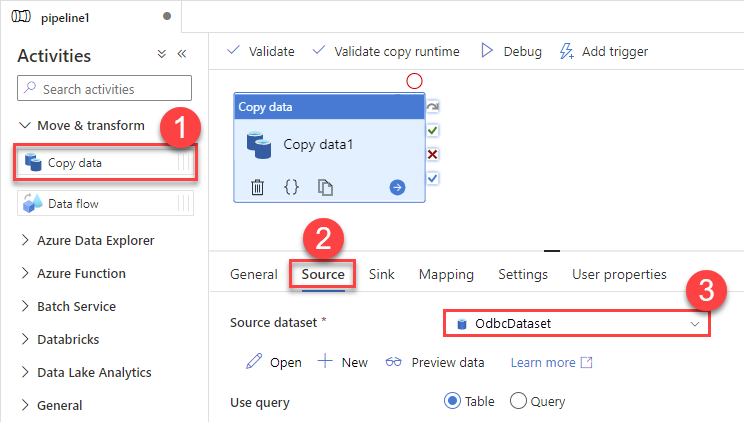

Go to your pipeline and add the Copy data activity to the flow. In the Source section, use the OdbcDataset we created as the source dataset:

-

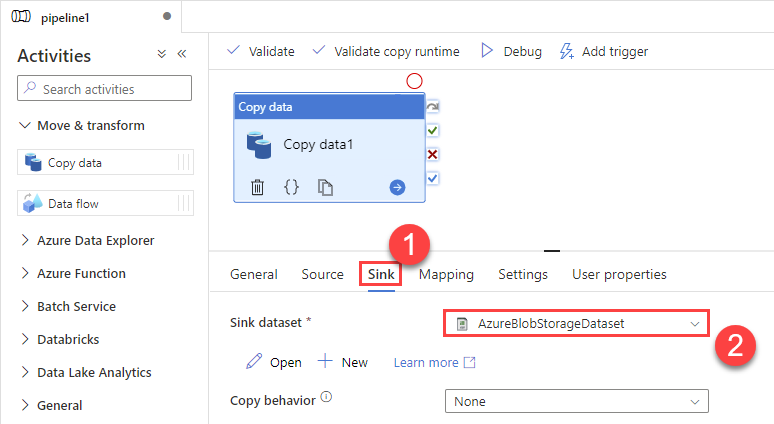

Then go to the Sink section and select a destination (sink) dataset. In this example we use a pre-created AzureBlobStorageDataset, which saves data to Azure Blob storage:

-

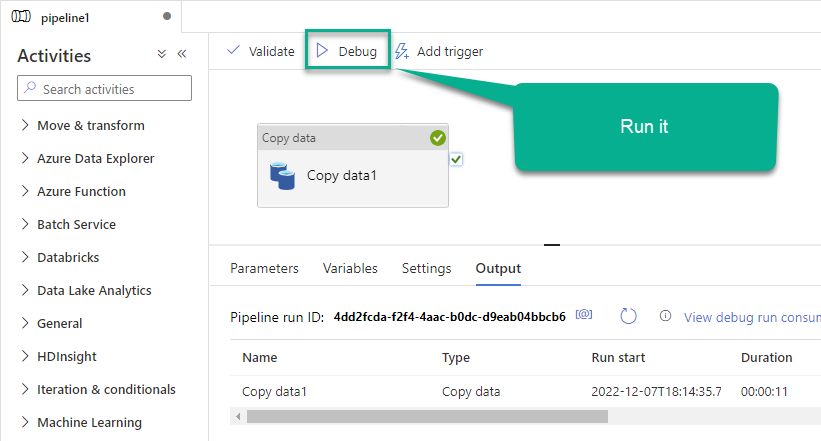

Finally, run the pipeline and see data being transferred from OdbcDataset to your destination dataset:
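The ODBC linked service configured in the steps above can also be represented as a JSON definition. The following Python sketch builds such a definition. Treat it as an assumption-laden illustration: the property names follow the ODBC connector format as I understand it from the Microsoft documentation, the linked service name OdbcLinkedService is hypothetical, and Anonymous authentication is assumed (a DSN that needs credentials would use a different authentication type). Verify the result against the JSON shown in your own Data Factory before relying on it.

```python
import json


def build_odbc_linked_service(name, dsn, runtime_name):
    """Build a dictionary resembling an ADF ODBC linked service definition.

    name:         hypothetical linked service name
    dsn:          ODBC data source name created earlier (e.g. RestApiDSN)
    runtime_name: self-hosted integration runtime (e.g. OnPremisesRuntime)
    """
    return {
        "name": name,
        "properties": {
            "type": "Odbc",
            "typeProperties": {
                "connectionString": f"DSN={dsn}",
                # Assumption: the DSN itself carries any API credentials.
                "authenticationType": "Anonymous",
            },
            "connectVia": {
                "referenceName": runtime_name,
                "type": "IntegrationRuntimeReference",
            },
        },
    }


linked_service = build_odbc_linked_service(
    "OdbcLinkedService", "RestApiDSN", "OnPremisesRuntime"
)
print(json.dumps(linked_service, indent=2))
```

The connectVia reference matters here because the DSN exists only on the machine where the self-hosted integration runtime is installed.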

Executing SQL queries using Lookup activity

If you need to execute commands in REST API instead of retrieving data, use the Lookup activity for that purpose. Use this approach when you want data to be changed on the REST API side, but you don't need the data on your side (a "fire-and-forget" scenario).

Perform these simple steps to accomplish that:

- Go to your pipeline in Azure Data Factory.

- Find the Lookup activity in the Activities pane.

- Drag and drop the Lookup activity onto your pipeline canvas.

- Click the Settings tab.

- Select OdbcDataset in the Source dataset field.

- Finally, enter your SQL query in the Query text box.
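As a rough illustration of the Lookup steps, the sketch below builds a dictionary resembling the JSON of a Lookup activity that runs a query against OdbcDataset. The activity name RunRestApiCommand is hypothetical, the property names reflect my understanding of the ADF activity format and should be checked against your pipeline's JSON view, and the query text is only a placeholder: the SQL accepted depends on the driver, so consult the ZappySys documentation for the exact syntax.

```python
import json


def build_lookup_activity(name, dataset_name, query, first_row_only=True):
    """Build a dictionary resembling an ADF Lookup activity that runs
    a query through an ODBC dataset."""
    return {
        "name": name,
        "type": "Lookup",
        "typeProperties": {
            "source": {"type": "OdbcSource", "query": query},
            "dataset": {
                "referenceName": dataset_name,
                "type": "DatasetReference",
            },
            "firstRowOnly": first_row_only,
        },
    }


# Placeholder query; replace it with a statement your driver supports.
PLACEHOLDER_QUERY = "SELECT * FROM $"

activity = build_lookup_activity(
    "RunRestApiCommand", "OdbcDataset", PLACEHOLDER_QUERY
)
print(json.dumps(activity, indent=2))
```

Keeping firstRowOnly enabled fits the fire-and-forget case, since the returned data is not needed.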

Optional: Centralized data access via ZappySys Data Gateway

In some situations, you may need to provide REST API data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

- Centralized data access: The data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies the management process.

- Centralized access control: Since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | | |
| Central access control | | |
| More flexible cost | | |

To achieve this, you must first create a data source in the Data Gateway (server-side) and then create an ODBC data source in Azure Data Factory (Pipeline) (client-side) to connect to it.

Let's not wait and get going!

Create REST API data source in the gateway

In this section we will create a data source for REST API in the Data Gateway. Let's follow these steps to accomplish that:

-

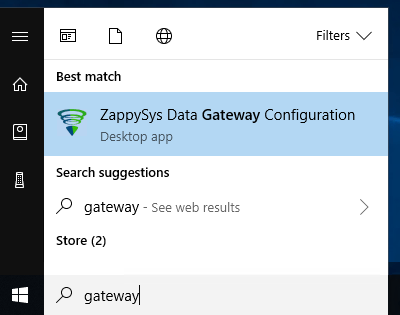

Search for gateway in the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

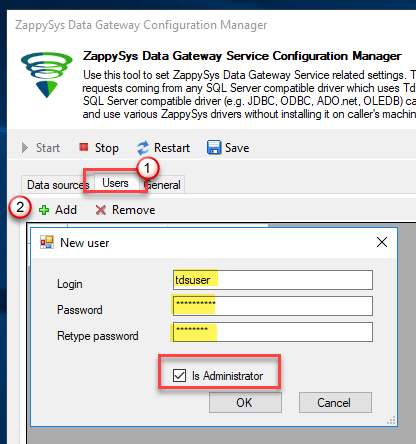

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

- In the Login field enter a username, e.g., john

- Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

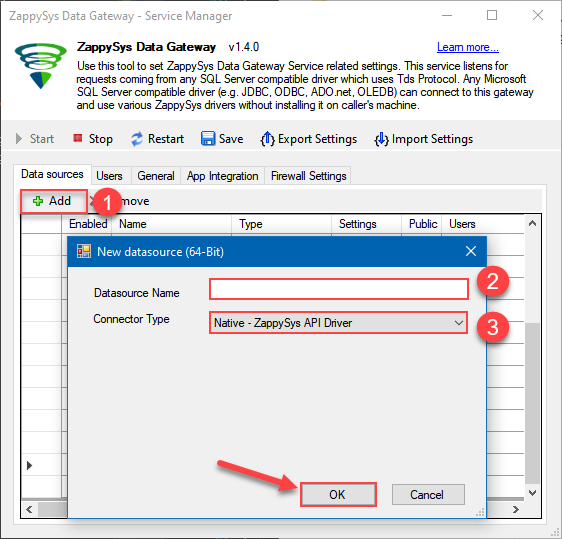

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys JSON, XML or CSV Driver

- Finally, click OK

In this example, the data source is named RestApiDSN and uses the ZappySys JSON, XML or CSV Driver.

-

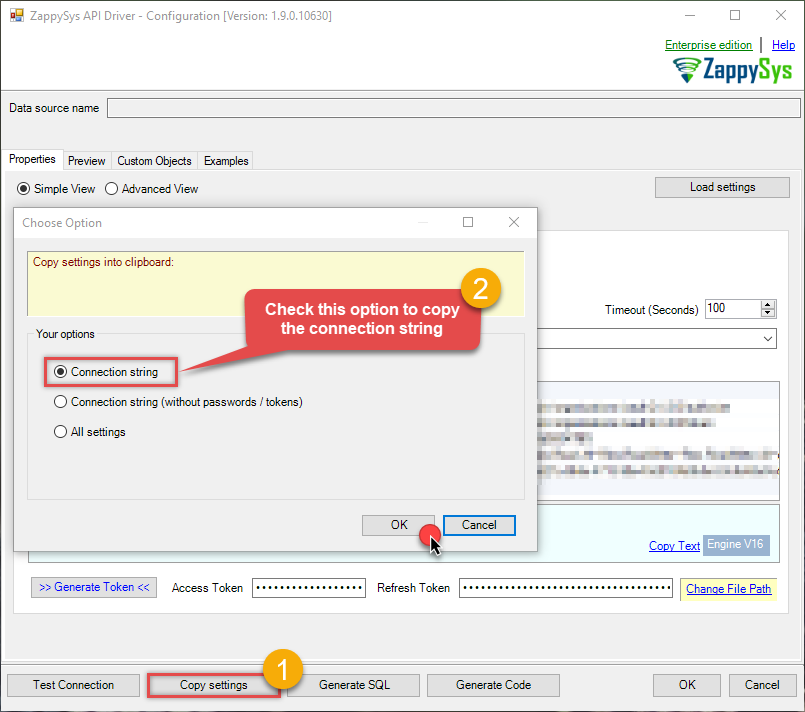

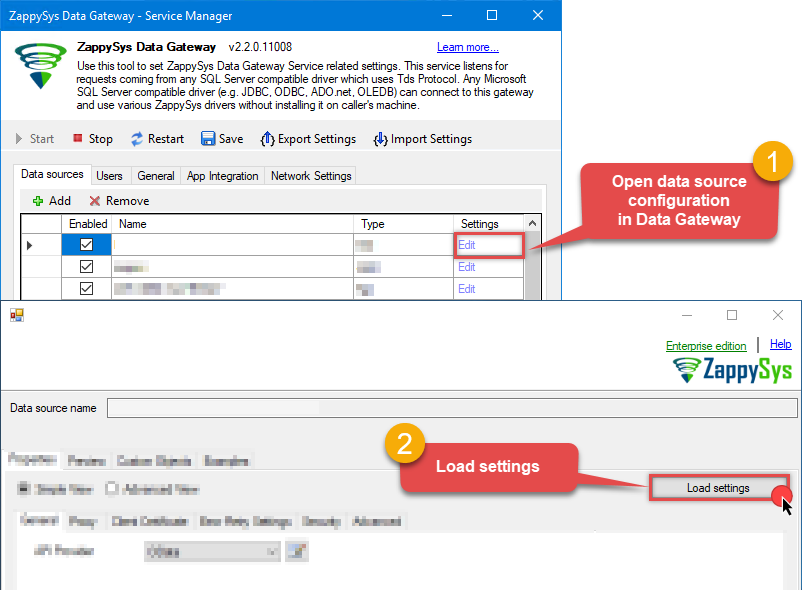

When the ZappySys JSON, XML or CSV Driver configuration window opens, go back to ODBC Data Source Administrator, where you already have the REST API ODBC data source created and configured, and follow these steps to import the data source configuration into the Gateway:

-

Open ODBC data source configuration and click Copy settings:


-

A window opens, confirming that the connection string was successfully copied to the clipboard:

-

Then go to Data Gateway configuration and in data source configuration window click Load settings:


-

Once a window opens, paste the settings by pressing CTRL+V or by right-clicking and choosing Paste.


-

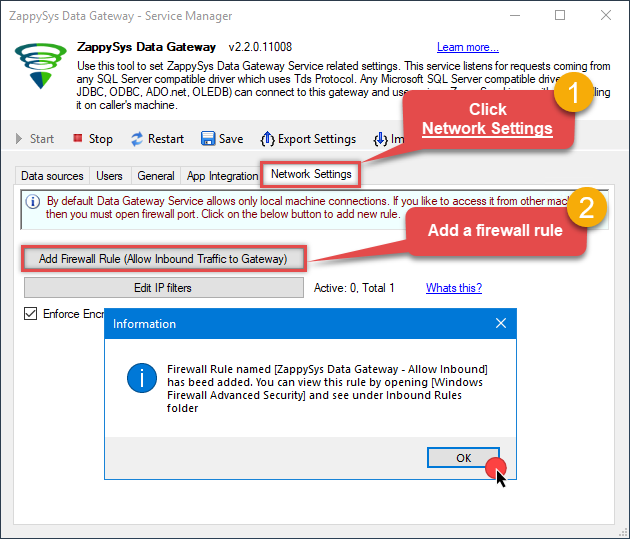

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

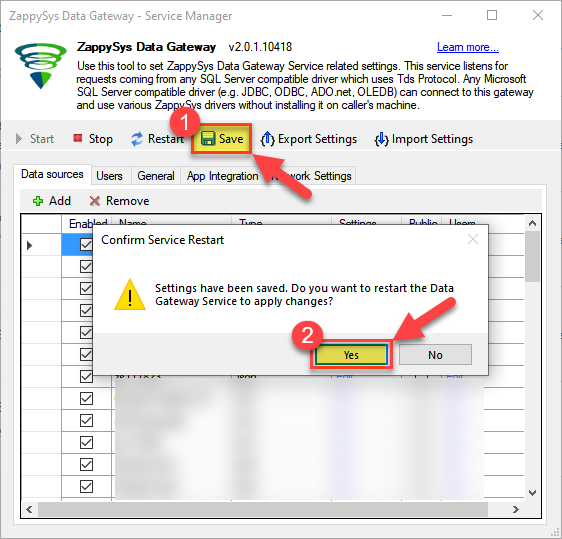

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.


Create ODBC data source to connect to the gateway

In this part we will create an ODBC data source to connect to the ZappySys Data Gateway from Azure Data Factory (Pipeline). To achieve that, let's perform these steps:

-

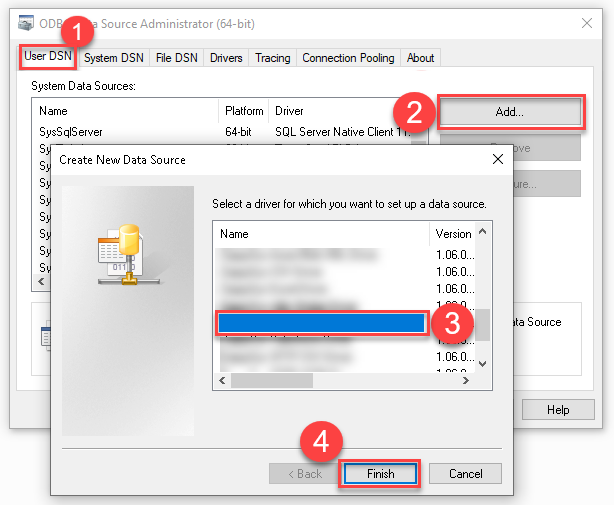

Search for odbc and open the ODBC Data Sources (64-bit):

-

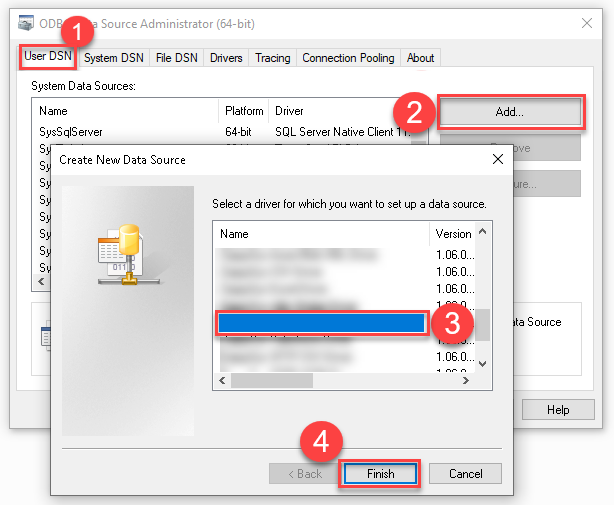

Create a User data source (User DSN) based on the ODBC Driver 17 for SQL Server:

If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version.

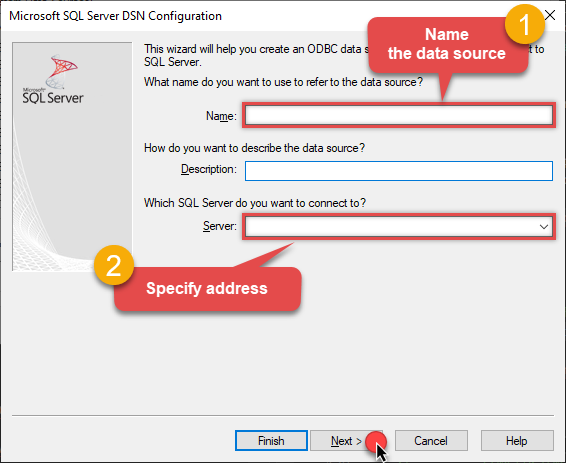

Then set a Name for the data source (this example uses ZappySysGatewayDSN) and the address of the Data Gateway (here, localhost,5000):

Make sure you separate the hostname and port with a comma, e.g., localhost,5000.

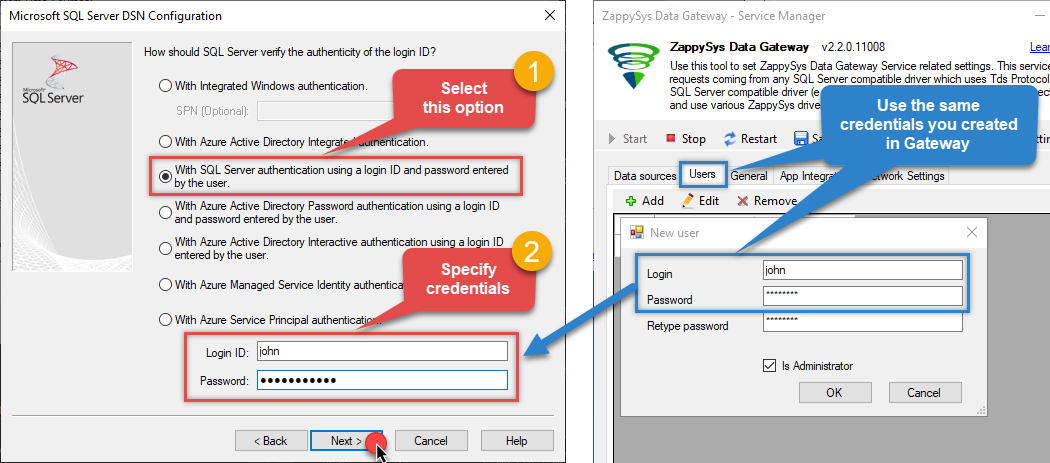

Proceed with the authentication part:

- Select SQL Server authentication

- In the Login ID field enter the user name you created in the Data Gateway, e.g., john

- Set Password to the one you configured in the Data Gateway

-

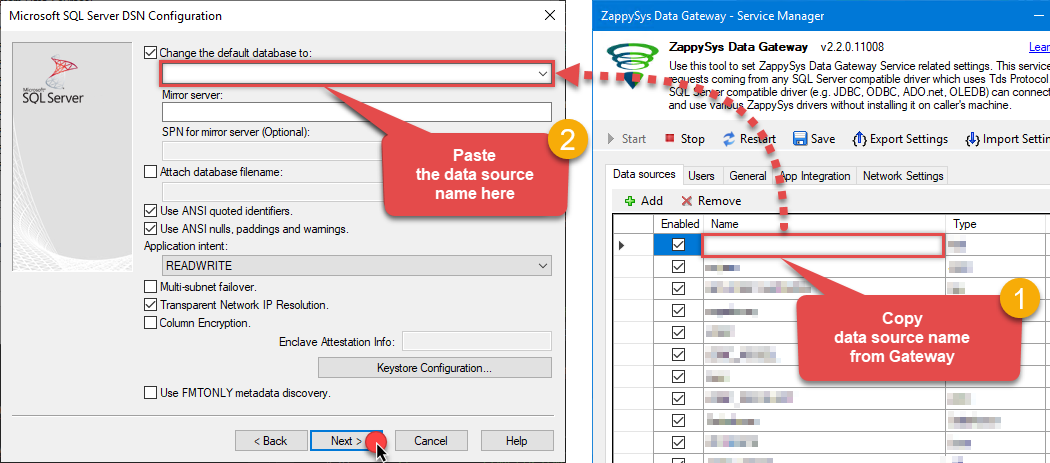

Then set the default database property to RestApiDSN (the one we used in the Data Gateway):

Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step).

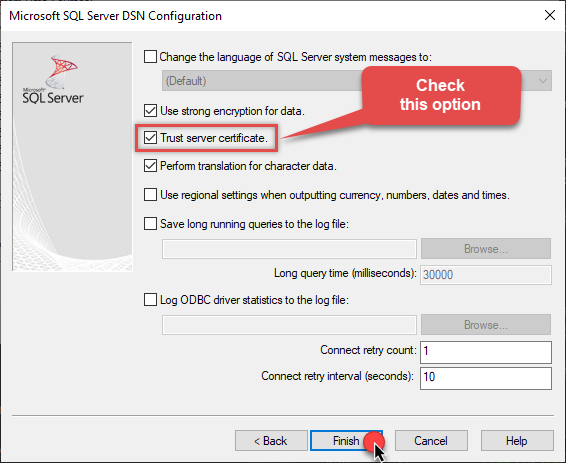

Continue by checking the Trust server certificate option:

-

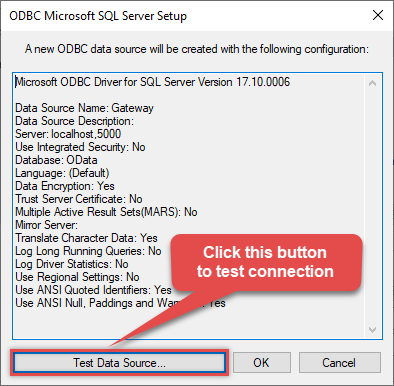

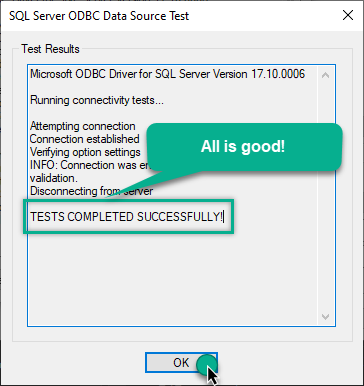

Once you do that, test the connection:

-

If the connection is successful, everything is good:

-

Done!

We are ready to move to the final step. Let's do it!
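The settings entered in the DSN wizard above correspond to standard keywords of the SQL Server ODBC driver. As a sketch, the same settings could be written as a DSN-less connection string. This is an alternative shown for illustration only (the article itself uses a DSN), the helper build_gateway_connection_string is hypothetical, and the password value is a placeholder.

```python
def build_gateway_connection_string(
    host, port, data_source, user, password,
    driver="ODBC Driver 17 for SQL Server",
):
    """Assemble an ODBC connection string for the Data Gateway.

    The hostname and port are joined with a comma, not a colon,
    as required by the SQL Server ODBC driver.
    """
    parts = [
        ("DRIVER", "{" + driver + "}"),
        ("SERVER", f"{host},{port}"),
        ("DATABASE", data_source),          # gateway data source name
        ("UID", user),                      # Data Gateway user
        ("PWD", password),                  # placeholder, never hard-code
        ("TrustServerCertificate", "yes"),  # matches the wizard checkbox
    ]
    return ";".join(f"{key}={value}" for key, value in parts)


connection_string = build_gateway_connection_string(
    "localhost", 5000, "RestApiDSN", "john", "your-password"
)
print(connection_string)
```

A password that contains a semicolon or a closing brace would need escaping, which this sketch does not handle.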

Access data in Azure Data Factory (Pipeline) via the gateway

Finally, we are ready to read data from REST API in Azure Data Factory (Pipeline) via the Data Gateway. Follow these final steps:

-

Go back to Azure Data Factory (Pipeline).

-

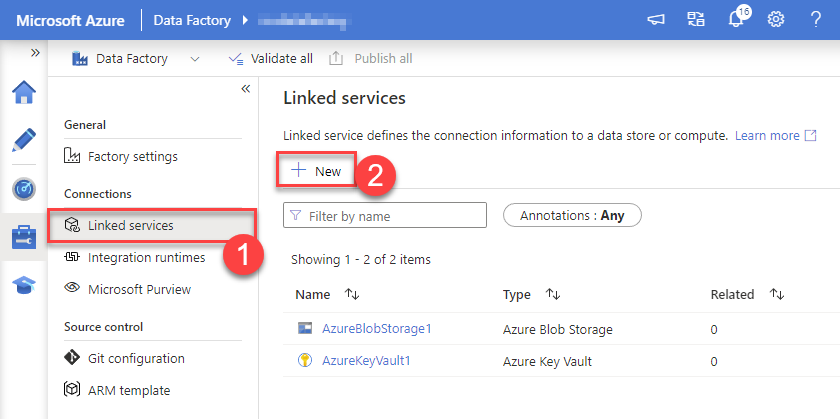

Create a New Linked service:

In the Manage section (left menu), under Connections, select Linked services.

Click + New to create a new Linked service based on ODBC.

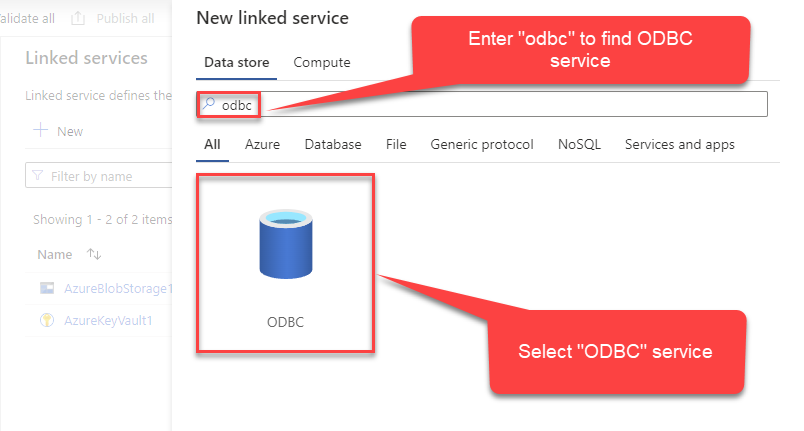

-

Select ODBC service:

-

Configure the new ODBC service. Use the same DSN name we used in the previous step and enter it in the Connection string box:

DSN=ZappySysGatewayDSN

-

Read the data the same way we discussed at the beginning of this article.

-

That's it!

Now you can connect to REST API data in Azure Data Factory (Pipeline) via the Data Gateway.

When prompted for credentials, use the Data Gateway user you created earlier (e.g., john) and its password.

Configuring pagination in the REST API Driver

The ZappySys REST API Driver supports several pagination methods for extracting data from REST APIs. These options handle the pagination structures most commonly used by APIs:

Page-based Pagination: This method retrieves data in fixed-size pages from the REST API. You specify the page size and walk through the results by requesting successive page numbers until all the data has been read.

Offset-based Pagination: With this approach, you can extract data by specifying the starting point or offset from which to begin retrieving data. It allows you to define the number of records to skip and fetch subsequent data accordingly, providing precise control over the data extraction process.

Cursor-based Pagination: This technique involves using a cursor or a marker that points to a specific position in the dataset. It enables you to retrieve data starting from the position indicated by the cursor and proceed to subsequent segments, ensuring that you capture all the relevant information without missing any records.

Token-based Pagination: In this method, a token serves as a unique identifier for a specific data segment. It allows you to access the next set of data by using the token provided in the response from the previous request. This ensures that you can systematically retrieve all the data segments without duplication or omission.

Utilizing these comprehensive pagination features in the ZappySys REST API Driver facilitates efficient data management and extraction from REST APIs, optimizing the integration and analysis of extensive datasets.

For more detailed steps, please refer to this link: How to do REST API Pagination in SSIS / ODBC Drivers
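To illustrate how the pagination styles described above differ, here is a small Python sketch that reads the same data set three ways. It runs against an in-memory list instead of a real API; the functions fetch_page, fetch_offset, and fetch_cursor are hypothetical stand-ins for HTTP requests, and none of this reflects how the ZappySys driver is implemented internally. Token-based pagination follows the same loop as the cursor example, with the token taken from the previous response.

```python
RECORDS = [{"id": i} for i in range(1, 8)]  # seven fake records


def fetch_page(page, page_size):
    """Page-based: the client asks for page 1, 2, 3, ..."""
    start = (page - 1) * page_size
    return RECORDS[start:start + page_size]


def fetch_offset(offset, limit):
    """Offset-based: the client says how many records to skip."""
    return RECORDS[offset:offset + limit]


def fetch_cursor(cursor, limit):
    """Cursor-based: the server returns a marker for the next request,
    or None when there is nothing left."""
    start = 0 if cursor is None else cursor
    chunk = RECORDS[start:start + limit]
    next_start = start + len(chunk)
    next_cursor = next_start if next_start < len(RECORDS) else None
    return chunk, next_cursor


def read_all_by_page(page_size=3):
    rows, page = [], 1
    while True:
        chunk = fetch_page(page, page_size)
        if not chunk:  # an empty page means we are done
            break
        rows.extend(chunk)
        page += 1
    return rows


def read_all_by_offset(limit=3):
    rows, offset = [], 0
    while True:
        chunk = fetch_offset(offset, limit)
        if not chunk:
            break
        rows.extend(chunk)
        offset += len(chunk)  # skip what we have already read
    return rows


def read_all_by_cursor(limit=3):
    rows, cursor = [], None
    while True:
        chunk, cursor = fetch_cursor(cursor, limit)
        rows.extend(chunk)
        if cursor is None:  # the server signals the end
            break
    return rows


print(len(read_all_by_page()), len(read_all_by_offset()), len(read_all_by_cursor()))
```

The main design difference is who tracks the position: the client does in the page and offset styles, while the server does in the cursor and token styles.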

Authentication

ZappySys offers various authentication methods for securely accessing data from a wide range of sources, tailored to the requirements of different data platforms and services. These methods include:

OAuth: ZappySys supports OAuth for authentication, which allows users to grant limited access to their data without revealing their credentials. It's commonly used for applications that require access to user account information.

Basic Authentication: This method involves sending a username and password with every request. ZappySys allows users to securely access data using this traditional authentication approach.

Token-based Authentication: ZappySys enables users to utilize tokens for authentication. This method involves sending a token with each request to authenticate the user without transmitting the underlying credentials.

By implementing these authentication methods, ZappySys ensures the secure and reliable retrieval of data from various sources, providing users with the necessary tools to access and integrate data securely and efficiently. For more comprehensive details on the authentication process, please refer to the official ZappySys documentation or reach out to their support team for further assistance.

For more details, please refer to this link: ZappySys Connections
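To show what Basic and token-based authentication look like at the HTTP level, the following Python sketch builds the Authorization header for each. The helper names and the credential values are hypothetical, and the Bearer scheme is assumed for the token example because it is the most common one; some APIs expect the token in a different header or format. In the driver these values are configured in the connection settings and do not need to be hand-built.

```python
import base64


def basic_auth_header(username, password):
    """Basic authentication: base64-encode "username:password"."""
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def bearer_auth_header(token):
    """Token-based authentication using the Bearer scheme (assumed)."""
    return {"Authorization": f"Bearer {token}"}


basic = basic_auth_header("john", "secret")        # placeholder credentials
bearer = bearer_auth_header("example-token-123")   # placeholder token
print(basic["Authorization"].split()[0])
print(bearer["Authorization"].split()[0])
```

Base64 is an encoding, not encryption, so Basic authentication should only be sent over HTTPS.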

Conclusion

In this article we showed you how to connect to REST API in Azure Data Factory (Pipeline) and integrate data without writing complex code — all of this was powered by REST API ODBC Driver.

Download ODBC PowerPack now or ping us via chat if you have any questions or are looking for a specific feature (you can also reach out to us by submitting a ticket).