Introduction

You can connect to your Custom API data from Azure Data Factory (Pipeline) via the high-performance Custom API ODBC Driver. We'll walk you through the entire setup.

Let's not waste time and get started!

Create Custom API Connector

First of all, you will have to create your own API connector.

For demonstration purposes, in this section we will create a simple Hello-World API connector that calls the ZappySys Sandbox World API endpoint https://sandbox.zappysys.com/api/world/hello.

When developing your Custom API Connector, just replace it with your real API method/endpoint.

Let's dive in and follow these steps:

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

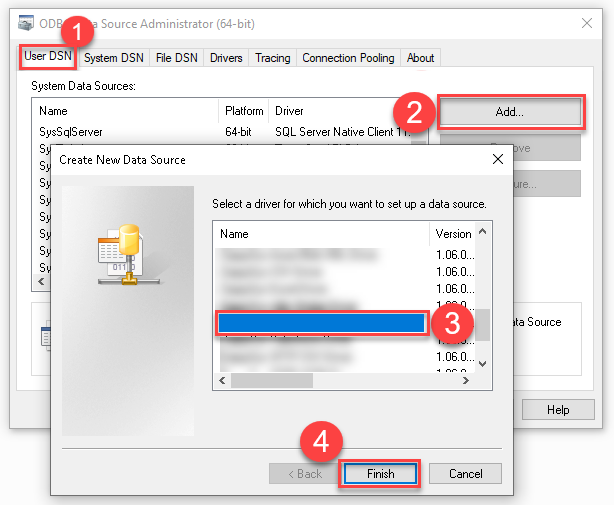

Create a User data source (User DSN) based on the ZappySys JSON Driver driver:

ZappySys JSON Driver

-

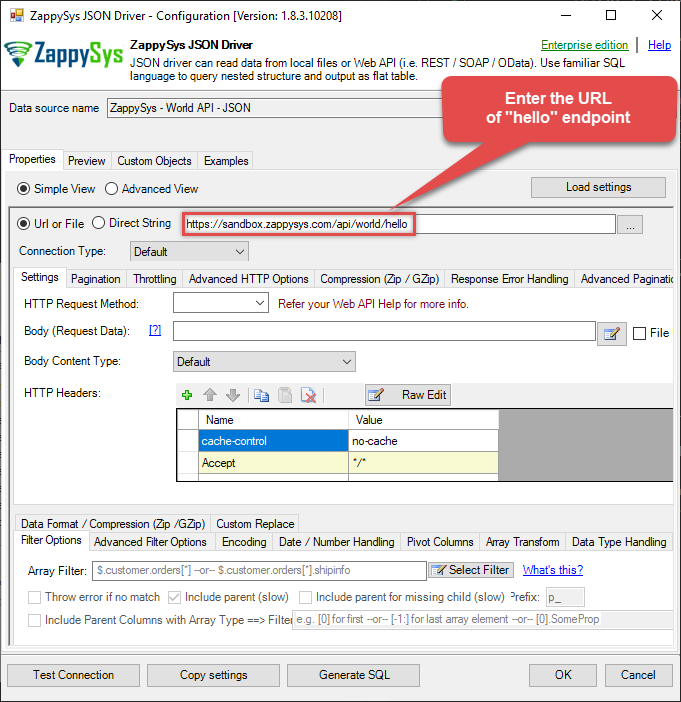

Once the data source configuration window opens, enter this URL into the text box:

https://sandbox.zappysys.com/api/world/hello

-

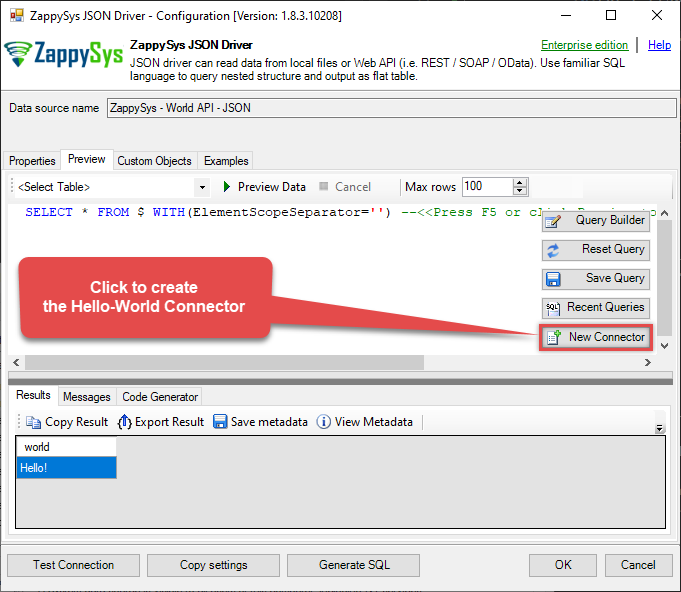

Then go to the Preview tab and try to say "Hello!" to the World!

-

Since the test is successful, you are ready to create the Hello-World Connector:

-

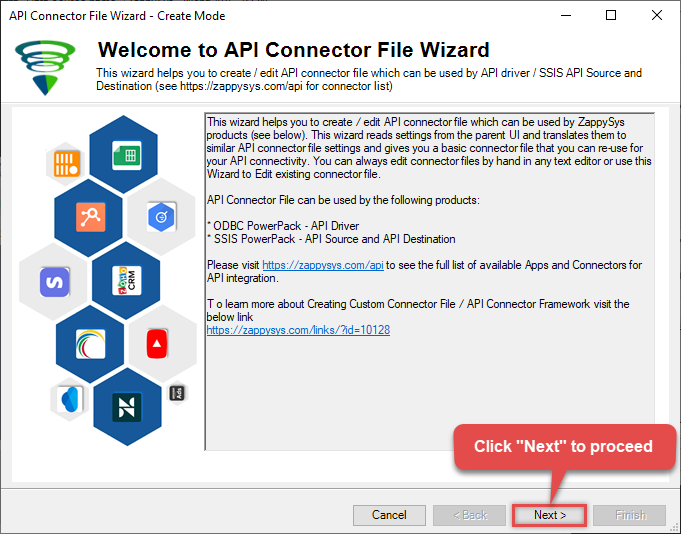

The API Connector File Wizard opens, click Next:

-

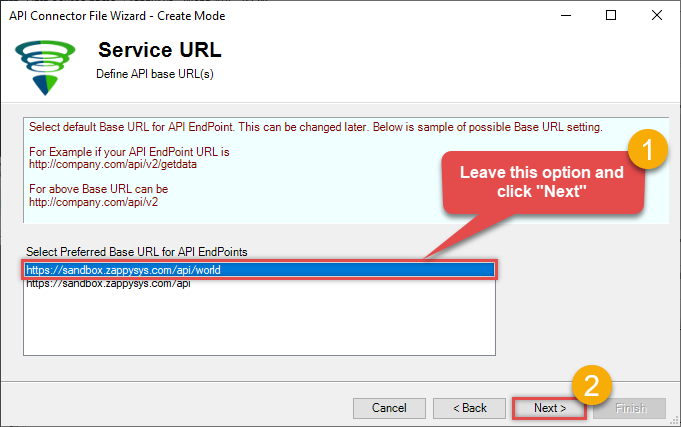

Leave the default option, and click Next again:

-

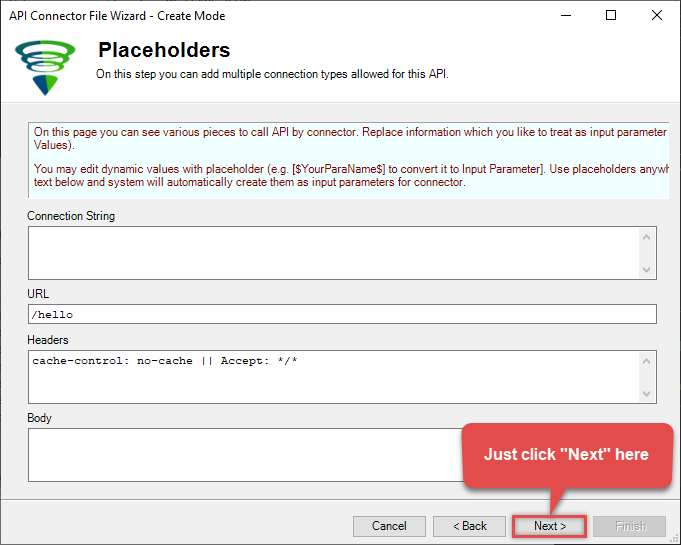

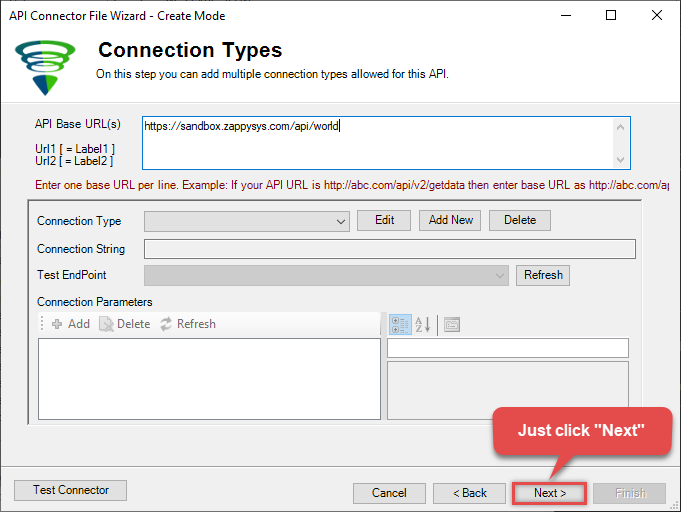

Just click Next in the next window:

-

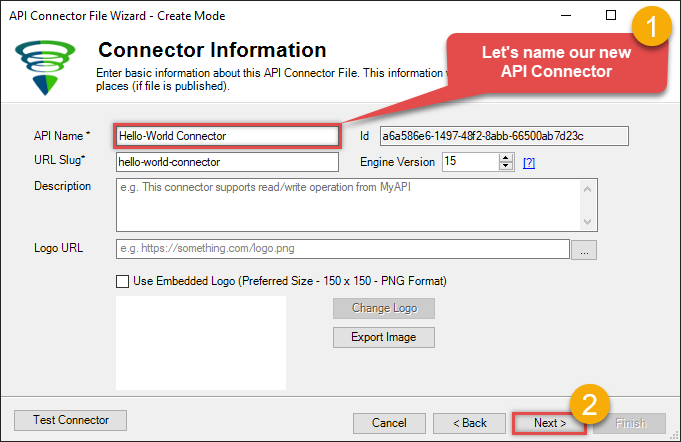

Let's give our new custom connector a name it deserves:

-

Then just click Next in the Connection Types window:

-

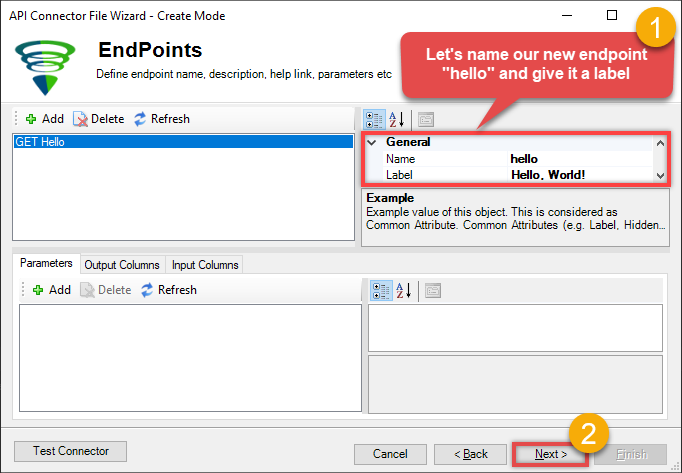

Let's name the hello endpoint (it deserves a name too!):

-

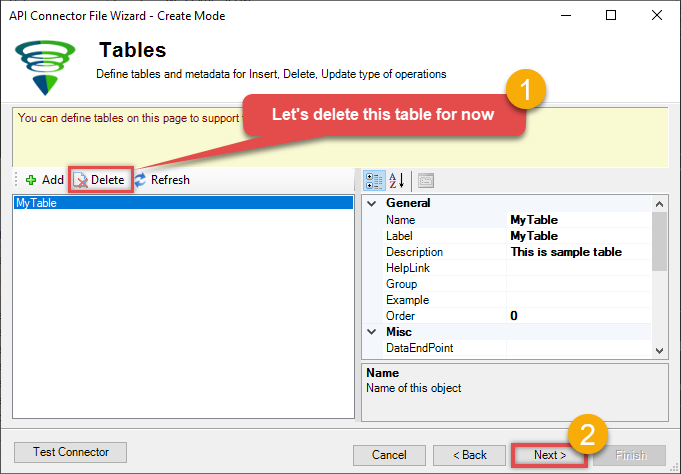

When the next window opens, delete the default table (we won't need it for now):

-

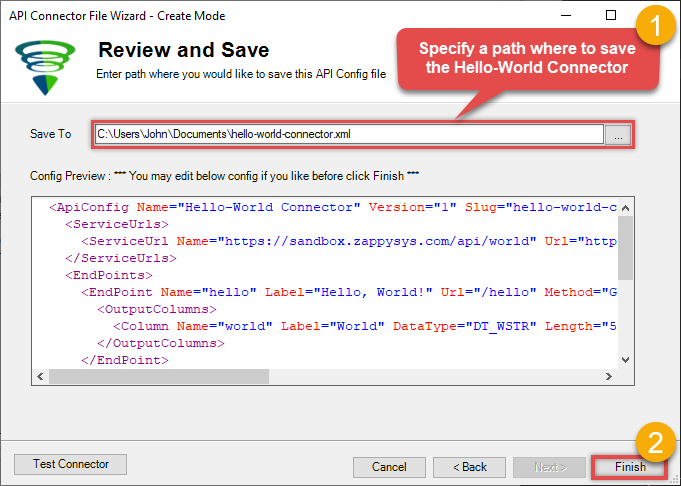

Finally, specify a path, where you want to save the newly created API Connector:
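Before or after building the connector, it can be useful to confirm that the sandbox endpoint responds outside the ODBC tooling. The following is a minimal sketch, assuming the endpoint returns a UTF-8 JSON body (the response shape is not documented in this guide, so the payload is printed as-is). The helper names `parse_hello` and `say_hello` are made up for illustration.

```python
import json
from urllib.request import urlopen

# Sandbox endpoint used throughout this section.
HELLO_URL = "https://sandbox.zappysys.com/api/world/hello"


def parse_hello(body: bytes):
    """Decode a response body, assuming the endpoint returns UTF-8 JSON."""
    return json.loads(body.decode("utf-8"))


def say_hello(url: str = HELLO_URL, timeout: float = 10.0):
    """Send a GET request to the endpoint and return the parsed payload."""
    with urlopen(url, timeout=timeout) as response:
        return parse_hello(response.read())


if __name__ == "__main__":
    # Requires network access; prints whatever the sandbox returns.
    print(say_hello())
```

When you move to a real API, swap `HELLO_URL` for your own endpoint, in the same way you would replace it in the connector.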

Create data source using Custom API ODBC Driver

Video instructions

Watch this quick walkthrough to see how to configure your Custom API ODBC data source, or scroll down for the step-by-step written guide.

Step-by-step instructions

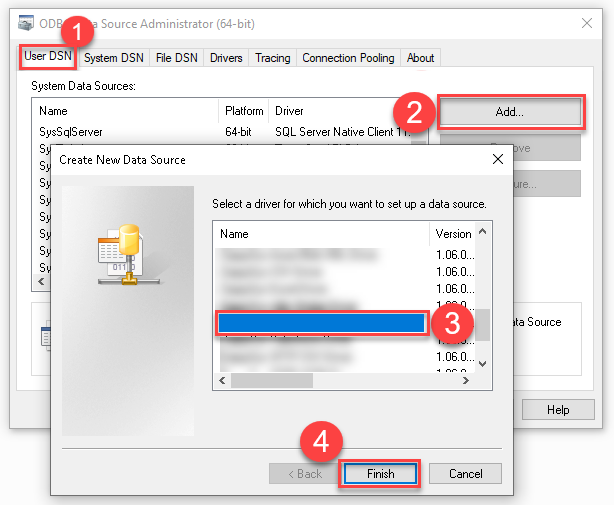

To get data from Custom API using Azure Data Factory (Pipeline), we first need to create an ODBC data source. We will later read this data in Azure Data Factory (Pipeline). Perform these steps:

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

Create a User data source (User DSN) based on the ZappySys API Driver driver:

ZappySys API Driver

-

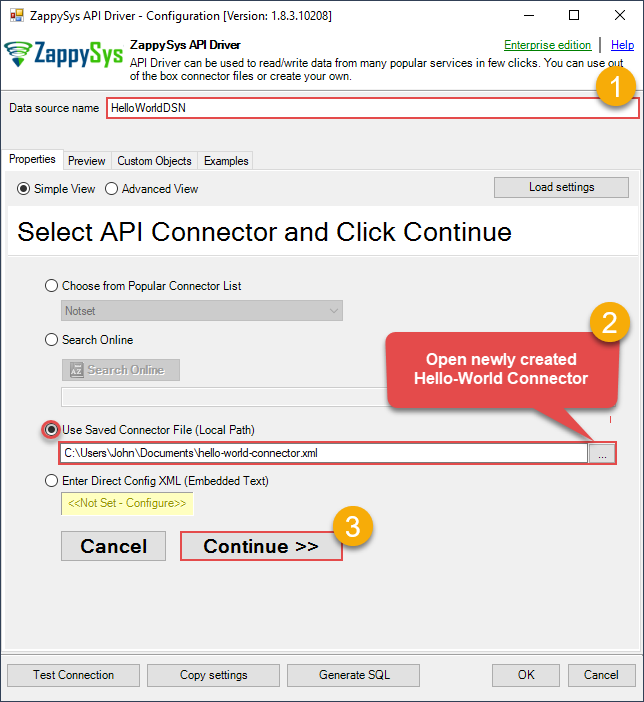

When the Configuration window appears give your data source a name if you haven't done that already. Then set the path to your created Custom API Connector (in the example below, we use Hello-World Connector). Finally, click Continue >> to proceed:

-

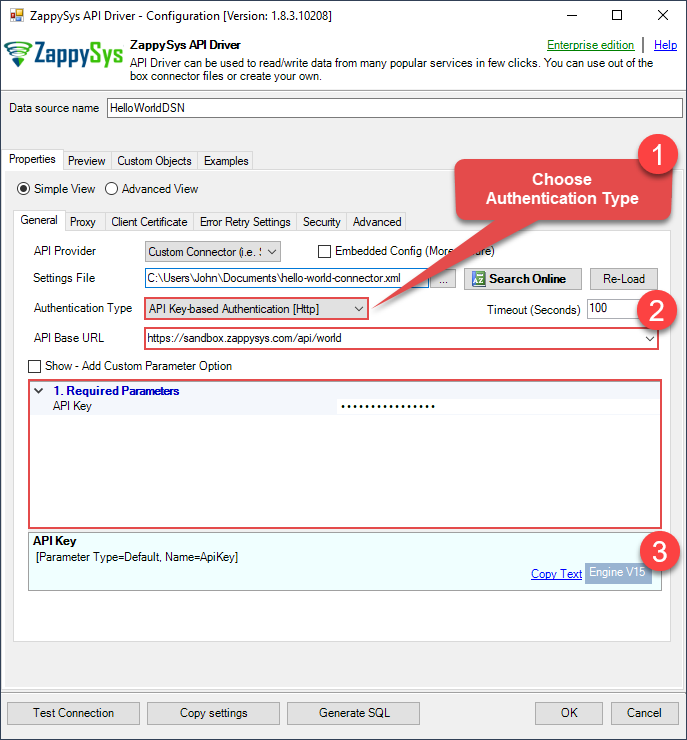

Now it's time to configure the Connection Manager. Select Authentication Type, e.g. Token Authentication. Then select API Base URL (in most cases, the default one is the right one). Check your Custom API reference for more information on how to authenticate.

-

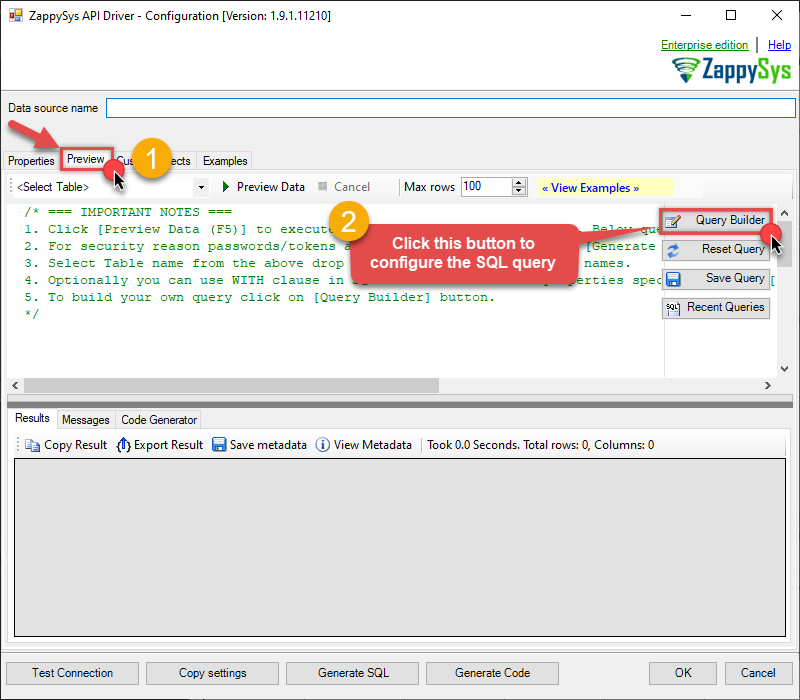

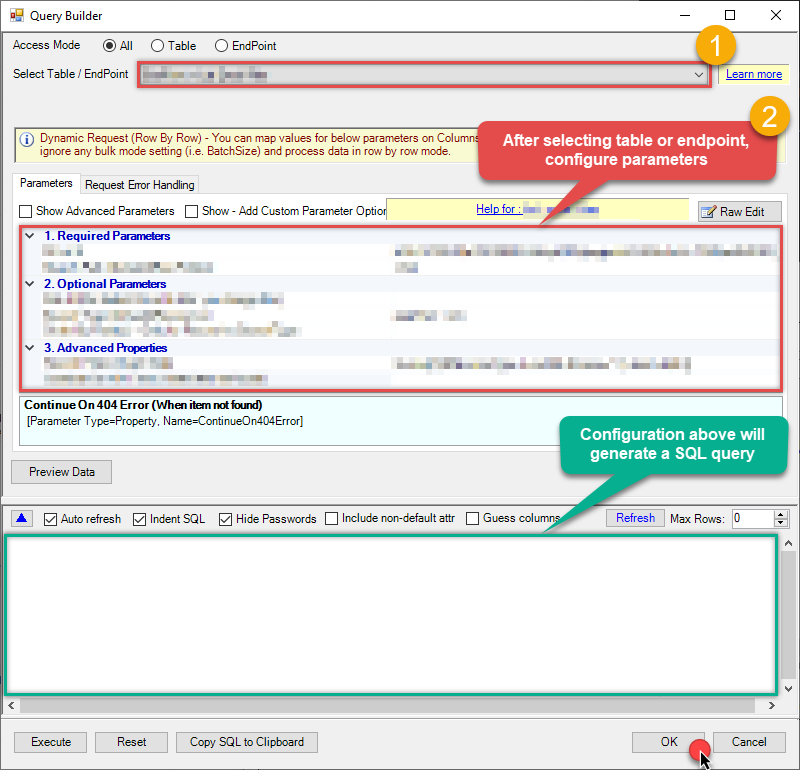

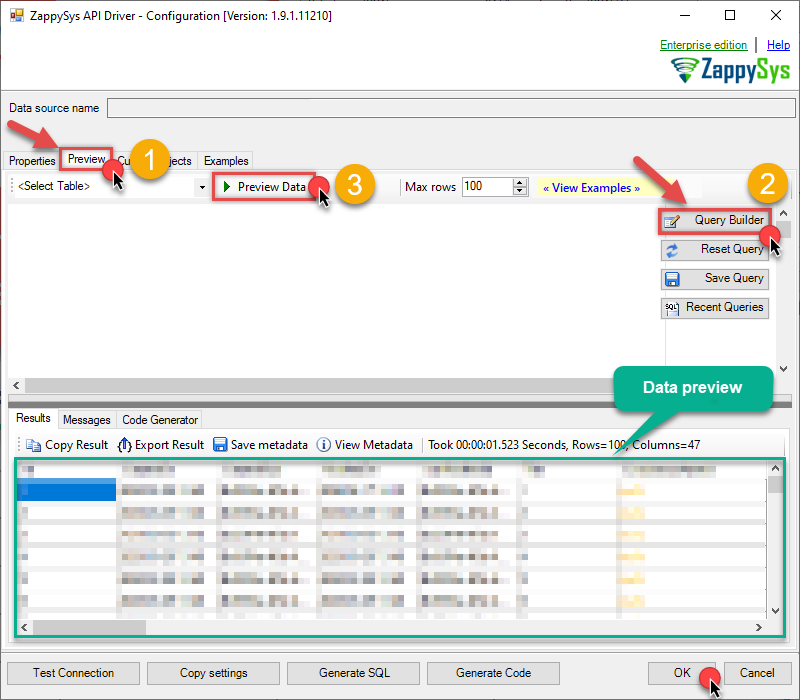

Once the data source connection has been configured, it's time to configure the SQL query. Select the Preview tab and then click Query Builder button to configure the SQL query:


- Start by selecting the Table or Endpoint you are interested in and then configure the parameters. This will generate a query that we will use in Azure Data Factory (Pipeline) to retrieve data from Custom API. Hit the OK button to use this query in the next step.

SELECT * FROM Orders

Some parameters configured in this window will be passed to the Custom API, e.g. filtering parameters. It means that filtering will be done on the server side (instead of the client side), enabling you to get only the meaningful data much faster.

- Now hit the Preview Data button to preview the data using the generated SQL query. If you are satisfied with the result, use this query in Azure Data Factory (Pipeline):

SELECT * FROM Orders

You can also access data quickly from the tables dropdown by selecting <Select table>.

A WHERE clause or LIMIT keyword is applied on the client side, meaning that the whole result set is retrieved from the Custom API first, and only then is the filtering applied to the data. If possible, use parameters in Query Builder to filter the data on the server side (in Custom API servers).

Click OK to finish creating the data source.
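The difference between server-side and client-side filtering described in the steps above can be illustrated with a small simulation. This is only a sketch: `REMOTE_ORDERS`, the `Country` column, and the `fetch_orders` function are hypothetical stand-ins for a remote API, not part of the driver.

```python
from typing import Dict, List, Optional, Tuple

Row = Dict[str, object]

# Hypothetical in-memory stand-in for the remote API's Orders endpoint.
REMOTE_ORDERS: List[Row] = [
    {"OrderId": 1, "Country": "US"},
    {"OrderId": 2, "Country": "DE"},
    {"OrderId": 3, "Country": "US"},
]


def fetch_orders(country: Optional[str] = None) -> List[Row]:
    """Simulate an API call; `country` plays the role of a server-side filter parameter."""
    if country is None:
        return list(REMOTE_ORDERS)
    return [row for row in REMOTE_ORDERS if row["Country"] == country]


def client_side_filter(country: str) -> Tuple[List[Row], int]:
    """Transfer every row, then filter locally (like a WHERE clause applied by the client)."""
    transferred = fetch_orders()
    kept = [row for row in transferred if row["Country"] == country]
    return kept, len(transferred)


def server_side_filter(country: str) -> Tuple[List[Row], int]:
    """Pass the filter as a parameter so only matching rows are transferred."""
    transferred = fetch_orders(country=country)
    return transferred, len(transferred)


if __name__ == "__main__":
    print(client_side_filter("US"))
    print(server_side_filter("US"))
```

Both functions return the same rows, but the second number in each result (rows transferred) differs, which is where the speed difference comes from on large result sets.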

Read data in Azure Data Factory (ADF) from ODBC datasource (Custom API)

-

Sign in to Azure Portal

-

Open your browser and go to: https://portal.azure.com

-

Enter your Azure credentials and complete MFA if required.

-

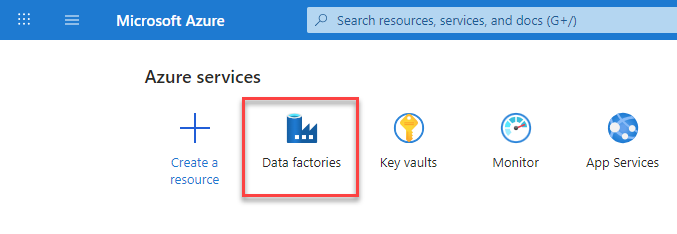

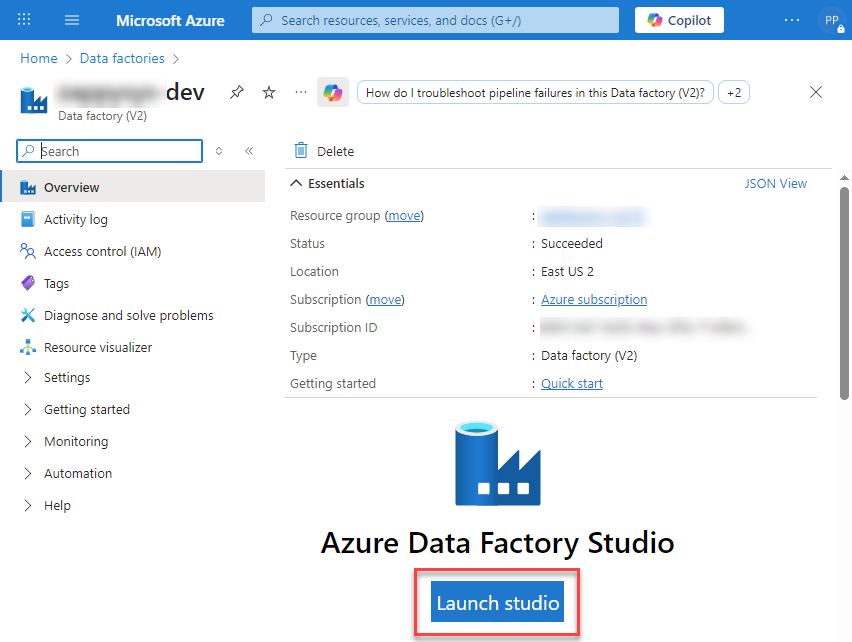

After login, go to Data factories.

-

-

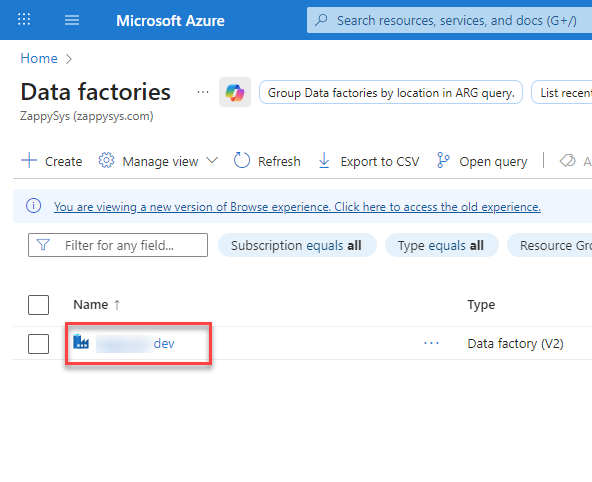

Under Azure Data Factory Resource - Create or select the Data Factory you want to work with.

-

Inside the Data Factory resource page, click Launch studio.

-

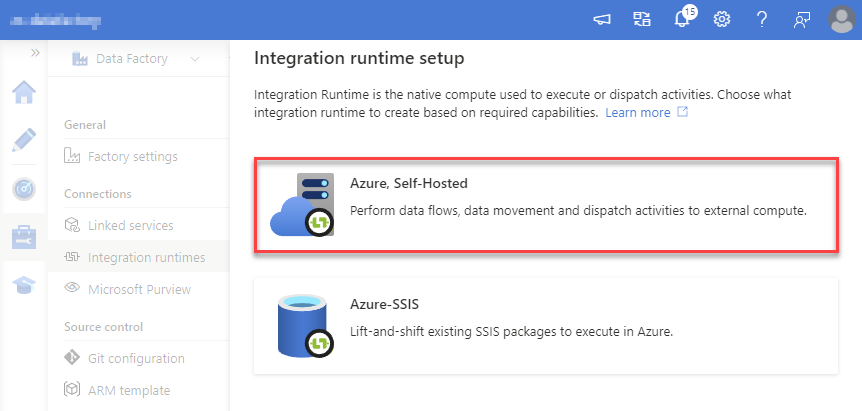

Create a New Integration Runtime (Self-Hosted):

In Azure Data Factory Studio, go to the Manage section (left menu).

Under Connections, select Integration runtimes.

Click + New to create a new integration runtime.

-

Select Azure, Self-Hosted option:

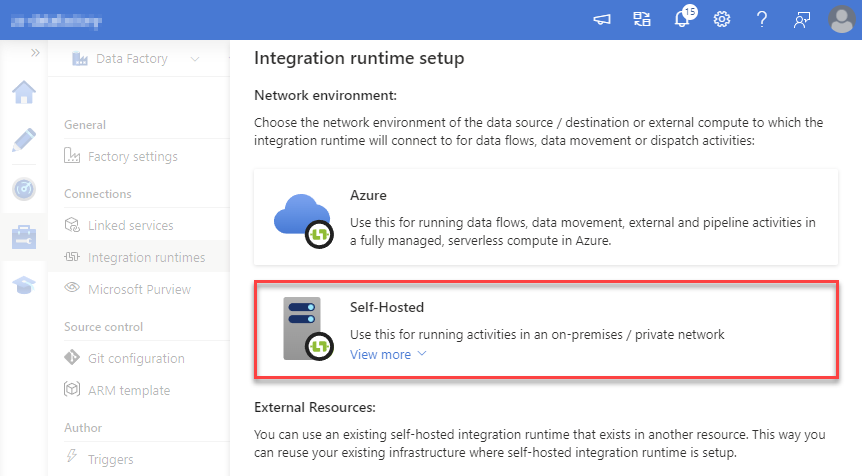

-

Select Self-Hosted option:

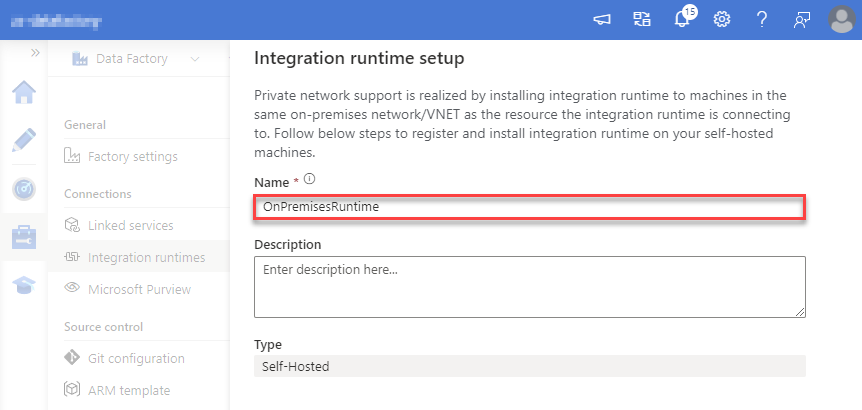

-

Set a name, we will use OnPremisesRuntime:

-

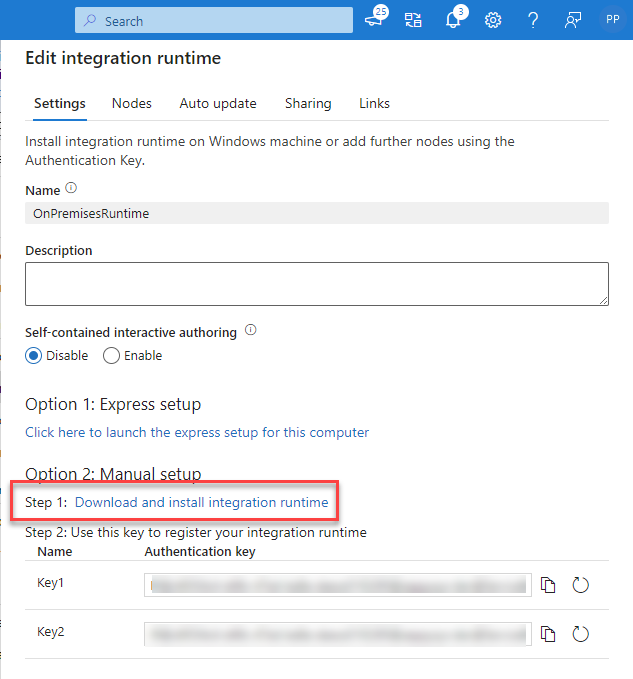

Download and install Microsoft Integration Runtime.

-

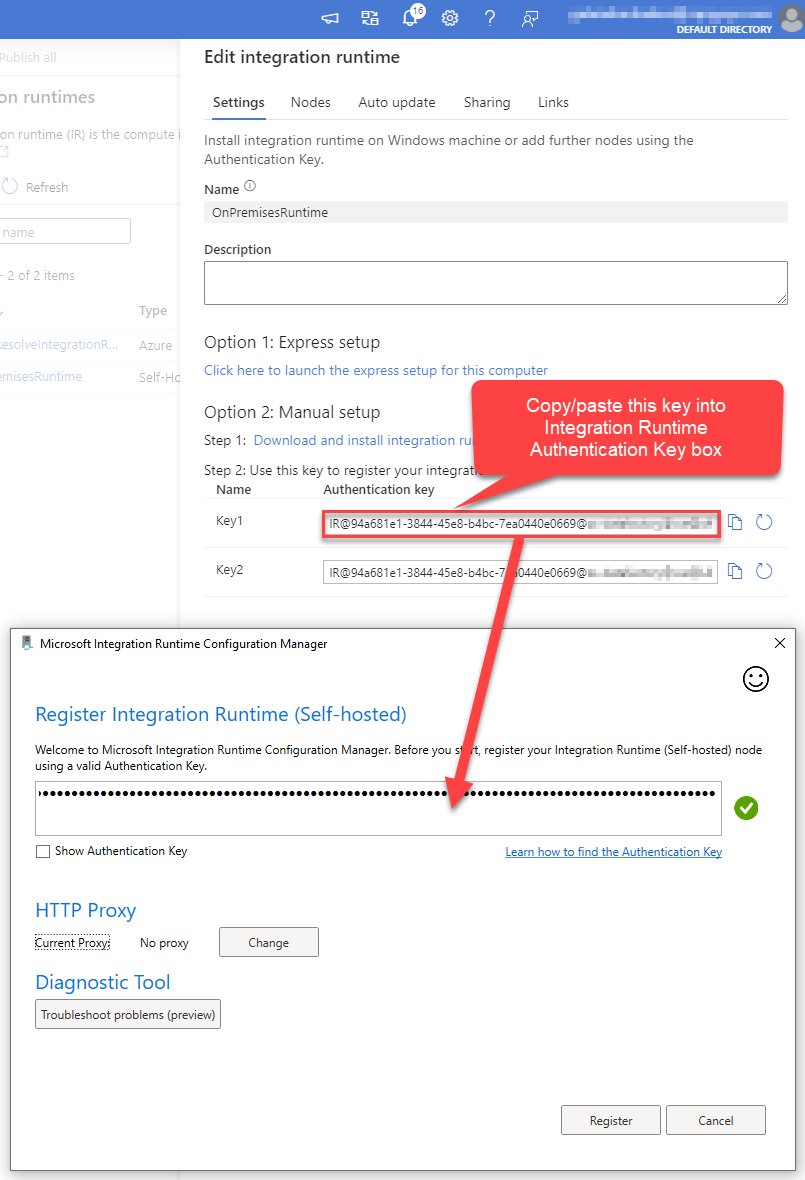

Launch Integration Runtime and copy/paste Authentication Key from Integration Runtime configuration in Azure Portal:

-

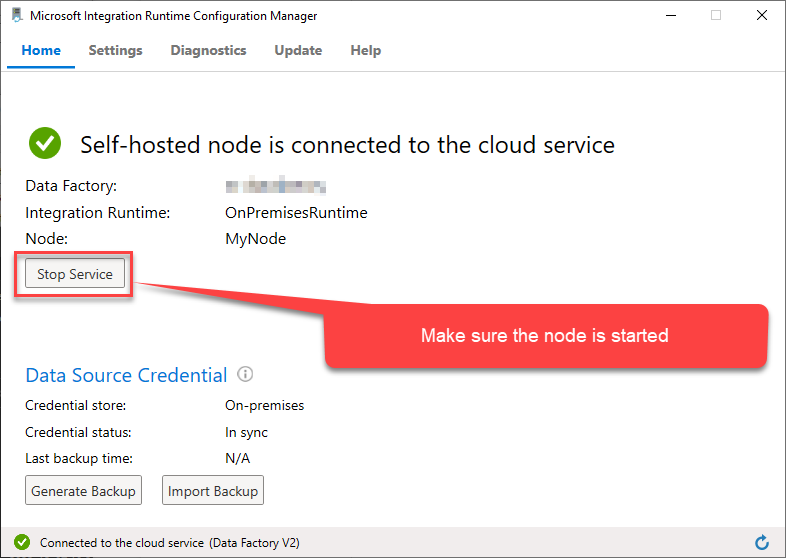

After finishing registering the Integration Runtime node, you should see a similar view:

-

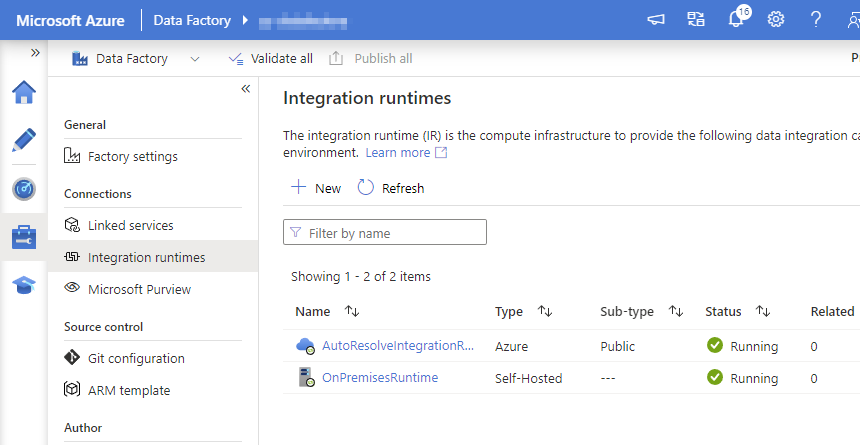

Go back to Azure Portal and finish adding new Integration Runtime. You should see it was successfully added:

-

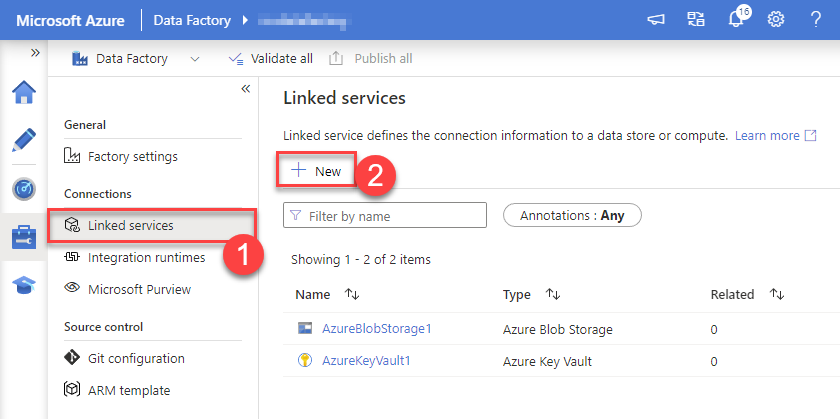

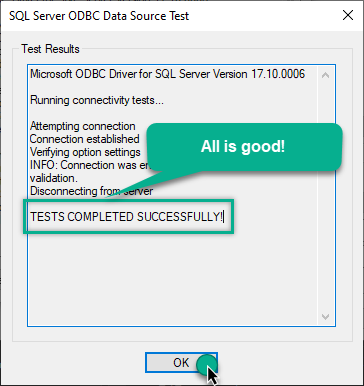

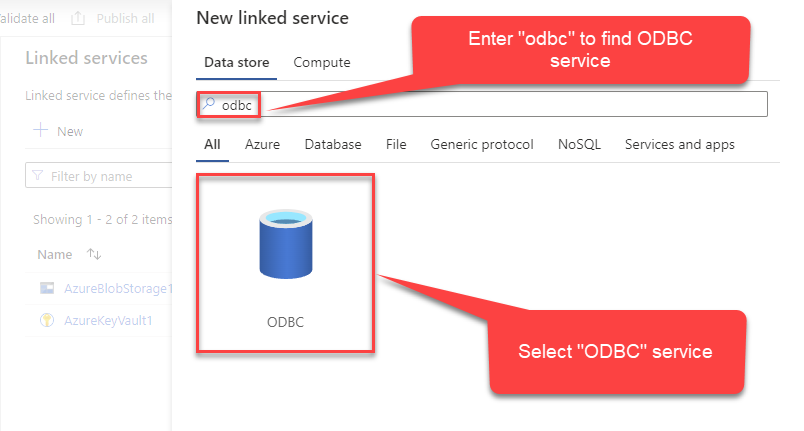

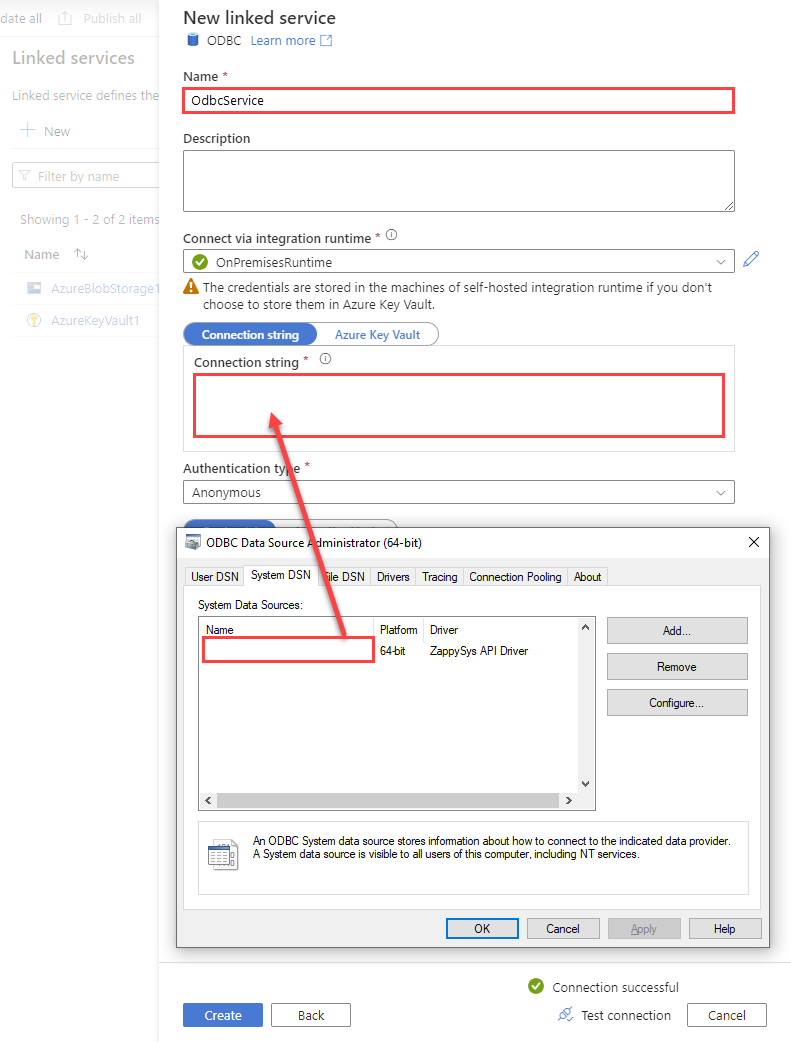

Create a New Linked service:

In the Manage section (left menu).

Under Connections, select Linked services.

Click + New to create a new Linked service based on ODBC.

-

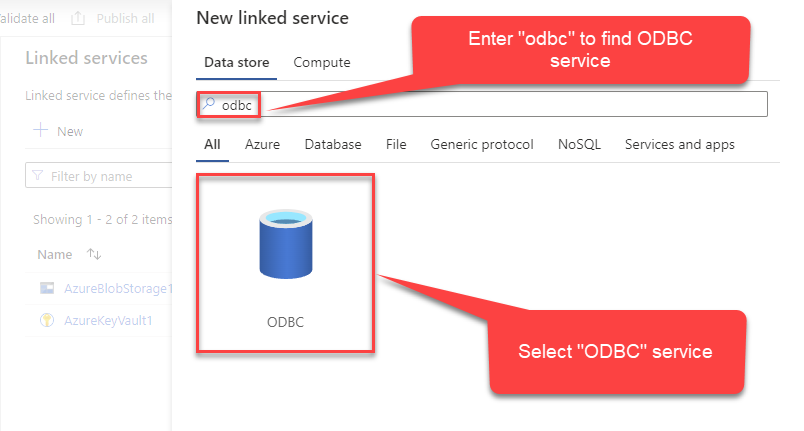

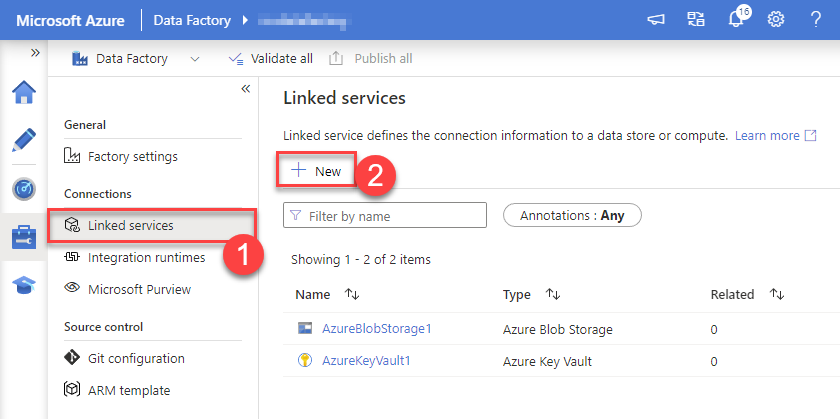

Select ODBC service:

-

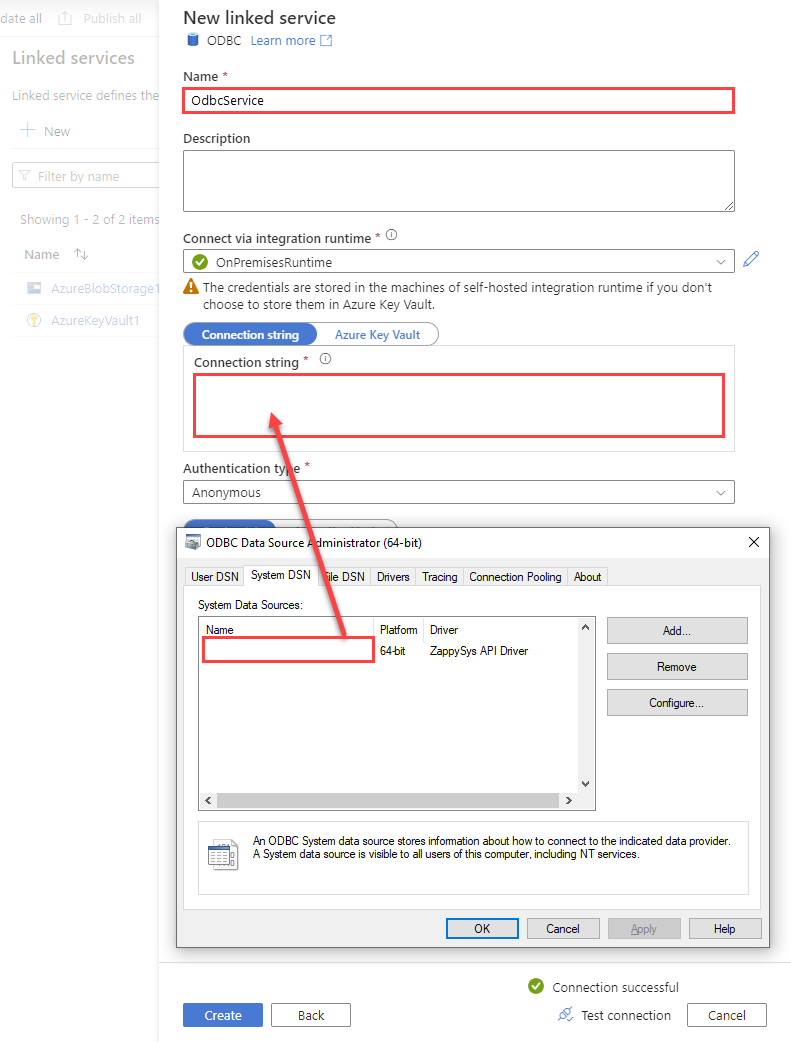

Configure new ODBC service. Use the same DSN name we used in the previous step and copy it to Connection string box:

CustomApiDSNDSN=CustomApiDSN

-

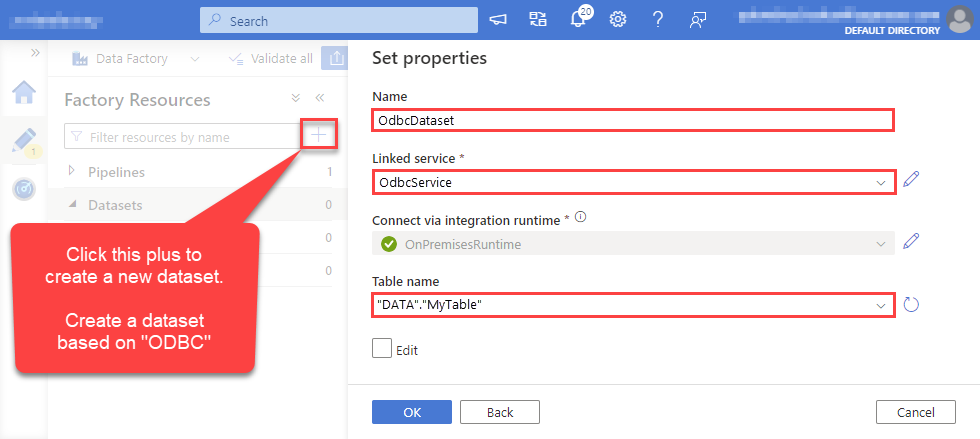

For created ODBC service create ODBC-based dataset:

-

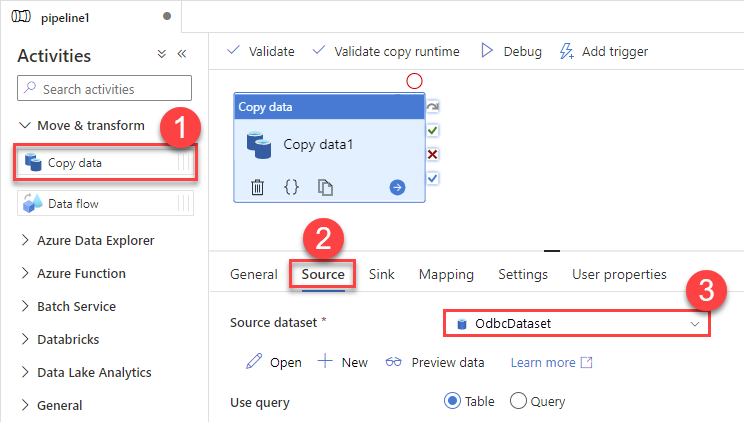

Go to your pipeline and add Copy data connector into the flow. In Source section use OdbcDataset we created as a source dataset:

-

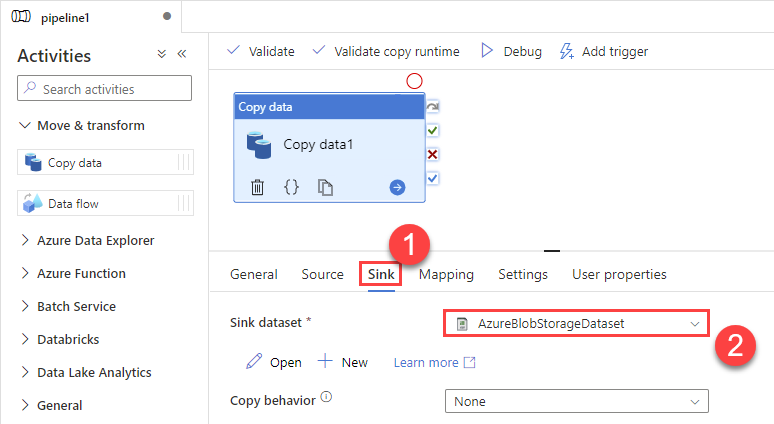

Then go to Sink section and select a destination/sink dataset. In this example we use precreated AzureBlobStorageDataset which saves data into an Azure Blob:

-

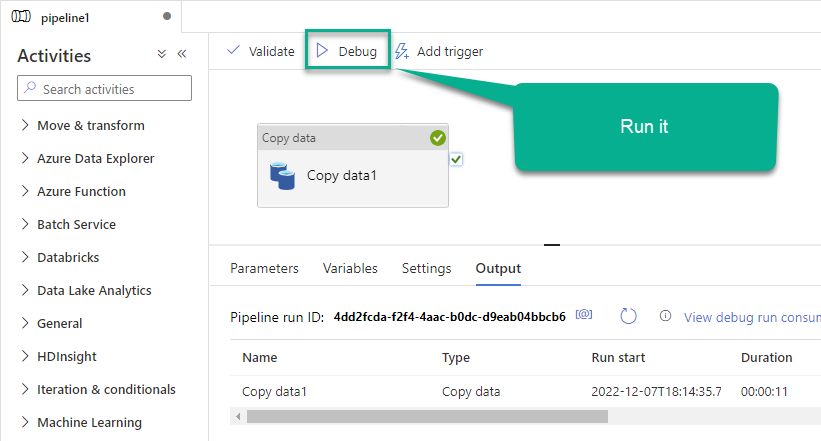

Finally, run the pipeline and see data being transferred from OdbcDataset to your destination dataset:
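For readers who prefer to review the JSON behind the Studio screens, the steps above roughly correspond to an ODBC linked service and a Copy activity definition. The sketch below builds both as Python dictionaries. Several values are assumptions: the linked service name `OdbcLinkedService` and activity name `CopyCustomApiData` are made up, `Anonymous` authentication assumes the DSN itself carries any credentials, and `DelimitedTextSink` assumes the Blob dataset is a delimited text file. Compare against the JSON view in your own Data Factory before relying on it.

```python
import json


def odbc_linked_service(name: str, dsn: str, runtime: str) -> dict:
    """Build an ODBC linked service definition that connects through a DSN."""
    return {
        "name": name,
        "properties": {
            "type": "Odbc",
            "typeProperties": {
                "connectionString": f"DSN={dsn}",
                # Assumption: the DSN stores credentials, so ADF sends none.
                "authenticationType": "Anonymous",
            },
            # The ODBC driver lives on the self-hosted runtime machine.
            "connectVia": {
                "referenceName": runtime,
                "type": "IntegrationRuntimeReference",
            },
        },
    }


def copy_activity(name: str, source_dataset: str, sink_dataset: str, query: str) -> dict:
    """Build a Copy activity that reads from an ODBC dataset using a SQL query."""
    return {
        "name": name,
        "type": "Copy",
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": "OdbcSource", "query": query},
            # Assumption: the sink dataset is delimited text in Blob storage.
            "sink": {"type": "DelimitedTextSink"},
        },
    }


if __name__ == "__main__":
    linked_service = odbc_linked_service("OdbcLinkedService", "CustomApiDSN", "OnPremisesRuntime")
    activity = copy_activity(
        "CopyCustomApiData", "OdbcDataset", "AzureBlobStorageDataset", "SELECT * FROM Orders"
    )
    print(json.dumps(linked_service, indent=2))
    print(json.dumps(activity, indent=2))
```

The `connectVia` reference matters here: the DSN exists only on the machine running the self-hosted Integration Runtime, so the linked service has to be routed through it.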

Executing SQL queries using Lookup activity

If you need to execute commands in Custom API instead of retrieving data, use the Lookup activity for that purpose. Use this approach when you want data to be changed on the Custom API side, but you don't need the data on your side (a "fire-and-forget" scenario).

Perform these simple steps to accomplish that:

- Go to your pipeline in Azure Data Factory

- Find the Lookup activity in the Activities pane

- Then drag-and-drop the Lookup activity onto your pipeline canvas

- Click the Settings tab

- Select OdbcDataset in the Source dataset field

Finally, enter your SQL query in the Query text box:
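The Lookup configuration above can also be expressed as activity JSON. The following sketch builds that definition in Python. The activity name `RunCustomApiCommand` is hypothetical, and the query shown is only a placeholder taken from earlier in this guide; substitute the command your connector actually supports.

```python
import json


def lookup_activity(name: str, dataset: str, query: str, first_row_only: bool = True) -> dict:
    """Build a Lookup activity that runs a SQL query against an ODBC dataset."""
    return {
        "name": name,
        "type": "Lookup",
        "typeProperties": {
            "source": {"type": "OdbcSource", "query": query},
            "dataset": {"referenceName": dataset, "type": "DatasetReference"},
            # For fire-and-forget commands the returned rows are not needed,
            # so reading only the first row keeps the activity output small.
            "firstRowOnly": first_row_only,
        },
    }


if __name__ == "__main__":
    activity = lookup_activity("RunCustomApiCommand", "OdbcDataset", "SELECT * FROM Orders")
    print(json.dumps(activity, indent=2))
```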

Optional: Centralized data access via ZappySys Data Gateway

In some situations, you may need to provide Custom API data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

- Centralized data access: The data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies the management process.

- Centralized access control: Since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | | |
| Central access control | | |
| More flexible cost | | |

To achieve this, you must first create a data source in the Data Gateway (server-side) and then create an ODBC data source in Azure Data Factory (Pipeline) (client-side) to connect to it.

Let's not wait and get going!

Create Custom API data source in the gateway

In this section we will create a data source for Custom API in the Data Gateway. Let's follow these steps to accomplish that:

-

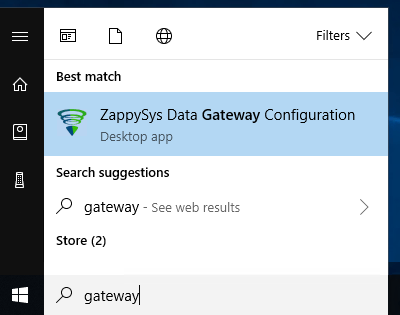

Search for

gatewayin the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

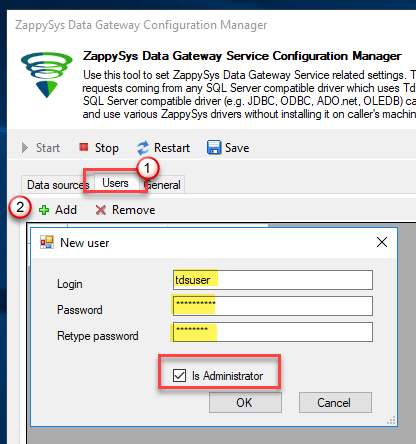

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

-

In the Login field enter a username, e.g.,

john - Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

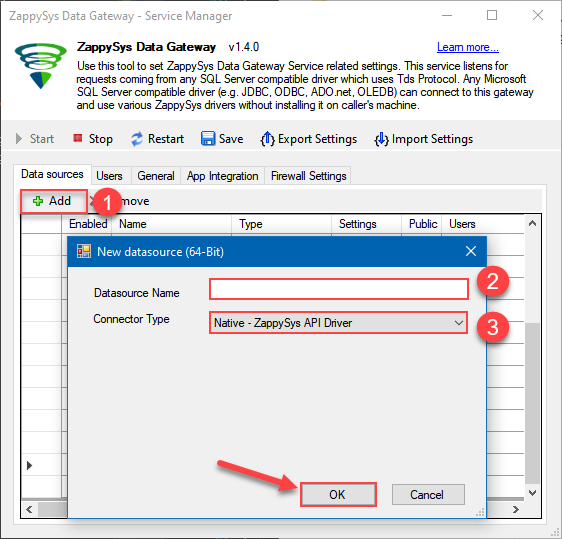

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys API Driver

- Finally, click OK

CustomApiDSNZappySys API Driver

-

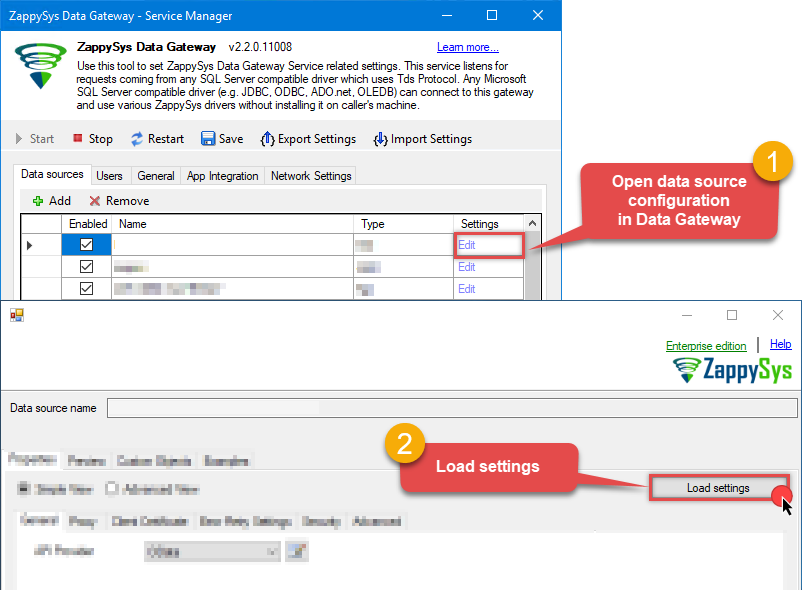

When the ZappySys API Driver configuration window opens, go back to ODBC Data Source Administrator where you already have the Custom API ODBC data source created and configured, and follow these steps on how to Import data source configuration into the Gateway:

-

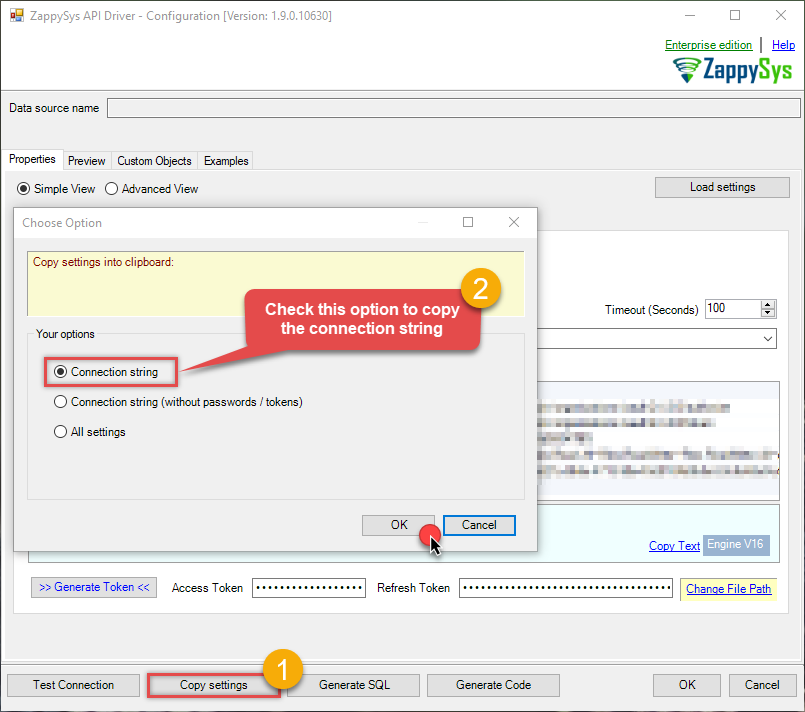

Open ODBC data source configuration and click Copy settings:

ZappySys API Driver - Custom APIRead / write Custom API data without coding.CustomApiDSN

ZappySys API Driver - Custom APIRead / write Custom API data without coding.CustomApiDSN

-

The window opens, telling us the connection string was successfully copied to the clipboard:

-

Then go to Data Gateway configuration and in data source configuration window click Load settings:

CustomApiDSN ZappySys API Driver - Configuration [Version: 2.0.1.10418]ZappySys API Driver - Custom APIRead / write Custom API data without coding.CustomApiDSN

ZappySys API Driver - Configuration [Version: 2.0.1.10418]ZappySys API Driver - Custom APIRead / write Custom API data without coding.CustomApiDSN

-

Once a window opens, just paste the settings by pressing

CTRL+Vor by clicking right mouse button and then Paste option.

-

Open ODBC data source configuration and click Copy settings:

-

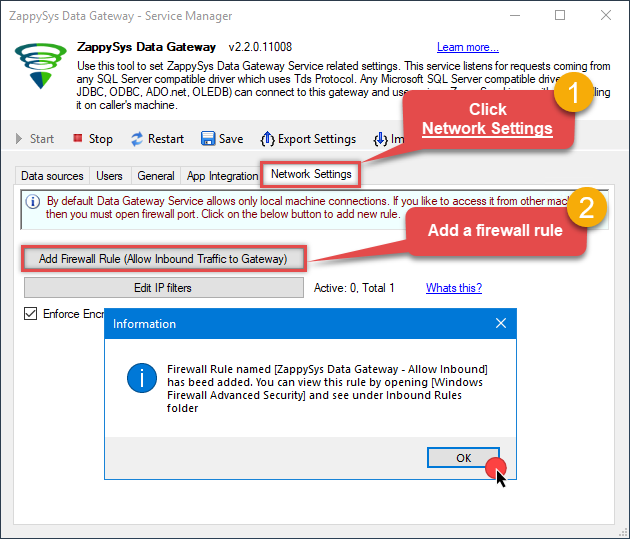

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

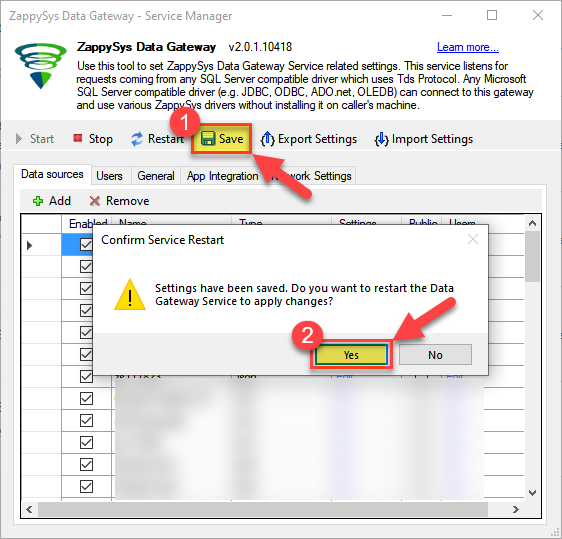

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

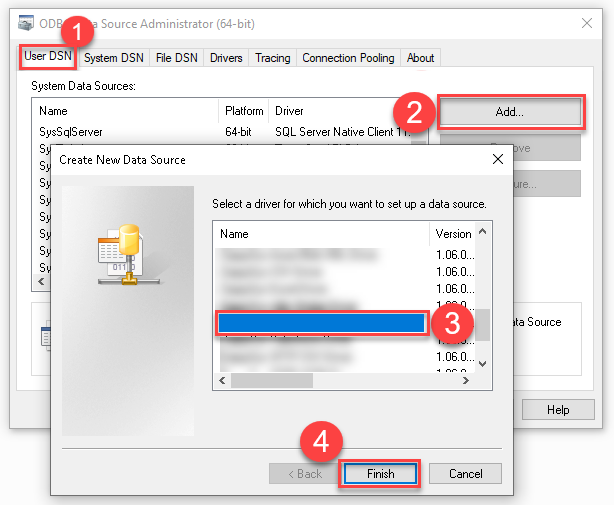

Create ODBC data source to connect to the gateway

In this part we will create an ODBC data source to connect to the ZappySys Data Gateway from Azure Data Factory (Pipeline). To achieve that, let's perform these steps:

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

Create a User data source (User DSN) based on the ODBC Driver 17 for SQL Server driver:

ODBC Driver 17 for SQL Server If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version.

If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version. -

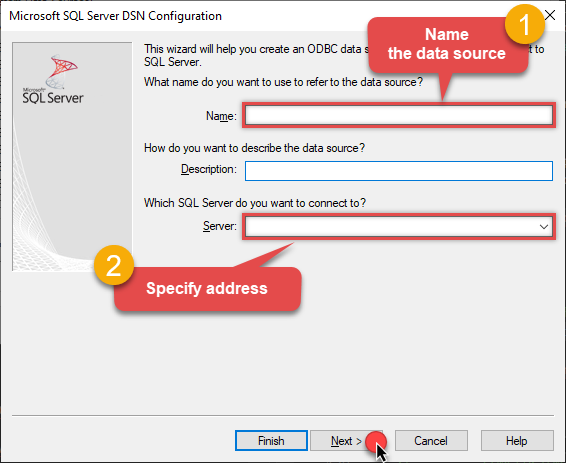

Then set a Name for the data source (e.g.

Gateway) and the address of the Data Gateway:ZappySysGatewayDSNlocalhost,5000 Make sure you separate the hostname and port with a comma, e.g.

Make sure you separate the hostname and port with a comma, e.g.localhost,5000. -

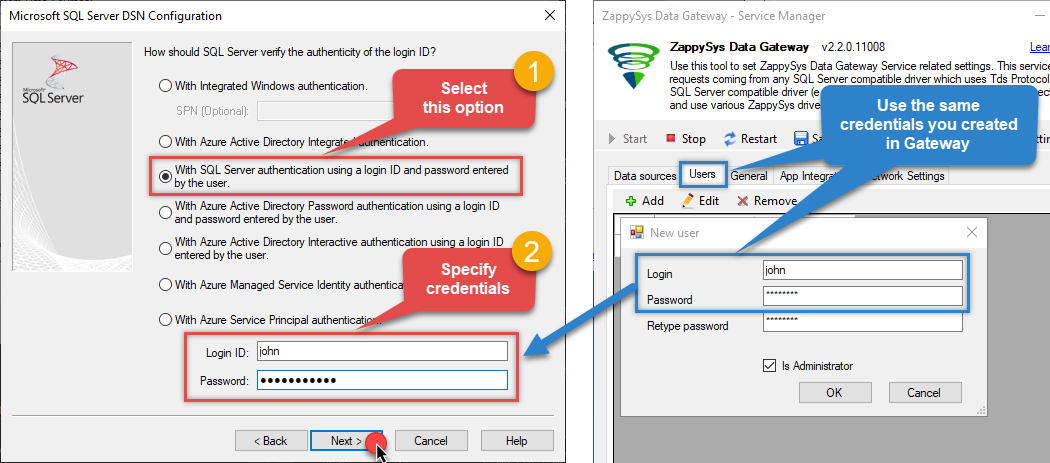

Proceed with the authentication part:

- Select SQL Server authentication

-

In the Login ID field enter the user name you created in the Data Gateway, e.g.,

john - Set Password to the one you configured in the Data Gateway

-

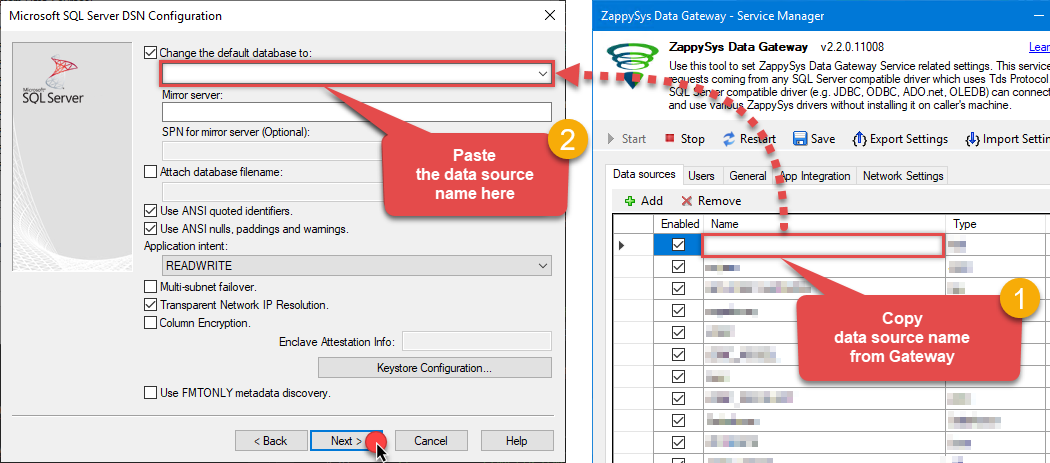

Then set the default database property to

CustomApiDSN(the one we used in the Data Gateway):CustomApiDSNCustomApiDSN Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step).

Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step). -

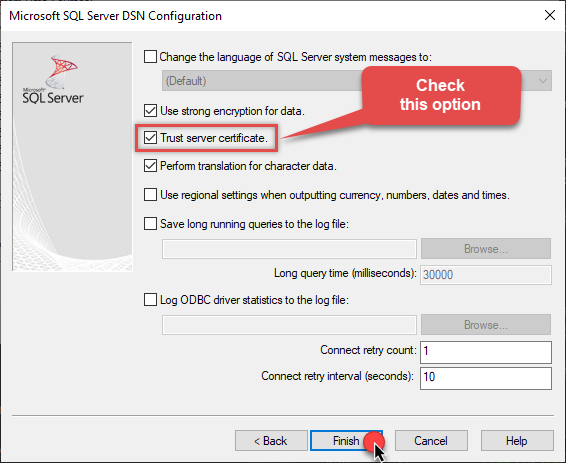

Continue by checking the Trust server certificate option:

-

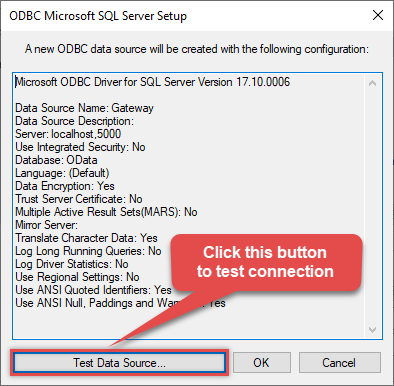

Once you do that, test the connection:

-

If the connection is successful, everything is good:

-

Done!

We are ready to move to the final step. Let's do it!
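The settings entered in the DSN wizard above map onto standard SQL Server ODBC connection string keywords. As a rough sketch, the helper below assembles an equivalent DSN-less connection string. The function name `gateway_connection_string` and the password `secret` are placeholders, and the helper assumes values contain no semicolons or braces (those would need escaping).

```python
def gateway_connection_string(
    host: str,
    port: int,
    database: str,
    user: str,
    password: str,
    driver: str = "ODBC Driver 17 for SQL Server",
    trust_server_certificate: bool = True,
) -> str:
    """Assemble a SQL Server ODBC connection string for the Data Gateway."""
    parts = [
        f"DRIVER={{{driver}}}",      # driver name is wrapped in braces
        f"SERVER={host},{port}",     # host and port are separated by a comma
        f"DATABASE={database}",      # the gateway data source name
        f"UID={user}",
        f"PWD={password}",
    ]
    if trust_server_certificate:
        parts.append("TrustServerCertificate=yes")
    return ";".join(parts) + ";"


if __name__ == "__main__":
    print(gateway_connection_string("localhost", 5000, "CustomApiDSN", "john", "secret"))
```

A string like this could be handed to an ODBC client library (for example the third-party pyodbc package, which is not used here) to test the gateway from a script instead of the ODBC administrator.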

Access data in Azure Data Factory (Pipeline) via the gateway

Finally, we are ready to read data from Custom API in Azure Data Factory (Pipeline) via the Data Gateway. Follow these final steps:

- Go back to Azure Data Factory (Pipeline).

- Create a new Linked service:

  - Go to the Manage section (left menu).
  - Under Connections, select Linked services.
  - Click + New to create a new Linked service based on ODBC.

- Select ODBC service:

- Configure the new ODBC service. Use the same DSN name we used in the previous step and copy it to the Connection string box:

DSN=ZappySysGatewayDSN

- Read the data the same way we discussed at the beginning of this article.

- That's it! Now you can connect to Custom API data in Azure Data Factory (Pipeline) via the ZappySys Data Gateway.

If you are asked for credentials, use the Data Gateway user you created earlier, e.g. john and your password.

Conclusion

In this guide, we demonstrated how to connect to Custom API in Azure Data Factory (Pipeline) and integrate your data — all without writing complex code.

Ready to get started? Download ODBC PowerPack now or ping us via chat if you still need help.