Introduction

You can connect to your Cosmos DB data from Informatica PowerCenter via the high-performance Cosmos DB ODBC Driver. We'll walk you through the entire setup.

Let's not waste time and get started!

JSON and REST APIs become more popular each day as everyone embraces cloud-centric services. This article is aimed primarily at Informatica users who want to access Cosmos DB data or integrate other APIs in Informatica. However, many of the tips and techniques described here will also help you understand how to integrate Cosmos DB / XML SOAP / JSON / REST APIs in other ETL and reporting apps such as Tableau, Power BI, SSRS, Talend, Excel, and many more.

After going through this article, you will know how to read Cosmos DB / JSON / REST API data in Informatica and understand the concepts behind JSON / REST APIs. We will go through many screenshots and step-by-step examples to demonstrate Cosmos DB or REST API integration in Informatica PowerCenter.

XML / JSON can come from a local file or a REST API service (internal or public), so we will include both examples in this article (i.e. reading JSON files in Informatica and importing a REST API in Informatica). The next article will focus on how to write data to an API in Informatica (POST / PUT data).

If you need to consume an API that is not listed on the connector library page, please refer to the article links below. They explain how to read / write pretty much any API, not just the Cosmos DB API, and cover various API tips and tricks using our other Universal Drivers not mentioned in this article (i.e. ZappySys JSON / XML and CSV Drivers).

Requirements

This article assumes that you have fulfilled the following basic requirements.

- Download and install ZappySys ODBC PowerPack (API Driver for Cosmos DB included)

- Install Informatica PowerCenter Client Tools (e.g. Workflow and Mapping Designers)

- Have access to a relational database such as SQL Server (or any other of your choice, e.g. Oracle, MySQL, DB2). If no database is available, you can use a flat file target.

High-level steps to import Cosmos DB data using Informatica (Read Cosmos DB API data)

Before we dive deep into how to load Cosmos DB data in Informatica (i.e. Cosmos DB to SQL Table), here is a summary of the high-level steps you need to perform to import Cosmos DB data in Informatica (the same steps apply when importing JSON / XML / REST API data).

- Download and Install ZappySys API Driver (for connecting to Cosmos DB)

- Create ODBC DSN using ZappySys API driver and choose Cosmos DB Connector during Wizard

- Create Relational > ODBC Connection in Informatica Workflow designer (Point to DSN we created in the previous step)

- Import Cosmos DB Source Definition in the Informatica Mapping Designer > Sources Tab

- Import Target Table Definition in the Informatica Mapping Designer > Targets Tab

- Create source to target mapping in Mappings tab

- Save the mapping (e.g. name it m_API_to_SQL_Load)

- Create Session using the mapping we created in the previous step

- Save Workflow and execute to load Cosmos DB data into SQL Table. Verify your data and log.

Video Tutorial – Read any API / JSON data in Informatica (Load Cosmos DB to SQL Table)

The video below is not about the Cosmos DB API specifically; it shows API access in general (for any API). By watching this ~5 minute video, you can learn the steps listed in this article for loading JSON API data into a SQL Server table using the ZappySys JSON Driver. You can go through the full article to learn many useful details not covered in the video.

Getting Started – Import Cosmos DB to SQL Server in Informatica

Now let's get started. As an example, we will read data from Cosmos DB and load it into a SQL Server table using an Informatica Workflow.

Create data source using Cosmos DB ODBC Driver

Video instructions

Watch this quick walkthrough to see how to configure your Cosmos DB ODBC data source, or scroll down for the step-by-step written guide.

Step-by-step instructions

To get data from Cosmos DB using Informatica PowerCenter, we first need to create an ODBC data source. We will later read this data in Informatica PowerCenter. Perform these steps:

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

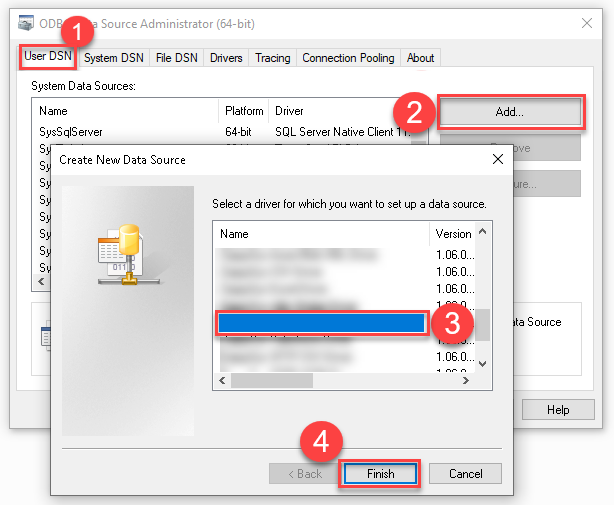

Create a User data source (User DSN) based on the ZappySys API Driver driver:

ZappySys API Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this

-

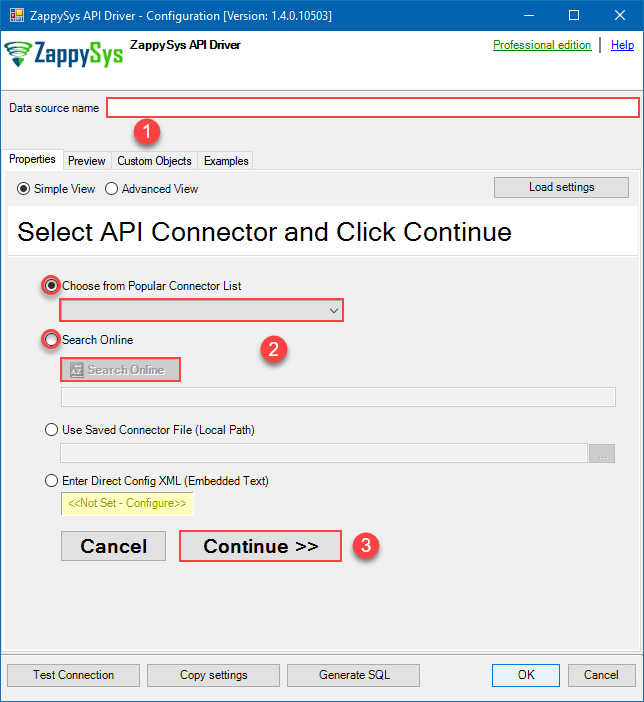

When the Configuration window appears give your data source a name if you haven't done that already, then select "Cosmos DB" from the list of Popular Connectors. If "Cosmos DB" is not present in the list, then click "Search Online" and download it. Then set the path to the location where you downloaded it. Finally, click Continue >> to proceed with configuring the DSN:

CosmosDbDSNCosmos DB

-

Select your authentication scenario below to expand connection configuration steps to:

- Configure the authentication in Cosmos DB.

- Enter those details into the ZappySys API Driver data source configuration.

API Key

Cosmos DB authentication

Connecting to your Azure Cosmos DB data requires you to authenticate your REST API access. Follow the instructions below:- Go to your Azure portal homepage: https://portal.azure.com/.

- In the search bar at the top of the homepage, enter Azure Cosmos DB. In the dropdown that appears, select Azure Cosmos DB.

- Click on the name of the database account you want to connect to (also copy and paste the name of the database account for later use).

-

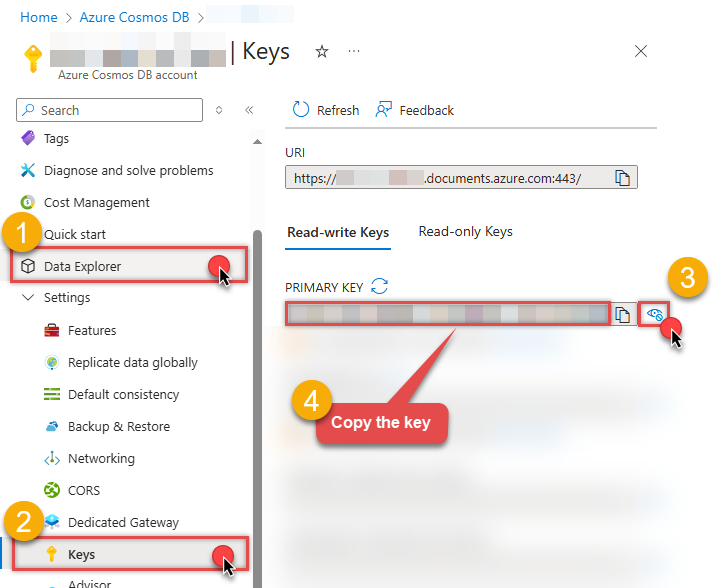

On the next page where you can see all of the database account information, look along the left side and select Keys:

- On the Keys page, you will have two tabs: Read-write Keys and Read-only Keys. If you are going to write data to your database, you need to remain on the Read-write Keys tab. If you are only going to read data from your database, you should select the Read-only Keys tab.

- On the Keys page, copy the PRIMARY KEY value and paste it somewhere for later use (the SECONDARY KEY value may also be copied and used).

- Now go to SSIS package or ODBC data source and use this PRIMARY KEY in API Key authentication configuration.

- Enter the primary or secondary key you recorded in step 6 into the Primary or Secondary Key field.

- Then enter the database account you recorded in step 3 into the Database Account field.

- Next, enter or select the default database you want to connect to using the Default Database field.

- Continue by entering or selecting the default table (i.e. container/collection) you want to connect to using the Default Table (Container/Collection) field.

- Select the Test Connection button at the bottom of the window to verify proper connectivity with your Azure Cosmos DB account.

- If the connection test succeeds, select OK.

- Done! Now you are ready to use Cosmos DB Connector!
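For background on why the key and the account name are both needed: the Cosmos DB REST API expects every request to carry a token signed with the account key. The driver takes care of this for you, so nothing below is required for the setup. The following is only a minimal Python sketch of the publicly documented master-key signing scheme, as we understand it; the function name, the sample key, and the database/container names are made up for illustration.

```python
import base64
import hashlib
import hmac
from email.utils import formatdate
from urllib.parse import quote, unquote


def cosmos_auth_token(verb: str, resource_type: str, resource_link: str,
                      date_rfc1123: str, master_key_b64: str) -> str:
    """Build a URL-encoded master-key token for a single REST request."""
    # String to sign: verb, resource type and date are lowercased, the
    # resource link is used as-is, and the payload ends with an empty line.
    payload = (f"{verb.lower()}\n{resource_type.lower()}\n{resource_link}\n"
               f"{date_rfc1123.lower()}\n\n")
    key = base64.b64decode(master_key_b64)
    digest = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("ascii")
    return quote(f"type=master&ver=1.0&sig={signature}", safe="")


# Made-up key for illustration only; never hard-code a real account key.
FAKE_KEY = base64.b64encode(b"not-a-real-cosmos-key").decode("ascii")

# formatdate(usegmt=True) produces an RFC 1123 date ending in "GMT".
token = cosmos_auth_token("GET", "docs", "dbs/MyDb/colls/MyContainer",
                          formatdate(usegmt=True), FAKE_KEY)
print(unquote(token)[:24])  # the readable prefix of the token
```

Because the signature is derived from the key, a read-only key yields tokens that the service accepts only for read operations, which is why the choice of tab on the Keys page matters.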

API Connection Manager configuration

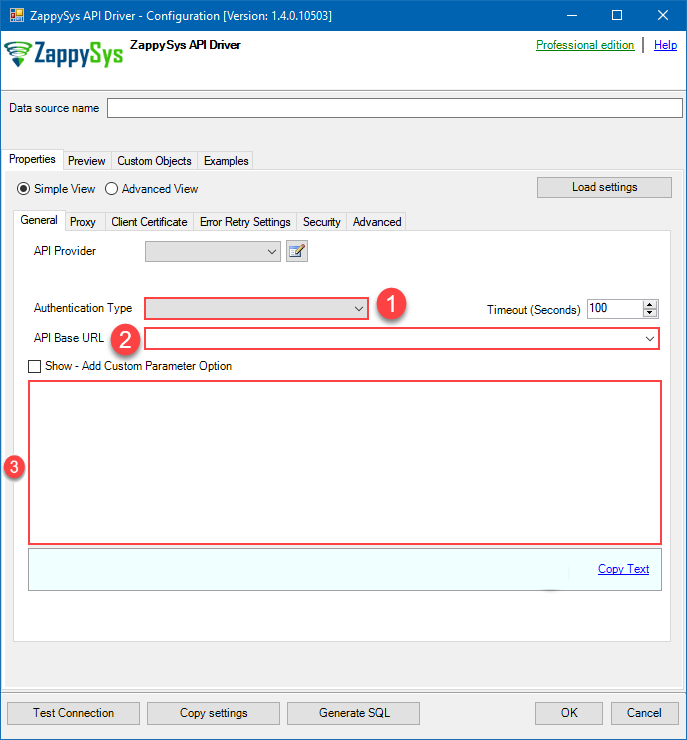

Just perform these simple steps to finish authentication configuration:

- Set Authentication Type to API Key [Http].

- Optional step: modify the API Base URL if needed (in most cases the default, https://[$Account$].documents.azure.com, will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, hit the OK button.

Required parameters:

- Primary or Secondary Key

- Account Name (case-sensitive)

- Database Name (case-sensitive; keep blank to use default)

- API Version

Optional parameters:

- Default Table (needed to invoke #DirectSQL)

Find full details in the Cosmos DB Connector authentication reference.

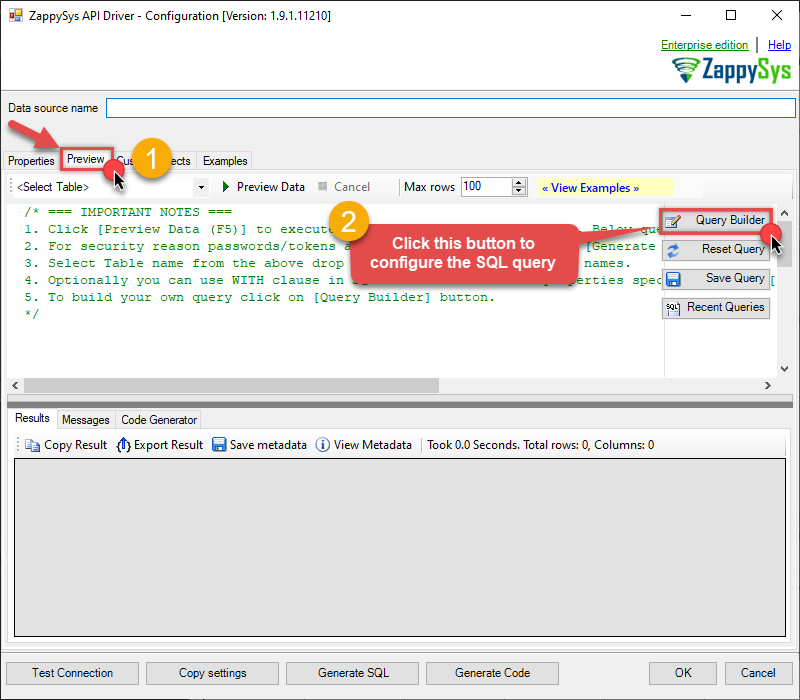

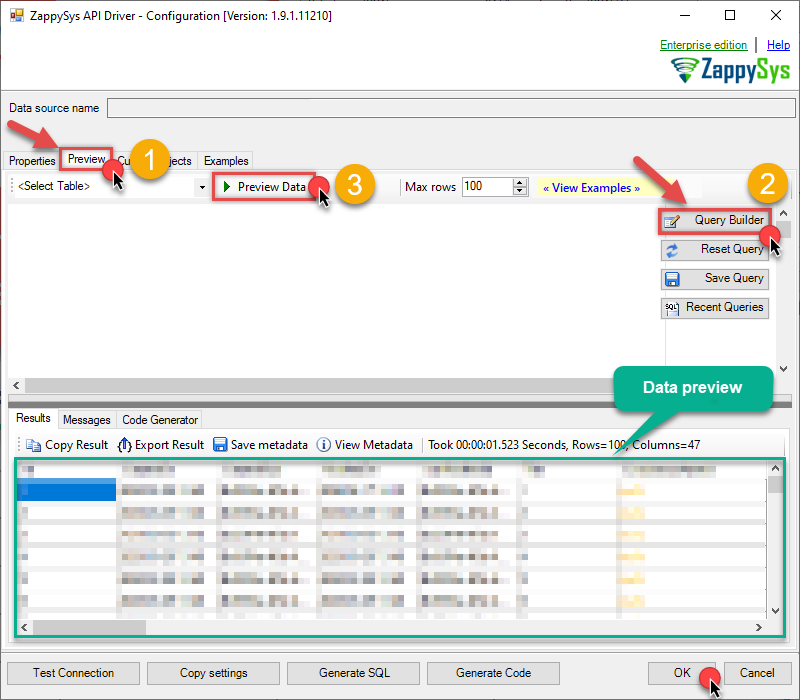

Once the data source connection has been configured, it's time to configure the SQL query. Select the Preview tab and then click Query Builder button to configure the SQL query:

ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN

ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN

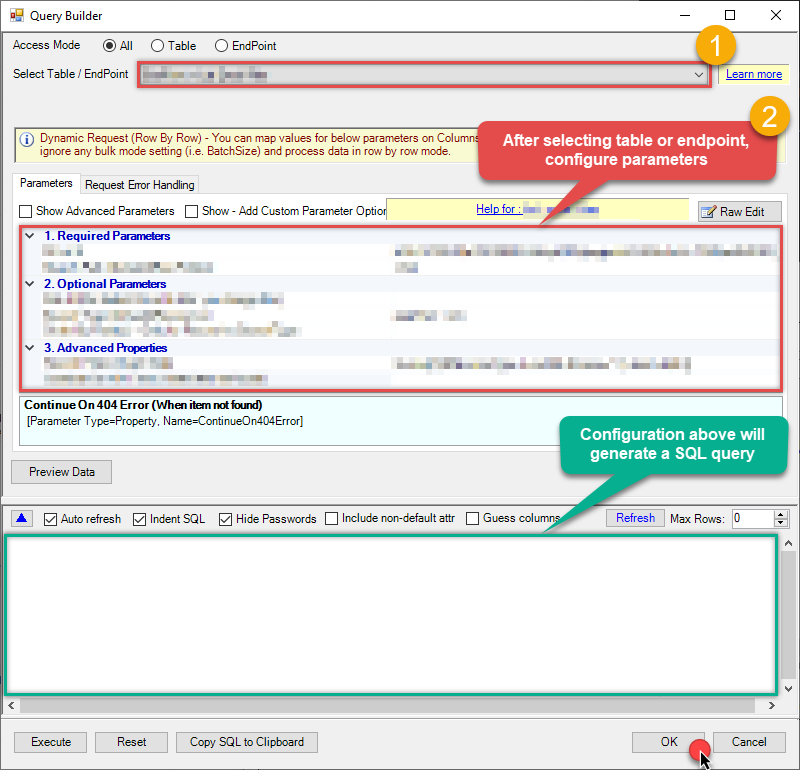

-

Start by selecting the Table or Endpoint you are interested in and then configure the parameters. This will generate a query that we will use in Informatica PowerCenter to retrieve data from Cosmos DB. Hit OK button to use this query in the next step.

#DirectSQL SELECT * FROM root where root.id !=null order by root._ts desc Some parameters configured in this window will be passed to the Cosmos DB API, e.g. filtering parameters. It means that filtering will be done on the server side (instead of the client side), enabling you to get only the meaningful data

Some parameters configured in this window will be passed to the Cosmos DB API, e.g. filtering parameters. It means that filtering will be done on the server side (instead of the client side), enabling you to get only the meaningful datamuch faster . -

Now hit Preview Data button to preview the data using the generated SQL query. If you are satisfied with the result, use this query in Informatica PowerCenter:

ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN

ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN#DirectSQL SELECT * FROM root where root.id !=null order by root._ts desc You can also access data quickly from the tables dropdown by selecting <Select table>.A

You can also access data quickly from the tables dropdown by selecting <Select table>.AWHEREclause,LIMITkeyword will be performed on the client side, meaning that thewhole result set will be retrieved from the Cosmos DB API first, and only then the filtering will be applied to the data. If possible, it is recommended to use parameters in Query Builder to filter the data on the server side (in Cosmos DB servers). -

Click OK to finish creating the data source.

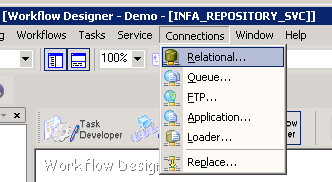

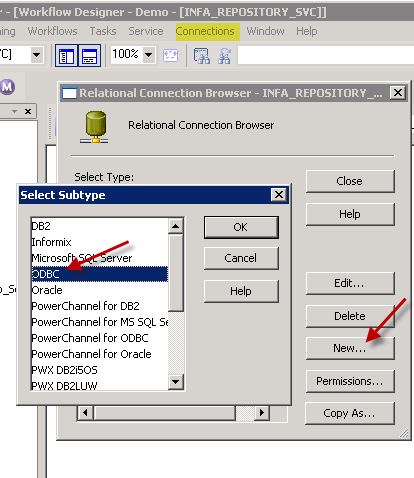
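Before moving on to Informatica, it can be handy to smoke-test the new DSN from a script. The sketch below is one possible way to do that in Python; it assumes the third-party pyodbc package is installed and that the DSN is named CosmosDbDSN as in the example above. The helper names are ours and not part of any ZappySys tooling.

```python
def build_direct_sql(where: str = "root.id !=null",
                     order_by: str = "root._ts desc") -> str:
    """Recreate the Query Builder output from this section as a string."""
    return f"#DirectSQL SELECT * FROM root where {where} order by {order_by}"


def preview_rows(dsn: str = "CosmosDbDSN", limit: int = 5) -> list:
    """Fetch a few rows through the ODBC DSN (requires the pyodbc package)."""
    import pyodbc  # third-party; imported here so build_direct_sql works without it

    connection = pyodbc.connect(f"DSN={dsn}", autocommit=True)
    try:
        cursor = connection.cursor()
        cursor.execute(build_direct_sql())
        return cursor.fetchmany(limit)
    finally:
        connection.close()


if __name__ == "__main__":
    # Printing the query is safe everywhere; call preview_rows() only on a
    # machine where the DSN has actually been configured.
    print(build_direct_sql())
```

If preview_rows() returns rows here, the same DSN name should work when it is entered in the Informatica connection in the next section.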

Create Connection in Informatica Workflow Designer

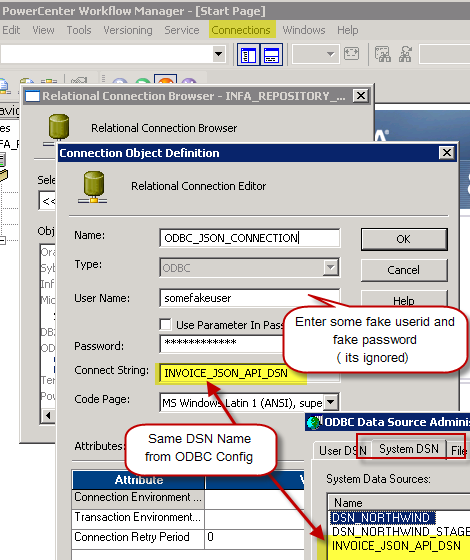

Once you have created the DSN using the API Driver, the next step is to define a connection for the Cosmos DB source in the Informatica PowerCenter Workflow Designer.

- Open the Workflow Designer ([W] icon)

- Go to Connections > Relational

- Click New and select ODBC

- On the ODBC connection setup, enter a connection name and a placeholder user ID / password (these fields are required, but the driver ignores them)

- In the Connection String field, enter the exact name of the DSN (open the ODBC Data Sources UI to confirm)

Configure Cosmos DB connection in Informatica for REST API – Using ZappySys API Driver

- Click OK to close the connection properties.

That's it. Now we are ready to move to the next step (defining the source and target in Mapping Designer).

Import Cosmos DB Source Definition in Informatica Mapping Designer

Now let’s look at steps to import Cosmos DB table definition.

-

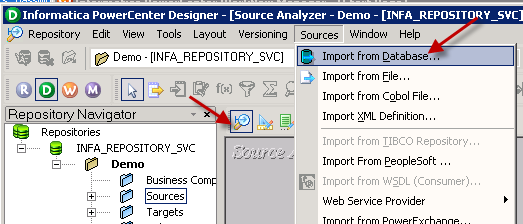

Open Informatica Mapping Designer (Click [D] icon)

-

Click on Source Icon to switch to Sources designer

-

From the top menu > Click on Sources > Import from Database …

-

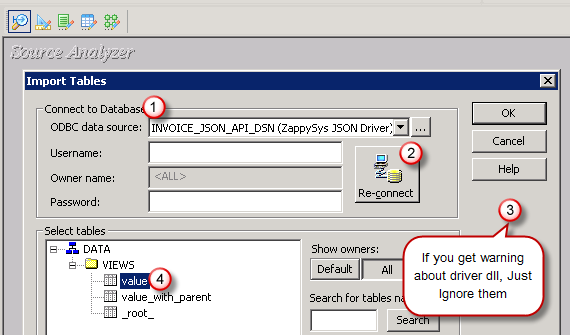

Select ODBC data source from the dropdown (Find out DSN we created earlier to use as JSON Source)

-

Click Connect button to get a list of tables. Any array node is listed as a table. Also, you will see array node with parent columns (e.g. value_with_parent). You may get some warning like below but they are harmless so just ignore by clicking OK.

DLL name entry missing from C:\Informatica\PowerCenter8.6.1\client\bin\powrmart.ini Section = ODBCDLL Entry = ZappySys JSON Driver

—————————————————-

Using EXTODBC.DLL to support ZappySys JSON Driver. For native support of ZappySys JSON Driver make an entry in the .ini file. - Select Table you wish to get (You can filter rows by custom SQL query. We will see later in this article how to do)

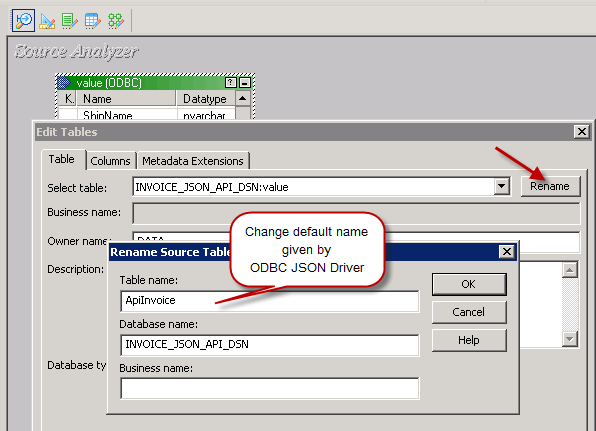

- Optionally once table structure is imported you can rename it

- That’s it, we are now ready to perform similar steps to import Target table structure in the next section.

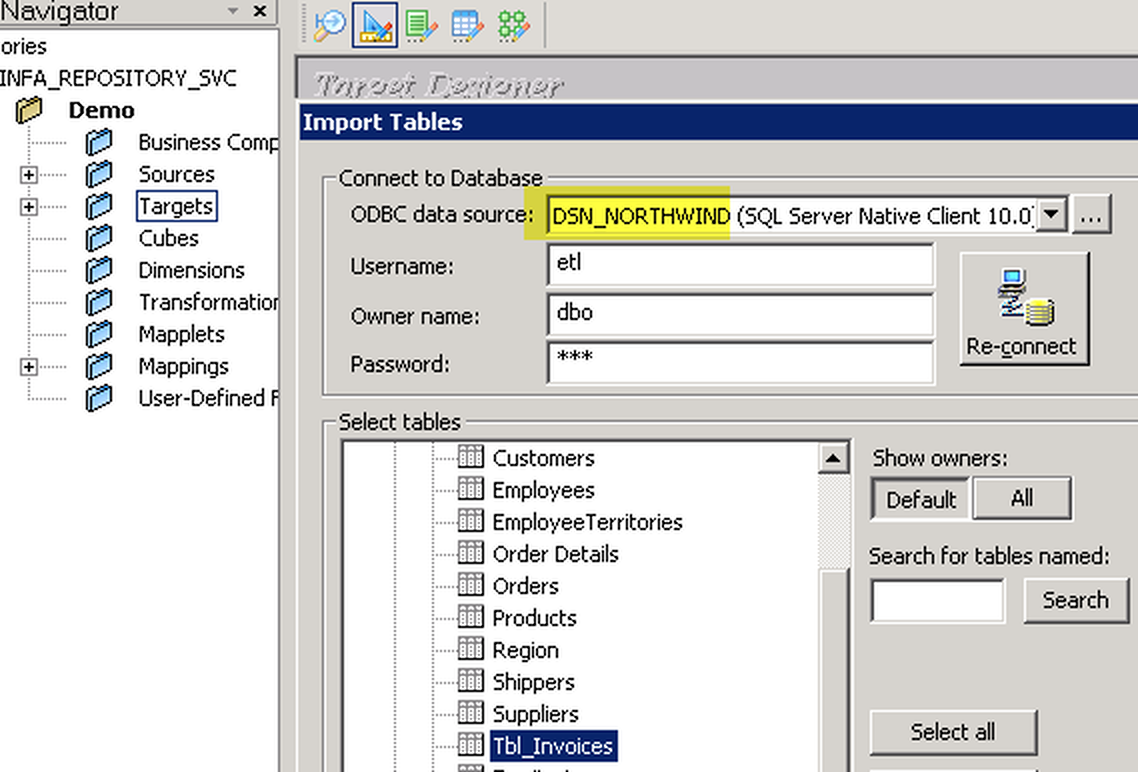

Import SQL Server Target Definition in Informatica Mapping Designer

Now let's look at the steps to import the target table definition in the Informatica Mapping Designer. They are very similar to the previous section; the only difference is that this time we will select a DSN which points to SQL Server or any other target server.

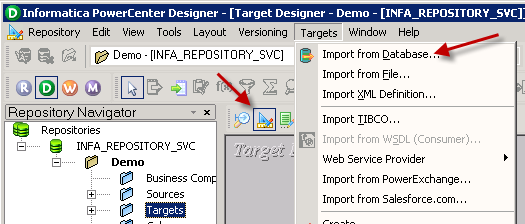

- In the Mapping Designer, click on the Target icon to switch to the Target designer

- From the top menu, click Targets > Import from Database …

- Select the DSN for your target server (if the DSN doesn't exist, create one by opening ODBC Data Sources, just as we did for the API source; see the earlier section about creating the DSN)

- Enter your user ID, password, and schema name, then click Connect to see the tables

- Select the table name and click OK to import the definition.

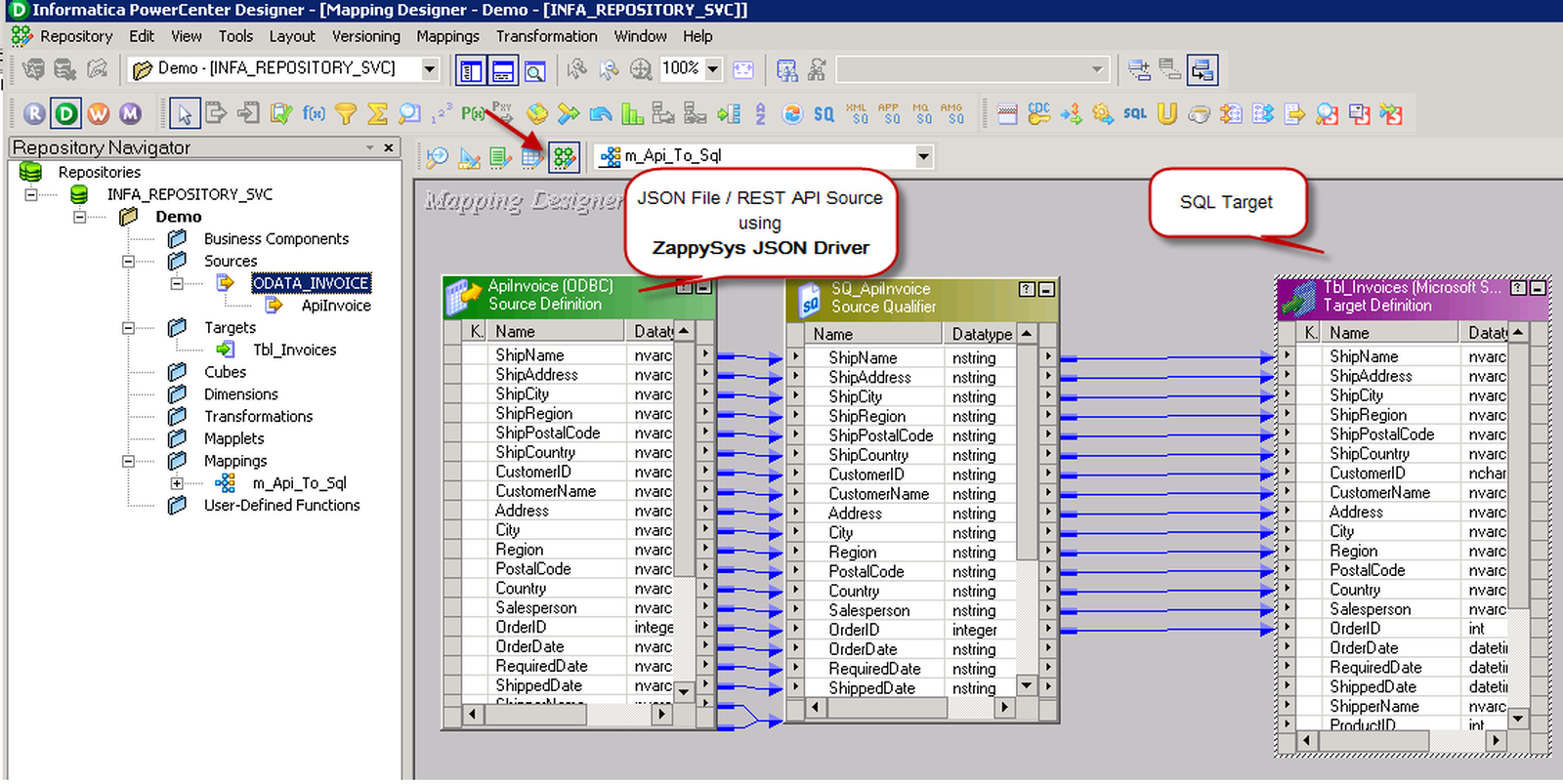

Create Source to Target Mapping in Informatica (Import JSON to SQL Server)

Once you have imported the source and target table definitions, you can create the mapping and transformation to load data from JSON to the SQL table.

- First, open Mapping Designer (click the [D] icon)

- Drag the JSON source from the Sources folder

- Drag the SQL table from the Targets folder

- Map the desired columns from source to target

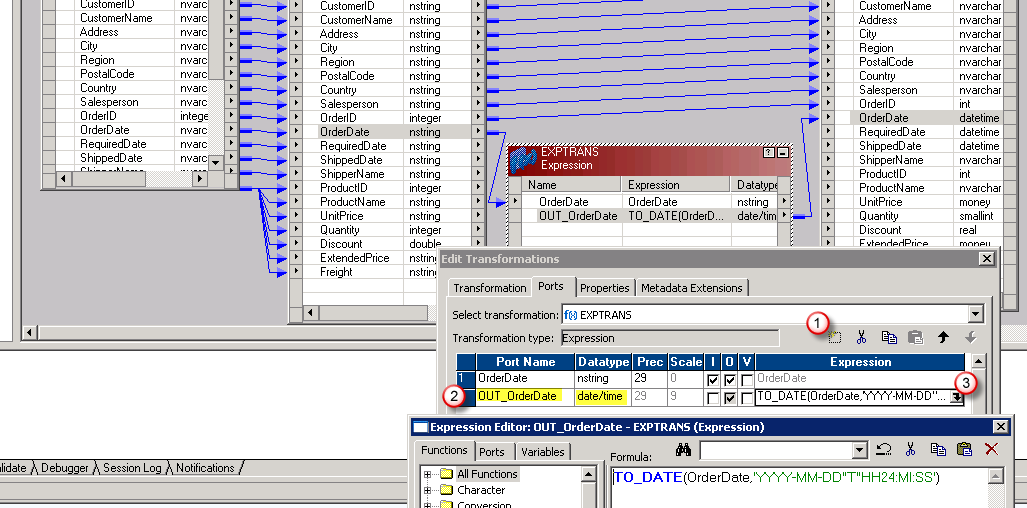

- For certain columns you may have to do a datatype conversion. For example, to convert OrderDate from nstring to DateTime, you have to use an Expression Transform like the one below and map it to the target. In the example below, our JSON has a 12-hour date format (e.g. 2018-01-31 12:00:00 AM). To import this into a DateTime field in SQL Server, we need to convert it using the TO_DATE function. For the ISO format, use double quotes around T to make the format work.

TO_DATE(OrderDate,'YYYY-MM-DD HH12:MI:SS AM')

--For ISO use below way

TO_DATE(OrderDate,'YYYY-MM-DD"T"HH24:MI:SS')

- Once you are done with the mapping, save it and give it a name (e.g. m_Api_To_SQL)

- Now let's move to the next section to create the workflow.

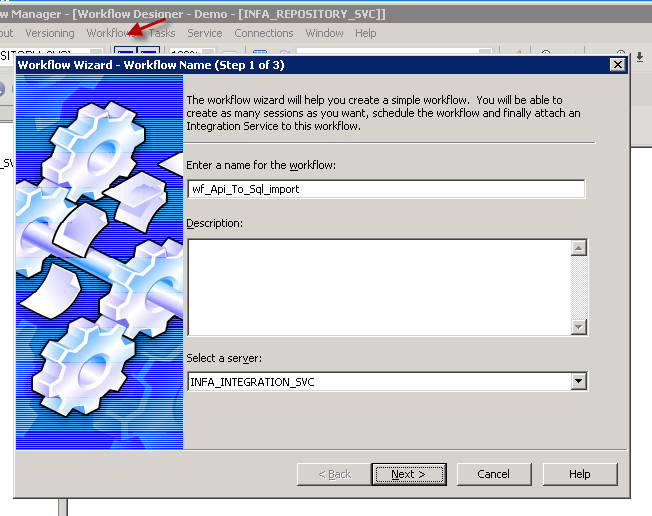
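If you are unsure which of the two date layouts your API actually returns, you can check a few sample values outside Informatica first. The snippet below is a small Python sketch for that purpose only; the strptime patterns are our rough equivalents of the 12-hour AM/PM layout and the ISO layout with a literal T, and the function name is hypothetical.

```python
from datetime import datetime

# Rough strptime equivalents of the two layouts discussed in this section.
TWELVE_HOUR = "%Y-%m-%d %I:%M:%S %p"  # e.g. 2018-01-31 12:00:00 AM
ISO_WITH_T = "%Y-%m-%dT%H:%M:%S"      # e.g. 2018-01-31T00:00:00


def parse_order_date(text: str) -> datetime:
    """Try the 12-hour layout first, then the ISO layout with a literal T."""
    for layout in (TWELVE_HOUR, ISO_WITH_T):
        try:
            return datetime.strptime(text, layout)
        except ValueError:
            continue  # not this layout, try the next one
    raise ValueError(f"Unrecognized OrderDate layout: {text!r}")


if __name__ == "__main__":
    for sample in ("2018-01-31 12:00:00 AM", "2018-01-31T13:45:10"):
        print(sample, "->", parse_order_date(sample).isoformat())
```

Whichever pattern matches your samples tells you which TO_DATE format string to use in the Expression Transform.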

Create Workflow and Session in Informatica

Now the final step is to create a new workflow. Perform following steps to create workflow which with a session task to import JSON data into SQL table.

- Open workflow designer by click [W] icon.

-

Launch new workflow creation wizard by click Workflow top menu > Wizard

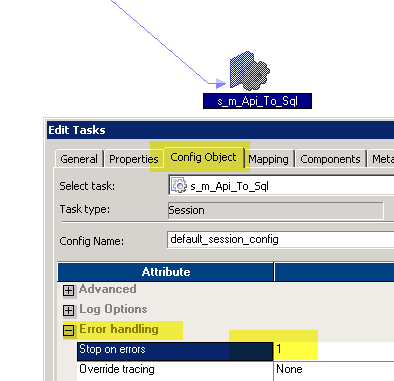

name your workflow (e.g. wf_Api_Tp_Sql_Table_Import) - Finish the wizard and double-click the Session to edit some default properties.

- First change Error settings so we fail session on error (By default its always green)

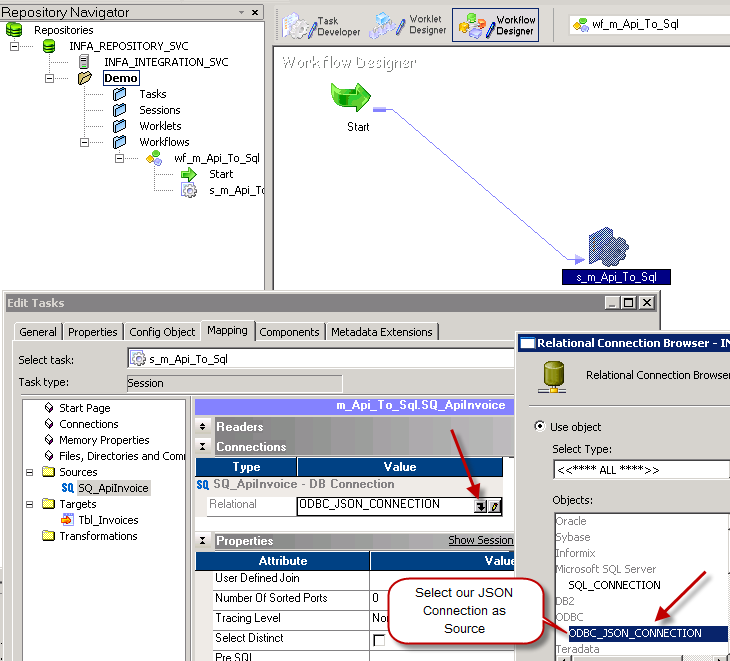

- Select JSON connection for Source

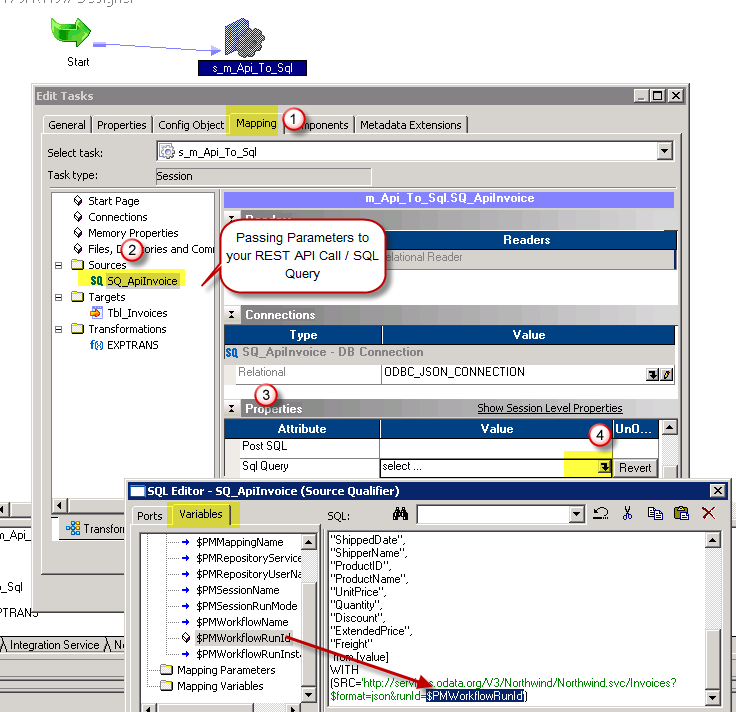

- Change default Source query if needed. You can pass parameters to this query to make it dynamic.

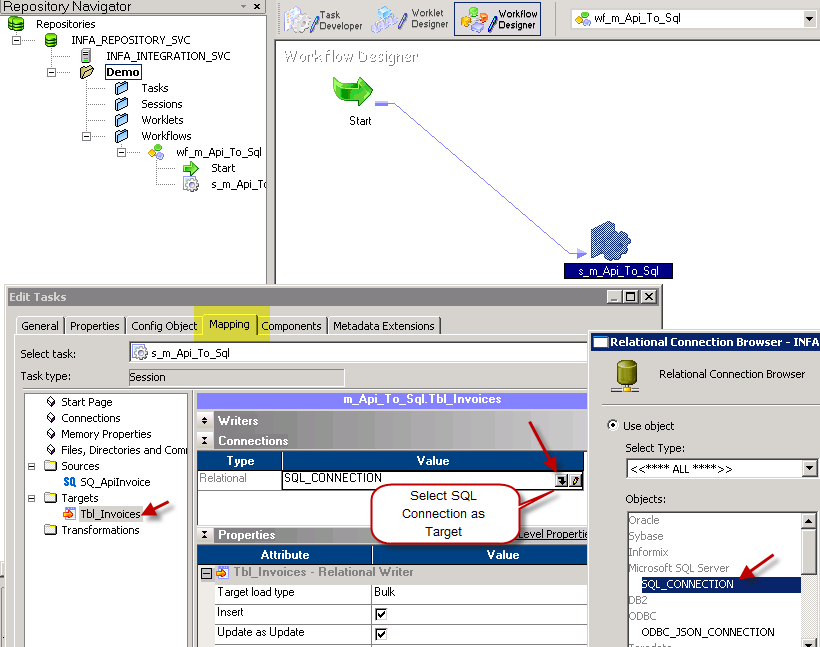

- Select Target connection of SQL Target Table

- Save workflow

- That’s it. We ready to run our first workflow to load JSON data to SQL.

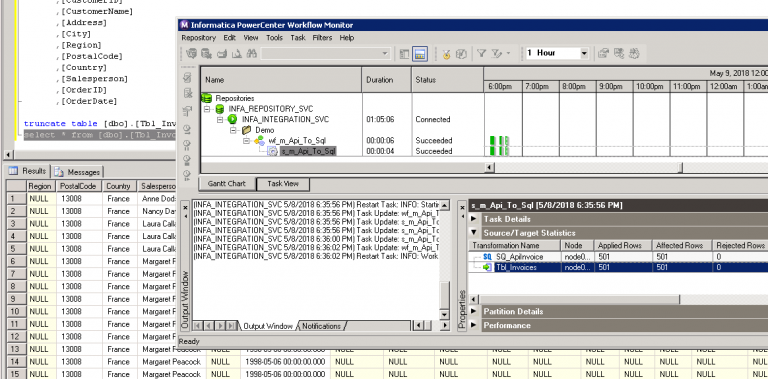

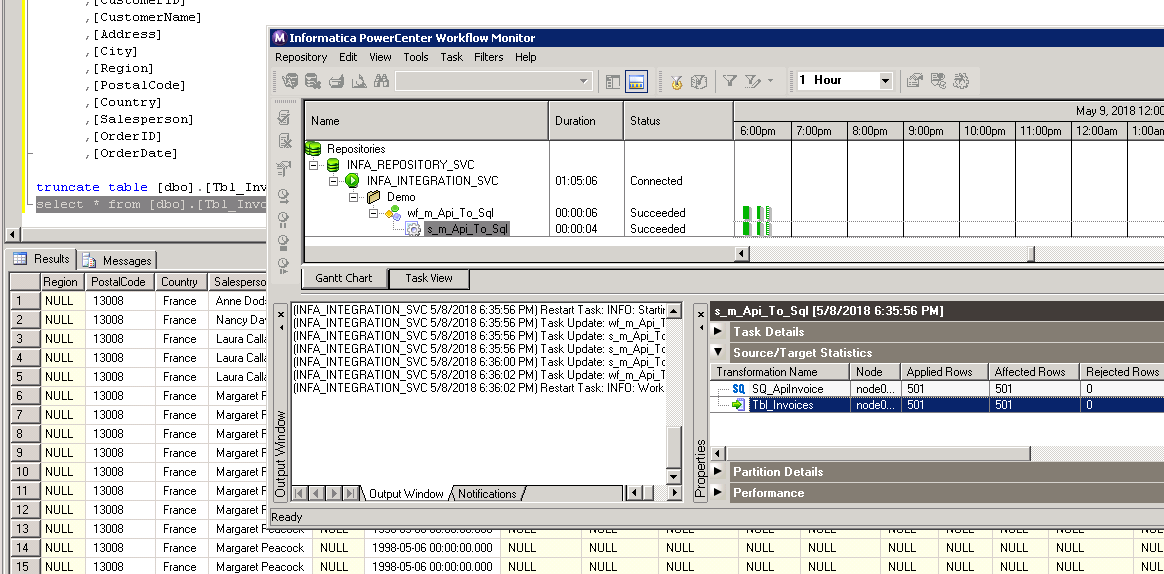

Execute Workflow and Validate Log in Informatica

Now once you are done with your workflow, execute it to see the log.

POST data to Cosmos DB in Informatica

There will be times when you want to send source data to a REST API or SOAP Web Service. You can use the query below as an example. For a detailed explanation of how to POST data in Informatica, check this article.

Video Tutorial – How to POST data to REST API in Informatica

Here is a detailed step-by-step video on REST API POST in Informatica PowerCenter.

Cosmos DB Connector actions

Got a specific use case in mind? We've mapped out exactly how to perform a variety of essential Cosmos DB operations directly in Informatica PowerCenter, so you don't have to figure out the setup from scratch. Check out the step-by-step guides below:

- Get List of Databases

- Get Database Information by Id or Name

- Get List of Tables

- Get table information by Id or Name

- Get table partition key ranges

- Query documents using Cosmos DB SQL query language

- Get All Documents for a Table

- Get Document by Id

- Delete a Document by Id

- Get All Users for a Database

- Get User by Id or Name

- Create User for Database

- Create a document in the container

- Upsert a document in the container

- Update Document in the Container

- Create Permission Token for a User (One Table)

- Make Generic REST API Request

- Make Generic REST API Request (Bulk Write)

Optional: Centralized data access via ZappySys Data Gateway

In some situations, you may need to provide Cosmos DB data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

- Centralized data access: The data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies the management process.

- Centralized access control: Since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | | |
| Central access control | | |
| More flexible cost | | |

To achieve this, you must first create a data source in the Data Gateway (server-side) and then create an ODBC data source in Informatica PowerCenter (client-side) to connect to it.

Let's not wait and get going!

Create Cosmos DB data source in the gateway

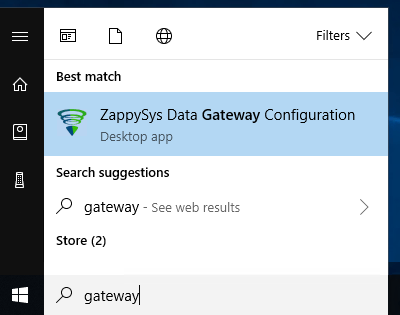

In this section we will create a data source for Cosmos DB in the Data Gateway. Let's follow these steps to accomplish that:

-

Search for

gatewayin the Windows Start Menu and open ZappySys Data Gateway Configuration:

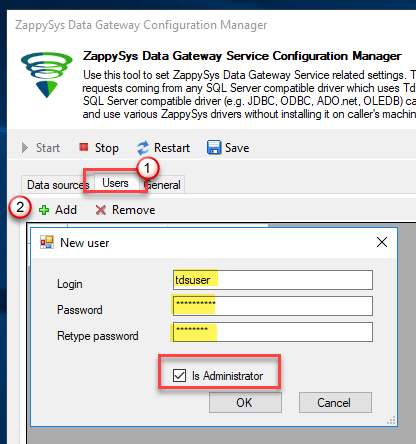

-

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

-

In the Login field enter a username, e.g.,

john - Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

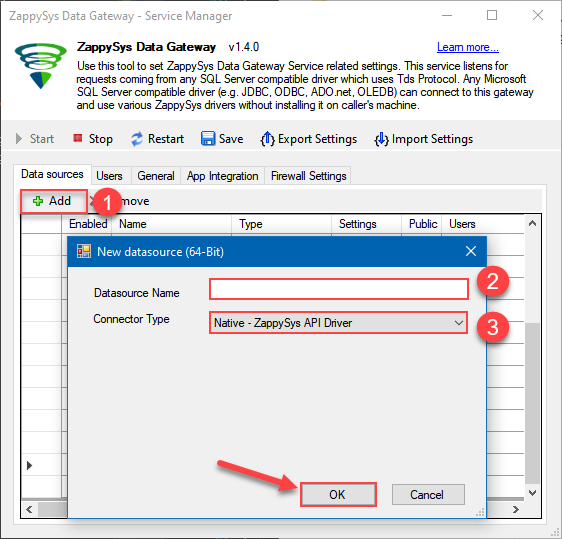

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys API Driver

- Finally, click OK

CosmosDbDSNZappySys API Driver

-

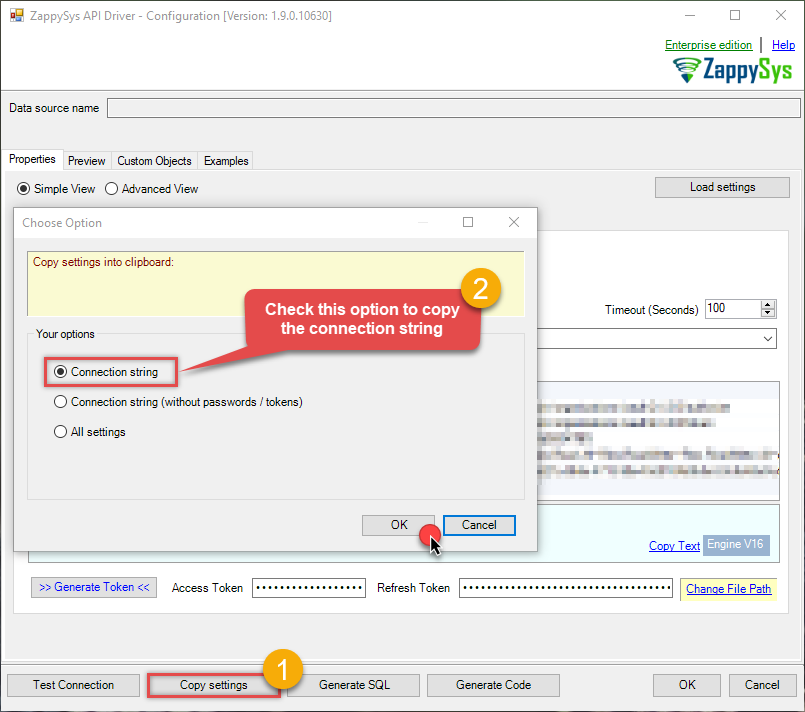

When the ZappySys API Driver configuration window opens, go back to ODBC Data Source Administrator where you already have the Cosmos DB ODBC data source created and configured, and follow these steps on how to Import data source configuration into the Gateway:

-

Open ODBC data source configuration and click Copy settings:

ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN

-

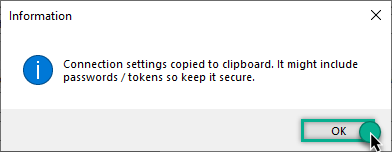

The window opens, telling us the connection string was successfully copied to the clipboard:

-

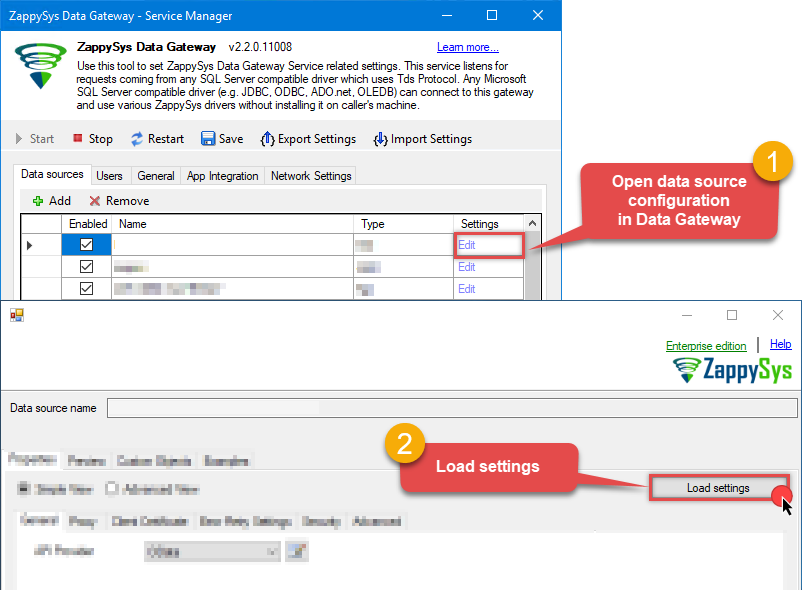

Then go to Data Gateway configuration and in data source configuration window click Load settings:

CosmosDbDSNZappySys API Driver - Configuration [Version: 2.0.1.10418]ZappySys API Driver - Cosmos DBRead and write Azure Cosmos DB data effortlessly. Query, integrate, and manage databases, containers, documents, and users — almost no coding required.CosmosDbDSN

-

Once a window opens, just paste the settings by pressing

CTRL+Vor by clicking right mouse button and then Paste option.

-

Open ODBC data source configuration and click Copy settings:

-

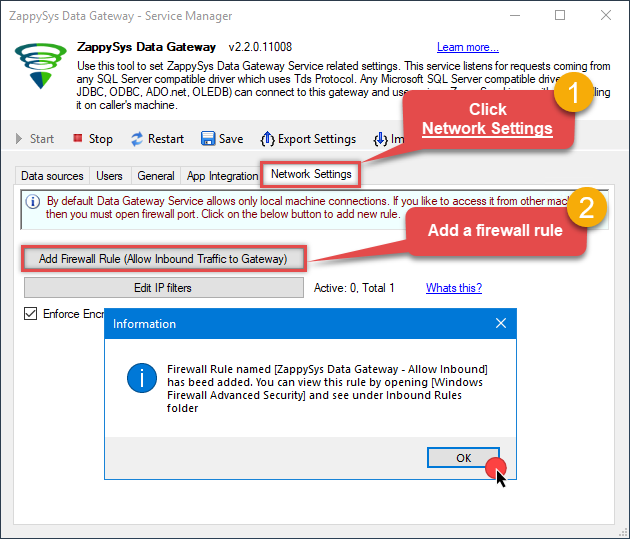

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

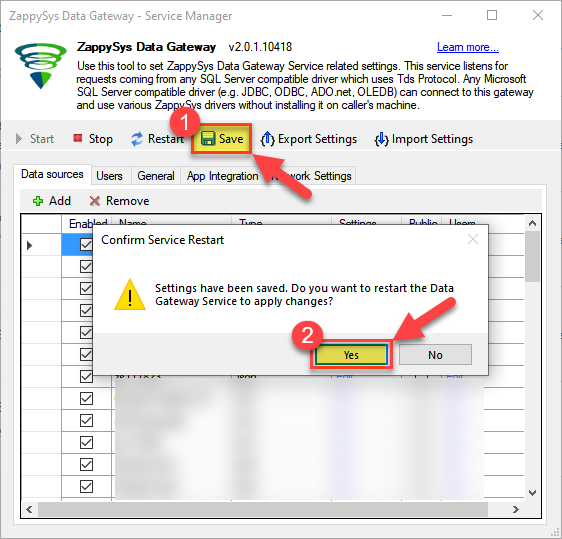

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Create ODBC data source to connect to the gateway

In this part we will create an ODBC data source to connect to the ZappySys Data Gateway from Informatica PowerCenter. To achieve that, let's perform these steps:

-

Search for

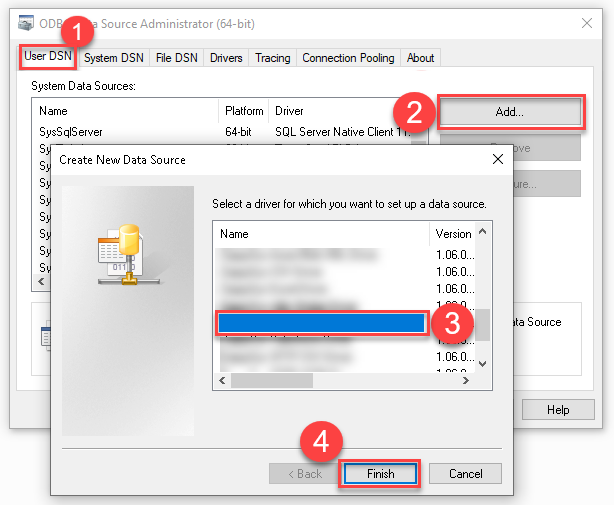

odbcand open the ODBC Data Sources (64-bit):

-

Create a User data source (User DSN) based on the ODBC Driver 17 for SQL Server driver:

ODBC Driver 17 for SQL Server If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version.

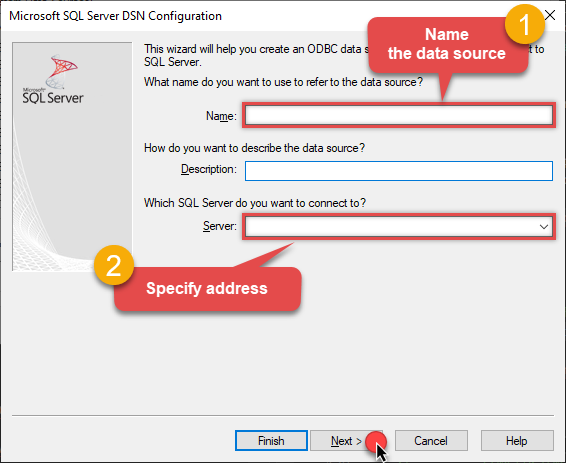

If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version. -

Then set a Name for the data source (e.g.

Gateway) and the address of the Data Gateway:ZappySysGatewayDSNlocalhost,5000 Make sure you separate the hostname and port with a comma, e.g.

Make sure you separate the hostname and port with a comma, e.g.localhost,5000. -

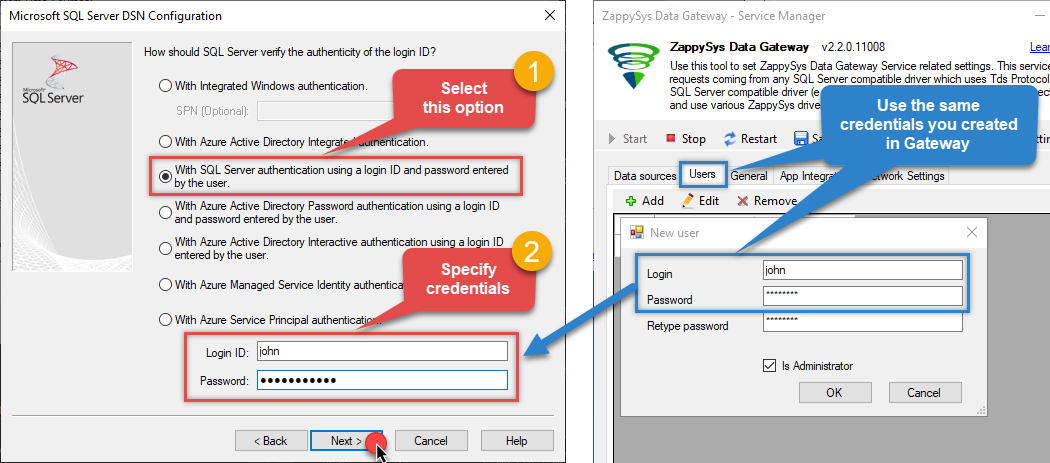

Proceed with the authentication part:

- Select SQL Server authentication

-

In the Login ID field enter the user name you created in the Data Gateway, e.g.,

john - Set Password to the one you configured in the Data Gateway

-

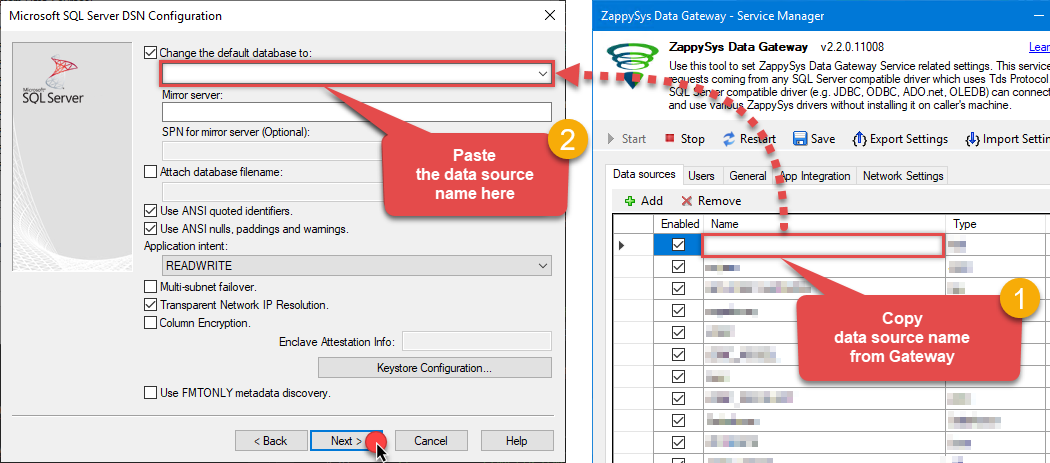

Then set the default database property to

CosmosDbDSN(the one we used in the Data Gateway):CosmosDbDSNCosmosDbDSN Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step).

Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step). -

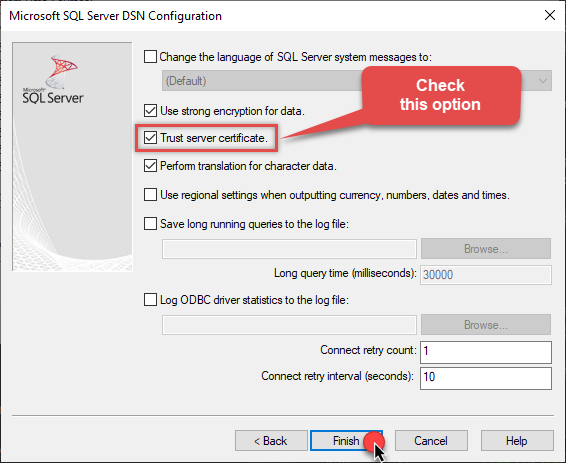

Continue by checking the Trust server certificate option:

-

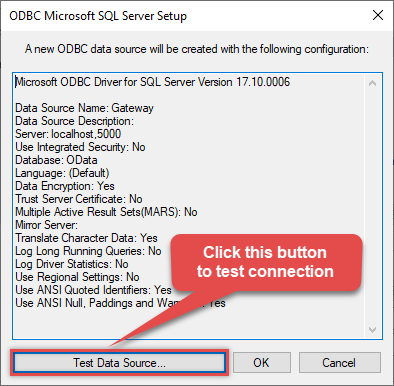

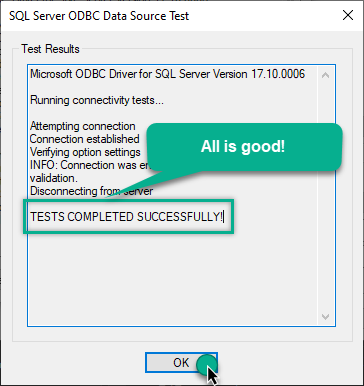

Once you do that, test the connection:

-

If the connection is successful, everything is good:

-

Done!

We are ready to move to the final step. Let's do it!
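The DSN settings chosen above can also be expressed as a DSN-less ODBC connection string, which is useful if you want to verify the gateway from a script before opening Informatica. The sketch below assembles such a string in Python; it assumes the standard ODBC Driver 17 for SQL Server keywords and reuses the example values from this section (localhost,5000, CosmosDbDSN, john). The function name is ours, and the password shown is a placeholder.

```python
def gateway_connection_string(host: str, port: int, data_source: str,
                              user: str, password: str) -> str:
    """Compose a DSN-less ODBC connection string for the Data Gateway."""
    parts = {
        "DRIVER": "{ODBC Driver 17 for SQL Server}",
        "SERVER": f"{host},{port}",       # comma, not colon, between host and port
        "DATABASE": data_source,          # the data source name used in the gateway
        "UID": user,
        "PWD": password,                  # values containing ';' would need {} quoting
        "TrustServerCertificate": "yes",  # mirrors the Trust server certificate checkbox
    }
    return ";".join(f"{key}={value}" for key, value in parts.items()) + ";"


if __name__ == "__main__":
    print(gateway_connection_string("localhost", 5000, "CosmosDbDSN",
                                    "john", "your-password"))
```

A string like this can be passed to an ODBC client library such as pyodbc (pyodbc.connect(...)) to confirm that the gateway accepts the login before you rely on the DSN in Informatica.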

Access data in Informatica PowerCenter via the gateway

Finally, we are ready to read data from Cosmos DB in Informatica PowerCenter via the Data Gateway. Follow these final steps:

- Go back to Informatica PowerCenter.

- Open Informatica Mapping Designer (click the [D] icon)

- Click on the Source icon to switch to the Sources designer

- From the top menu, click Sources > Import from Database …

- Select the ODBC data source from the dropdown (find the gateway DSN we created in the previous section)

- Click the Connect button to get a list of tables. Any array node is listed as a table. You will also see array nodes with parent columns (e.g. value_with_parent). You may get warnings like the ones below, but they are harmless, so just dismiss them by clicking OK.

DLL name entry missing from C:\Informatica\PowerCenter8.6.1\client\bin\powrmart.ini Section = ODBCDLL Entry = ZappySys JSON Driver

----------------------------------------

Using EXTODBC.DLL to support ZappySys JSON Driver. For native support of ZappySys JSON Driver make an entry in the .ini file.

- Read the data the same way we discussed at the beginning of this article.

- That's it!

Now you can connect to Cosmos DB data in Informatica PowerCenter via the ZappySys Data Gateway.

If you are prompted for credentials, enter the ones you configured in the Data Gateway, e.g. john and your password.

Conclusion

In this guide, we demonstrated how to connect to Cosmos DB in Informatica PowerCenter and integrate your data — all without writing complex code.

Ready to get started? Download ODBC PowerPack now or ping us via chat if you still need help.