Introduction

You can connect to your Jira data from Power BI via the high-performance Jira ODBC Driver. We'll walk you through the entire setup.

Let's not waste time and get started!

Video tutorial

Watch this quick video to see the integration in action. It walks you through the end-to-end setup, including:

- Installing the ODBC PowerPack

- Configuring a secure connection to Jira

- Working with Jira data directly inside Power BI

- Exploring advanced API Driver features

Ready to dive in? Download the product to jump right in, or follow the step-by-step guide below to see how it works.

Create data source using Jira ODBC Driver

Step-by-step instructions

To get data from Jira using Power BI, we first need to create an ODBC data source. We will later read this data in Power BI. Perform these steps:

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

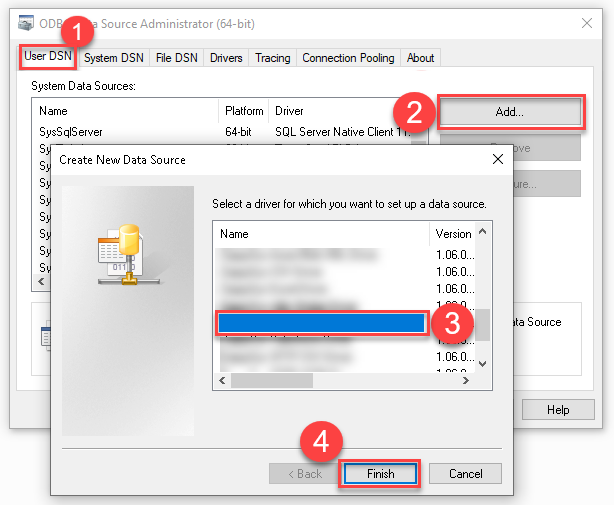

Create a User data source (User DSN) based on the ZappySys API Driver driver:

ZappySys API Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this

When deployed to production, Power BI runs under a Service Account. Therefore, for the production environment, you must create and use a System DSN. -

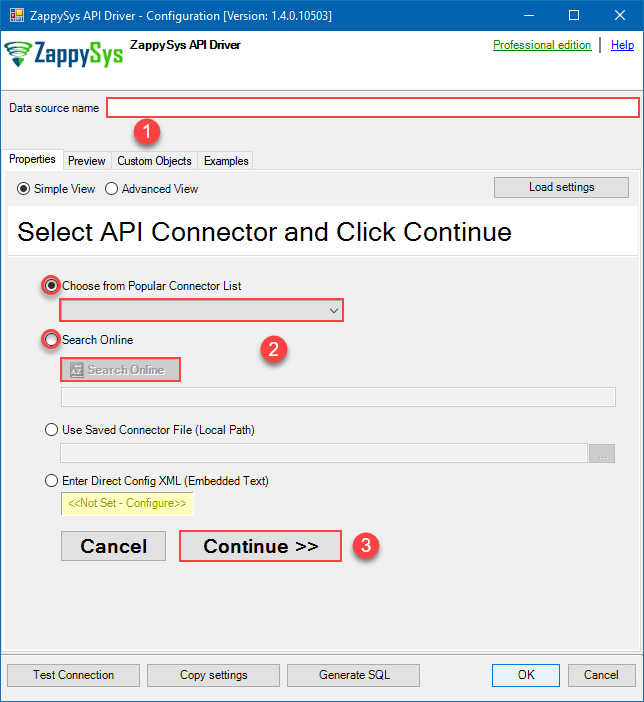

When the Configuration window appears give your data source a name if you haven't done that already, then select "Jira" from the list of Popular Connectors. If "Jira" is not present in the list, then click "Search Online" and download it. Then set the path to the location where you downloaded it. Finally, click Continue >> to proceed with configuring the DSN:

JiraDSNJira

- Select your authentication scenario below. Each scenario shows how to:

- Configure the authentication in Jira.

- Enter those details into the ZappySys API Driver data source configuration.

API Key based Authentication

Jira authentication

First, log in to your Atlassian account and go to your Jira profile:

- Go to Profile > Security.

- Click Create and manage API tokens.

- Then click the Create API token button and give your token a label.

- When the window with the new API token appears, copy the token and use it in this connection manager.

- That's it!

API Connection Manager configuration

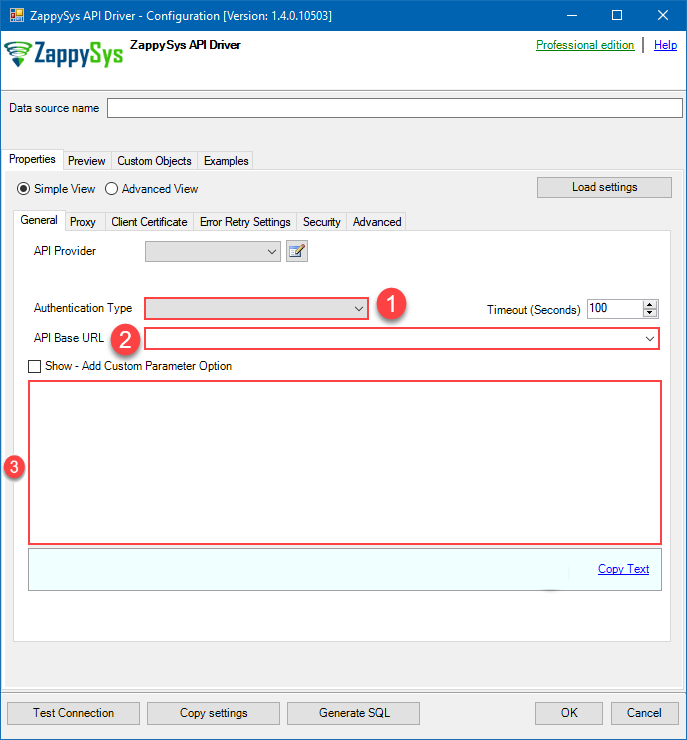

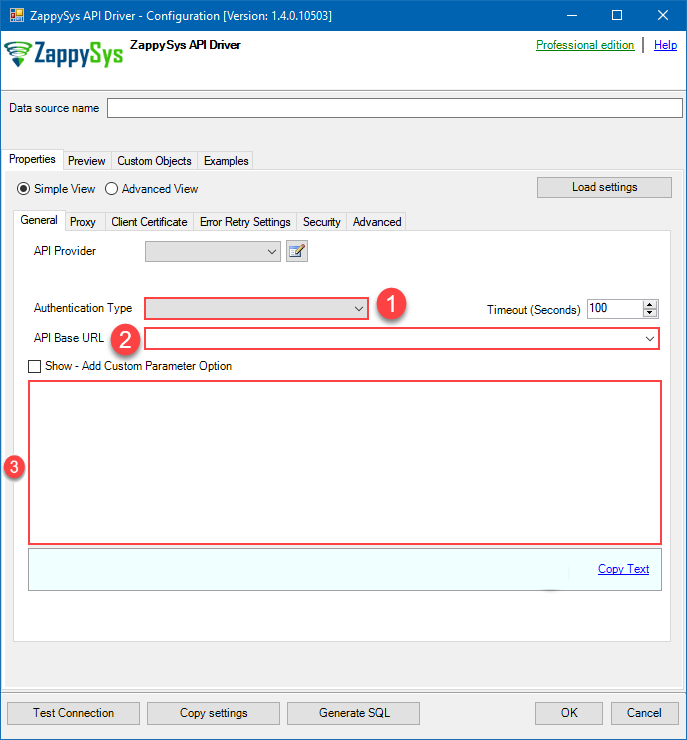

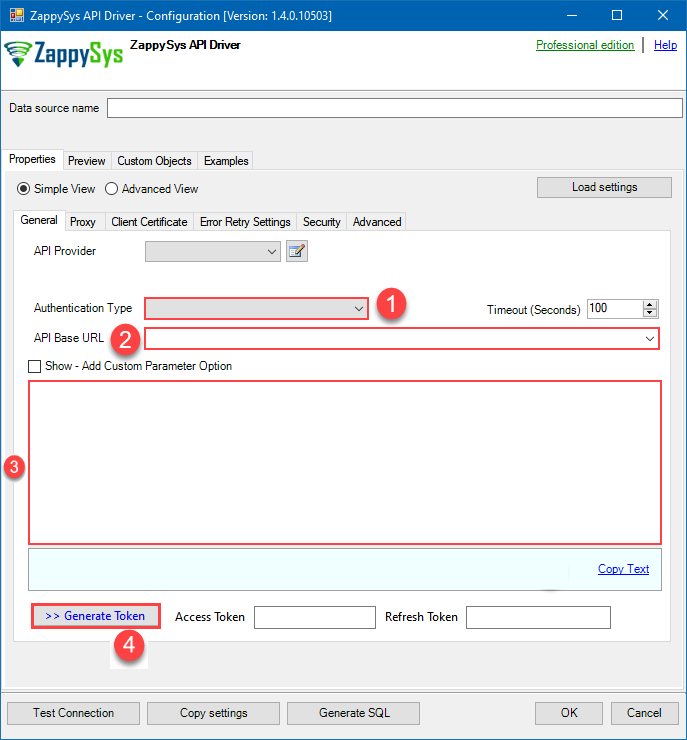

Just perform these simple steps to finish the authentication configuration:

- Set Authentication Type to API Key based Authentication [Http].

- Optional step. Modify API Base URL if needed (in most cases the default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, click OK.

The settings for this scenario look like this:

- Data source name: JiraDSN

- Connector: Jira

- Authentication Type: API Key based Authentication [Http]

- API Base URL: https://[$Subdomain$].atlassian.net/rest/api/3

- Required parameters: Subdomain, Atlassian User Name (email), API Key

- Optional parameters (default values): CustomColumnsRegex, RetryMode = RetryWhenStatusCodeMatch, RetryStatusCodeList = 429, RetryCountMax = 5, RetryMultiplyWaitTime = True

Find full details in the Jira Connector authentication reference.
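Behind this scenario, the Jira Cloud REST API accepts your Atlassian account email and API token as HTTP Basic credentials. The standard-library sketch below prepares (but does not send) a request to the /myself endpoint, which is a handy way to check an email/token pair outside the driver. The helper names are hypothetical, not part of ZappySys or Atlassian tooling, and "mycompany" is a placeholder subdomain.

```python
import base64
from urllib.request import Request


def jira_base_url(subdomain: str) -> str:
    """Jira Cloud REST API v3 base URL, matching the default API Base URL."""
    return f"https://{subdomain}.atlassian.net/rest/api/3"


def basic_auth_header(email: str, api_token: str) -> str:
    """HTTP Basic value: base64 of 'email:token'."""
    raw = f"{email}:{api_token}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def build_myself_request(subdomain: str, email: str, api_token: str) -> Request:
    """Prepare (but do not send) GET /myself to verify the credentials."""
    req = Request(jira_base_url(subdomain) + "/myself", method="GET")
    req.add_header("Authorization", basic_auth_header(email, api_token))
    req.add_header("Accept", "application/json")
    return req


req = build_myself_request("mycompany", "me@example.com", "<your-api-token>")
```

To actually send it, pass the request to urllib.request.urlopen(); a successful response containing your account details means the email and token are valid.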

Personal Access Token (PAT) Authentication

Jira authentication

Follow the official Atlassian instructions on how to create a PAT (Personal Access Token) for Jira.

API Connection Manager configuration

Just perform these simple steps to finish the authentication configuration:

- Set Authentication Type to Personal Access Token (PAT) Authentication [Http].

- Optional step. Modify API Base URL if needed (in most cases the default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, click OK.

The settings for this scenario look like this:

- Data source name: JiraDSN

- Connector: Jira

- Authentication Type: Personal Access Token (PAT) Authentication [Http]

- API Base URL: https://[$Subdomain$].atlassian.net/rest/api/3

- Required parameters: Subdomain, Token (PAT Bearer Token)

- Optional parameters (default values): CustomColumnsRegex, RetryMode = RetryWhenStatusCodeMatch, RetryStatusCodeList = 429, RetryCountMax = 5, RetryMultiplyWaitTime = True

Find full details in the Jira Connector authentication reference.
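Unlike the API key scenario, a PAT is sent as a bearer token rather than as Basic credentials. This hedged sketch prepares such a request with the standard library; jira.example.com is a hypothetical self-hosted base URL, so substitute whatever you entered as API Base URL.

```python
from urllib.request import Request


def build_pat_request(base_url: str, token: str, path: str = "/myself") -> Request:
    """Prepare (but do not send) a GET request authenticated with a PAT."""
    # Drop a trailing slash so "base/" + "/myself" does not produce "//".
    req = Request(base_url.rstrip("/") + path, method="GET")
    # The PAT goes in the Authorization header using the Bearer scheme.
    req.add_header("Authorization", f"Bearer {token}")
    req.add_header("Accept", "application/json")
    return req


# Hypothetical base URL; replace it with your own API Base URL.
request = build_pat_request("https://jira.example.com/rest/api/2/", "<your-PAT>")
```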

OAuth (**Must change API Base URL to V3 OAuth**)

Jira authentication

You must create an OAuth app in the Atlassian Developer Console, available at https://developer.atlassian.com/console/myapps/.

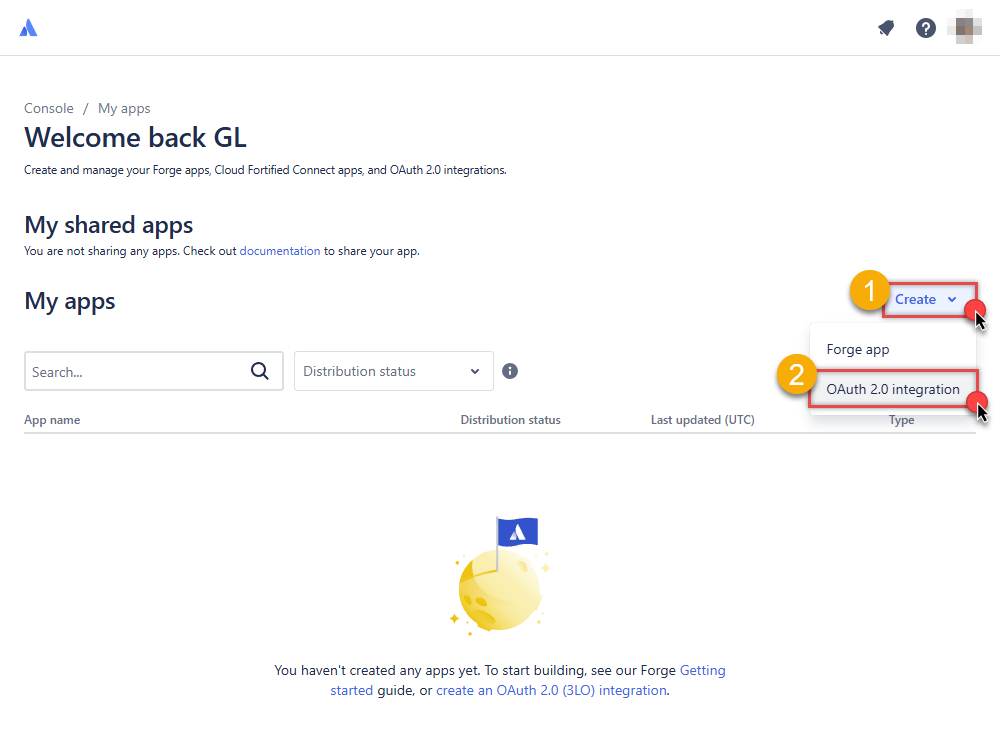

Firstly, login into your Atlassian account and then create Jira application:- Go to Atlassian Developer area.

-

Click Create and select OAuth 2.0 integration item to create an OAuth app:

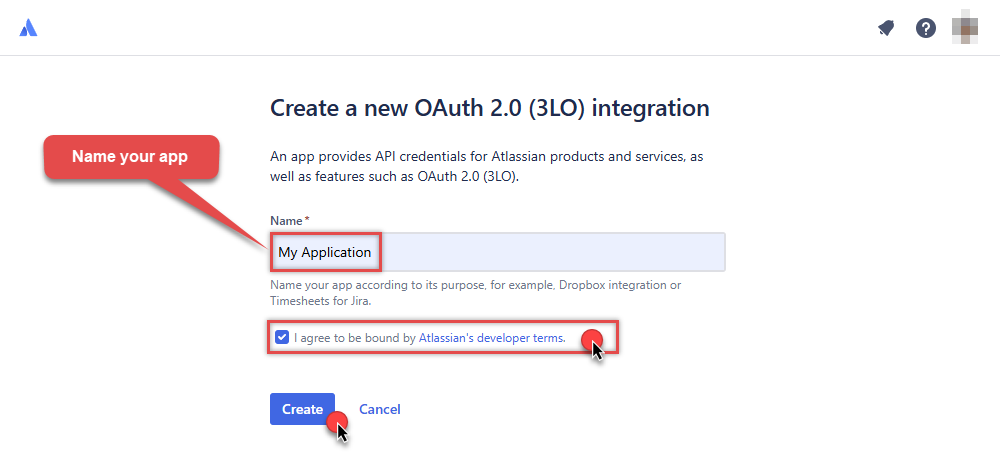

-

Give your app a name, accept the terms and hit Create:

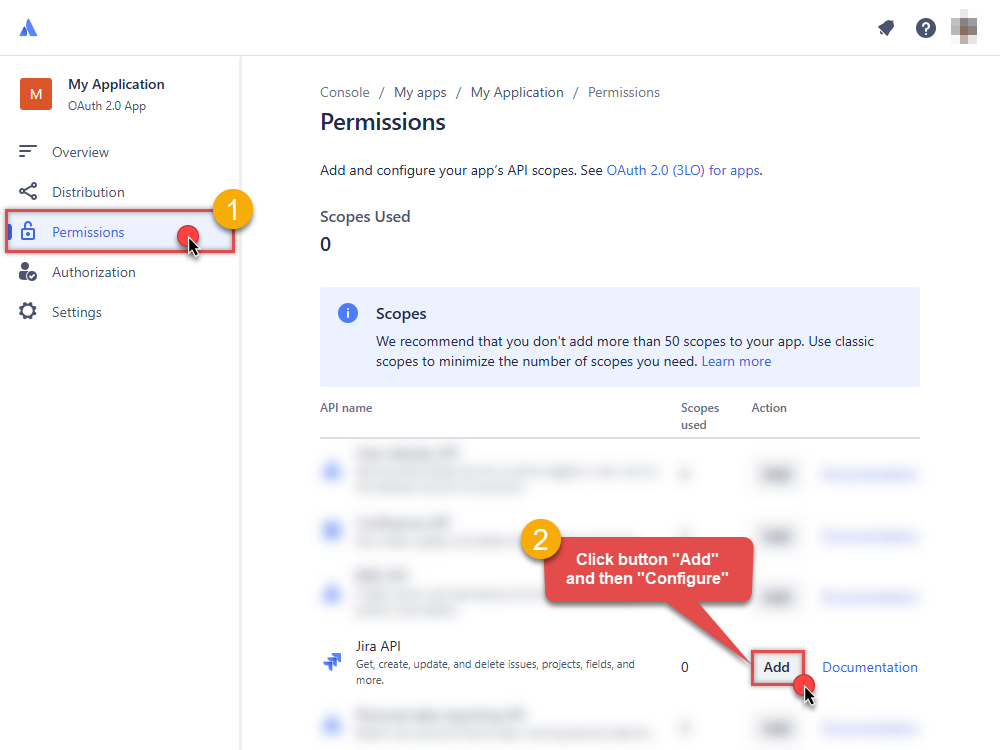

-

To enable permissions/scopes for your application, click Permissions tab, then hit Add button, and click Configure button, once it appears:

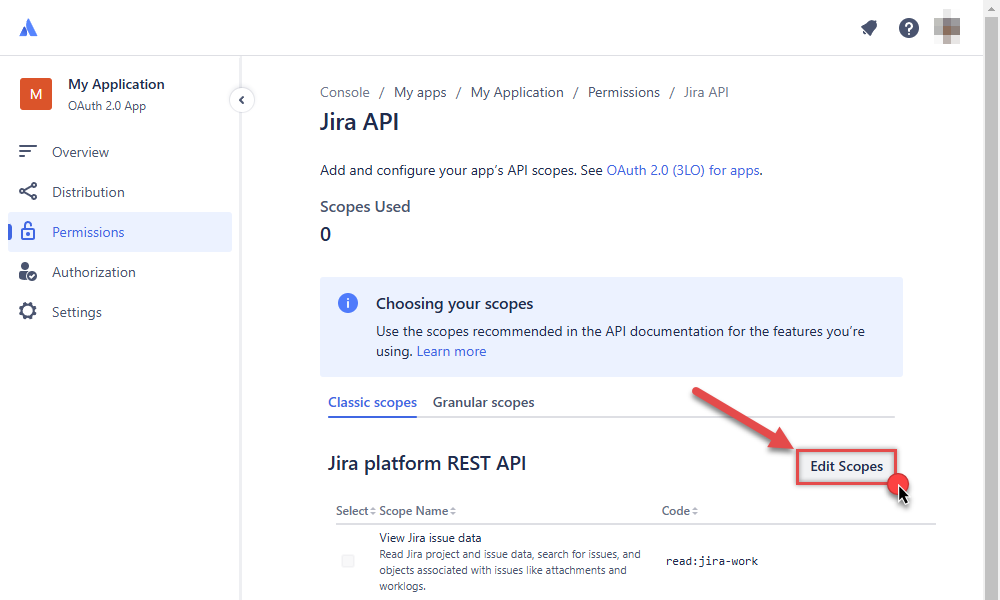

-

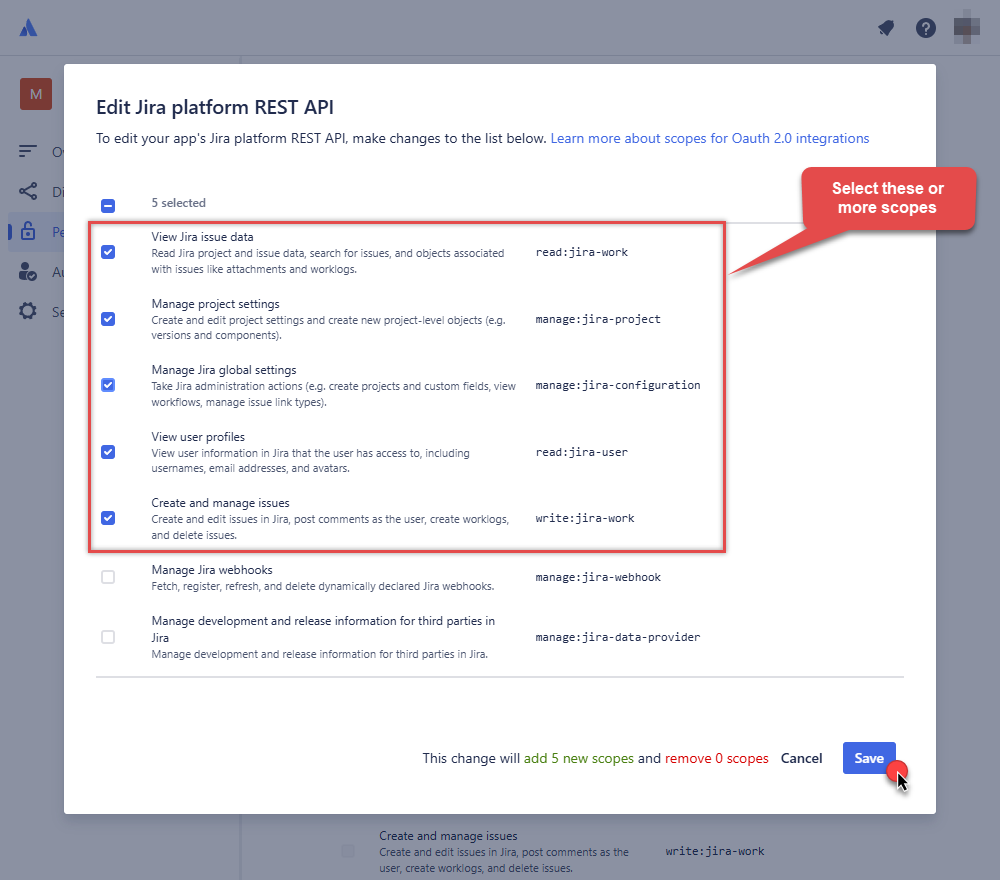

Continue by hitting Edit Scopes button to assign scopes for the application:

-

Select these scopes or all of them:

-

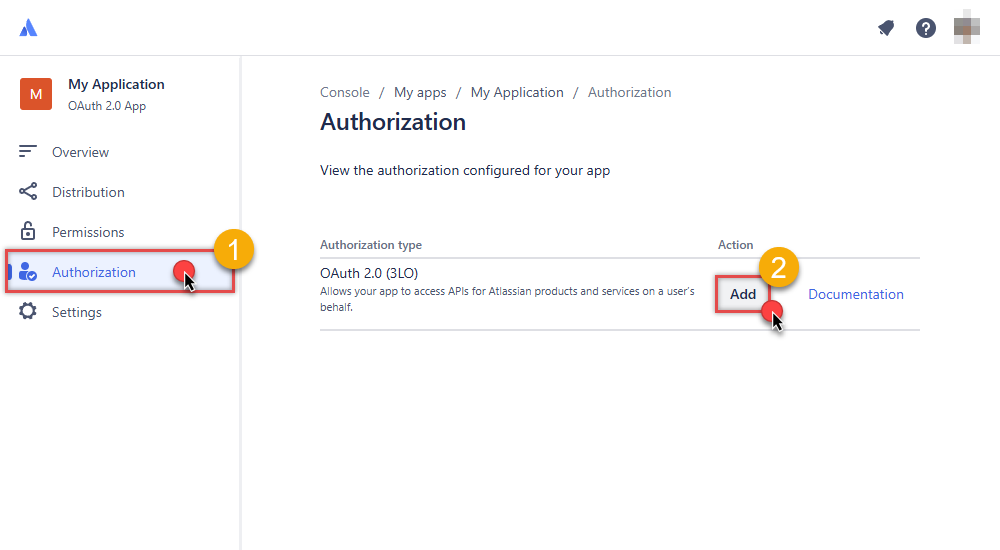

Then click Authorization option on the left and click Add button:

-

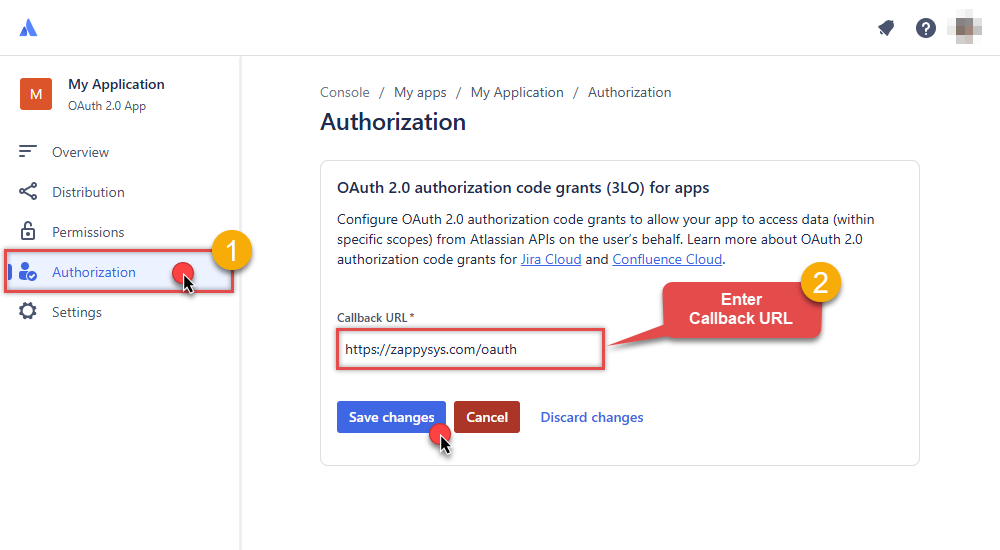

Enter your own Callback URL (Redirect URL) or simply enter

https://zappysys.com/oauth, if you don't have one:

-

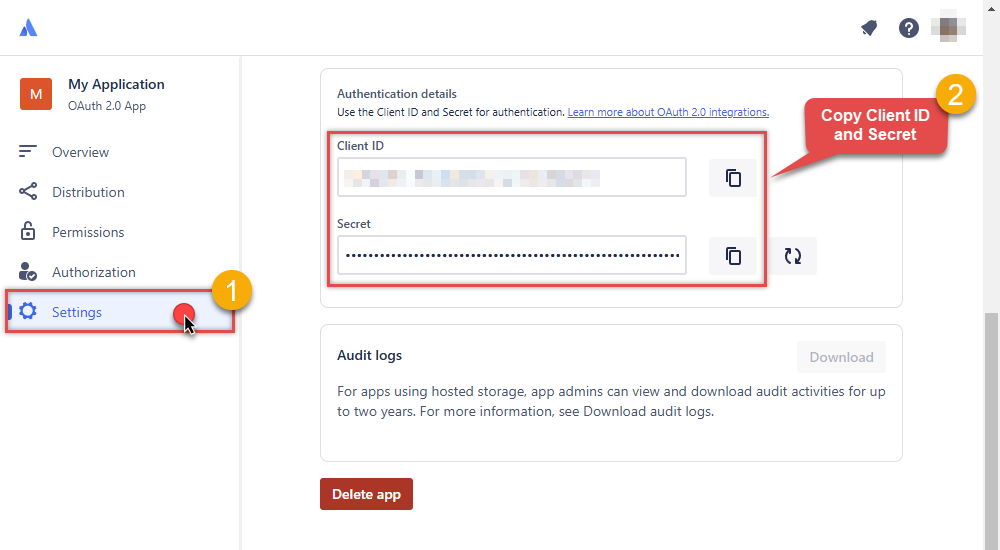

Then hit Settings option and copy Client ID and Secret into your favorite text editor (we will need them in the next step):

-

Now go to SSIS package or ODBC data source and in OAuth authentication set these parameters:

- For ClientId parameter use Client ID value from the previous steps.

- For ClientSecret parameter use Secret value from the previous steps.

- For Scope parameter use the Scopes you set previously (specify them all here):

- offline_access (a must)

- read:jira-user

- read:jira-work

- write:jira-work

- manage:jira-project

- manage:jira-configuration

NOTE: A full list of available scopes is available in Atlassian documentation. -

For Subdomain parameter use your Atlassian subdomain value

(e.g.

mycompany, if full host name ismycompany.atlassian.net).

- Click Generate Token to generate tokens.

- Finally, select Organization Id from the drop down.

- That's it! You can now use Jira Connector!
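The Generate Token button runs Atlassian's OAuth 2.0 (3LO) authorization-code flow for you. To make the parameters above less opaque, here is a hedged Python sketch of the consent URL that flow starts from, based on Atlassian's published 3LO endpoints; it is an illustration, not how the ZappySys driver is implemented. It also shows the api.atlassian.com base URL that OAuth tokens are used with, which is why this scenario requires a different API Base URL. Treating the driver's Organization Id as Atlassian's cloud ID is an assumption.

```python
from urllib.parse import quote, urlencode

AUTHORIZE_URL = "https://auth.atlassian.com/authorize"

# Scopes from the list above; offline_access is what allows a refresh token.
SCOPES = (
    "offline_access",
    "read:jira-user",
    "read:jira-work",
    "write:jira-work",
    "manage:jira-project",
    "manage:jira-configuration",
)


def build_authorize_url(client_id: str, redirect_uri: str, state: str,
                        scopes=SCOPES) -> str:
    """Browser URL that starts the 3LO consent flow."""
    params = {
        "audience": "api.atlassian.com",
        "client_id": client_id,
        "scope": " ".join(scopes),    # scopes are space-separated
        "redirect_uri": redirect_uri,  # must match the app's Callback URL
        "state": state,                # random value checked on the callback
        "response_type": "code",
        "prompt": "consent",
    }
    # quote_via=quote encodes spaces as %20 (not '+'); with urlencode's
    # default safe='' it also encodes ':' and '/'.
    return AUTHORIZE_URL + "?" + urlencode(params, quote_via=quote)


def oauth_api_base_url(cloud_id: str) -> str:
    """Jira REST API v3 base URL for requests made with an OAuth token."""
    return f"https://api.atlassian.com/ex/jira/{cloud_id}/rest/api/3"
```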

API Connection Manager configuration

Just perform these simple steps to finish the authentication configuration:

- Set Authentication Type to OAuth (**Must change API Base URL to V3 OAuth**) [OAuth].

- Modify API Base URL: for this authentication type it must be changed to the V3 OAuth URL, as the authentication type name indicates (the default URL is not suitable here).

- Fill in all the required parameters and set optional parameters if needed.

- Click the Generate Token button to generate the tokens.

- Finally, click OK.

The settings for this scenario look like this:

- Data source name: JiraDSN

- Connector: Jira

- Authentication Type: OAuth (**Must change API Base URL to V3 OAuth**) [OAuth]

- API Base URL (default, to be changed): https://[$Subdomain$].atlassian.net/rest/api/3

- Required parameters: ClientId, ClientSecret, Scope, ReturnUrl, Organization Id (select after clicking Generate Token)

- Optional parameters (default values): Custom Columns for output (select after clicking Generate Token), RetryMode = RetryWhenStatusCodeMatch, RetryStatusCodeList = 429, RetryCountMax = 5, RetryMultiplyWaitTime = True

Find full details in the Jira Connector authentication reference.

Find full details in the Jira Connector authentication reference. -

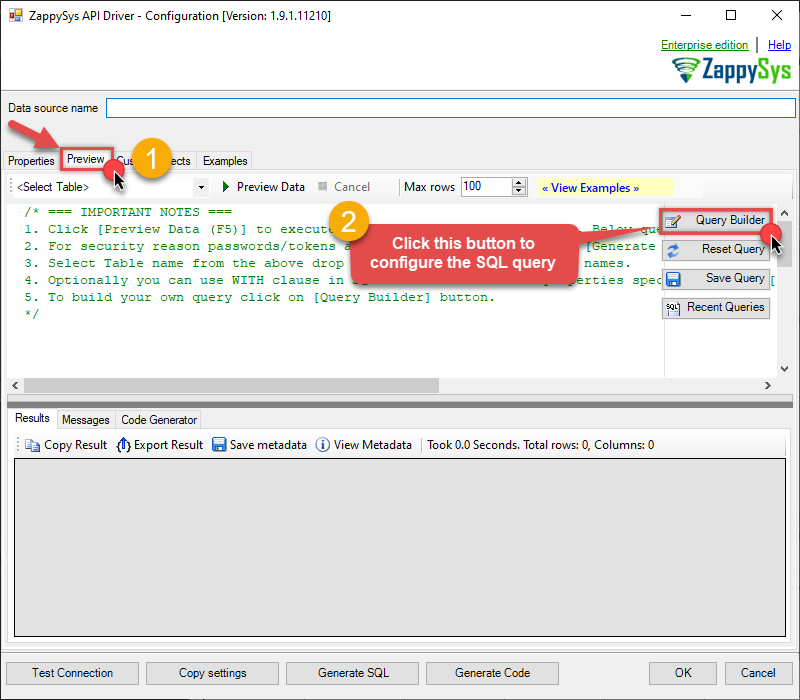

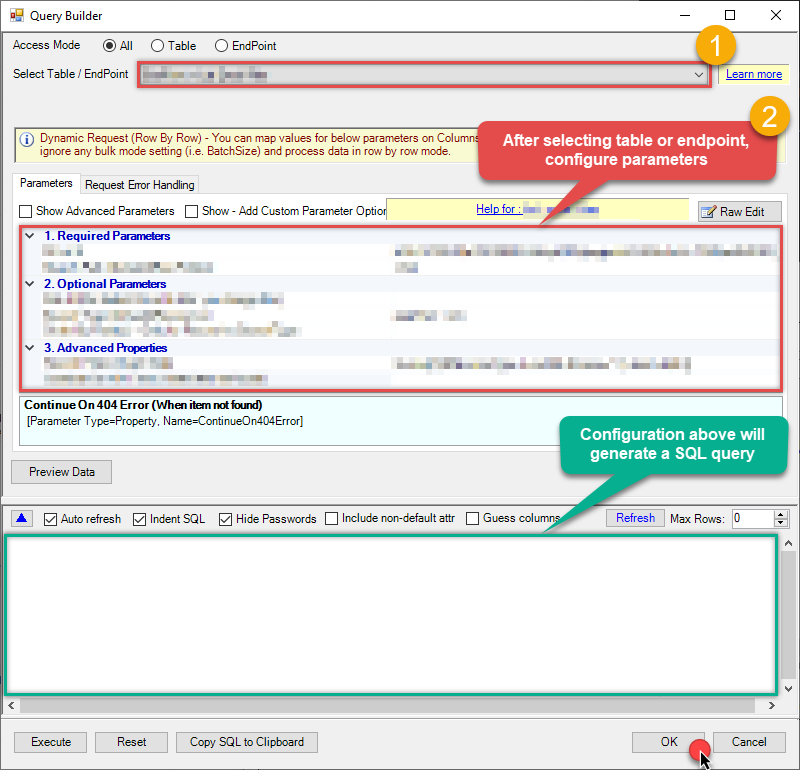

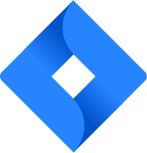

Once the data source connection has been configured, it's time to configure the SQL query. Select the Preview tab and then click Query Builder button to configure the SQL query:

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

- Start by selecting the Table or Endpoint you are interested in and then configure the parameters. This will generate a query that we will use in Power BI to retrieve data from Jira. Click OK to use this query in the next step.

```sql
SELECT * FROM Issues

--//Query single issue by numeric Issue Id
--SELECT * FROM Issues Where Id=101234

--//Query issue by numeric Issue Ids (multiple)
--SELECT * FROM Issues WITH(SearchBy='Key', Key='101234,101235,101236')

--//Query issue by Issue Key(s) (alpha-numeric)
--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11')
--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11,PROJ-12,PROJ-13')

--//Query issue by project(s)
--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ')
--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ,KAN,CS')

--//Query issue by JQL expression
--SELECT * FROM Issues WITH(SearchBy='Jql', Jql='status IN (Done, Closed) AND created > -5d' )
```

Some parameters configured in this window are passed to the Jira API (e.g., filtering parameters). This means the filtering is done on the server side (instead of the client side), so you get only the meaningful data, much faster.

- Now click the Preview Data button to preview the data using the generated SQL query. If you are satisfied with the result, use this query in Power BI.

You can also access data quickly from the tables dropdown by selecting <Select table>.

Note that a WHERE clause or the LIMIT keyword is applied on the client side: the whole result set is retrieved from the Jira API first, and only then is the filtering applied. Whenever possible, use parameters in Query Builder to filter the data on the server side (on Jira servers).

Click OK to finish creating the data source.
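If you generate these queries from another program (for example, to switch between projects), the WITH(...) options shown in the Query Builder sample can be assembled as plain strings. The sketch below mirrors the SearchBy='Key', 'Project' and 'Jql' forms from that sample. The helper names are hypothetical, the issue-key pattern assumes Jira's default project-key rule, and doubling single quotes inside values assumes the driver follows the standard SQL escaping rule.

```python
import re

# Numeric issue IDs (101234) or issue keys such as PROJ-11.
# Assumption: project keys start with an uppercase letter (Jira's default).
ISSUE_REF = re.compile(r"^(\d+|[A-Z][A-Z0-9_]*-\d+)$")


def sql_literal(value: str) -> str:
    """Quote a value as a SQL string literal (assumes '' escapes a quote)."""
    return "'" + value.replace("'", "''") + "'"


def issues_by_keys(refs: list) -> str:
    """Issues by numeric IDs or keys, e.g. ['PROJ-11', 'PROJ-12']."""
    bad = [r for r in refs if not ISSUE_REF.match(r)]
    if bad:
        raise ValueError(f"not an issue ID or key: {bad}")
    return f"SELECT * FROM Issues WITH(SearchBy='Key', Key={sql_literal(','.join(refs))})"


def issues_by_projects(projects: list) -> str:
    """Issues from one or more projects, e.g. ['PROJ', 'KAN', 'CS']."""
    return f"SELECT * FROM Issues WITH(SearchBy='Project', Project={sql_literal(','.join(projects))})"


def issues_by_jql(jql: str) -> str:
    """Issues matching a JQL expression (filtered on the Jira side)."""
    return f"SELECT * FROM Issues WITH(SearchBy='Jql', Jql={sql_literal(jql)})"
```

Because all three forms push the filter into the WITH(...) options, they keep the filtering on the server side, unlike a WHERE clause.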

Connect to Jira data in Power BI

Import data from a table or view

-

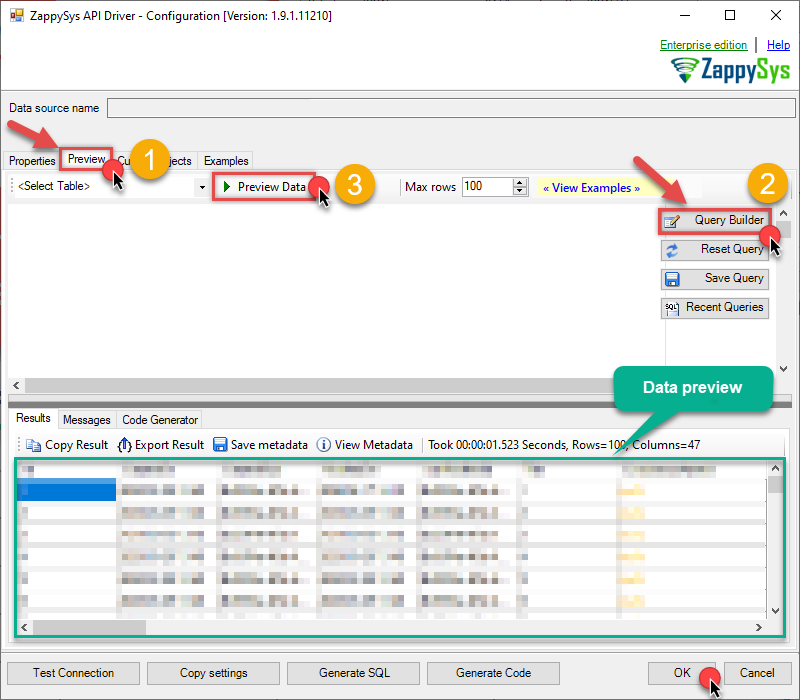

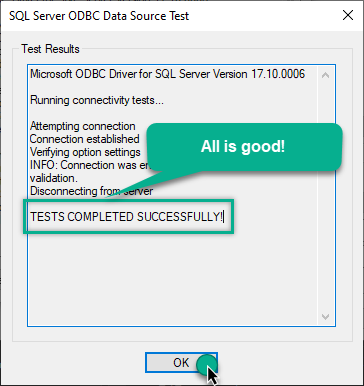

Once you open Power BI Desktop click Get Data to get data from ODBC:

-

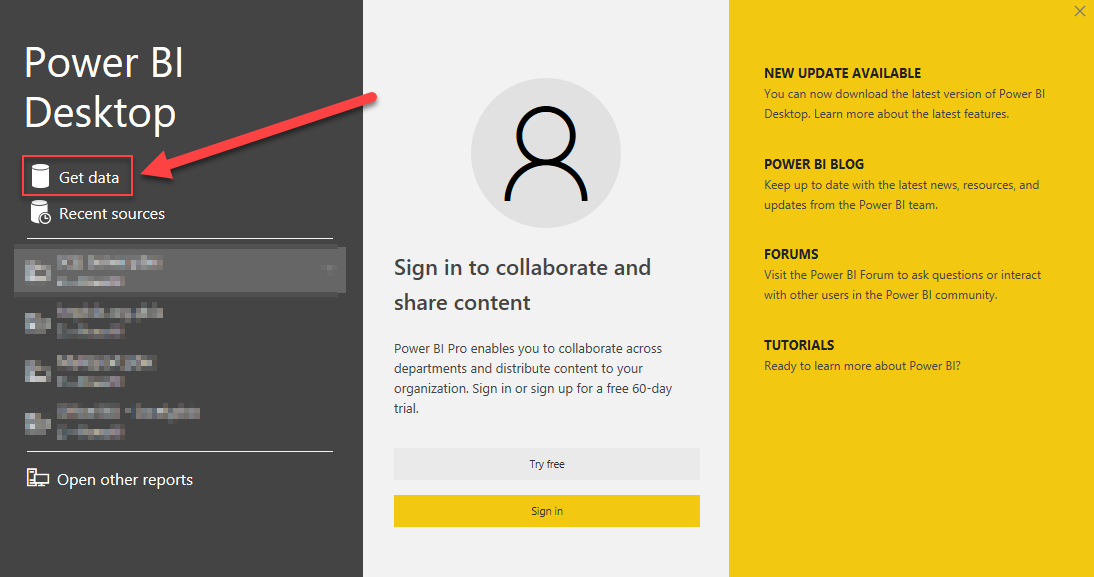

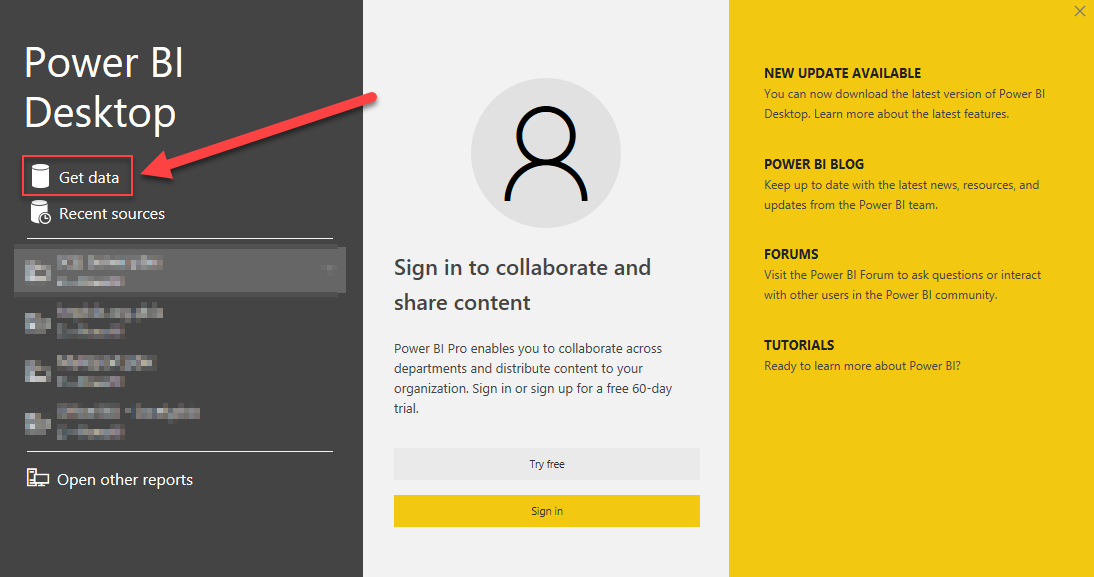

A window opens, and then search for "odbc" to get data from ODBC data source:

-

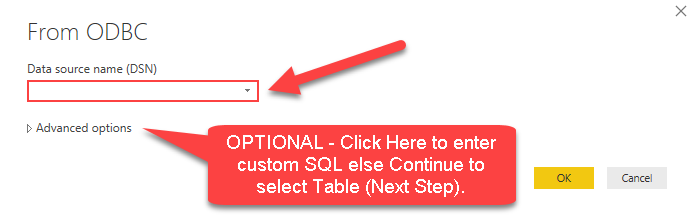

Another window opens and asks to select a Data Source we already created. Choose JiraDSN and continue:

JiraDSN

-

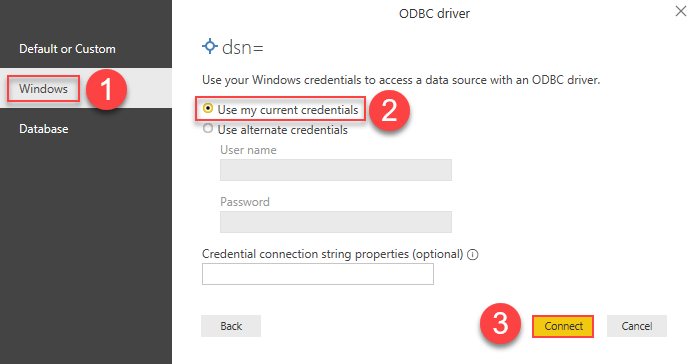

Most likely, you will be asked to authenticate to a newly created DSN. Just select Windows authentication option together with Use my current credentials option:

JiraDSN

-

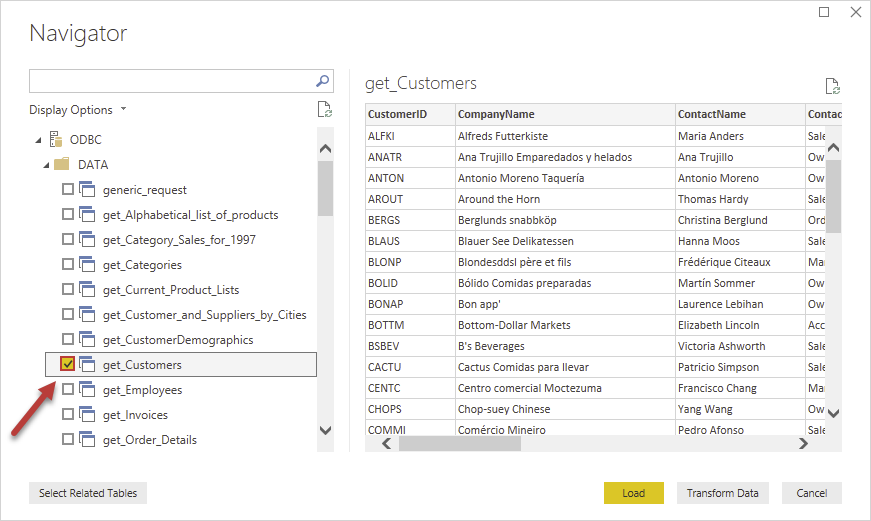

Finally, you will be asked to select a table or view to get data from. Select one and load the data!

-

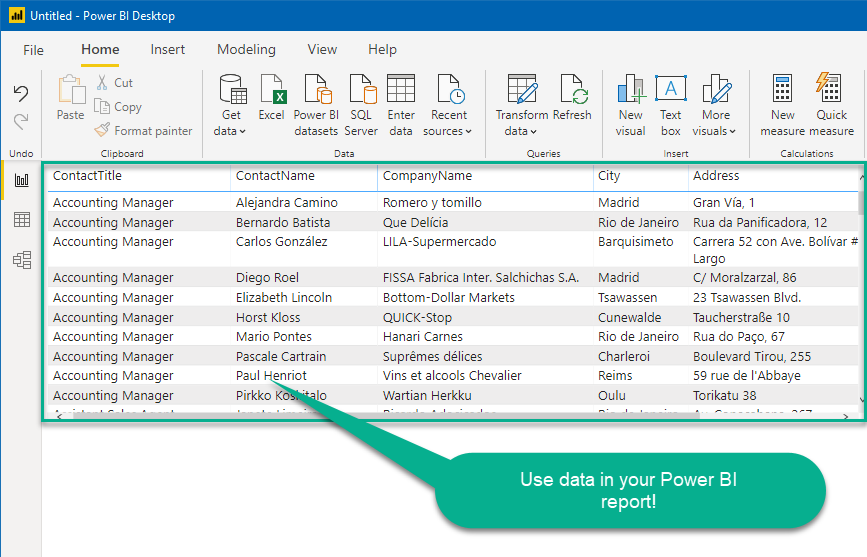

Finally, finally, read extracted data from Jira in a Power BI report:

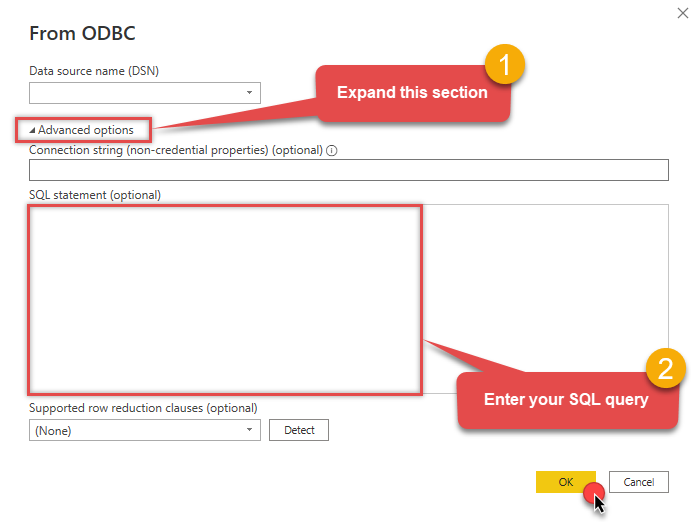

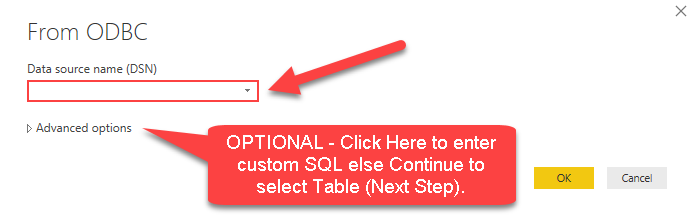

Import data using a SQL query

If you wish to import Jira data using a SQL query rather than a table, use the advanced options during the import steps. After selecting the DSN, expand Advanced options to see the SQL query editor and enter your query, for example:

```sql
SELECT * FROM Issues

--//Query single issue by numeric Issue Id
--SELECT * FROM Issues Where Id=101234

--//Query issue by numeric Issue Ids (multiple)
--SELECT * FROM Issues WITH(SearchBy='Key', Key='101234,101235,101236')

--//Query issue by Issue Key(s) (alpha-numeric)
--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11')
--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11,PROJ-12,PROJ-13')

--//Query issue by project(s)
--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ')
--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ,KAN,CS')

--//Query issue by JQL expression
--SELECT * FROM Issues WITH(SearchBy='Jql', Jql='status IN (Done, Closed) AND created > -5d' )
```

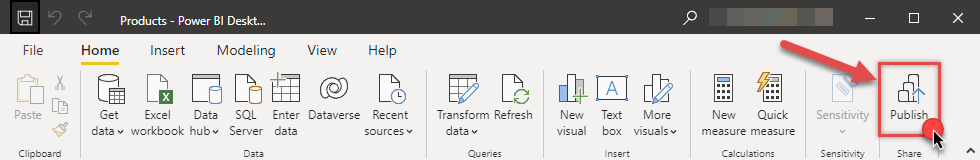

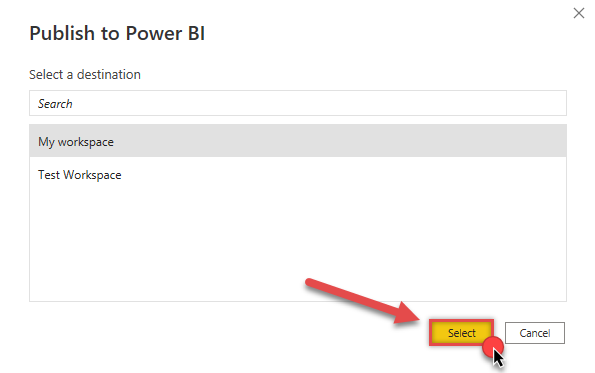

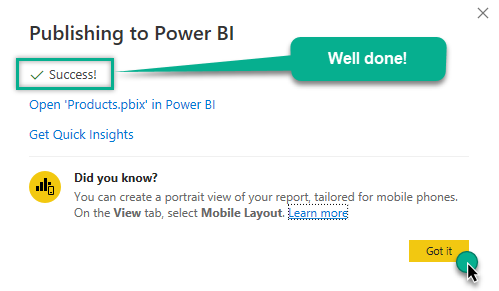

Publish Power BI report to Power BI service

Here are the instructions on how to publish a Power BI report to Power BI service from Power BI Desktop application:

- First, go to Power BI Desktop, open a Power BI report, and click the Publish button:

- Then select the workspace you want to publish the report to and click the Select button:

- Finally, if everything went right, you will see a window indicating success:

What's next? If you need to refresh the Power BI semantic model (dataset) periodically to keep the data accurate and up to date, you can do that with the Microsoft On-premises data gateway. The next section, Refresh the Power BI semantic model (dataset) via the gateway, shows how.

Refresh the Power BI semantic model (dataset) via the gateway

Power BI allows you to refresh semantic models (previously known as "datasets") that are based on data sources residing on-premises. This is achieved using the Microsoft On-premises data gateway. It acts as a secure bridge between Power BI cloud services and your local Jira ODBC data source:

There are two types of On-premises data gateways:

Standard mode:

- Supports Power BI and other Microsoft Cloud services

- Installs as a Windows service

- Starts automatically

- Supports centralized user access control

- Supports the DirectQuery feature

- Ideal for enterprise solutions

Personal mode:

- Supports Power BI services only

- Cannot run as a Windows service

- Stops when you sign out of Windows

- Does not support access control

- Does not support the DirectQuery feature

- Best for individual use and POC solutions

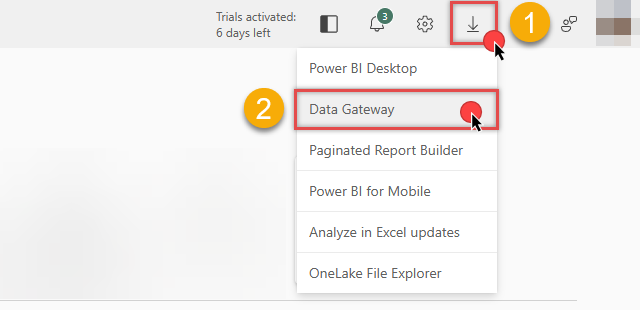

You can download the On-premises data gateway directly from the Microsoft Fabric or Power BI portals:

Below are instructions on how to refresh the semantic model using both gateway types.

Use the Standard mode gateway (recommended)

Best for enterprise production environments where multiple users need to share the same gateway connection.

Follow these steps to refresh a Power BI semantic model using the On-premises data gateway (Standard mode):

-

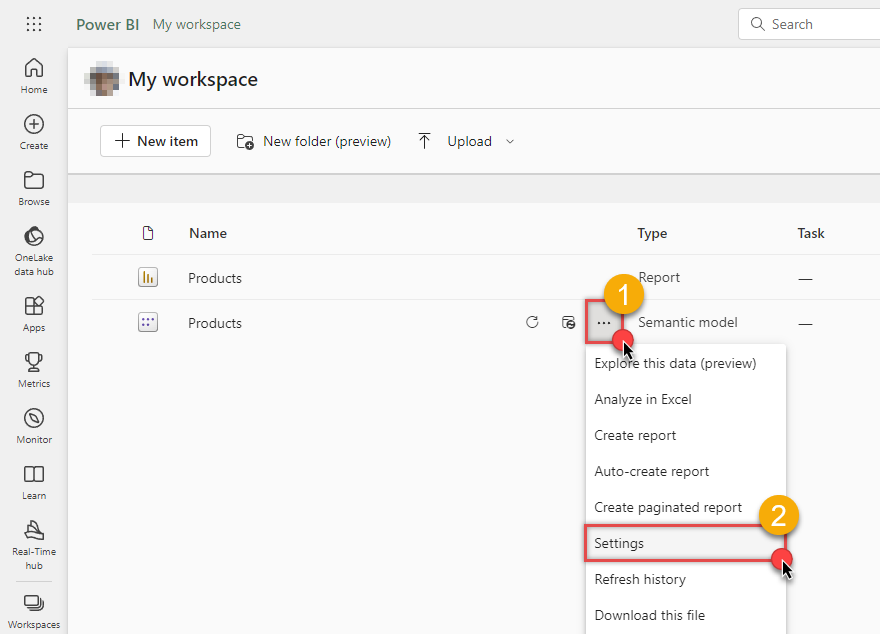

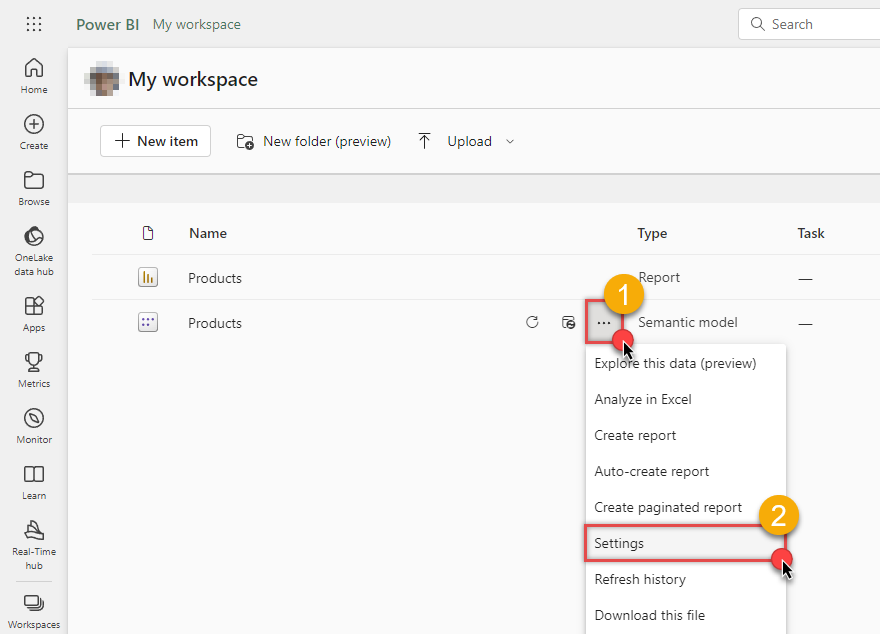

Go to Power BI My workspace, hover your mouse cursor over your semantic model, and click Settings:

-

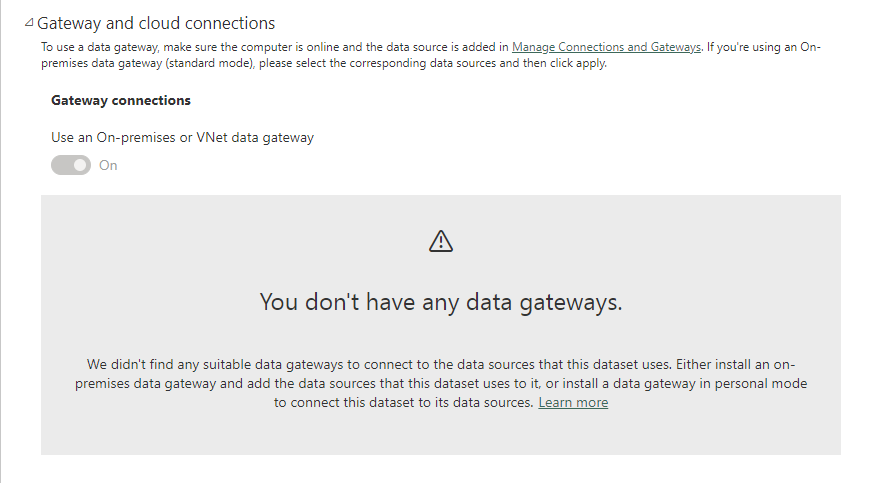

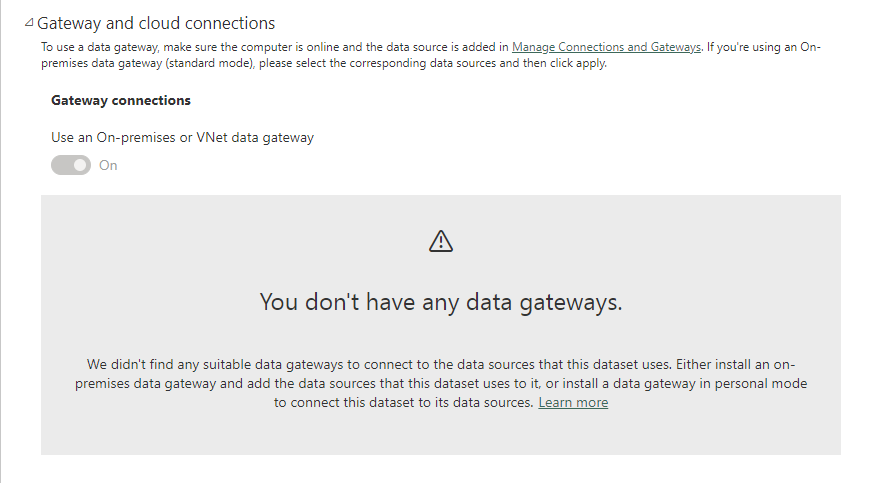

If you see this view, it means you must install the On-premises data gateway (Standard mode):

-

Download On-premises data gateway (standard mode) and run the installer.

-

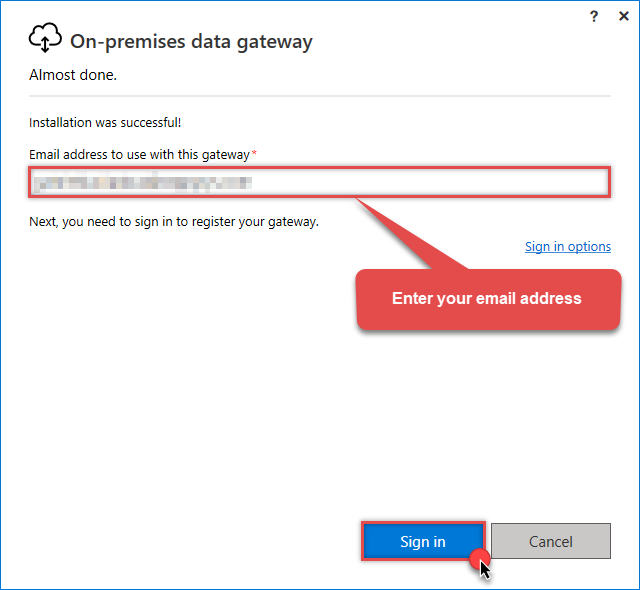

Once the configuration window opens, sign in:

Sign in with the same email address you use for Microsoft Fabric.

Sign in with the same email address you use for Microsoft Fabric. -

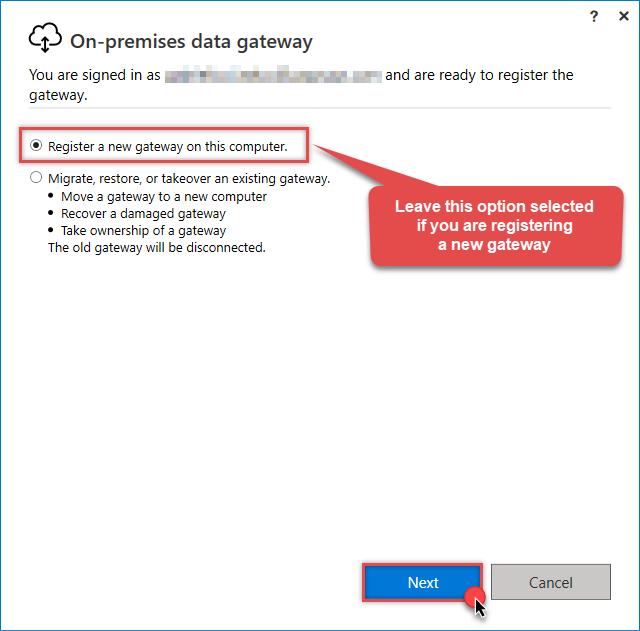

Select Register a new gateway on this computer (or migrate an existing one):

-

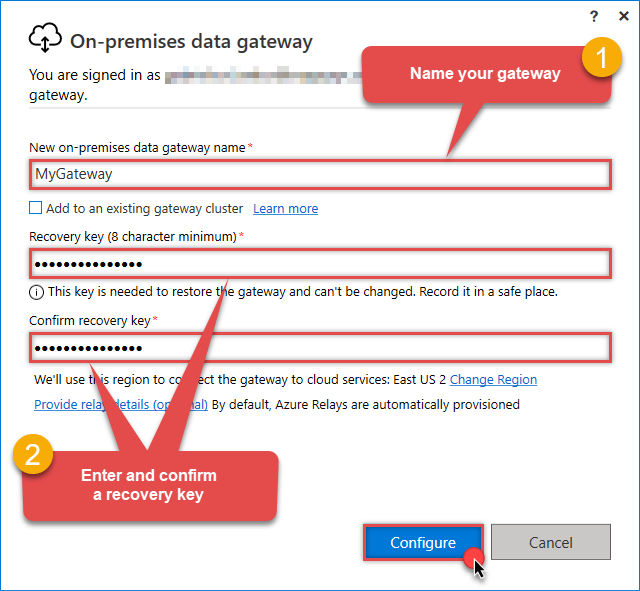

Name your gateway, enter a Recovery key, and click the Configure button:

Save your Recovery Key in a safe place (like a password manager). If you lose it, you cannot restore or migrate this gateway later.

Save your Recovery Key in a safe place (like a password manager). If you lose it, you cannot restore or migrate this gateway later. -

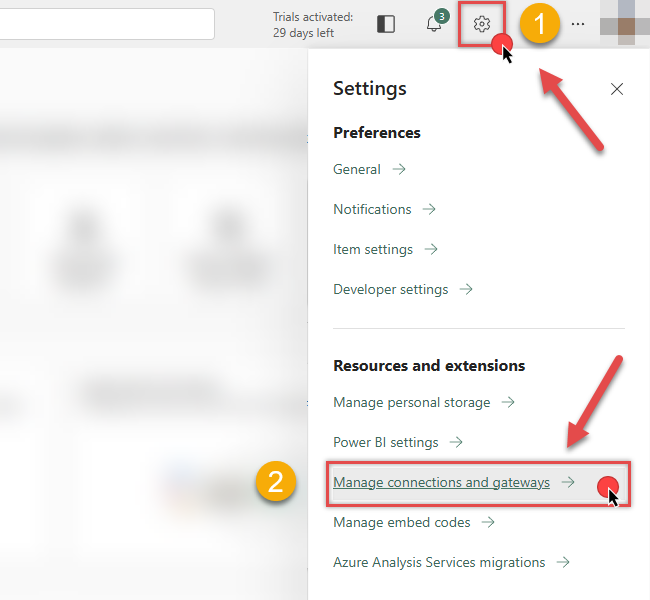

Once Microsoft gateway is installed, check if it registered correctly:

-

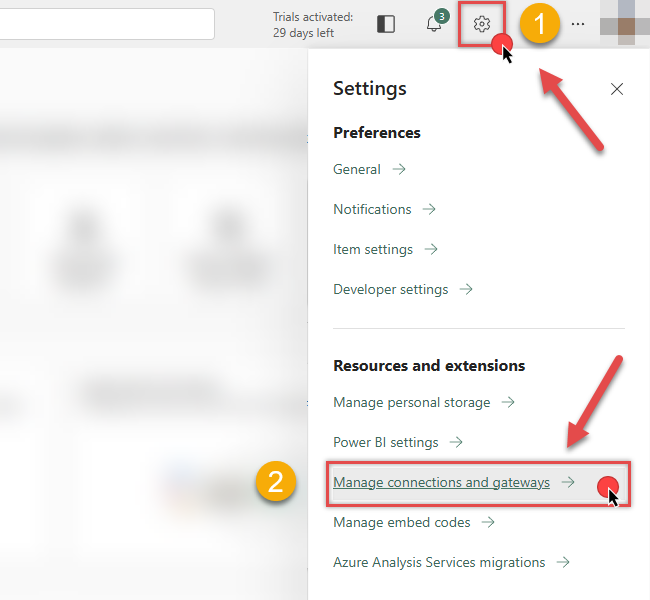

Go back to Power BI portal

-

Click Gear icon on top-right

-

And then hit Manage connections and gateways menu item

-

-

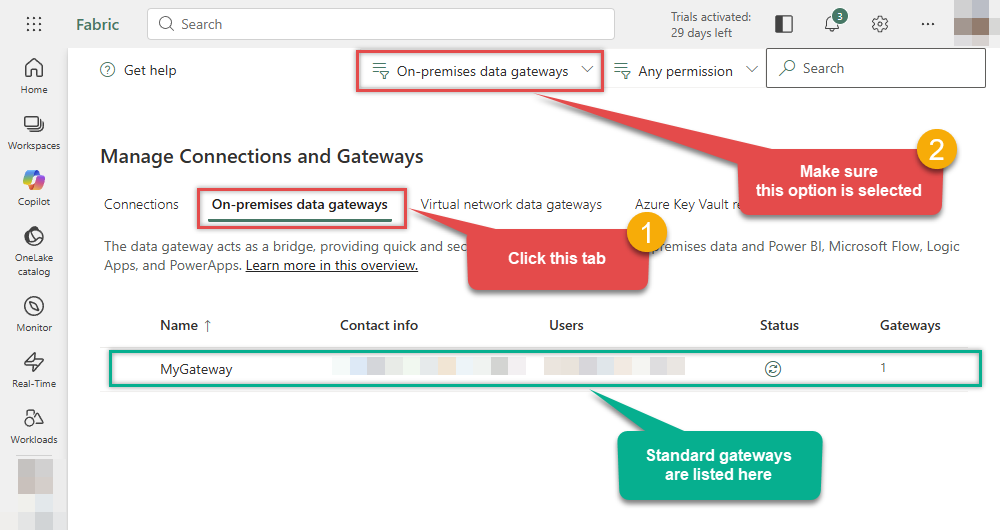

Continue by clicking the On-premises data gateway tab and selecting Standard mode gateways from the dropdown menu:

If your gateway is not listed, the registration may have failed. To resolve this:

- Wait a couple of minutes and refresh Power BI portal page

- Restart the machine where On-premises data gateway is installed

- Check firewall settings

-

Success! The gateway is now Online and ready to handle requests.

-

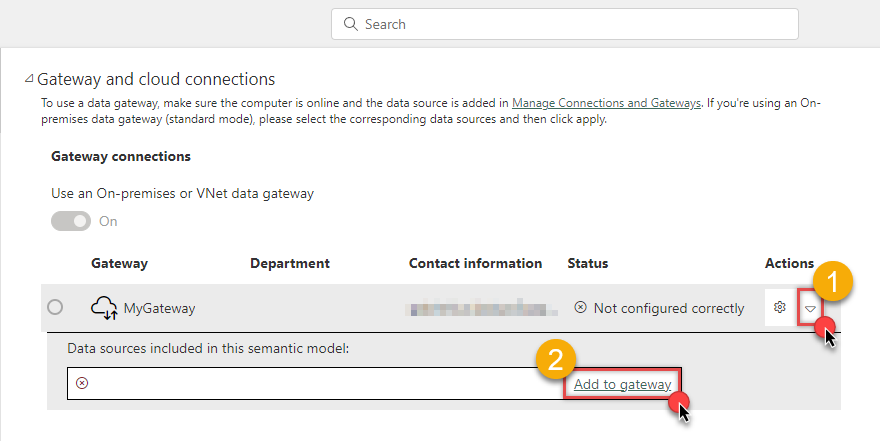

Now, return to your semantic model settings in the Power BI portal. Refresh the page, and you should see your newly created gateway. Click the arrow icon to expand the options, and then click the Add to gateway link:

ODBC{"connectionstring":"dsn=JiraDSN"}

-

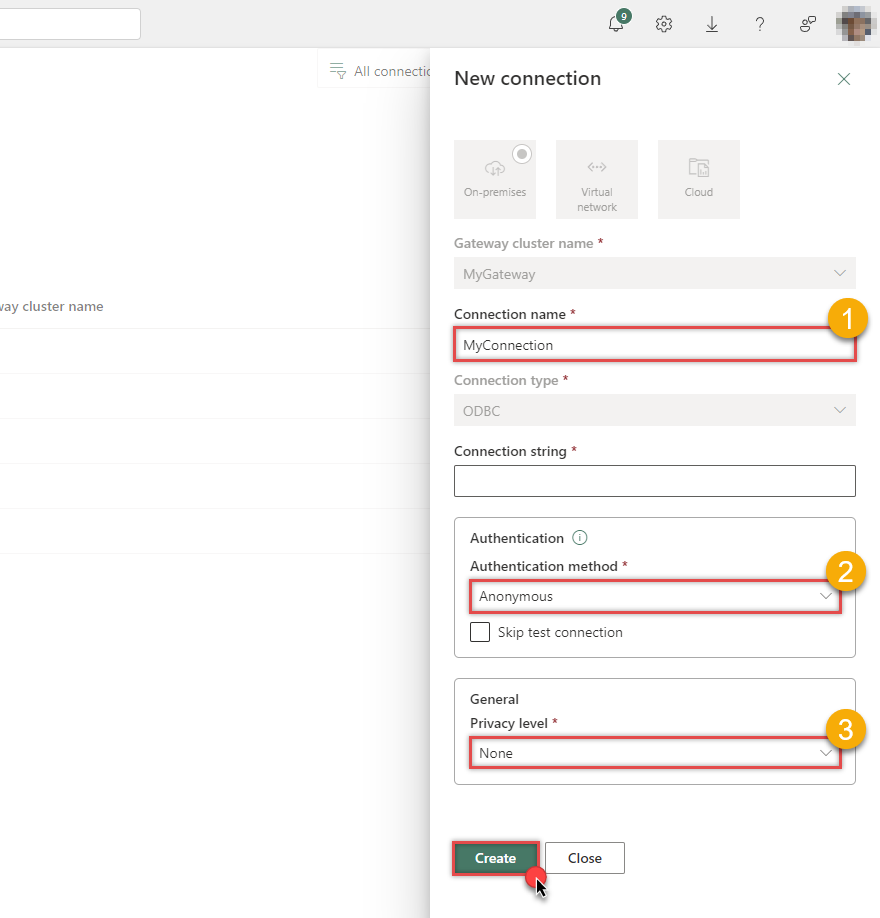

Once you do that, you will create a new gateway connection. Give it a name, set the Authentication method, Privacy level, and click the Create button:

dsn=JiraDSN In this example, we use the least restrictive Privacy level.

In this example, we use the least restrictive Privacy level.If your connection uses a full connection string, you may hit a length limitation when entering it into the field. To create the connection, you will need to shorten it manually. Check the section about the limitation of a full connection string on how to accomplish this.

On-premises data gateway (Personal mode) does not have this limitation.

-

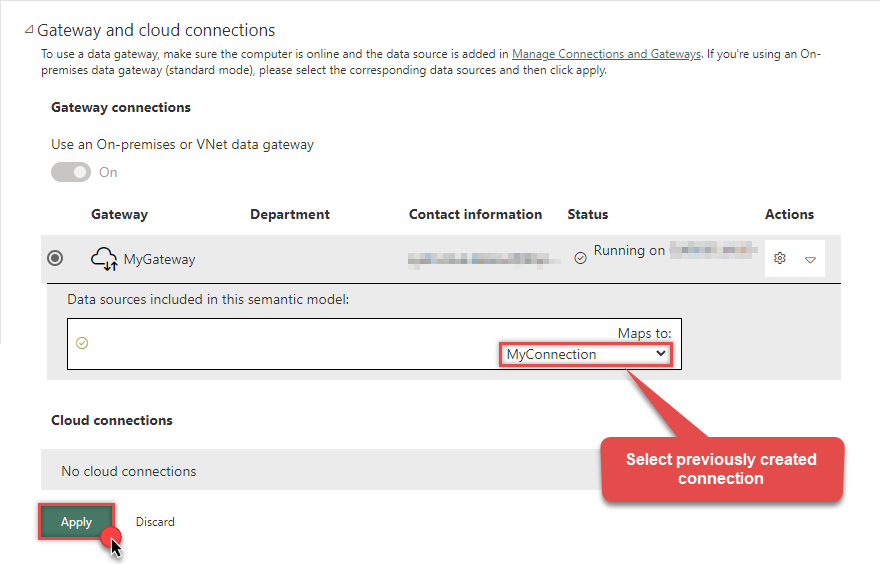

Select the newly created connection to map it to your dataset:

ODBC{"connectionstring":"dsn=JiraDSN"}

-

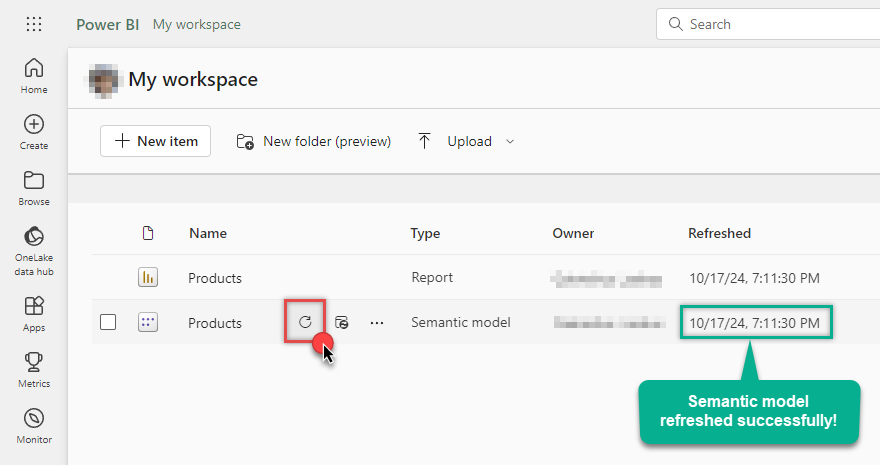

Finally, you can refresh the semantic model:

Use the Personal mode gateway (POC)

Best for single-user scenarios, quick tests (POC), or when you don't have administrative rights to install the Standard gateway.

Follow these steps to refresh a Power BI semantic model using the On-premises data gateway (Personal mode):

-

Go to Power BI My workspace, hover your mouse cursor over your semantic model, and click Settings:

-

If you see this view, it means you must install the On-premises data gateway (Personal mode):

-

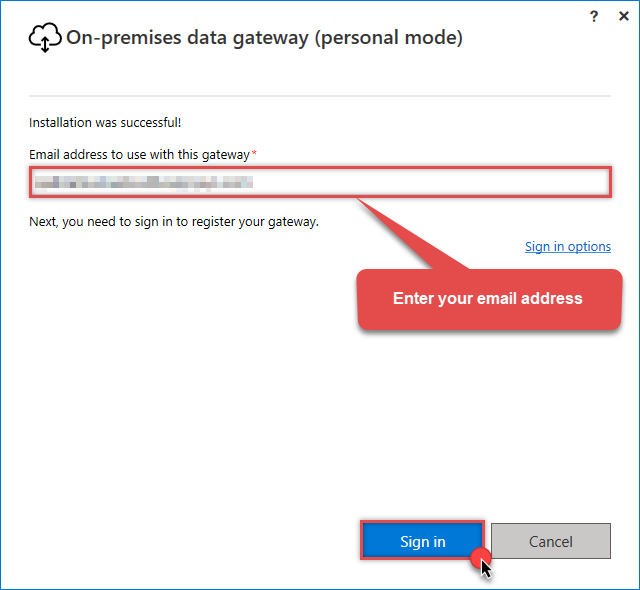

Install On-premises data gateway (personal mode) and sign-in:

Use the same email address you use when logging in into your account.

Use the same email address you use when logging in into your account. -

Once Microsoft gateway is installed, check if it registered correctly:

-

Go back to Power BI portal

-

Click Gear icon on top-right

-

And then hit Manage connections and gateways menu item

-

-

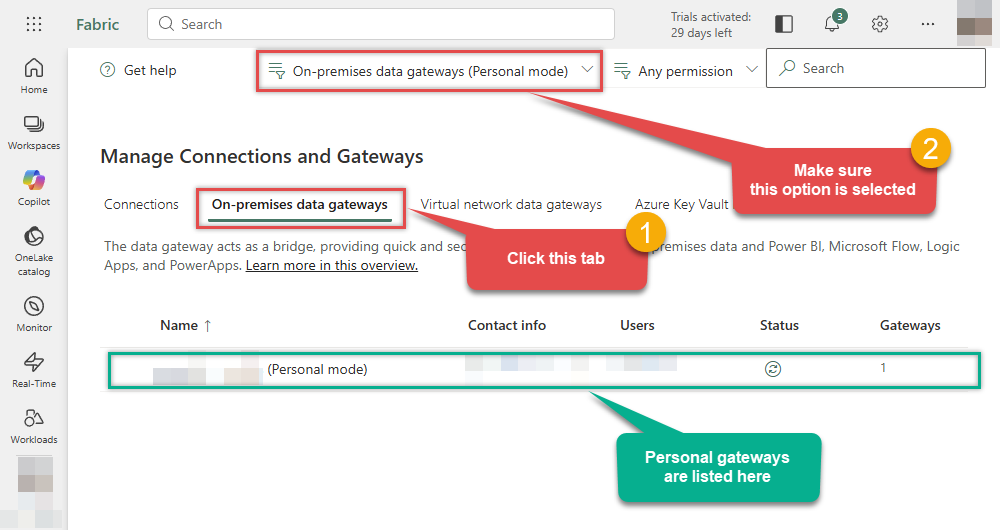

Continue by clicking On-premises data gateway tab and select Personal mode option from the dropdown:

If your gateway is not listed, the registration may have failed. To resolve this:

- Wait a couple of minutes and refresh Power BI portal page

- Restart the machine where On-premises data gateway is installed

- Check firewall settings

-

The On-premises data gateway is now Online and ready to receive requests.

-

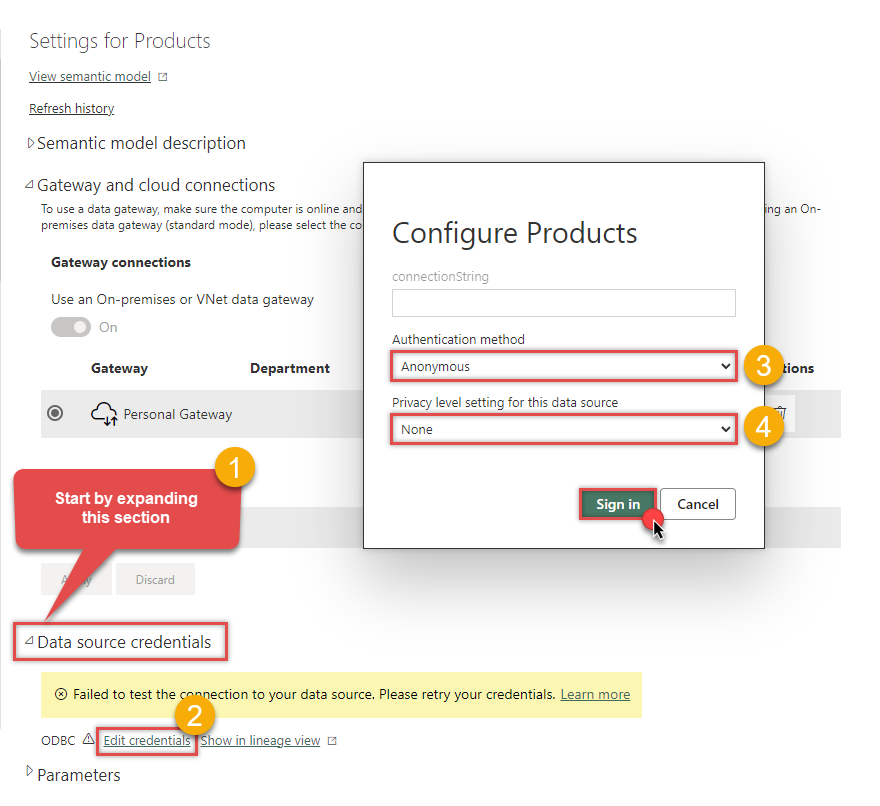

Return to your semantic model Settings, expand Data source credentials, click Edit credentials, select the Authentication method and the Privacy level, and then click the Sign in button:

dsn=JiraDSN

-

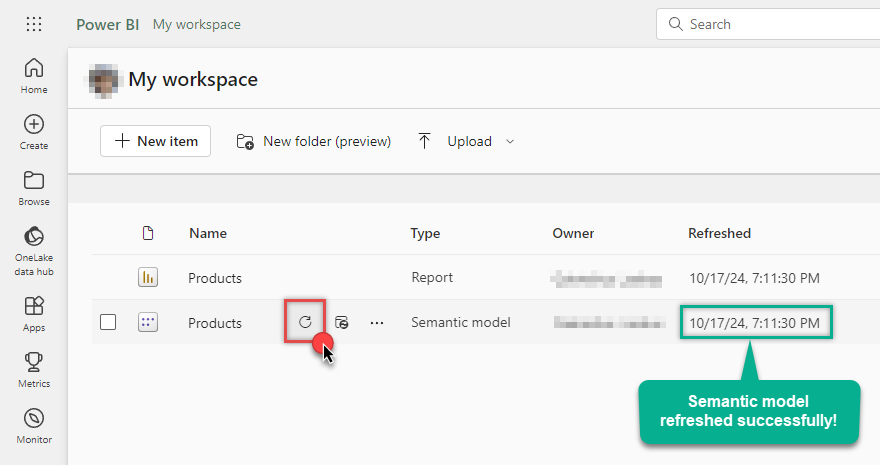

Finally, you are ready to refresh your semantic model:

Advanced topics

Editing query in Power BI

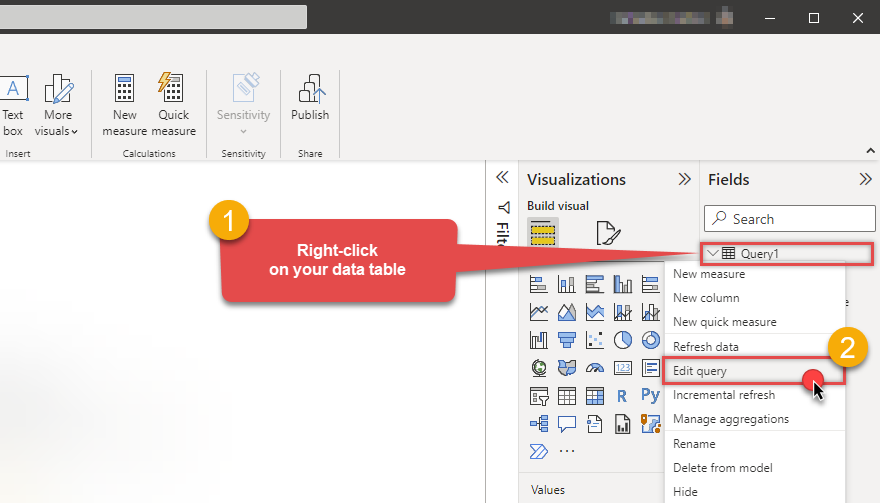

At some point, you may need to change the initial query after importing data into Power BI. To do so, right-click your table and click the Edit query menu item:

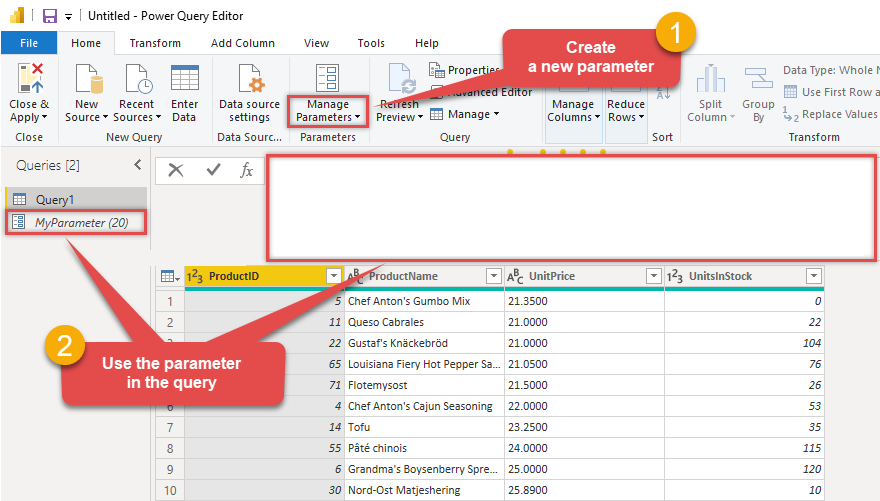

Using parameters for dynamic queries

In real-world scenarios, many values in your query may come from parameters. In that case, you can edit the Power Query M script manually, as shown below. In this example, MyParameter is a Power BI parameter that is converted to text with Text.From and concatenated into the SQL query. You can use parameters anywhere in your script, just like normal variables.

To use a parameter in a Power BI report, follow these simple steps:

- First, edit the query of your table (see the previous section).

- Then create a new parameter by clicking the Manage Parameters dropdown and choosing the New Parameter option, and use it in the query:

```
= Odbc.Query("dsn=JiraDSN", "SELECT ProductID, ProductName, UnitPrice, UnitsInStock FROM Products WHERE UnitPrice > " & Text.From(MyParameter) & " ORDER BY UnitPrice")
```

The Products table in this example is a generic illustration; replace it with a Jira table (such as Issues) and its columns.

Refer to the Power Query M reference for more information on how to use its advanced features in your queries.

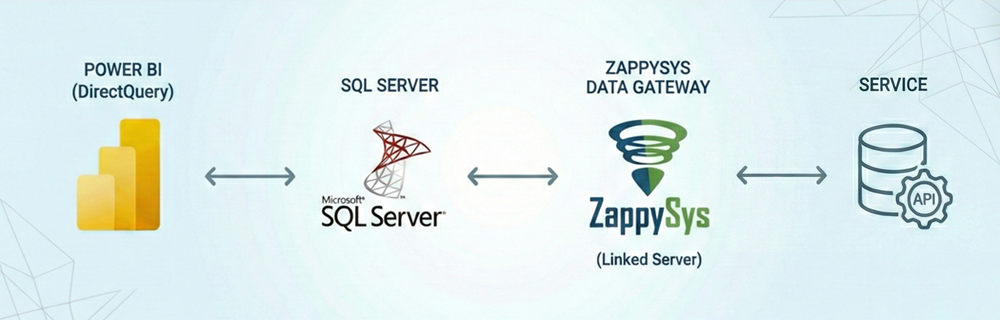
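Because a parameter value is concatenated straight into the SQL text, it is worth validating it first. The Python sketch below mirrors that concatenation for a Jira query: it turns a hypothetical DaysBack parameter into the relative-date JQL used in the sample queries (created > -5d) and rejects anything that is not a positive whole number, so stray quotes or extra SQL cannot end up in the query. In Power Query M you would apply the same check before the & concatenation.

```python
def recent_issues_query(days_back) -> str:
    """Issues created in the last `days_back` days, via a JQL filter.

    days_back stands in for a hypothetical Power BI parameter; only
    positive integers are accepted.
    """
    # bool is a subclass of int, so reject it explicitly.
    if isinstance(days_back, bool) or not isinstance(days_back, int) or days_back <= 0:
        raise ValueError(f"days_back must be a positive integer, got {days_back!r}")
    jql = f"created > -{days_back}d"
    return f"SELECT * FROM Issues WITH(SearchBy='Jql', Jql='{jql}')"


query = recent_issues_query(5)
```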

Using DirectQuery (live connection)

By default, Power BI imports Jira data into its internal cache. However, if you require real-time data, you can use the DirectQuery mode.

Since the native Power BI ODBC connector limits you to Import mode, we must bridge the connection via Microsoft SQL Server. To do this, we configure the ZappySys Data Gateway and create a Linked Server pointing to it:

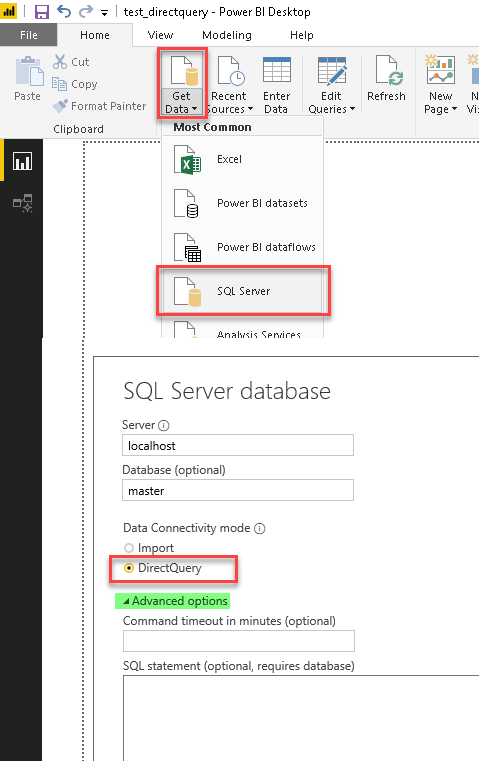

Follow these steps to use DirectQuery:

- Configure ZappySys Data Gateway and create a Linked Server in SQL Server.

- Once the Linked Server is ready, open Power BI Desktop.

- Click Get Data and select SQL Server.

- Enter your SQL Server instance name and a valid database name (e.g., master).

- Under Data Connectivity mode, select DirectQuery.

- Expand Advanced options and enter your SQL query using the OPENQUERY syntax below (replace LS_TO_JIRA_IN_GATEWAY with your actual Linked Server name):

```sql
SELECT * FROM OPENQUERY([LS_TO_JIRA_IN_GATEWAY], 'SELECT * FROM Issues

--//Query single issue by numeric Issue Id
--SELECT * FROM Issues Where Id=101234

--//Query issue by numeric Issue Ids (multiple)
--SELECT * FROM Issues WITH(SearchBy=''Key'', Key=''101234,101235,101236'')

--//Query issue by Issue Key(s) (alpha-numeric)
--SELECT * FROM Issues WITH(SearchBy=''Key'', Key=''PROJ-11'')
--SELECT * FROM Issues WITH(SearchBy=''Key'', Key=''PROJ-11,PROJ-12,PROJ-13'')

--//Query issue by project(s)
--SELECT * FROM Issues WITH(SearchBy=''Project'', Project=''PROJ'')
--SELECT * FROM Issues WITH(SearchBy=''Project'', Project=''PROJ,KAN,CS'')

--//Query issue by JQL expression
--SELECT * FROM Issues WITH(SearchBy=''Jql'', Jql=''status IN (Done, Closed) AND created > -5d'' )')
```

- Click OK and load the data. Your Jira data is now linked live rather than imported.
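OPENQUERY takes the pass-through query as a T-SQL string literal (it does not accept a variable), which is why every single quote of the driver query is doubled in the example above. If you generate these statements, a small helper such as the hypothetical one below does the doubling for you and also bracket-quotes the linked server name.

```python
def quote_identifier(name: str) -> str:
    """Bracket-quote a SQL Server identifier, escaping ']' as ']]'."""
    return "[" + name.replace("]", "]]") + "]"


def to_openquery(linked_server: str, inner_query: str) -> str:
    """Wrap a driver query in a T-SQL OPENQUERY call.

    OPENQUERY only accepts a string literal for the query, so every single
    quote inside the driver query is doubled.
    """
    literal = "'" + inner_query.replace("'", "''") + "'"
    return f"SELECT * FROM OPENQUERY({quote_identifier(linked_server)}, {literal})"


print(to_openquery("LS_TO_JIRA_IN_GATEWAY",
                   "SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ')"))
# prints: SELECT * FROM OPENQUERY([LS_TO_JIRA_IN_GATEWAY], 'SELECT * FROM Issues WITH(SearchBy=''Project'', Project=''PROJ'')')
```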

We recommend avoiding DirectQuery unless it is required for very large datasets or real-time data needs: data is fetched on demand, which can impact performance compared to the cached Import mode.

Using full ODBC connection string

In the previous steps we used a very short format of ODBC connection string - a DSN. Yet sometimes you don't want a dependency on an ODBC data source (and an extra step). In those times, you can define a full connection string and skip creating an ODBC data source entirely. Let's see below how to accomplish that in the below steps:

-

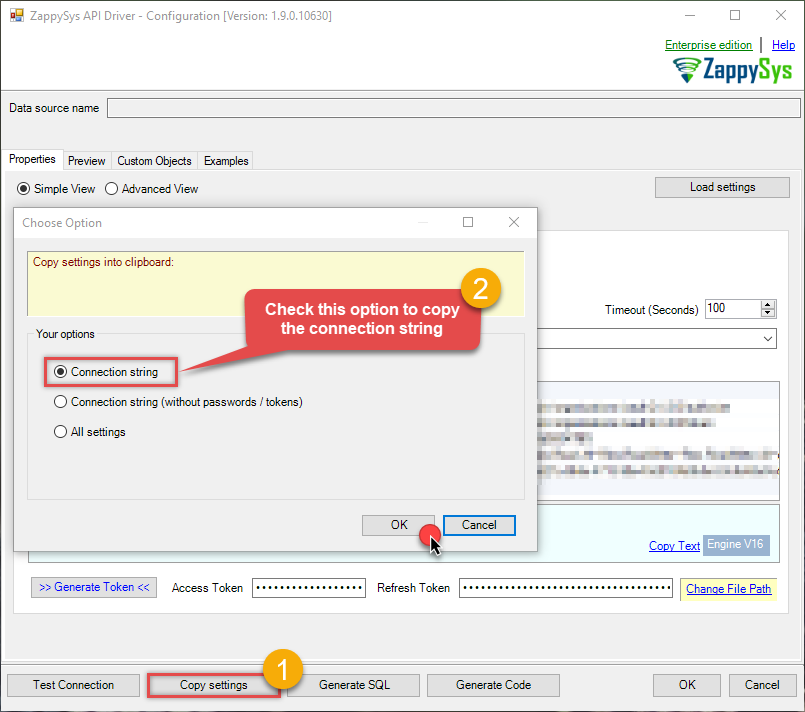

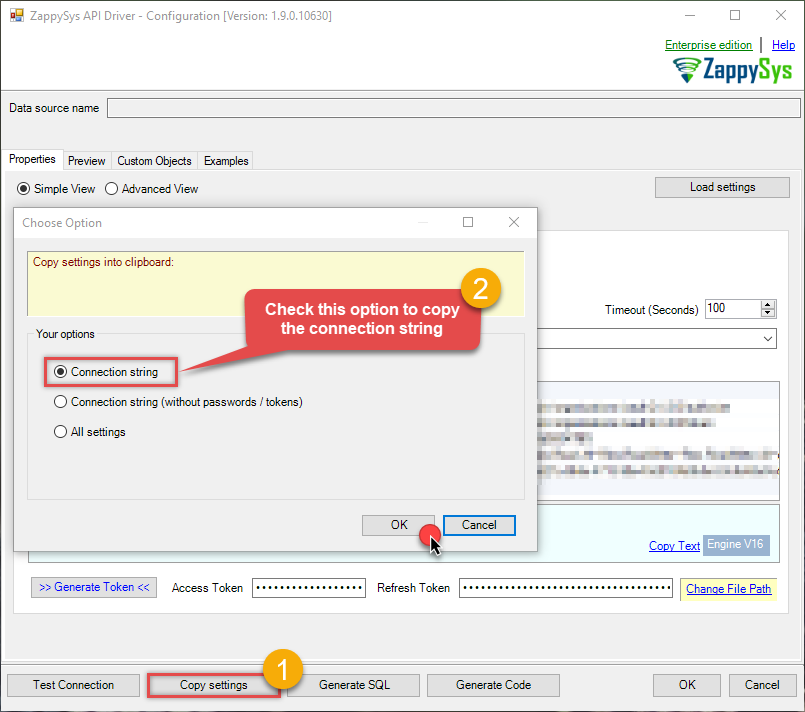

Open ODBC data source configuration and click Copy settings:

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

-

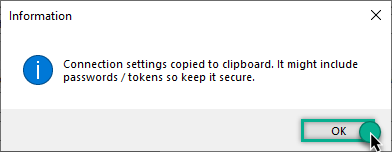

The window opens, telling us the connection string was successfully copied to the clipboard:

-

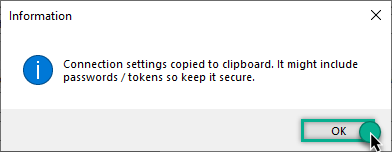

Then just paste the connection string into your script:

JiraDSNDRIVER={ZappySys API Driver};ServiceUrl=https://[$Subdomain$].atlassian.net/rest/api/3;CredentialType=Basic;

- You are good to go! The script will execute the same way as using a DSN.

Have in mind that a full connection string has length limitations.

Proceed to the next step to find out the details.
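An ODBC connection string is a list of KEY=value pairs separated by semicolons, with braces around values that contain special characters (as in DRIVER={ZappySys API Driver}). The sketch below parses and rebuilds such strings, which can help when you inspect or edit a copied string by hand. It is a simplified illustration rather than the driver manager's own parser; in particular, treating '}}' inside braces as an escaped '}' follows the convention of Microsoft's SQL Server drivers and is an assumption for other drivers.

```python
def parse_odbc_connection_string(conn_str: str) -> dict:
    """Split 'KEY=value;KEY={braced;value};' into {KEY: value}.

    Keys are upper-cased because ODBC keywords are case-insensitive.
    Inside braces, ';' is allowed and '}}' stands for a literal '}'.
    """
    result = {}
    i, n = 0, len(conn_str)
    while i < n:
        # Skip separators and whitespace between pairs.
        while i < n and conn_str[i] in "; ":
            i += 1
        eq = conn_str.find("=", i)
        if eq == -1:
            break  # nothing left that looks like KEY=value
        key = conn_str[i:eq].strip().upper()
        i = eq + 1
        if i < n and conn_str[i] == "{":
            i += 1
            chars = []
            while i < n:
                if conn_str[i] == "}":
                    if i + 1 < n and conn_str[i + 1] == "}":
                        chars.append("}")  # escaped brace
                        i += 2
                        continue
                    i += 1  # closing brace
                    break
                chars.append(conn_str[i])
                i += 1
            value = "".join(chars)
            semi = conn_str.find(";", i)
            i = n if semi == -1 else semi + 1
        else:
            semi = conn_str.find(";", i)
            end = n if semi == -1 else semi
            value = conn_str[i:end].strip()
            i = end + 1
        result[key] = value
    return result


def build_odbc_connection_string(settings: dict) -> str:
    """Join {KEY: value} back into a connection string, bracing when needed."""
    parts = []
    for key, value in settings.items():
        if any(c in value for c in ";{} "):
            value = "{" + value.replace("}", "}}") + "}"
        parts.append(f"{key}={value}")
    return ";".join(parts) + ";"
```

For example, parse_odbc_connection_string(copied)["SERVICEURL"] returns the API base URL from a copied string.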

Handling limitations of using a full connection string

Although a full ODBC connection string can be very convenient, it comes with a limitation: its length is limited to 1024 characters (sometimes more). This usually becomes a problem when the API provider generates a very long Refresh Token, as often happens with OAuth. If you use such a long ODBC connection string, you may get this error:

"Connection string exceeds maximum allowed length of 1024"

Fortunately, you can work around this by storing the full connection string in a file. Follow the steps below:

- Open your ODBC data source.

- Click the Copy settings button to copy the full connection string (see the previous section for how to do that).

- Then create a new file, e.g., C:\temp\odbc-connection-string.txt.

- Paste the copied connection string into the newly created file and save it.

- Finally, construct a shorter ODBC connection string using this format:

```
DRIVER={ZappySys API Driver};SettingsFile=C:\temp\odbc-connection-string.txt
```

That's it! Now you should be able to use this connection string in Power BI with no problems.
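The steps above can be scripted. This hedged sketch keeps short connection strings as they are and, for longer ones, writes the full string to a file and returns the SettingsFile form shown above. The 1024 limit comes from the error message; that the driver reads the file as plain UTF-8/ASCII text is an assumption.

```python
from pathlib import Path

# Limit quoted in the error message above; some tools allow more.
MAX_CONN_STR_LEN = 1024


def shorten_connection_string(full_conn_str: str, settings_file: Path) -> str:
    """Return a connection string that fits under MAX_CONN_STR_LEN.

    Short strings are returned unchanged. Longer ones are saved to
    settings_file and replaced by a SettingsFile reference.
    """
    if len(full_conn_str) <= MAX_CONN_STR_LEN:
        return full_conn_str
    # Assumption: the driver reads the settings file as UTF-8/ASCII text.
    settings_file.write_text(full_conn_str, encoding="utf-8")
    return "DRIVER={ZappySys API Driver};SettingsFile=" + str(settings_file)
```

For example, shorten_connection_string(copied, Path(r"C:\temp\odbc-connection-string.txt")). Because the file holds the same secrets as the connection string (such as the refresh token), keep it in a folder that only the relevant accounts can read.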

Jira Connector actions

Got a specific use case in mind? We've mapped out exactly how to perform a variety of essential Jira operations directly in Power BI, so you don't have to figure out the setup from scratch. Check out the step-by-step guides below:

- Read Resources

- Read Fields

- Read Custom Fields

- Read Issue Types

- Read Application Roles

- Read Groups

- Read Users

- Create User

- Delete User

- Read Projects

- Create Project

- Upsert Project

- Delete Project

- Read Issues

- Read Issue (By Id)

- Create Issues

- Update Issue

- Delete Issue

- Read Worklogs

- Read Worklogs modified after a specified date

- Create Worklog

- Update Worklog

- Delete Worklog

- Read Comments

- Create Issue Comment

- Update Issue Comment

- Delete Issue Comment

- Read Changelogs

- Read Changelog Details

- Read Changelogs by IDs

- Get custom field contexts

- Get custom field context options

- Make Generic REST API Request

- Make Generic REST API Request (Bulk Write)

Optional: Centralized data access via ZappySys Data Gateway

In some situations, you may need to provide Jira data access to multiple users or services. Configuring the data source on a Data Gateway creates a single, centralized connection point for this purpose.

This configuration provides two primary advantages:

- Centralized data access. The data source is configured once on the gateway, eliminating the need to set it up individually on each user's machine or application. This significantly simplifies management.

- Centralized access control. Since all connections route through the gateway, access can be governed or revoked from a single location for all users.

| | Data Gateway | Local ODBC data source |
|---|---|---|
| Simple configuration | | |
| Installation | Single machine | Per machine |
| Connectivity | Local and remote | Local only |
| Connections limit | Limited by License | Unlimited |
| Central data access | Yes | No |
| Central access control | Yes | No |
| More flexible cost | | |

To achieve this, you must first create a data source in the Data Gateway (server-side) and then create an ODBC data source in Power BI (client-side) to connect to it.

Let's not wait and get going!

Create Jira data source in the gateway

In this section we will create a data source for Jira in the Data Gateway. Let's follow these steps to accomplish that:

-

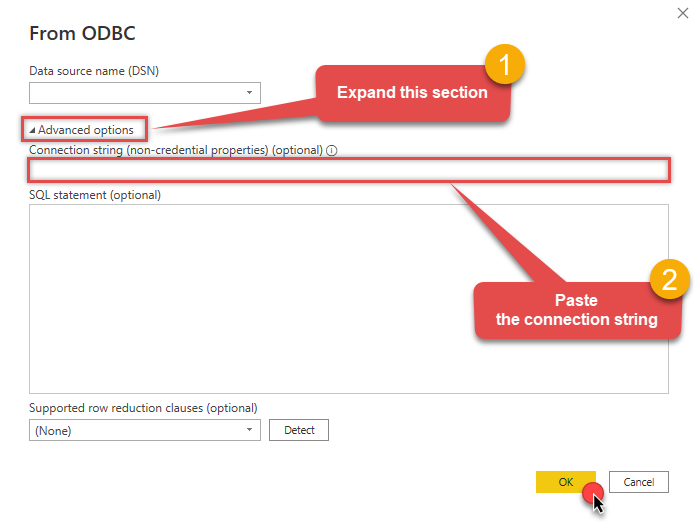

Search for

gatewayin the Windows Start Menu and open ZappySys Data Gateway Configuration:

-

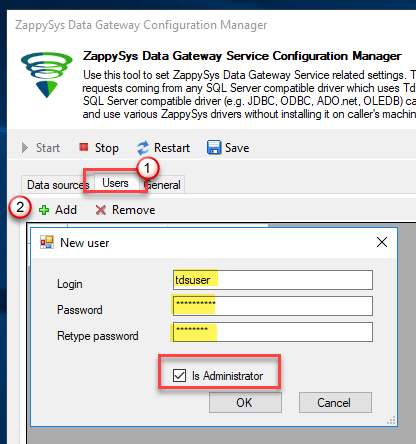

Go to the Users tab and follow these steps to add a Data Gateway user:

- Click the Add button

-

In the Login field enter a username, e.g.,

john - Then enter a Password

- Check the Is Administrator checkbox

- Click OK to save

-

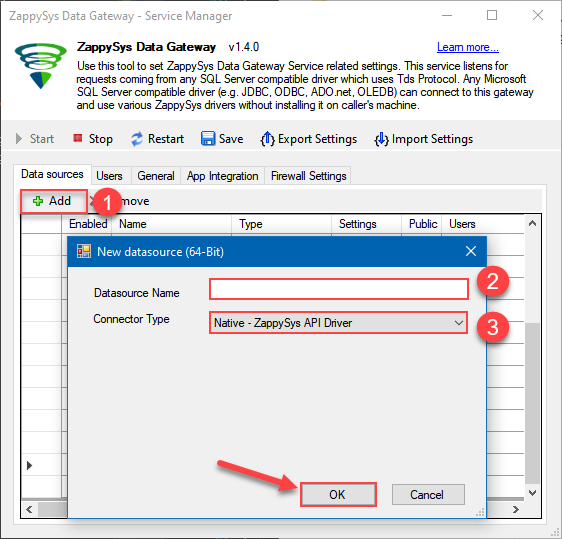

Now we are ready to add a data source:

- Click the Add button

- Give the Data source a name (have it handy for later)

- Then select Native - ZappySys API Driver

- Finally, click OK

JiraDSNZappySys API Driver

-

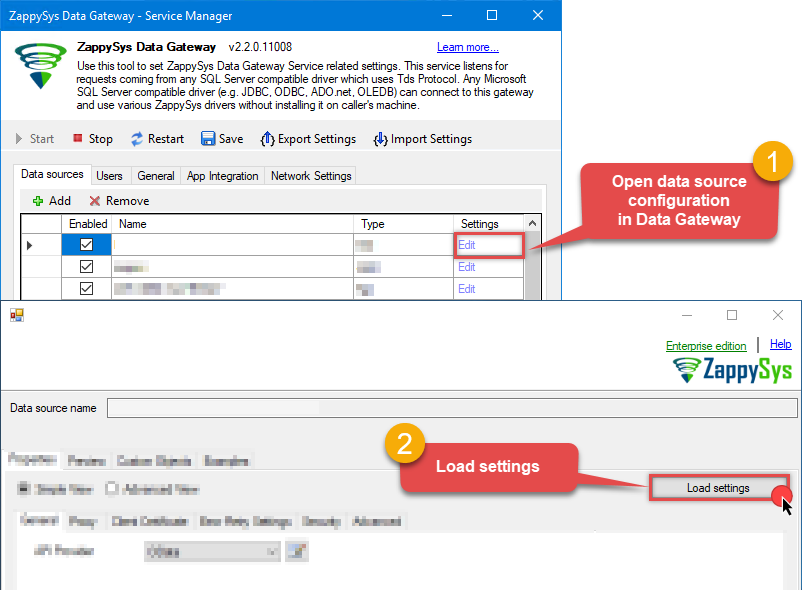

When the ZappySys API Driver configuration window opens, go back to ODBC Data Source Administrator where you already have the Jira ODBC data source created and configured, and follow these steps on how to Import data source configuration into the Gateway:

-

Open ODBC data source configuration and click Copy settings:

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

-

The window opens, telling us the connection string was successfully copied to the clipboard:

-

Then go to Data Gateway configuration and in data source configuration window click Load settings:

JiraDSNZappySys API Driver - Configuration [Version: 2.0.1.10418]ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

-

Once a window opens, just paste the settings by pressing

CTRL+Vor by clicking right mouse button and then Paste option.

-

Open ODBC data source configuration and click Copy settings:

-

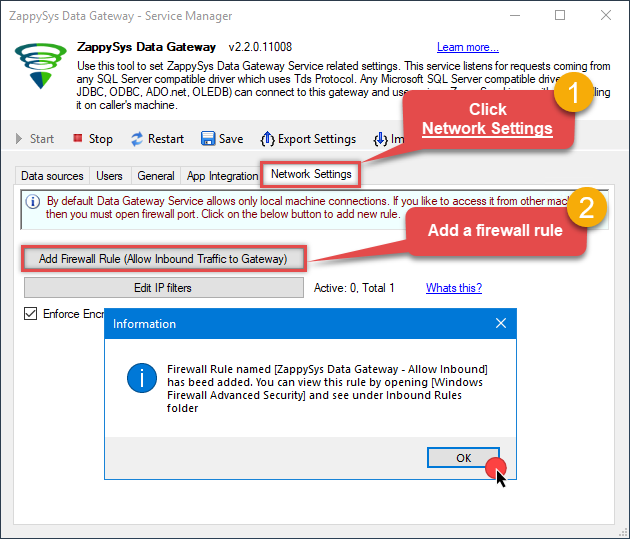

Once done, go to the Network Settings tab and Add a firewall rule for inbound traffic:

- This will initially allow all inbound traffic.

- Click Edit IP filters to restrict access to specific IP addresses or ranges.

-

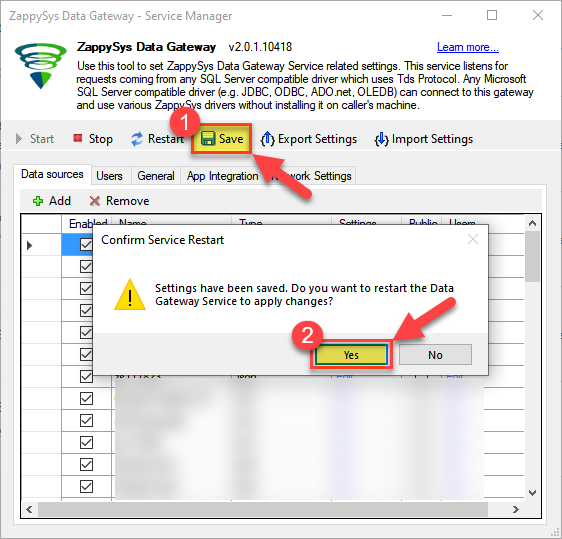

Crucial Step: After creating or modifying the data source, you must:

- Click the Save button to persist your changes.

- Hit Yes when prompted to restart the Data Gateway service.

This ensures all changes are properly applied:

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.

Skipping this step may cause the new settings to fail, preventing you from connecting to the data source.
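Before creating the client-side DSN, you may want to confirm that the gateway's port is reachable from the machine running Power BI, for example after adding the firewall rule. This standard-library sketch splits a SQL Server-style host,port address (the format used for the gateway address, e.g. localhost,5000) and tries a plain TCP connection. It only proves that the port is open, not that the login or the data source is valid, and it does not handle IPv6 literals.

```python
import socket


def parse_server_address(address: str, default_port: int = 1433):
    """Split a SQL Server-style 'host,port' address into (host, port).

    SQL Server client drivers expect a comma before the port; without one
    they use the default port 1433 (named instances aside).
    """
    host, sep, port = address.partition(",")
    host = host.strip()
    if not host:
        raise ValueError("missing host name")
    if ":" in host:
        # A common mistake: a colon instead of a comma.
        raise ValueError("separate host and port with a comma, e.g. localhost,5000")
    return host, (int(port) if sep else default_port)


def is_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


host, port = parse_server_address("localhost,5000")
```

Then call is_port_open(host, port); False usually points to the firewall rule, the IP filters, or a gateway service that has not restarted.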

Create ODBC data source to connect to the gateway

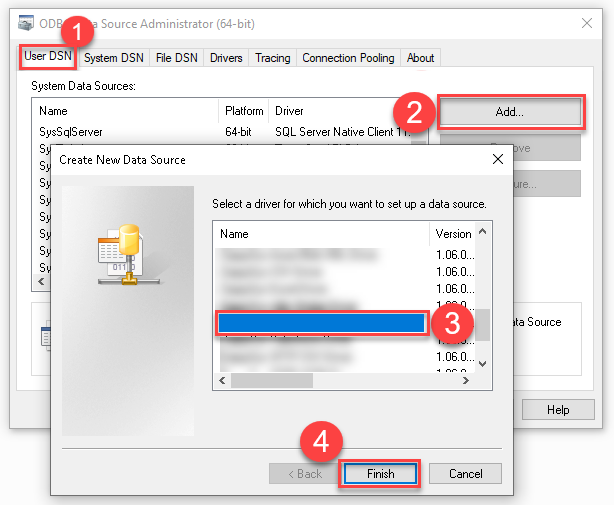

In this part we will create an ODBC data source to connect to the ZappySys Data Gateway from Power BI. To achieve that, let's perform these steps:

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

Create a User data source (User DSN) based on the ODBC Driver 17 for SQL Server driver:

ODBC Driver 17 for SQL Server If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version.

If you don't see the ODBC Driver 17 for SQL Server driver in the list, choose a similar version. -

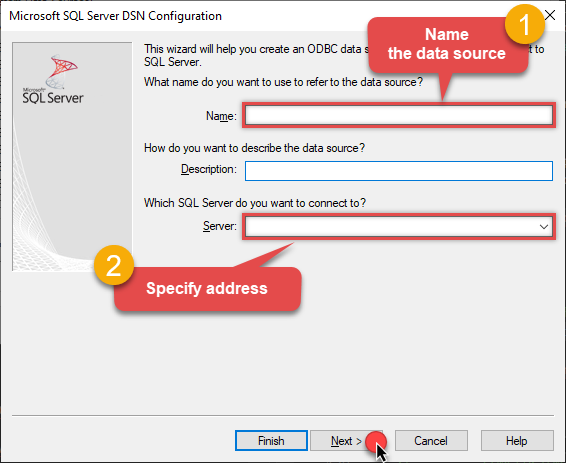

Then set a Name for the data source (e.g.

Gateway) and the address of the Data Gateway:ZappySysGatewayDSNlocalhost,5000 Make sure you separate the hostname and port with a comma, e.g.

Make sure you separate the hostname and port with a comma, e.g.localhost,5000. -

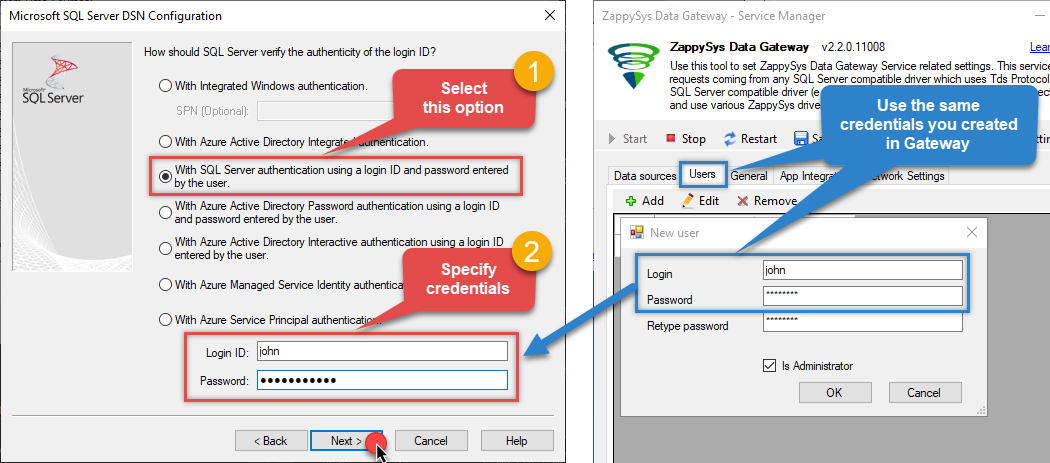

Proceed with the authentication part:

- Select SQL Server authentication

-

In the Login ID field enter the user name you created in the Data Gateway, e.g.,

john - Set Password to the one you configured in the Data Gateway

-

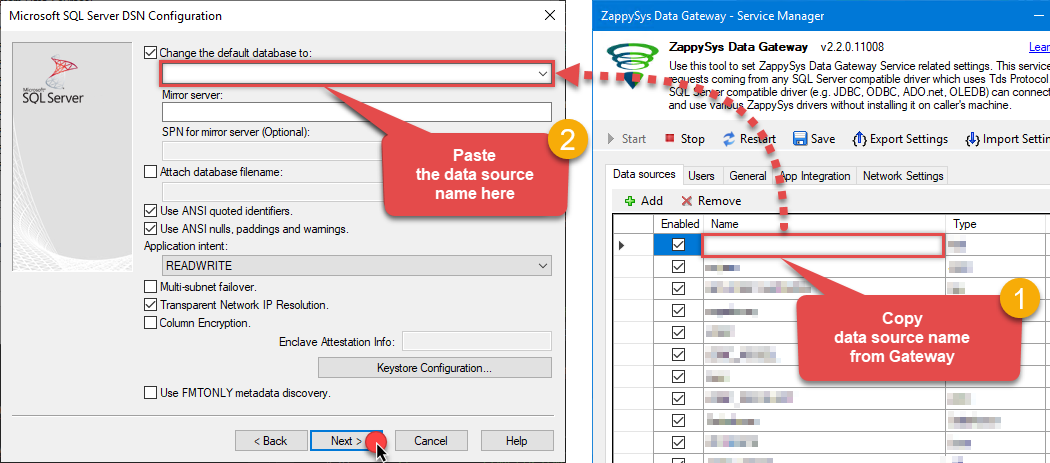

Then set the default database property to

JiraDSN(the one we used in the Data Gateway):JiraDSNJiraDSN Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step).

Make sure to type the data source name manually or copy/paste it directly into the field. Using the dropdown might fail because the Trust server certificate option is not enabled yet (next step). -

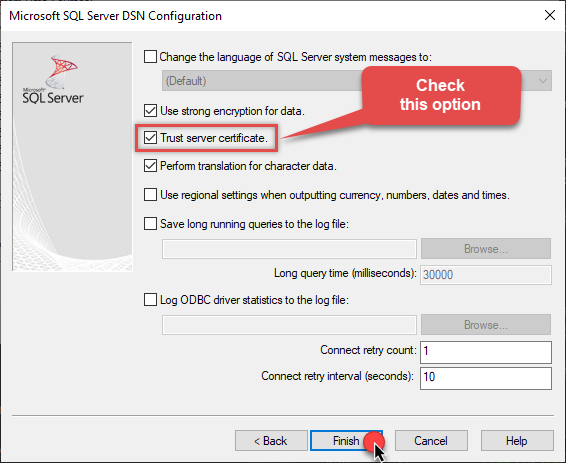

Continue by checking the Trust server certificate option:

-

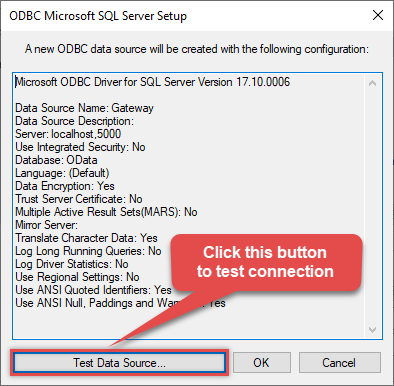

Once you do that, test the connection:

-

If the connection is successful, everything is good:

-

Done!

We are ready to move to the final step. Let's do it!
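If you prefer not to register the ZappySys Gateway DSN on every client, the same settings can be expressed as a DSN-less connection string for ODBC Driver 17 for SQL Server. The sketch below assembles one from the values used in the steps above (server localhost,5000, database JiraDSN, user john); DRIVER, SERVER, DATABASE, UID, PWD and TrustServerCertificate are standard keywords of Microsoft's SQL Server ODBC driver, while the function itself is a hypothetical helper.

```python
def gateway_connection_string(server: str, database: str, user: str, password: str,
                              driver: str = "ODBC Driver 17 for SQL Server") -> str:
    """DSN-less equivalent of the gateway DSN configured above."""

    def brace(value: str) -> str:
        # Braces allow ';' and spaces in a value; '}' is doubled inside them.
        return "{" + value.replace("}", "}}") + "}"

    parts = [
        f"DRIVER={brace(driver)}",
        f"SERVER={server}",            # host,port, e.g. localhost,5000
        f"DATABASE={database}",        # the data source name in the gateway
        f"UID={user}",
        f"PWD={brace(password)}",
        "TrustServerCertificate=yes",  # same as the checkbox in the DSN wizard
    ]
    return ";".join(parts) + ";"


conn_str = gateway_connection_string("localhost,5000", "JiraDSN", "john", "<password>")
```

From a script, such a string could be passed to, e.g., the third-party pyodbc package's pyodbc.connect(conn_str). Avoid hard-coding the password in shared files.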

Access data in Power BI via the gateway

Finally, we are ready to read data from Jira in Power BI via the Data Gateway. Follow these final steps:

-

Go back to Power BI.

-

Once you open Power BI Desktop click Get Data to get data from ODBC:

-

A window opens, and then search for "odbc" to get data from ODBC data source:

-

Another window opens and asks to select a Data Source we already created. Choose ZappySysGatewayDSN and continue:

ZappySysGatewayDSN

-

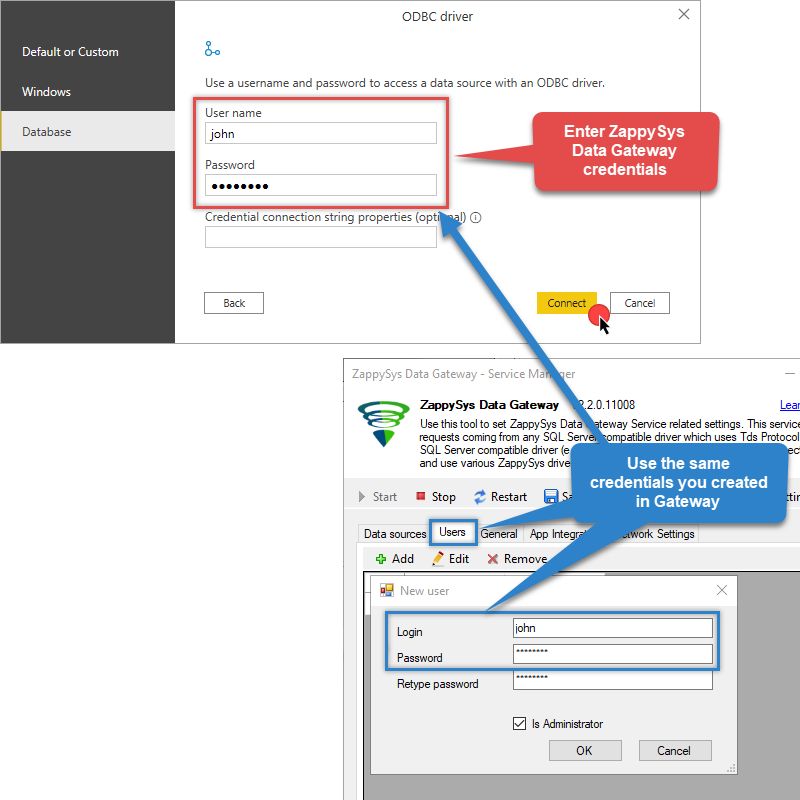

When the ODBC driver authentication window opens, configure the following:

-

Enter the User name (e.g.,

john) - Enter the Password that you configured in ZappySys Data Gateway

- Hit the Connect button

dsn=ZappySysGatewayDSN Make sure the Database tab is selected; otherwise, Power BI won't be able to connect to the ZappySys Data Gateway.

Make sure the Database tab is selected; otherwise, Power BI won't be able to connect to the ZappySys Data Gateway. -

Enter the User name (e.g.,

-

Read the data the same way we discussed at the beginning of this article.

-

That's it!

Now you can connect to Jira data in Power BI via the ZappySys Data Gateway.

Conclusion

In this guide, we demonstrated how to connect to Jira in Power BI and integrate your data — all without writing complex code.

Ready to get started? Download ODBC PowerPack now or ping us via chat if you still need help: