Jira Connector for Azure Data Factory (Pipeline) : Read issues via SQL

Learn how to read issues using the Jira Connector for Azure Data Factory (Pipeline). This connector enables you to read and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required. We'll walk you through the exact setup.

Let's dive in!

Create data source using Jira ODBC Driver

-

Download and install ODBC PowerPack (if you haven't already).

-

Search for

odbcand open the ODBC Data Sources (64-bit):

-

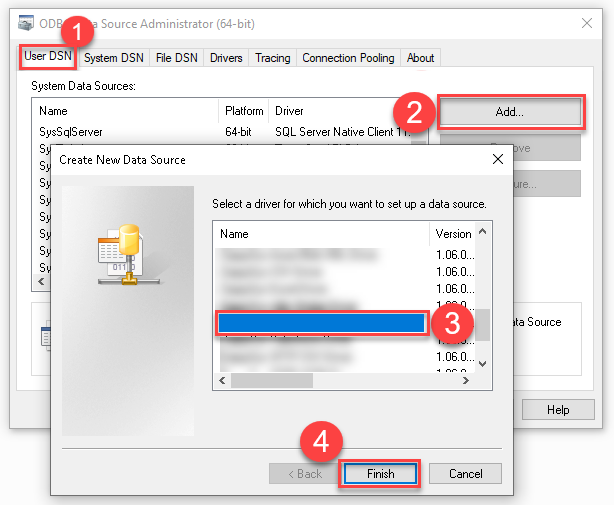

Create a User data source (User DSN) based on the ZappySys API Driver driver:

ZappySys API Driver

- Create and use a User DSN if the client application runs under a User Account. This is the ideal option at design time (e.g., when developing in Visual Studio). Use it for both types of applications (64-bit and 32-bit).

- Create and use a System DSN if the client application runs under a System Account (e.g., as a Windows Service). This is usually the required option in a production environment. If your Windows Service is a 32-bit application, you must use the 32-bit ODBC Data Source Administrator to configure this

-

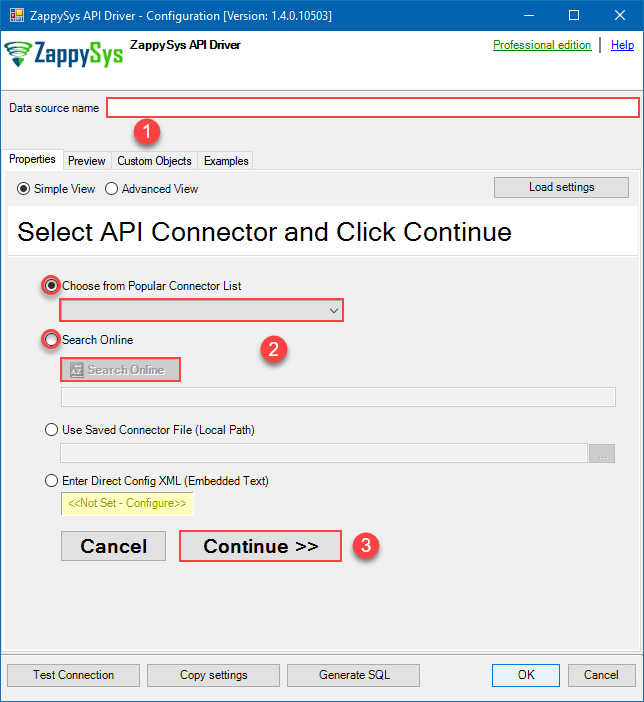

When the Configuration window appears give your data source a name if you haven't done that already, then select "Jira" from the list of Popular Connectors. If "Jira" is not present in the list, then click "Search Online" and download it. Then set the path to the location where you downloaded it. Finally, click Continue >> to proceed with configuring the DSN:

JiraDSNJira

-

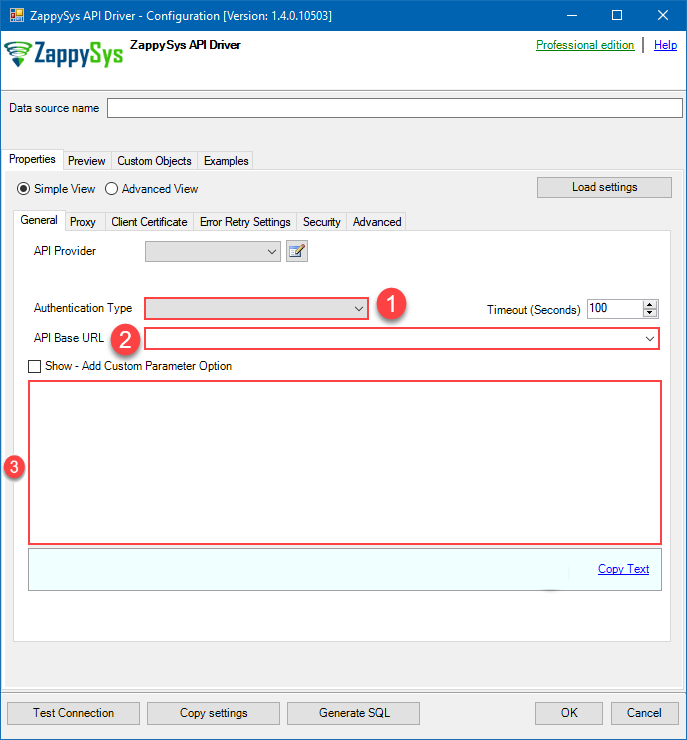

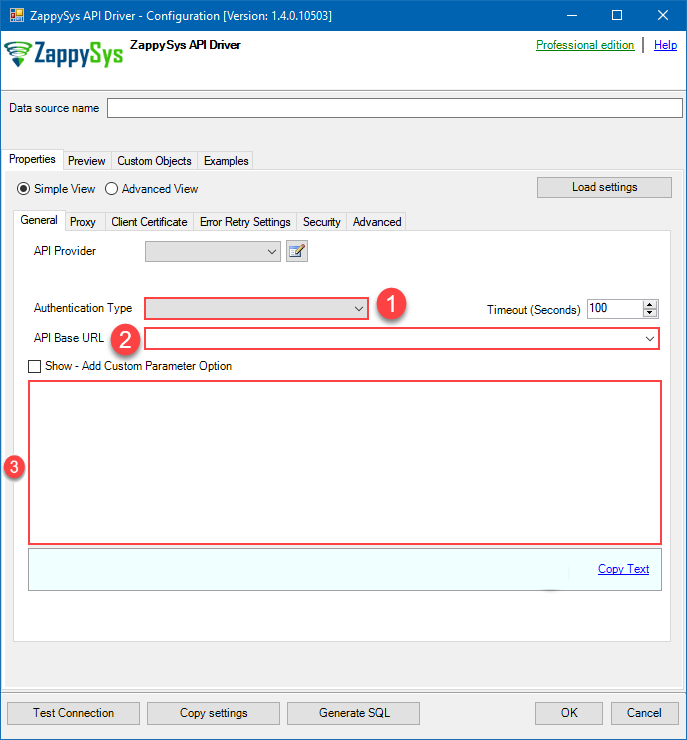

Now it's time to configure the Connection Manager. Select Authentication Type, e.g. Token Authentication. Then select API Base URL (in most cases, the default one is the right one). More info is available in the Authentication section.

Jira authentication

Firstly, login into your Atlassian account and then go to your Jira profile:- Go to Profile > Security.

- Click Create and manage API tokens.

- Then click Create API token button and give your token a label.

- When window appears with new API token, copy and use it in this connection manager.

- That's it!

API Connection Manager configuration

Just perform these simple steps to finish authentication configuration:

-

Set Authentication Type to

API Key based Authentication [Http] - Optional step. Modify API Base URL if needed (in most cases default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, hit OK button:

JiraDSNJiraAPI Key based Authentication [Http]https://[$Subdomain$].atlassian.net/rest/api/3Required Parameters Subdomain Fill-in the parameter... Atlassian User Name (email) Fill-in the parameter... API Key Fill-in the parameter... Optional Parameters CustomColumnsRegex RetryMode RetryWhenStatusCodeMatch RetryStatusCodeList 429 RetryCountMax 5 RetryMultiplyWaitTime True

Jira authentication

Follow official Atlassian instructions on how to create a PAT (Personal Access Token) for JIRAAPI Connection Manager configuration

Just perform these simple steps to finish authentication configuration:

-

Set Authentication Type to

Personal Access Token (PAT) Authentication [Http] - Optional step. Modify API Base URL if needed (in most cases default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Finally, hit OK button:

JiraDSNJiraPersonal Access Token (PAT) Authentication [Http]https://[$Subdomain$].atlassian.net/rest/api/3Required Parameters Subdomain Fill-in the parameter... Token (PAT Bearer Token) Fill-in the parameter... Optional Parameters CustomColumnsRegex RetryMode RetryWhenStatusCodeMatch RetryStatusCodeList 429 RetryCountMax 5 RetryMultiplyWaitTime True

Jira authentication

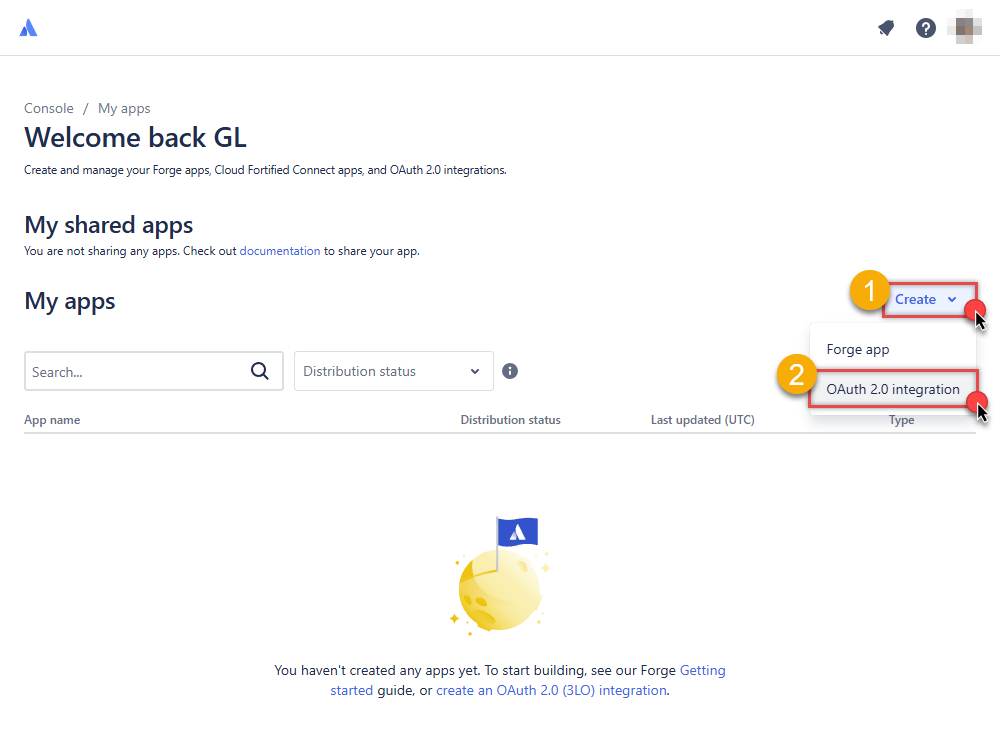

OAuth App must be created in Atlassian Developer Console. It is found at https://developer.atlassian.com/console/myapps/ [API reference]

Firstly, login into your Atlassian account and then create Jira application:- Go to Atlassian Developer area.

-

Click Create and select OAuth 2.0 integration item to create an OAuth app:

-

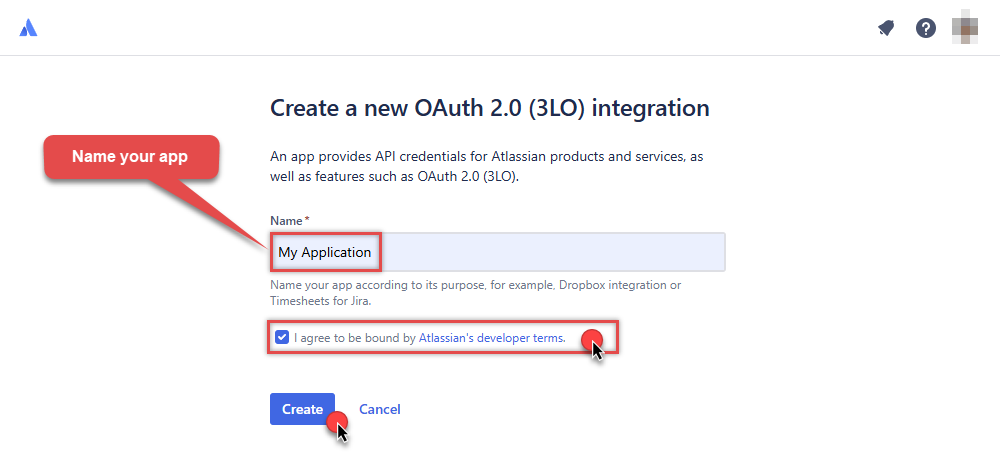

Give your app a name, accept the terms and hit Create:

-

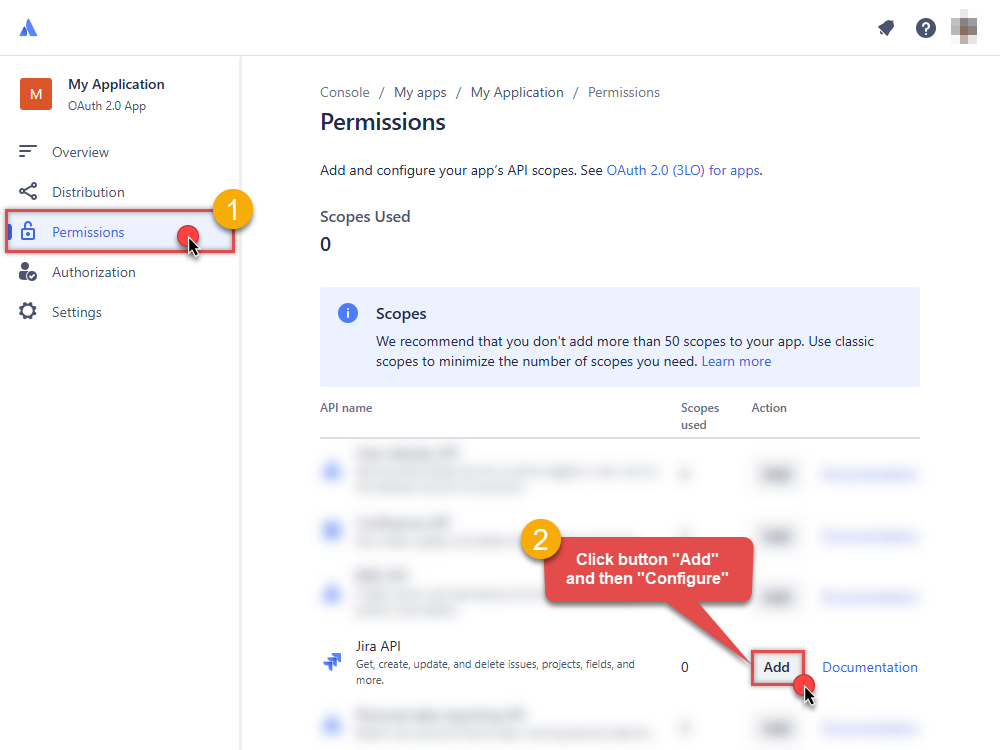

To enable permissions/scopes for your application, click Permissions tab, then hit Add button, and click Configure button, once it appears:

-

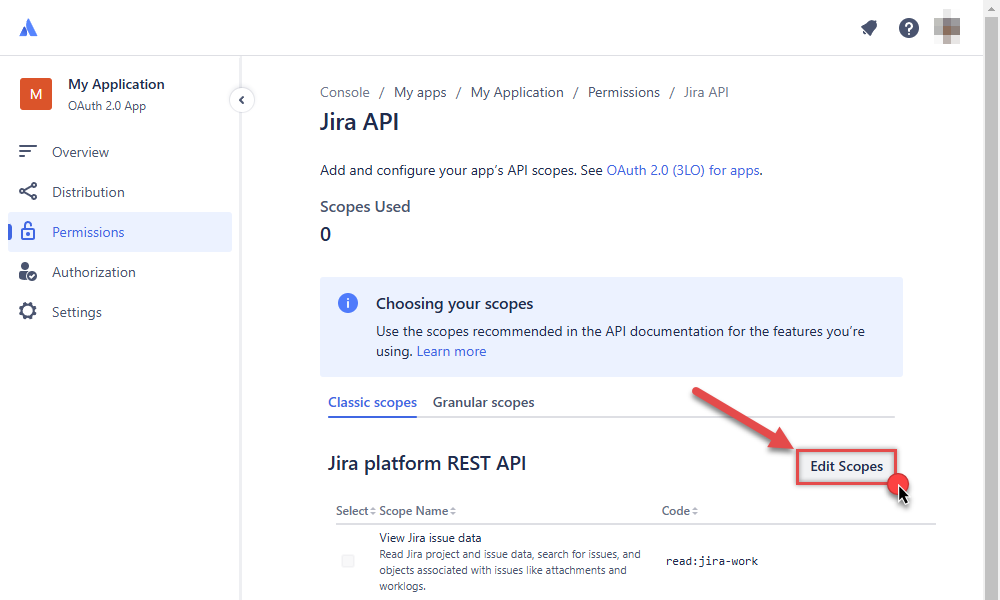

Continue by hitting Edit Scopes button to assign scopes for the application:

-

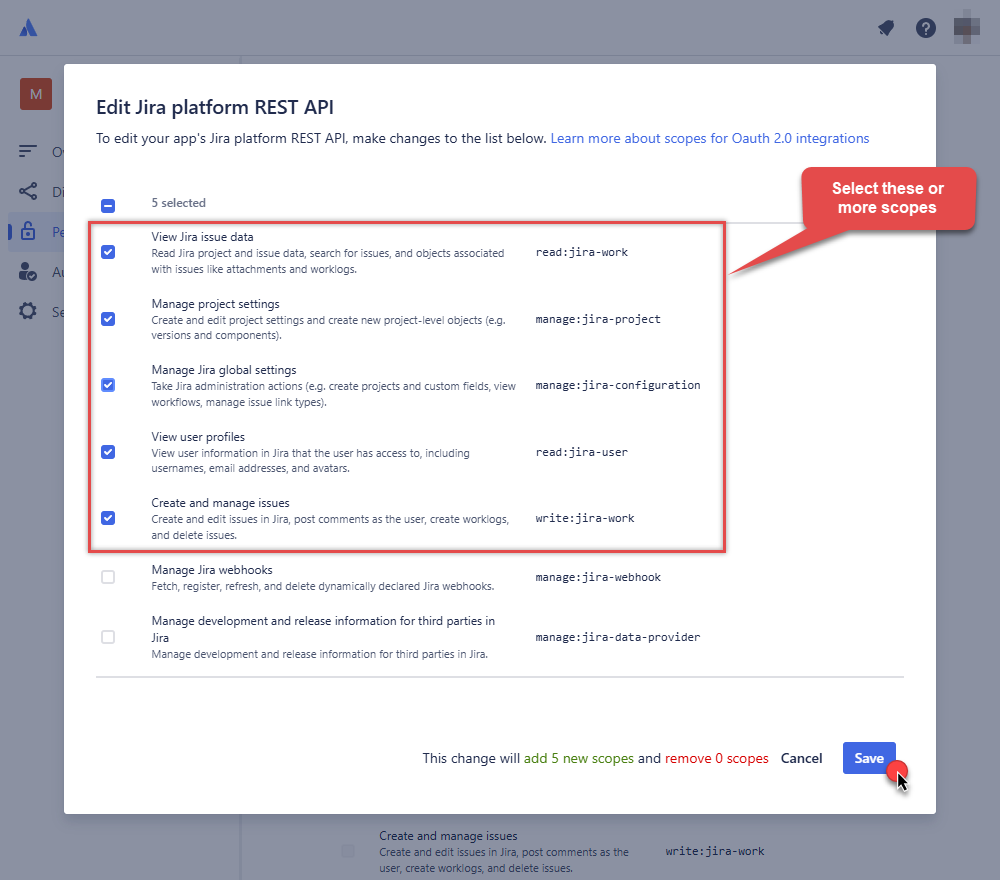

Select these scopes or all of them:

-

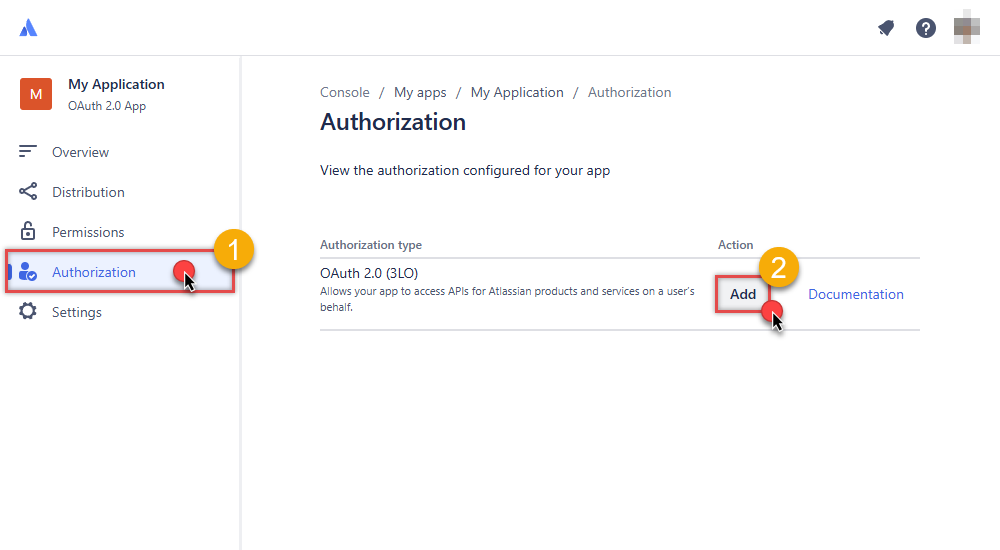

Then click Authorization option on the left and click Add button:

-

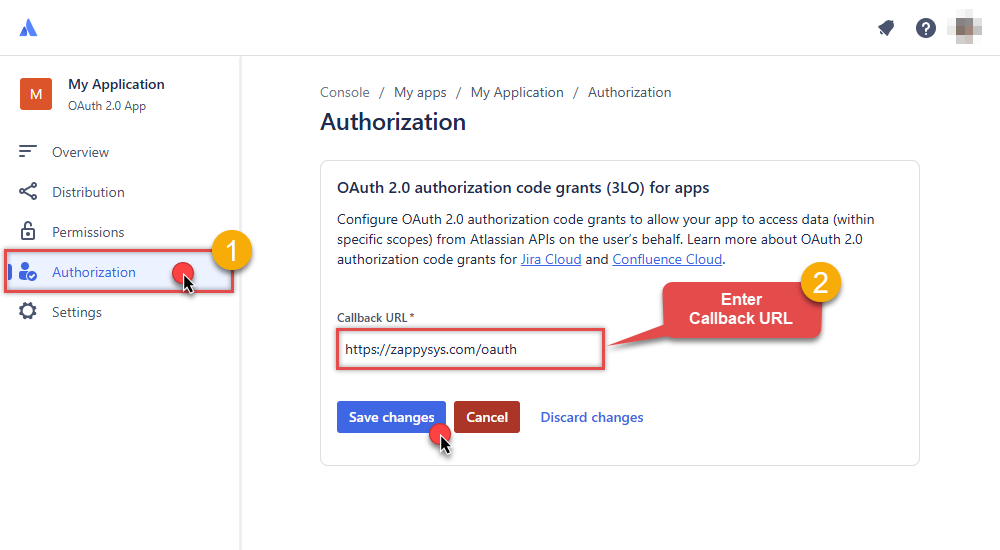

Enter your own Callback URL (Redirect URL) or simply enter

https://zappysys.com/oauth, if you don't have one:

-

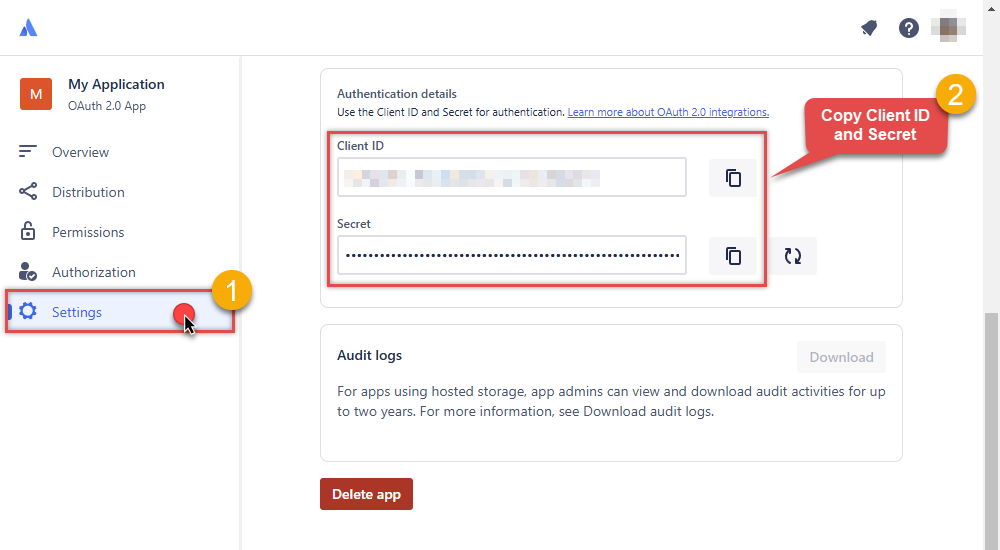

Then hit Settings option and copy Client ID and Secret into your favorite text editor (we will need them in the next step):

-

Now go to SSIS package or ODBC data source and in OAuth authentication set these parameters:

- For ClientId parameter use Client ID value from the previous steps.

- For ClientSecret parameter use Secret value from the previous steps.

- For Scope parameter use the Scopes you set previously (specify them all here):

- offline_access (a must)

- read:jira-user

- read:jira-work

- write:jira-work

- manage:jira-project

- manage:jira-configuration

NOTE: A full list of available scopes is available in Atlassian documentation. -

For Subdomain parameter use your Atlassian subdomain value

(e.g.

mycompany, if full host name ismycompany.atlassian.net).

- Click Generate Token to generate tokens.

- Finally, select Organization Id from the drop down.

- That's it! You can now use Jira Connector!

API Connection Manager configuration

Just perform these simple steps to finish authentication configuration:

-

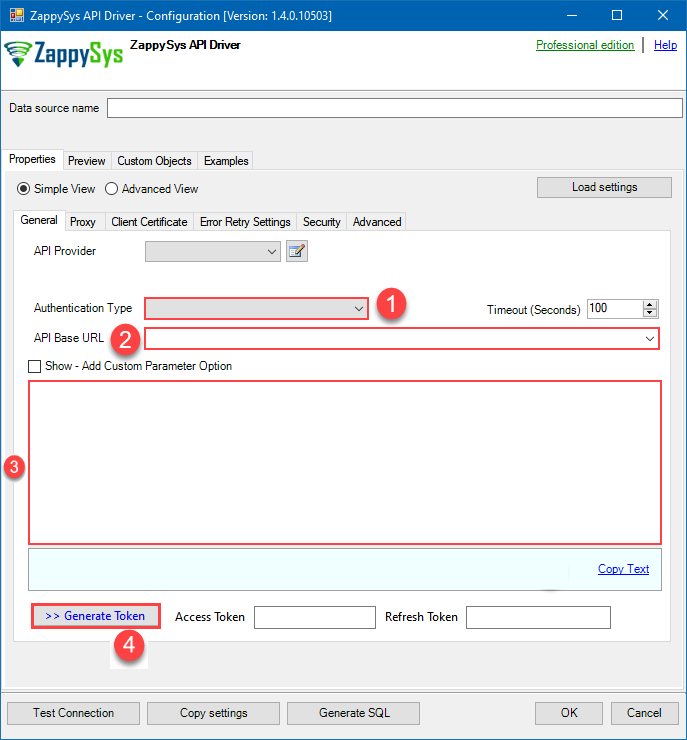

Set Authentication Type to

OAuth (**Must change API Base URL to V3 OAuth**) [OAuth] - Optional step. Modify API Base URL if needed (in most cases default will work).

- Fill in all the required parameters and set optional parameters if needed.

- Press Generate Token button to generate the tokens.

- Finally, hit OK button:

JiraDSNJiraOAuth (**Must change API Base URL to V3 OAuth**) [OAuth]https://[$Subdomain$].atlassian.net/rest/api/3Required Parameters ClientId Fill-in the parameter... ClientSecret Fill-in the parameter... Scope Fill-in the parameter... ReturnUrl Fill-in the parameter... Organization Id (Select after clicking [Generate Token]) Fill-in the parameter... Optional Parameters Custom Columns for output (Select after clicking [Generate Token]) RetryMode RetryWhenStatusCodeMatch RetryStatusCodeList 429 RetryCountMax 5 RetryMultiplyWaitTime True

-

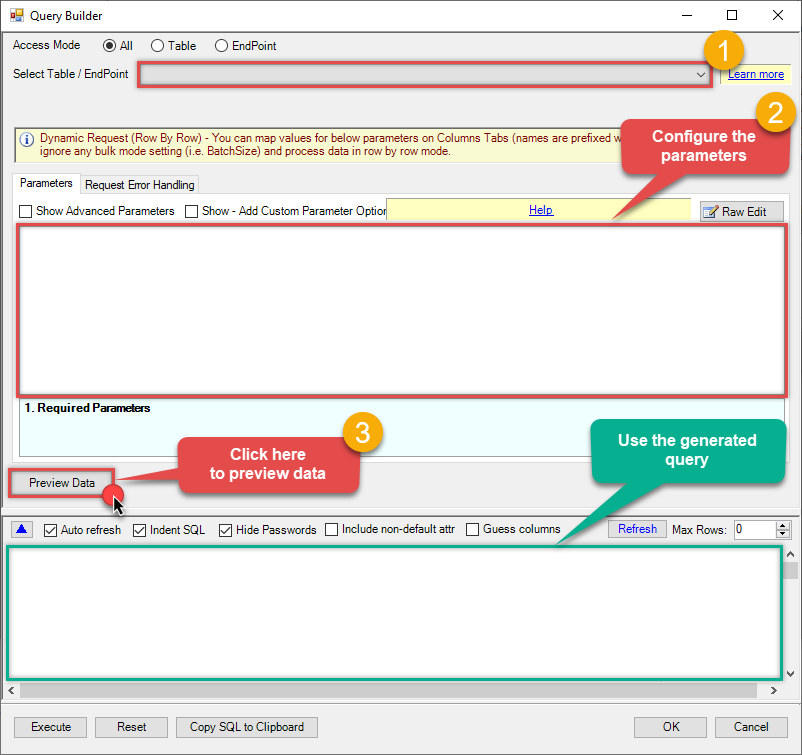

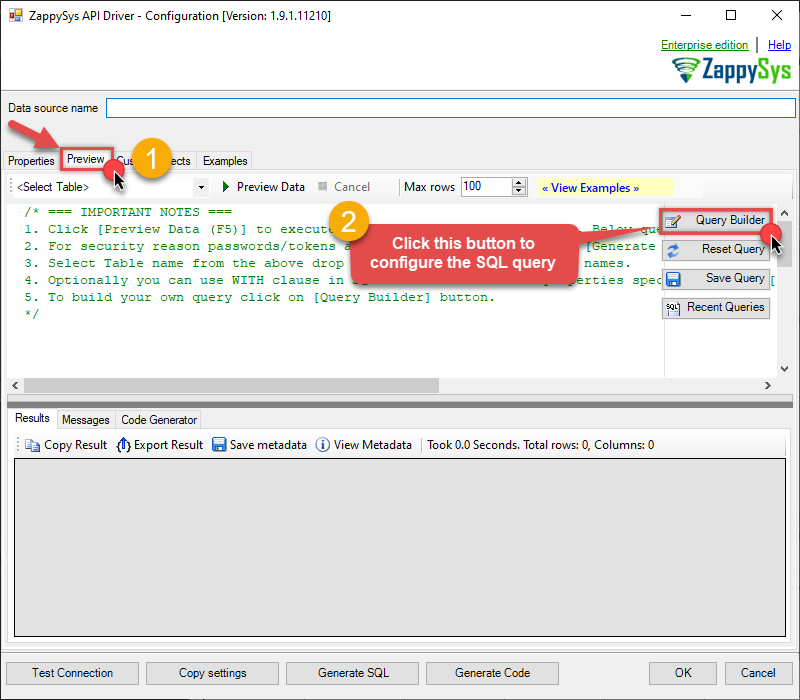

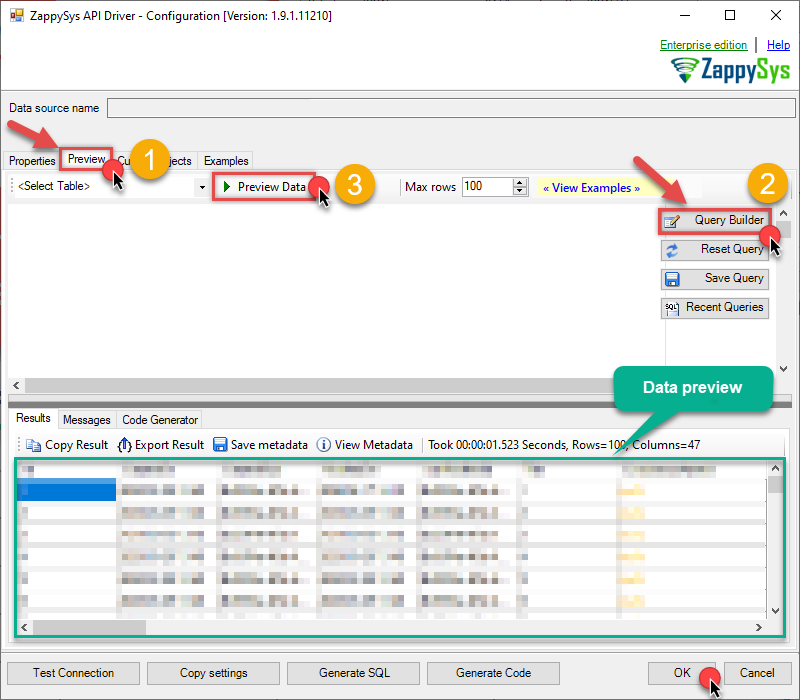

Then go to Preview tab to start building a SQL query.

-

Once you do that, proceed by opening Query Builder:

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

-

Then simply select the Read Issues endpoint (action).

-

Continue by configuring the Required parameters. You can also set optional parameters too.

-

Move on by hitting Preview Data button to preview the results.

-

If you see the results you need, simply copy the generated query:

-

Click OK to use built SQL query and close the Query Builder.

-

Now hit Preview Data button to preview the data using the generated SQL query. If you are satisfied with the result, use this query in Azure Data Factory (Pipeline):

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSN

ZappySys API Driver - JiraRead and write Jira data effortlessly. Track, manage, and automate issues, projects, worklogs, and comments — almost no coding required.JiraDSNSELECT * FROM Issues --//Query single issue by numeric Issue Id --SELECT * FROM Issues Where Id=101234 --//Query issue by numeric Issue Ids (multiple) --SELECT * FROM Issues WITH(SearchBy='Key', Key='101234,101235,101236') --//Query issue by Issue Key(s) (alpha-numeric) --SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11') --SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11,PROJ-12,PROJ-13') --//Query issue by project(s) --SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ') --SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ,KAN,CS') --//Query issue by JQL expression --SELECT * FROM Issues WITH(SearchBy='Jql', Jql='status IN (Done, Closed) AND created > -5d' ) You can also access data quickly from the tables dropdown by selecting <Select table>.A

You can also access data quickly from the tables dropdown by selecting <Select table>.AWHEREclause,LIMITkeyword will be performed on the client side, meaning that thewhole result set will be retrieved from the Jira API first, and only then the filtering will be applied to the data. If possible, it is recommended to use parameters in Query Builder to filter the data on the server side (in Jira servers).

Let's not stop here and explore SQL query examples, including how to use them in Stored Procedures and Views (virtual tables) in the next steps.

Jira SQL query examples

Use these SQL queries in your Azure Data Factory (Pipeline) data source:

Read issues

Gets all issues. By default the query returns every issue; you can narrow results by adding a WITH clause to search by Key, Project, or JQL expression (see the commented options in the code).

Use SearchBy='Key' with a single key or ID for one issue, or comma-separated keys/IDs for multiple. Use SearchBy='Project' to limit to one or more project codes. Use SearchBy='Jql' with a JQL expression for advanced filtering.

SELECT * FROM Issues

--//Query single issue by numeric Issue Id

--SELECT * FROM Issues Where Id=101234

--//Query issue by numeric Issue Ids (multiple)

--SELECT * FROM Issues WITH(SearchBy='Key', Key='101234,101235,101236')

--//Query issue by Issue Key(s) (alpha-numeric)

--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11')

--SELECT * FROM Issues WITH(SearchBy='Key', Key='PROJ-11,PROJ-12,PROJ-13')

--//Query issue by project(s)

--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ')

--SELECT * FROM Issues WITH(SearchBy='Project', Project='PROJ,KAN,CS')

--//Query issue by JQL expression

--SELECT * FROM Issues WITH(SearchBy='Jql', Jql='status IN (Done, Closed) AND created > -5d' )Read an issue by ID

Gets a single issue by its key (e.g. CS-123) or numeric ID (e.g. 10001). Supply the key or ID in the Key parameter; the connector returns that issue’s fields.

SELECT * FROM Issues WITH(SearchBy='Key', Key='10001')Read an issue by ID — continue on specific error

By default, if the issue is not found or the search condition is invalid, the query returns an error. You can tell the connector to continue instead by setting ContineOnErrorForMessage=1 and supplying the error message (or a substring) to ignore in ErrorSubstringToMatch.

SELECT * FROM Issues WITH(SearchBy='Key', Key='10001', ContineOnErrorForMessage=1, ErrorSubstringToMatch='Issue does not exist')Read an issue by Key

Gets a single issue by its project key and number (e.g. CS-1). Same as reading by ID; use whichever identifier you have.

SELECT * FROM Issues WITH(SearchBy='Key', Key='CS-1')Read multiple issues by ID or Key

Gets multiple issues in one request. Supply comma-separated keys and/or numeric IDs in the Key parameter; the connector returns all matching issues.

SELECT * FROM Issues WITH(SearchBy='Key', Key='CS-1,CS-2,10003,10004')Read issues for a project

Gets all issues belonging to a given project (or projects). Supply one or more project codes in Project; use a comma-separated list for multiple projects.

SELECT * FROM Issues WITH(SearchBy='Project', Project='CS')Read issues (specific fields only)

Gets all issues but returns only the columns you specify in Fields, which can speed up the query when you need just a few fields. Use a comma-separated list of field names; *all returns every field.

SELECT * FROM Issues WITH(Fields='id,key,summary,status')Read issues using JQL query

Gets issues that match a JQL (Jira Query Language) expression. Supply the JQL string in the Jql parameter; you can filter by status, project, date, assignee, text, and more.

See the commented examples in the code for common patterns (IDs, keys, dates, text search, etc.). JQL reference links are included in the snippet.

SELECT * FROM Issues WITH (Jql='status IN (Done, Closed) AND created > -5d' )

/*

Useful links:

https://support.atlassian.com/jira-work-management/docs/jql-fields/

https://www.atlassian.com/software/jira/guides/jql/tutorials#advanced-search

https://www.atlassian.com/blog/jira/jql-the-most-flexible-way-to-search-jira-14

Other Possible JQL expressions:

Ids (IN): key IN(10001, 10002, 10003);

Keys (IN): key IN(CS-1, CS-2, CS-3);

Projects (IN): project IN(PROJ1, PROJ2, PROJ3);

Status (EQUAL): status='Done';

Date (Expression 1): created >=-5d;

Date (Expression 2): created >=startOfMonth() AND created <=now();

Date (Expression 3): created >=startOfYear() AND created <=startOfDay();

Date (Static): created >= '2008/12/31';

Date (Static with time): created >= '2008/12/31 23:59';

Project and Status (AND + IN): project=CS AND status NOT IN ('Done', 'Open', 'Closed');

Assignee and Created Date: assignee is NOT EMPTY and created < -1d;

Text (Contains - Fuzzy): Summary ~ 'some words' OR description ~ 'some words';

Text (Contains - Fuzzy Wildcard): Summary ~ 'some*' OR description ~ 'some*';

Text (Contains - Exact): Summary ~ '\"exact words\"' OR description ~ '\"exact words\"';

Text (Does Not Contain - Fuzzy): Summary !~ 'some words' OR description !~ 'some words';

Empty OR Null: fixVersion is empty OR fixVersion is null;

Is Not Empty OR Is Not Null: fixVersion is not empty OR fixVersion is not null;

WAS Operator (previous value): status WAS "Resolved" BY (jsmith,srogen) BEFORE "2019/02/02";

WAS IN Operator: status WAS IN ("Resolved", "In Progress");

WAS NOT IN Operator: status WAS NOT IN ("Resolved", "In Progress");

WAS + BY + DURING (date range): status WAS "Resolved" BY (jsmith,srogen) DURING("2019/02/02", "2020/02/02");

CHANGED operator: assignee CHANGED;

CHANGED operator (multiple): status CHANGED FROM "In Progress" TO "Open"

*/Read issues with all columns (including SLA / nested custom fields)

Gets issues with all columns exposed, including SLA and nested custom fields, without defining a META parameter. Set the connector’s Metadata Mode to MergeStaticDynamic so the driver scans sample data and merges static and dynamic columns. This approach is simpler but slower because of the extra requests used to infer column types from data.

-- No META needed. Set MetaDetectionOrder to MergeStaticDynamic so nested/SLA columns are discovered automatically.

-- Slower: driver makes extra requests to scan data and merge with static metadata.

SELECT *

FROM Issues

WITH(

JQL='project IN(SUP)'

,MetaDetectionOrder='MergeStaticDynamic'

)Read custom fields with nested structures (SLA fields)

Shows how to query custom fields that contain nested document structures (not arrays), such as SLA fields. Use META='@OverrideMode:1' to merge static and dynamic metadata so you can select both standard columns and nested custom field properties. Replace the example custom field IDs (e.g. customfield_10084) with your own; run SELECT * FROM Fields or check Jira project settings to find field IDs.

-- NOTE: Replace customfield_10084, customfield_10085, customfield_10086 with your own custom field IDs.

-- You can run [SELECT * FROM Fields] in preview tab to get the field IDs.

-- OR you can find field IDs in Jira: Project Settings > Issue types > Edit field > field ID in URL or API.

SELECT

-- static fields

id

, key

, summary

, statusname

-- dynamic fields

-- SLA: Time to Resolution (replace customfield_10084 with your SLA field ID)

,[fields.customfield_10084.id] as c10084_id

,[fields.customfield_10084.name] as c10084_name

,[fields.customfield_10084.ongoingCycle.startTime.friendly] as c10084_startTime_friendly

,[fields.customfield_10084.ongoingCycle.startTime.jira] as c10084_startTime_jira

,[fields.customfield_10084.ongoingCycle.breachTime.friendly] as c10084_breachTime_friendly

,[fields.customfield_10084.ongoingCycle.breachTime.jira] as c10084_breachTime_jira

,[fields.customfield_10084.ongoingCycle.elapsedTime.friendly] as c10084_elapsedTime_friendly

,[fields.customfield_10084.ongoingCycle.remainingTime.friendly] as c10084_remainingTime_friendly

,[fields.customfield_10084.ongoingCycle.goalDuration.friendly] as c10084_goalDuration_friendly

,[fields.customfield_10084.ongoingCycle.breached] as c10084_breached

,[fields.customfield_10084.ongoingCycle.paused] as c10084_paused

-- SLA: Time to First Response (replace customfield_10085 with your SLA field ID)

,[fields.customfield_10085.id] as c10085_id

,[fields.customfield_10085.name] as c10085_name

,[fields.customfield_10085.ongoingCycle.startTime.friendly] as c10085_startTime_friendly

,[fields.customfield_10085.ongoingCycle.startTime.jira] as c10085_startTime_jira

,[fields.customfield_10085.ongoingCycle.breachTime.friendly] as c10085_breachTime_friendly

,[fields.customfield_10085.ongoingCycle.breachTime.jira] as c10085_breachTime_jira

,[fields.customfield_10085.ongoingCycle.elapsedTime.friendly] as c10085_elapsedTime_friendly

,[fields.customfield_10085.ongoingCycle.remainingTime.friendly] as c10085_remainingTime_friendly

,[fields.customfield_10085.ongoingCycle.goalDuration.friendly] as c10085_goalDuration_friendly

,[fields.customfield_10085.ongoingCycle.breached] as c10085_breached

,[fields.customfield_10085.ongoingCycle.paused] as c10085_paused

-- SLA: Time to Close After Resolution (replace customfield_10086 with your SLA field ID)

,[fields.customfield_10086.id] as c10086_id

,[fields.customfield_10086.name] as c10086_name

-- Other nested custom fields (replace IDs as needed)

,[fields.customfield_10024.id] as c10024_id

,[fields.customfield_10024.name] as c10024_name

,[fields.customfield_10075.languageCode] as c10075_languageCode

,[fields.customfield_10075.displayName] as c10075_displayName

FROM Issues

WITH(

JQL='project IN(SUP)'

-- @OverrideMode:1 merges metadata so you can use static + dynamic fields together

,META='@OverrideMode:1

;fields.customfield_10084.id : string(10)

;fields.customfield_10084.name : string(180)

;fields.customfield_10084.ongoingCycle.startTime.friendly : string(130)

;fields.customfield_10084.ongoingCycle.startTime.jira : DateTime

;fields.customfield_10084.ongoingCycle.breachTime.friendly : string(130)

;fields.customfield_10084.ongoingCycle.breachTime.jira : DateTime

;fields.customfield_10084.ongoingCycle.elapsedTime.friendly : string(30)

;fields.customfield_10084.ongoingCycle.remainingTime.friendly : string(70)

;fields.customfield_10084.ongoingCycle.goalDuration.friendly : string(30)

;fields.customfield_10084.ongoingCycle.breached : Boolean

;fields.customfield_10084.ongoingCycle.paused : Boolean

;fields.customfield_10085.id : string(10)

;fields.customfield_10085.name : string(220)

;fields.customfield_10085.ongoingCycle.startTime.friendly : string(130)

;fields.customfield_10085.ongoingCycle.startTime.jira : DateTime

;fields.customfield_10085.ongoingCycle.breachTime.friendly : string(130)

;fields.customfield_10085.ongoingCycle.breachTime.jira : DateTime

;fields.customfield_10085.ongoingCycle.elapsedTime.friendly : string(30)

;fields.customfield_10085.ongoingCycle.remainingTime.friendly : string(70)

;fields.customfield_10085.ongoingCycle.goalDuration.friendly : string(30)

;fields.customfield_10085.ongoingCycle.breached : Boolean

;fields.customfield_10085.ongoingCycle.paused : Boolean

;fields.customfield_10086.id : string(10)

;fields.customfield_10086.name : string(300)

;fields.customfield_10024.id : string(40)

;fields.customfield_10024.name : string(80)

;fields.customfield_10075.languageCode : string(20)

;fields.customfield_10075.displayName : string(70)

;--add more nested custom field properties here as needed--

'

)

get_issues endpoint belongs to

Issues

table(s), and can therefore be used via those table(s).

Create SQL view in ODBC data source

ZappySys API Drivers support flexible Query language so you can override Default Properties you configured on Data Source such as URL, Body. This way you don't have to create multiple Data Sources if you like to read data from multiple EndPoints. However not every application support supplying custom SQL to driver so you can only select Table from list returned from driver.

If you're dealing with Microsoft Access and need to import data from an SQL query, it's important to note that Access doesn't allow direct import of SQL queries. Instead, you can create custom objects (Virtual Tables) to handle the import process.

Many applications like MS Access, Informatica Designer wont give you option to specify custom SQL when you import Objects. In such case Virtual Table is very useful. You can create many Virtual Tables on the same Data Source (e.g. If you have 50 URLs with slight variations you can create virtual tables with just URL as Parameter setting.

-

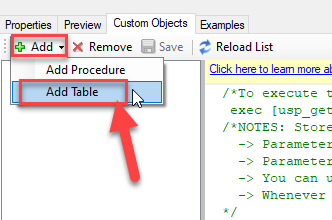

Go to Custom Objects Tab and Click on Add button and Select Add Table:

-

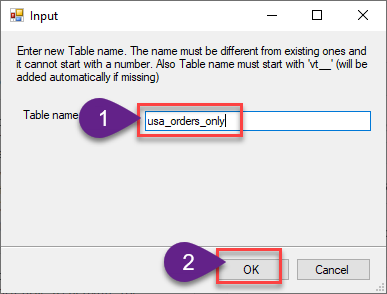

Enter the desired Table name and click on OK:

-

And it will open the New Query Window Click on Cancel to close that window and go to Custom Objects Tab.

-

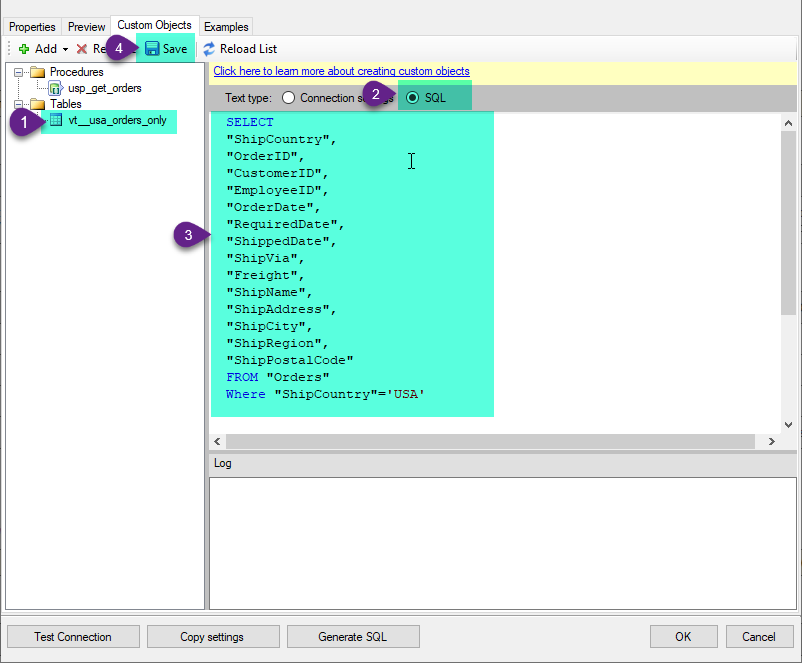

Select the created table, Select Text Type AS SQL and write the your desired SQL Query and Save it and it will create the custom table in the ZappySys Driver:

Here is an example SQL query for ZappySys Driver. You can insert Placeholders also. Read more about placeholders here

SELECT "ShipCountry", "OrderID", "CustomerID", "EmployeeID", "OrderDate", "RequiredDate", "ShippedDate", "ShipVia", "Freight", "ShipName", "ShipAddress", "ShipCity", "ShipRegion", "ShipPostalCode" FROM "Orders" Where "ShipCountry"='USA'

-

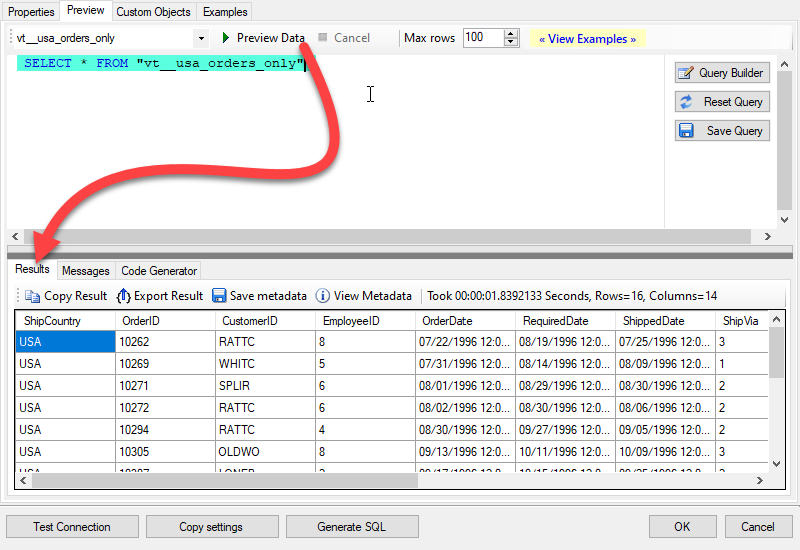

That's it now go to Preview Tab and Execute your custom virtual table query. In this example it will extract the orders for the USA Shipping Country only:

SELECT * FROM "vt__usa_orders_only"

Read issues in Azure Data Factory (Pipeline) via SQL view

-

Sign in to Azure Portal

-

Open your browser and go to: https://portal.azure.com

-

Enter your Azure credentials and complete MFA if required.

-

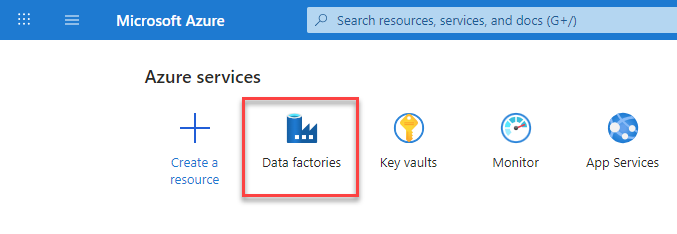

After login, go to Data factories.

-

-

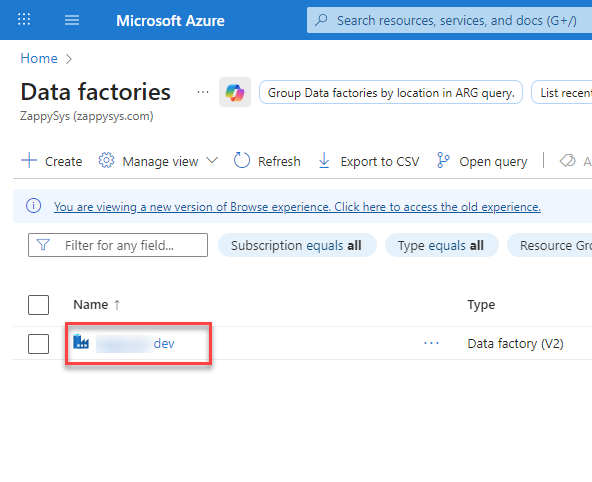

Under Azure Data Factory Resource - Create or select the Data Factory you want to work with.

-

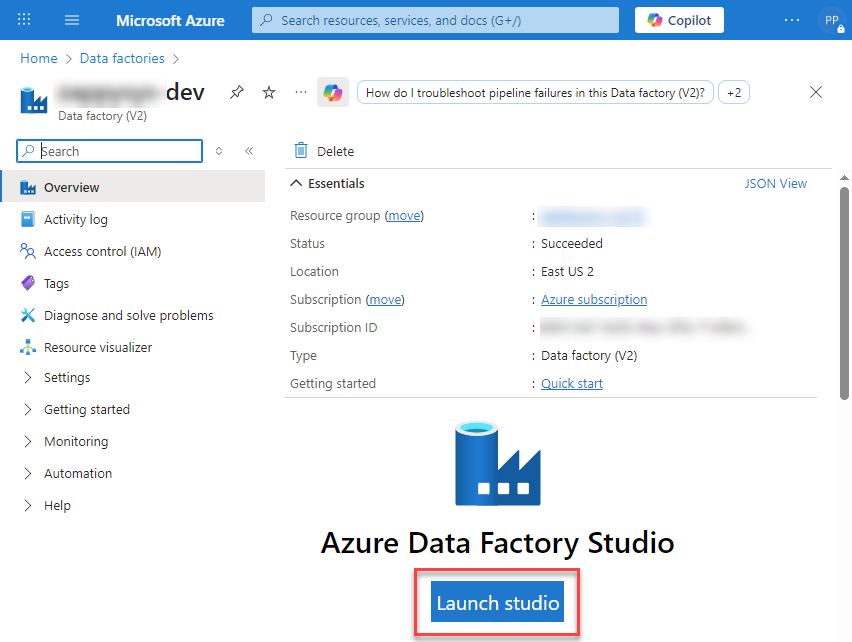

Inside the Data Factory resource page, click Launch studio.

-

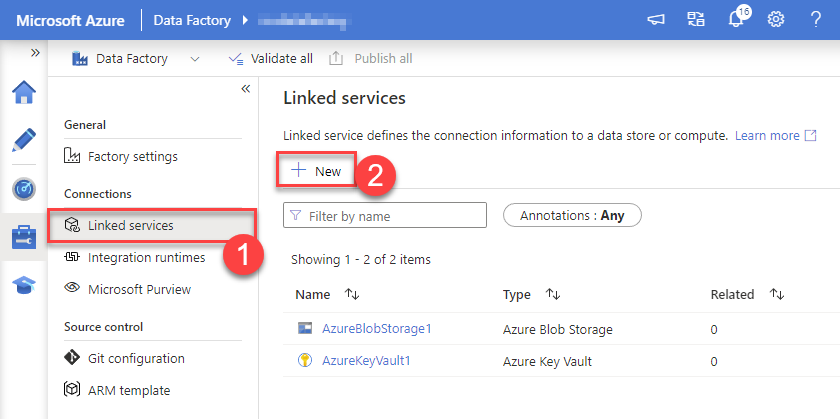

Create a New Linked service:

In the Manage section (left menu).

Under Connections, select Linked services.

Click + New to create a new Linked service based on ODBC.

-

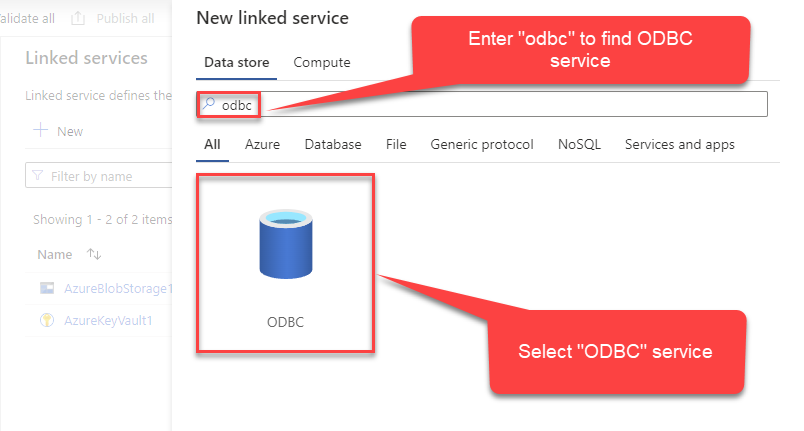

Select ODBC service:

-

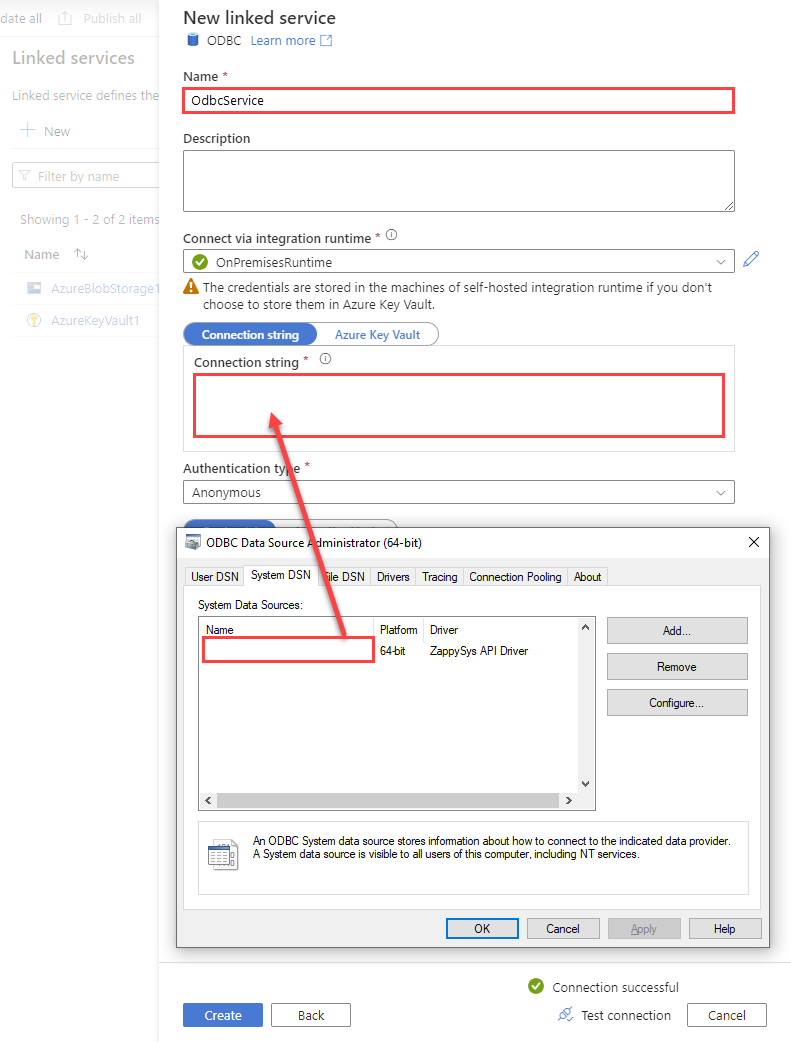

Configure new ODBC service. Use the same DSN name we used in the previous step and copy it to Connection string box:

JiraDSNDSN=JiraDSN

-

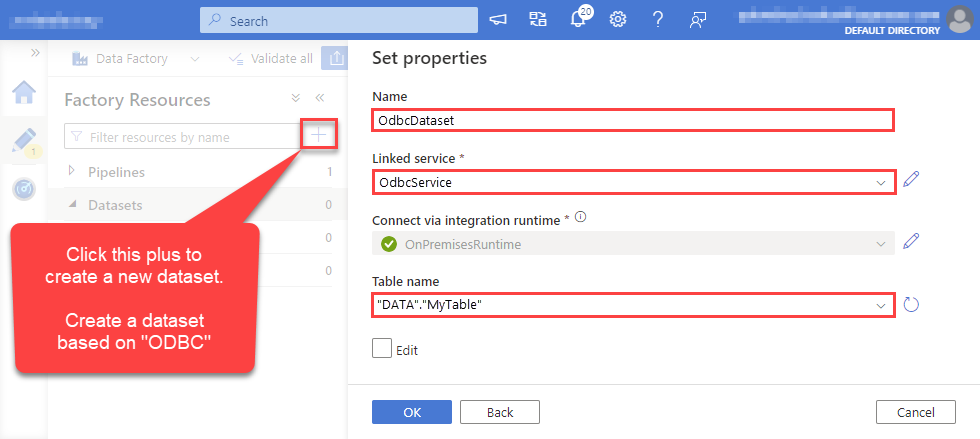

For created ODBC service create ODBC-based dataset:

-

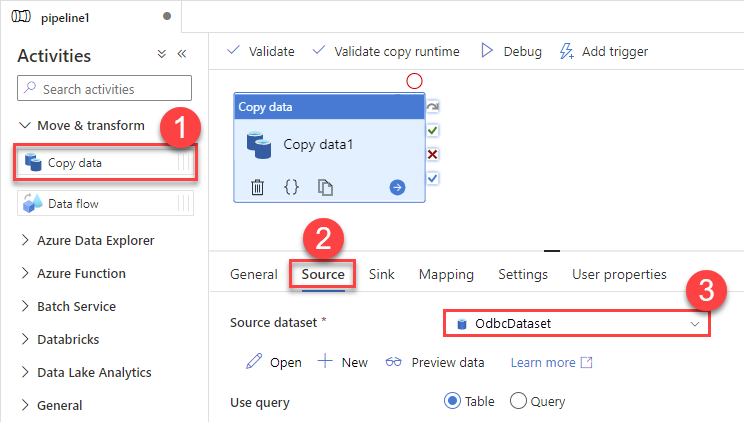

Go to your pipeline and add Copy data connector into the flow. In Source section use OdbcDataset we created as a source dataset:

-

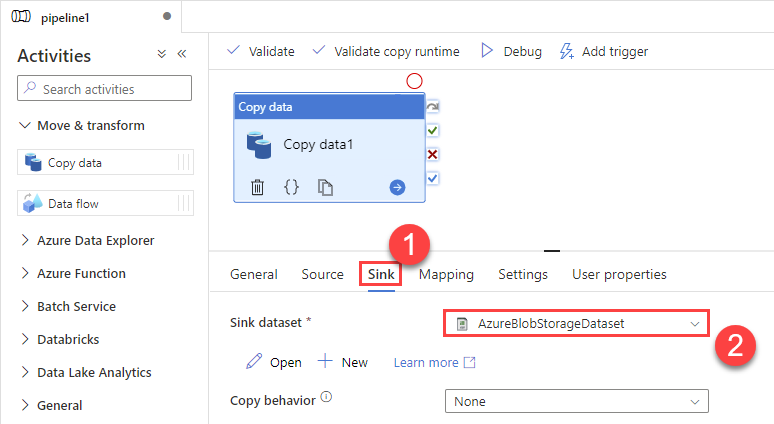

Then go to Sink section and select a destination/sink dataset. In this example we use precreated AzureBlobStorageDataset which saves data into an Azure Blob:

-

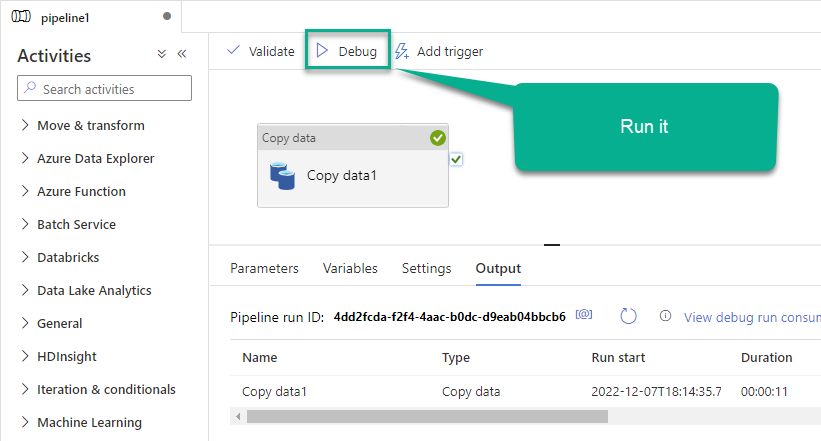

Finally, run the pipeline and see data being transferred from OdbcDataset to your destination dataset:

Advanced topics

Creating SQL stored procedures

You can create procedures to encapsulate custom logic and then only pass handful parameters rather than long SQL to execute your API call.

Steps to create Custom Stored Procedure in ZappySys Driver. You can insert Placeholders anywhere inside Procedure Body. Read more about placeholders here

-

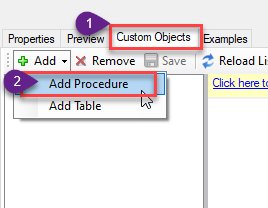

Go to Custom Objects Tab and Click on Add button and Select Add Procedure:

-

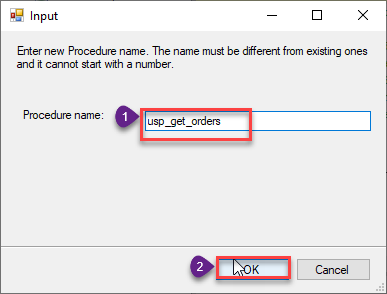

Enter the desired Procedure name and click on OK:

-

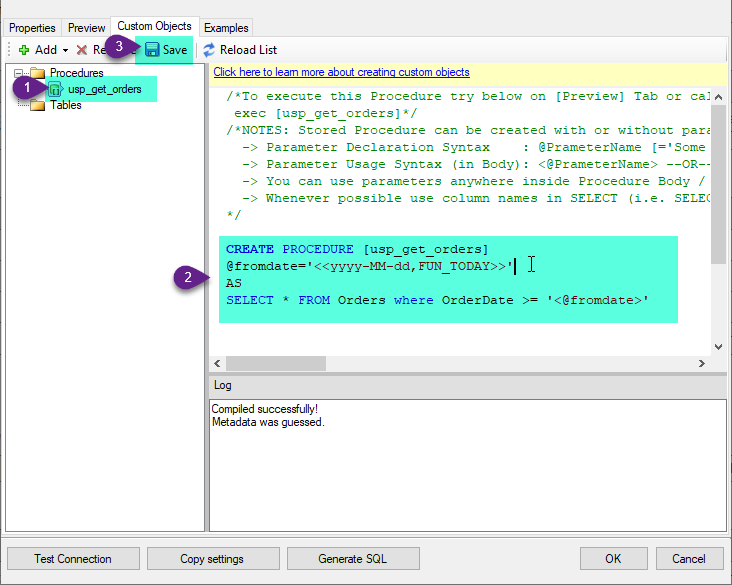

Select the created Stored Procedure and write the your desired stored procedure and Save it and it will create the custom stored procedure in the ZappySys Driver. Here is an example stored procedure for ZappySys Driver. You can insert Placeholders anywhere inside Procedure Body. Read more about placeholders here

CREATE PROCEDURE [usp_get_orders] @fromdate = '<<yyyy-MM-dd,FUN_TODAY>>' AS SELECT * FROM Orders where OrderDate >= '<@fromdate>';

-

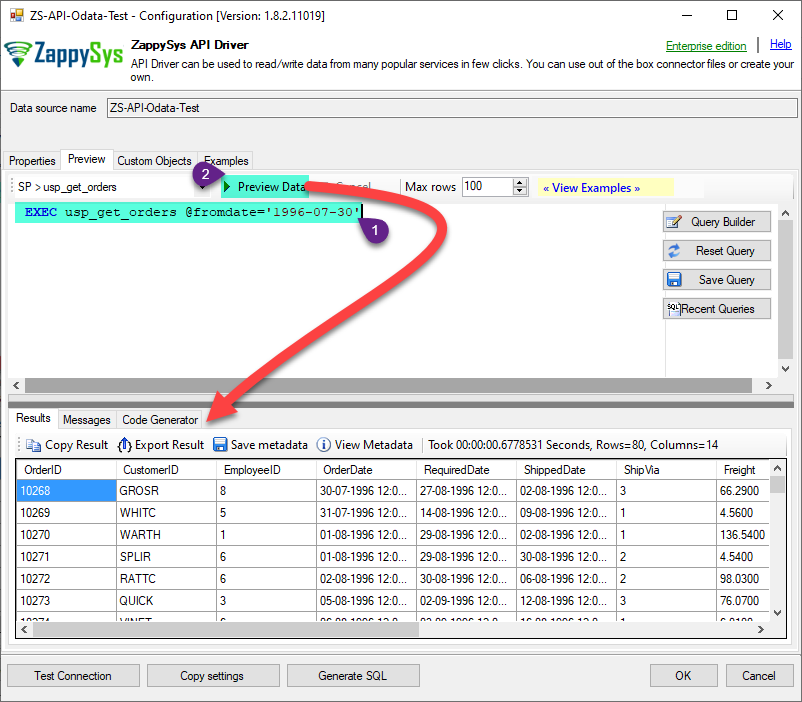

That's it now go to Preview Tab and Execute your Stored Procedure using Exec Command. In this example it will extract the orders from the date 1996-01-01:

Exec usp_get_orders '1996-01-01';

Conclusion

And there you have it — a complete guide on how to read issues in Azure Data Factory (Pipeline) without writing complex code. All of this was powered by Jira ODBC Driver, which handled the REST API pagination and authentication for us automatically.

Download the trial now or ping us via chat if you have any questions or are looking for a specific feature (you can also reach out to us by submitting a ticket):

More actions supported by Jira Connector

Got another use case in mind? We've documented the exact setups for a variety of essential Jira operations directly in Azure Data Factory (Pipeline), so you can skip the trial and error. Find your next step-by-step guide below:

- Create Issue Comment

- Create Issues

- Create Project

- Create User

- Create Worklog

- Delete Issue

- Delete Issue Comment

- Delete Project

- Delete User

- Delete Worklog

- Get custom field context options

- Get custom field contexts

- Read Application Roles

- Read Changelog Details

- Read Changelogs

- Read Changelogs by IDs

- Read Comments

- Read Custom Fields

- Read Fields

- Read Groups

- Read Issue (By Id)

- Read Issue Types

- Read Projects

- Read Resources

- Read Users

- Read Worklogs

- Read Worklogs modified after a specified date

- Update Issue

- Update Issue Comment

- Update Worklog

- Upsert Project

- Make Generic REST API Request

- Make Generic REST API Request (Bulk Write)